Between integrating its Grace Hopper chip directly with a quantum processor and showing off the ability to simulate quantum systems on classical supercomputers, Nvidia is making waves in the quantum computing world this month.

Nvidia is certainly well positioned to take advantage of the latter. It makes GPUs that supercomputers use, the same GPUs that AI developers crave. These same GPUs are also valuable as tools for simulating dozens of qubits on classical computers. New software developments mean that researchers can now use more and more supercomputing resources in lieu of real quantum computers.

But simulating quantum systems is a uniquely demanding challenge, and those demands loom in the background.

Few quantum computer simulations to date have been able to use more than one multi-GPU node, and many have run on just a single GPU. But Nvidia has made recent behind-the-scenes advances that are now making it possible to ease that constraint.

Classical computers serve two roles in simulating quantum hardware. For one, quantum computer builders can use classical computation to test-run their designs. “Classical simulation is a fundamental aspect of understanding and design of quantum hardware, frequently serving as the only means to validate these quantum systems,” says Jinzhao Sun, a postdoctoral researcher at Imperial College London.

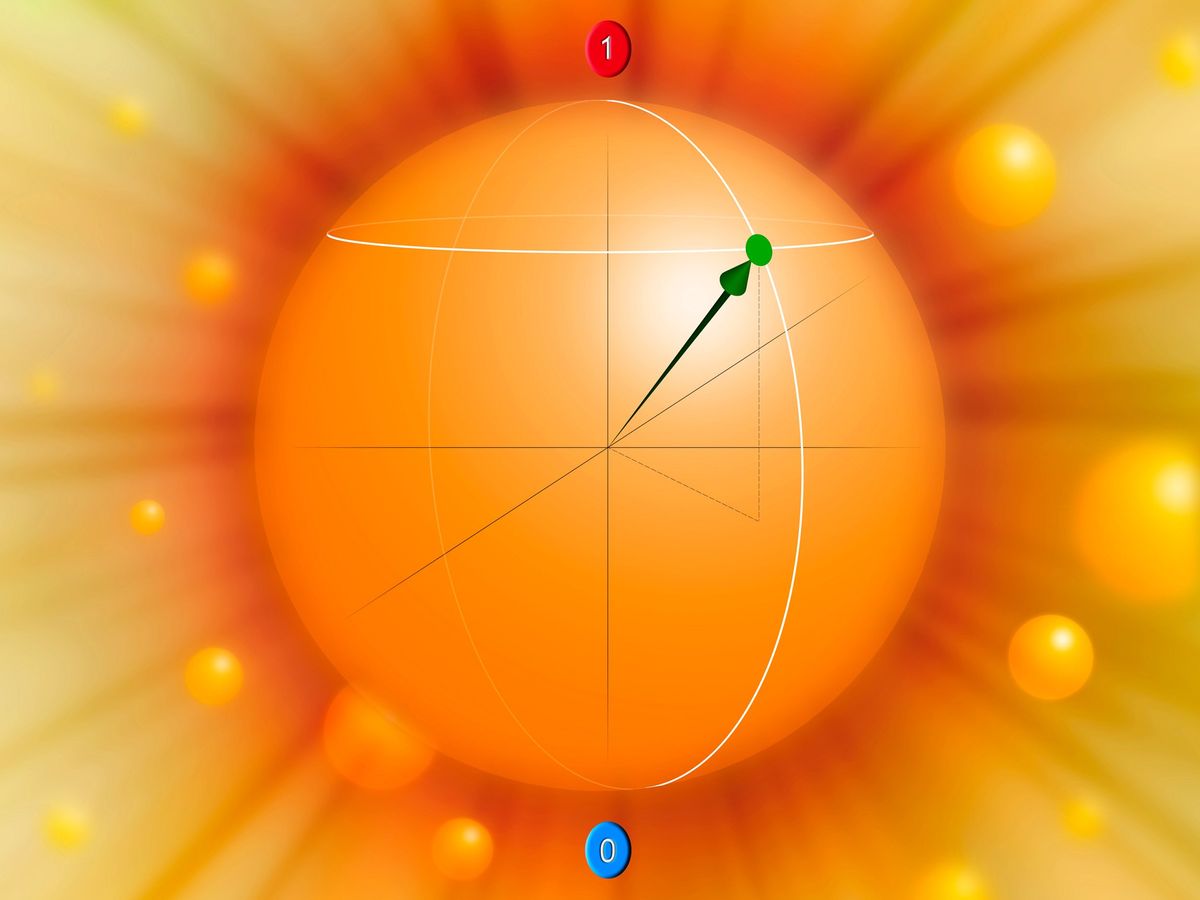

For another, classical computers can run quantum algorithms in lieu of an actual quantum computer. It’s this capability that especially interests researchers who work on applications like molecular dynamics, protein folding, and the burgeoning field of quantum machine learning, all of which benefit from quantum processing.
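At its smallest scale, running a quantum algorithm on a classical machine looks something like the sketch below. This is a toy state-vector simulator in plain Python, not the method used by any tool named in this article; the function names (`apply_single_qubit_gate`, `apply_cnot`, `bell_state`) are hypothetical and chosen only for illustration. It prepares a two-qubit entangled Bell state and computes the probability of each measurement outcome.

```python
import math

def apply_single_qubit_gate(state, gate, target):
    """Apply a 2x2 gate to qubit `target` of a state vector (list of complex)."""
    new_state = list(state)
    stride = 1 << target
    for i in range(len(state)):
        if (i & stride) == 0:        # i has the target bit clear, j has it set
            j = i | stride
            a, b = state[i], state[j]
            new_state[i] = gate[0][0] * a + gate[0][1] * b
            new_state[j] = gate[1][0] * a + gate[1][1] * b
    return new_state

def apply_cnot(state, control, target):
    """Flip qubit `target` in every basis state where qubit `control` is 1."""
    new_state = list(state)
    c, t = 1 << control, 1 << target
    for i in range(len(state)):
        if (i & c) and not (i & t):
            j = i | t                # same basis state with the target bit set
            new_state[i], new_state[j] = state[j], state[i]
    return new_state

# Hadamard gate: puts a qubit into an equal superposition of 0 and 1.
S = 1 / math.sqrt(2)
H = [[S, S], [S, -S]]

def bell_state():
    """Start in |00>, apply H to qubit 0, then CNOT from qubit 0 to qubit 1."""
    state = [1 + 0j, 0j, 0j, 0j]
    state = apply_single_qubit_gate(state, H, 0)
    return apply_cnot(state, 0, 1)

# Probability of each outcome, indexed by basis state 00, 01, 10, 11.
probabilities = [abs(amplitude) ** 2 for amplitude in bell_state()]
print(probabilities)
```

The list holding the state has one entry per basis state, so its length doubles with each added qubit. Production simulators rely on the same idea, but with heavily optimized GPU kernels in place of Python loops.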

Classical simulations are not perfect replacements for the genuine quantum articles, but they frequently make suitable facsimiles. The world only has so many quantum computers, and classical simulations are easier to access. Classical simulations can also control the noise that plagues real quantum processors and often scuttles quantum runs. Classical simulations may be slower than authentic quantum ones, but researchers still save time by needing fewer runs, according to Shinjae Yoo, a computer-science and machine-learning staff researcher at Brookhaven National Laboratory, in Upton, N.Y.

The catch, then, is a size problem. Because a qubit in a quantum system is entangled with every other qubit in that system, the demands of exactly simulating that system scale exponentially. As a rule of thumb, every additional qubit doubles the amount of classical memory the simulation needs. Moving from a single GPU to an entire eight-GPU node is an increase of three qubits.
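The rule of thumb is easy to check with a few lines of arithmetic. The sketch below assumes an exact state-vector simulation that stores one double-precision complex number (16 bytes) per amplitude and ignores any working memory a real simulator would need; the function names are hypothetical.

```python
import math

# Assumption: one complex128 value (two 64-bit floats) per amplitude.
BYTES_PER_AMPLITUDE = 16

def statevector_bytes(n_qubits, bytes_per_amplitude=BYTES_PER_AMPLITUDE):
    """Memory needed to hold all 2**n amplitudes of an n-qubit state."""
    return (2 ** n_qubits) * bytes_per_amplitude

def extra_qubits(memory_factor):
    """Whole qubits gained when available memory grows by `memory_factor`."""
    return math.floor(math.log2(memory_factor))

for n in (28, 29, 30):
    print(n, "qubits:", statevector_bytes(n) / 2 ** 30, "GiB")

print("1 GPU -> 8 GPUs adds", extra_qubits(8), "qubits")
```

Under these assumptions a 30-qubit state occupies 16 GiB, and each extra qubit doubles that figure. Eight times the memory is three doublings, which is where the three-qubit gain from a full eight-GPU node comes from.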

Many researchers still dream of pressing as far up this exponential slope as they can manage. “If we are doing, let’s say, molecular dynamics simulation, we want a much bigger number of atoms and a bigger-scale simulation to have a more realistic simulation,” Yoo says.

GPUs can speed up quantum simulations

GPUs are key footholds. Swapping in a GPU for a CPU, Yoo says, can speed up a simulation of a quantum system by an order of magnitude. That kind of acceleration may not come as a surprise, but few simulations have been able to take full advantage because of bottlenecks in sending information between GPUs. Consequently, most simulations have stayed within the confines of one multi-GPU node or even a single GPU within that node.

Several behind-the-scenes advances are now making it possible to ease those bottlenecks. Closer to the surface, Nvidia’s cuQuantum software development kit makes it easier for researchers to run quantum simulations across multiple GPUs. Where GPUs previously needed to communicate via the CPU, creating an additional bottleneck, collective communications frameworks like Nvidia’s NCCL let users conduct operations like memory-to-memory copy directly between nodes.

cuQuantum pairs with quantum computing tool kits such as Canadian startup Xanadu’s PennyLane. A stalwart in the quantum machine-learning community, PennyLane lets researchers use machine-learning tools like PyTorch with quantum computers. While PennyLane is designed for use on real quantum hardware, its developers specifically added the capability to run simulations on multiple GPU nodes.

On paper, these advances can allow classical computers to simulate around 36 qubits. In practice, simulations of that size demand too many node hours to be practical. A more realistic gold standard today is in the upper 20s. Still, that’s an additional 10 qubits over what researchers could simulate just a few years ago.

To wit, Yoo performs his work on the Perlmutter supercomputer, which is built from several thousand Nvidia A100 GPUs—sought for their prowess in training and running AI models, even in China, where their sale is restricted by U.S. government export controls. Quite a few other supercomputers in the West use A100s as their backbones.

Classical hardware’s role in qubit simulations

Can classical hardware continue to grow in size? The challenge is immense. The jump from an Nvidia DGX with 160 gigabytes of GPU memory to one with 320 GB of GPU memory is a jump of just one qubit. Jinzhao Sun believes that classical attempts to simulate more than 100 qubits will likely fail.
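The one-qubit jump can be reproduced by running the memory arithmetic in reverse. This sketch assumes an exact state-vector simulation at 16 bytes per amplitude, decimal gigabytes, and no memory set aside for anything but the state itself; `max_qubits` is a hypothetical helper, not part of any Nvidia tool.

```python
# Assumption: one complex128 value (16 bytes) per amplitude.
BYTES_PER_AMPLITUDE = 16
GB = 10 ** 9  # assumption: decimal gigabytes

def max_qubits(memory_bytes, bytes_per_amplitude=BYTES_PER_AMPLITUDE):
    """Largest n such that 2**n amplitudes fit in `memory_bytes`."""
    amplitudes = memory_bytes // bytes_per_amplitude
    if amplitudes < 1:
        raise ValueError("not enough memory for even a single amplitude")
    # bit_length() - 1 is floor(log2(amplitudes)) in exact integer arithmetic.
    return amplitudes.bit_length() - 1

for gigabytes in (160, 320):
    print(gigabytes, "GB ->", max_qubits(gigabytes * GB), "qubits")
```

Under these assumptions, 160 GB holds a 33-qubit state and 320 GB holds a 34-qubit one. By the same arithmetic, storing an exact 100-qubit state vector would take 2^104 bytes.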

Real quantum hardware, at least on the surface, has already long outstripped those qubit numbers. IBM, for instance, has steadily ramped up the number of qubits in its own general-purpose quantum processors into the hundreds, with ambitious plans to push those counts into the thousands.

That does not mean simulation won’t play a part in a thousand-qubit future. Classical computers can play important roles in simulating parts of larger systems—validating their hardware or testing algorithms that might one day run in full size. It turns out that there is quite a lot you can do with 29 qubits.