In 2019 Michael Kagan was leading the development of accelerated networking technologies as chief technology officer at Mellanox Technologies, which he and eight colleagues had founded two decades earlier. Then in April 2020 Nvidia acquired the company for US $7 billion, and Kagan took over as CTO of that tech goliath—his dream job.

Nvidia is headquartered in Santa Clara, Calif., but Kagan works out of the company’s office in Israel.

At Mellanox, based in Yokneam Illit, Israel, Kagan had overseen the development of high-performance networking for computing and storage in cloud data centers. The company made networking equipment such as adapters, cables, and high-performance switches, as well as a new type of processor, the DPU. The company’s high-speed InfiniBand products can be found in most of the world’s fastest supercomputers, and its high-speed Ethernet products are in most cloud data centers, Kagan says.

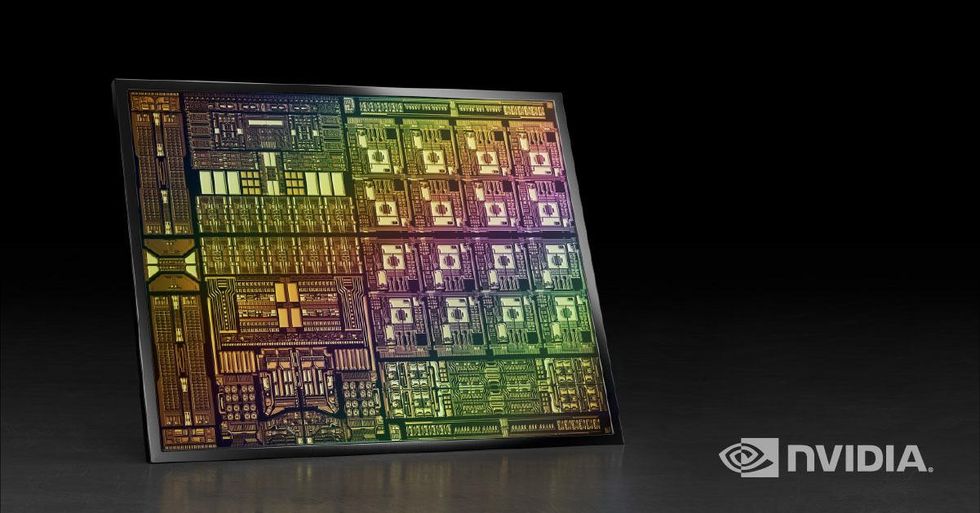

The IEEE senior member’s work is now focused on integrating a wealth of Nvidia technologies to build accelerated computing platforms, whose foundation is three chips: the GPU, the CPU, and the DPU, or data-processing unit. The DPU offloads, accelerates, and isolates data center workloads, reducing the load on the CPU and GPU.

“At Mellanox we worked on the data center interconnect, but at Nvidia we are connecting state-of-the-art computing to become a single unit of computing: the data center,” Kagan says. Interconnects are used to link multiple servers and combine the entire data center into one giant computing unit.

“I have access and an open door to Nvidia technologies,” he says. “That’s what makes my life exciting and interesting. We are building the computing of the future.”

From Intel to Mellanox

Kagan was born in St. Petersburg, Russia—then known as Leningrad. After he graduated high school in 1975, his family moved to Israel. As with many budding engineers, his curiosity led him to disassemble and reassemble things to figure out how they worked. And, with many engineers in the family, he says, pursuing an engineering career was an easy decision.

He attended the Technion, Israel’s Institute of Technology, because “it was one of the best engineering universities in the world,” he says. “The reason I picked electrical engineering is because it was considered to be the best faculty in the Technion.”

Kagan graduated in 1980 with a bachelor’s degree in electrical engineering. He joined Intel in Haifa, Israel, in 1983 as a design engineer and eventually relocated to the company’s offices in Hillsboro, Ore., where he worked on the 80387 floating-point coprocessor. A year later, after returning to Israel, Kagan served as an architect of the i860XP vector processor and then led and managed the design of the Pentium MMX microprocessor.

During his 16 years at Intel, he worked his way up to chief architect. In 1999 he was preparing to move his family to California, where he would lead a high-profile project for the company. Then a former coworker at Intel, Eyal Waldman, asked Kagan to join him and five other acquaintances to form Mellanox.

About Michael Kagan

Employer: Nvidia, Tel Aviv

Title: CTO

Member grade: Senior member

Alma mater: Technion, Israel’s Institute of Technology, Haifa

Kagan had been turning down offers to join startups nearly every week, he recalls, but Mellanox, with its team of cofounders and vision, drew him in. He says he saw it as a “compelling adventure, an opportunity to build a company with a culture based on the core values I grew up on: excellence, teamwork, and commitment.”

During his more than 21 years there, he says, he has had no regrets.

“It was one of the greatest decisions I’ve ever made,” he says. “It ended up benefiting all aspects of my life: professionally, financially—everything.”

InfiniBand, the startup’s breakout product, was designed for what today is known as cloud computing, Kagan says.

“We took the goodies of InfiniBand and bolted them on top of the standard Ethernet,” he says. “As a result, we became the vendor of the most advanced network for high-performance computing. More than half the machines on the TOP500 list of supercomputers use the Mellanox interconnect, now the Nvidia interconnect.

“Most of the cloud providers, such as Facebook, Azure, and Alibaba, use Nvidia’s networking and compute technologies. No matter what you do on the Internet, you’re most likely running through the chip that we designed.”

Kagan says the partnership between Mellanox and Nvidia was “natural,” as the two companies had been doing business together for nearly a decade.

“We delivered quite a few innovative solutions as independent companies,” he says.

BlueField and Omniverse supercomputers

As CTO of Nvidia for the past two years, Kagan has shifted his focus from pure networking to integrating multiple Nvidia technologies, including BlueField data-processing units and the Omniverse real-time graphics collaboration platform.

He says Nvidia’s vision for the data center of the future is based on its three chips: CPU, DPU, and GPU.

“These three pillars are connected with a very efficient and high-performance network that was originally developed at Mellanox and is being further developed at Nvidia,” he says.

Development of the BlueField DPUs is now a key priority for Nvidia. Each DPU is a data center infrastructure on a chip, optimized for high-performance computing, that offloads, accelerates, and isolates a variety of networking, storage, and security services.

“In the data center, you have no control over who your clients are,” Kagan says. “It may very well happen that a client is a bad guy who wants to penetrate his neighbors’ or your infrastructure. You’re better off isolating yourself and other customers from each other by having a segregated or different computing platform run the operating system, which is basically the infrastructure management, the resource management, and the provisioning.”

Kagan is particularly excited about the Omniverse, a new Nvidia product that uses Pixar’s Universal Scene Description software for creating virtual worlds—what has become known as the metaverse. Kagan describes the 3D platform as “creating a world by collecting data and making a physically accurate simulation of the world.”

Car manufacturers are using the Omniverse to test-drive autonomous vehicles. Instead of physically driving a car on different types of roads under various conditions, they can generate data from the virtual world to train the AI models.

“You can create situations that the car has to handle in the real world but that you don’t want it to meet in the real world, like a car crash,” Kagan says. “You don’t want to crash the car to train the model, but you do need to have the model be able to handle hazardous conditions on the road.”

Access to IEEE Information

Kagan joined IEEE in 1997. He says membership gives him access to information about technical topics that would otherwise be challenging to obtain.

“I enjoy this type of federated learning and being exposed to new things,” he says.

He adds that he likes connecting with members who are working on similar projects, because he always learns something new.

“Being connected to these people from more diverse communities helps a lot,” he says. “It inspires you to do your job in a different way.”

The Omniverse platform can generate millions of kilometers of synthetic driving data orders of magnitude faster than actually driving the car.

Nvidia is investing heavily in technology for self-driving cars, Kagan says.

The company is also building what it calls the most powerful AI supercomputer for climate science: Earth-2, a digital twin of the planet. Earth-2 is designed to continuously run models to predict climate and weather events at both the regional and global levels.

Kagan says the climate modeling technology will enable people to try mitigation techniques for global warming and see what their impact is likely to be in 50 years.

The company is also working closely with the health care industry to develop AI-based technologies. Its supercomputers are helping to identify cancer by generating synthetic data to enable researchers to train their models to better identify tumors. Its AI and accelerated computing products also assist with drug discovery and genome research, Kagan says.

“We are actually moving forward at a fairly nice pace,” he says. “But the thing is that you always need to reinvent yourself and do the new thing faster and better, and basically win with what you have and not look for infinite resources. This is what commitment means.”

This article has been updated from an earlier version.

This article appears in the March 2023 print issue as “Michael Kagan on the Future of High-Performance Computing.”

Kathy Pretz is editor in chief for The Institute, which covers all aspects of IEEE, its members, and the technology they're involved in. She has a bachelor's degree in applied communication from Rider University, in Lawrenceville, N.J., and holds a master's degree in corporate and public communication from Monmouth University, in West Long Branch, N.J.