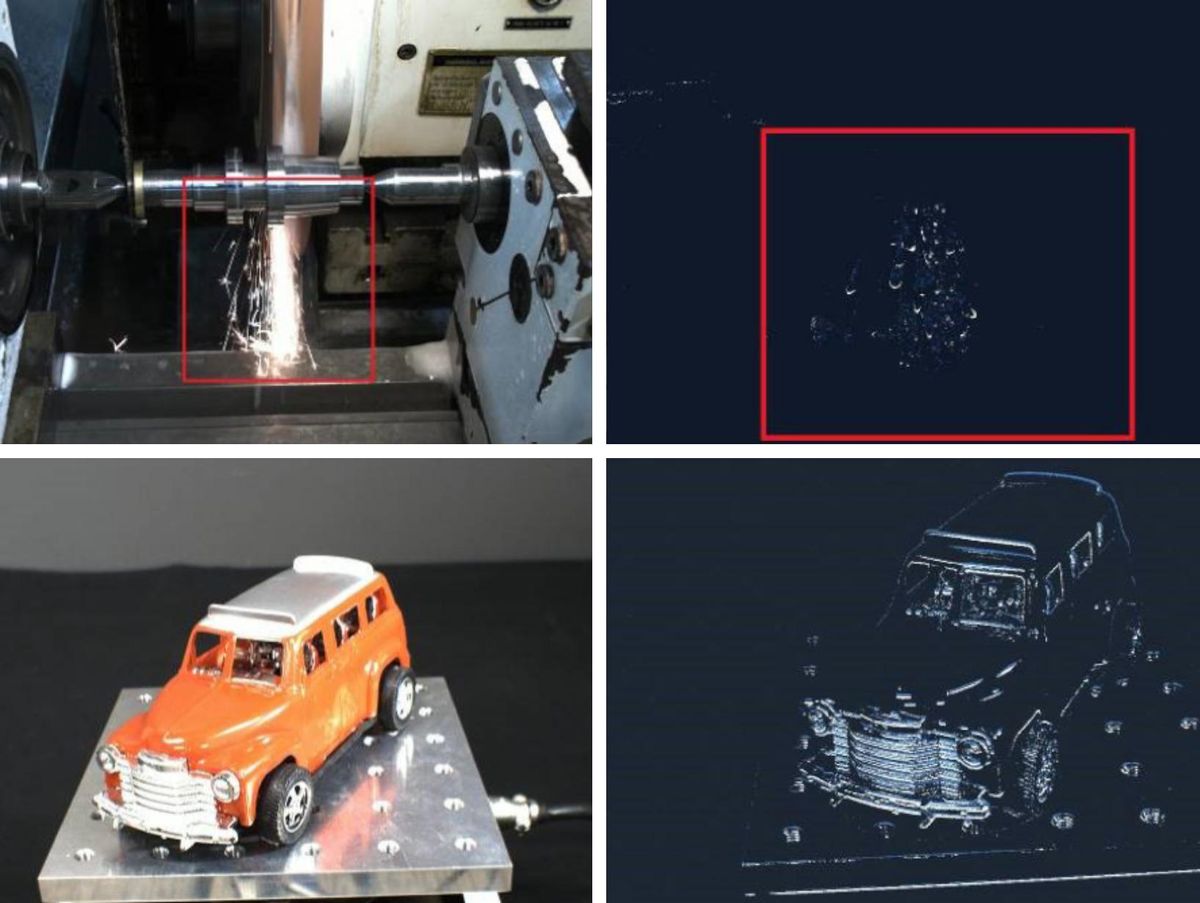

This month, Sony starts shipping its first high-resolution event-based camera chips. Ordinary cameras capture complete scenes at regular intervals even though most of the pixels in those frames don't change from one scene to another. The pixels in event-based cameras—a technology inspired by animal vision systems—only react if they detect a change in the amount of light falling on them. Therefore, they consume little power and generate much less data while capturing motion especially well. The two Sony chips—the 0.92 megapixel IMX636 and the smaller 0.33 megapixel IMX637—combine Prophesee's event-based circuits with Sony's 3D-chip stacking technology to produce a chip with the smallest event-based pixels on the market. Prophesee CEO Luca Verre explains what comes next for this neuromorphic technology.
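To make the contrast with frame-based capture concrete, here is a minimal sketch of the event-based principle described above. It is not Prophesee's or Sony's circuit: the log-intensity comparison, the `threshold` value, and the function name `events_from_frames` are all assumptions chosen for illustration. Each pixel reports an event only when its brightness has changed enough since the last time it fired.

```python
import numpy as np

def events_from_frames(frames, threshold=0.2):
    """Emit (t, y, x, polarity) for pixels whose log intensity moved by at
    least `threshold` since that pixel's last event (illustrative model only)."""
    frames = np.asarray(frames, dtype=float)
    log_ref = np.log(frames[0] + 1e-6)  # per-pixel reference level
    events = []
    for t in range(1, len(frames)):
        log_now = np.log(frames[t] + 1e-6)
        diff = log_now - log_ref
        ys, xs = np.nonzero(np.abs(diff) >= threshold)
        for y, x in zip(ys, xs):
            polarity = 1 if diff[y, x] > 0 else -1  # brighter or darker
            events.append((t, int(y), int(x), polarity))
            # Only pixels that fired update their reference level.
            log_ref[y, x] = log_now[y, x]
    return events

# A static 4x4 scene in which a single pixel brightens at t=1 and stays bright.
frames = np.full((3, 4, 4), 100.0)
frames[1:, 1, 2] = 200.0
print(events_from_frames(frames))
```

In this toy scene a frame camera would read out all 16 pixels three times, while the event model reports a single change.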

IEEE Spectrum: In announcing the IMX636 and 637, Sony highlighted industrial machine vision applications for them. But when we talked last year, augmented reality and automotive applications seemed top of mind.

Luca Verre: The scope is broader than just industrial. In the space of automotive, we are very active, in fact, in non-safety related applications. In April we announced a partnership with Xperi, which developed an in-cabin driver monitoring solution for autonomous driving. [Car makers want in-cabin monitoring of the driver to ensure they are attending to driving even when a car is in autonomous mode.] Safety-related applications [such as sensors for autonomous driving] are not in the scope of the IMX636 because that would require safety compliance, which this design is not meant for. However, there are a number of OEM and Tier 1 suppliers that are doing evaluation on it, fully aware that the sensor cannot be, as-is, put in mass production. They're testing it because they want to evaluate the technology's performance and then potentially consider pushing us and Sony to redesign it, to make it compliant with safety. Automotive safety remains an area of interest, but longer-term. In any case, if some of this evaluation work leads to a decision for product development, it would require quite a few years [before it appears in a car].

IEEE Spectrum: What's next for this sensor?

Luca Verre: For the next generation, we are working along three axes. One axis is around the reduction of the pixel pitch. Together with Sony, we made great progress by shrinking the pixel pitch from the 15 micrometers of Generation 3 down to 4.86 micrometers with Generation 4. But, of course, there is still considerable room for improvement by using a more advanced technology node or by using the now-maturing stacking technology of double and triple stacks. [The sensor is a photodiode chip stacked onto a CMOS chip.] You have the photodiode process, which is 90 nanometers, and then the intelligent part, the CMOS part, was developed on 40 nanometers, which is not necessarily a very aggressive node. Going for more aggressive nodes like 28 or 22 nm, the pixel pitch will shrink very much.

The benefits are clear: It's a benefit in terms of cost; it's a benefit in terms of reducing the optical format for the camera module, which means also reduction of cost at the system level; plus it allows integration in devices that require tighter space constraints. And then of course, the other related benefit is the fact that with the equivalent silicon surface, you can put more pixels in, so the resolution increases.

The event-based technology is not following necessarily the same race that we are still seeing in the conventional [color camera chips]; we are not shooting for tens of millions of pixels. It's not necessary for machine vision, unless you consider some very niche exotic applications.
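The link between pixel pitch and resolution can be checked with a little arithmetic. The sketch below uses the two pitches Verre quotes (15 and 4.86 micrometers) and assumes square pixels tiling the whole area, ignoring readout and peripheral circuitry; the helper name `pixels_per_mm2` is made up for this illustration.

```python
def pixels_per_mm2(pitch_um):
    """Pixels that fit in one square millimeter, assuming square pixels
    of the given pitch (in micrometers) and no space lost to other circuitry."""
    pixels_per_mm = 1000.0 / pitch_um
    return pixels_per_mm ** 2

gen3 = pixels_per_mm2(15.0)   # Generation 3 pitch quoted in the interview
gen4 = pixels_per_mm2(4.86)   # Generation 4 pitch quoted in the interview
ratio = gen4 / gen3           # equals (15 / 4.86) ** 2

print(f"Gen 3: {gen3:.0f} pixels/mm^2")
print(f"Gen 4: {gen4:.0f} pixels/mm^2")
print(f"Density gain: {ratio:.2f}x")
```

Under these simplifying assumptions, the same silicon area holds roughly 9.5 times as many pixels at the smaller pitch, which is why a shrink can be spent either on a smaller, cheaper chip or on more resolution.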

The second axis is around the further integration of processing capability. There is an opportunity to embed more processing capabilities inside the sensor to make the sensor even smarter than it is today. Today it's a smart sensor in the sense that it's processing the changes [in a scene]. It's also formatting these changes to make them more compatible with the conventional [system-on-chip] platform. But you can even push this reasoning further and think of doing some of the local processing inside the sensor [that's now done in the SoC processor].

The third one is related to power consumption. The sensor, by design, is actually low-power, but if we want to reach an extreme level of low power, there's still a way of optimizing it. If you look at the IMX636 gen 4, power is not necessarily optimized. In fact, what is being optimized more is the throughput. It's the capability to actually react to many changes in the scene and be able to correctly timestamp them at extremely high time precision. So in extreme situations where the scenes change a lot, the sensor has a power consumption that is equivalent to a conventional image sensor, although the time precision is much higher. You can argue that in those situations you are running at the equivalent of 1000 frames per second or even beyond. So it's normal that you consume as much as a 10 or 100 frame-per-second sensor.

[A lower power] sensor could be very appealing, especially for consumer devices or wearable devices where we know that there are functionalities related to eye tracking, attention monitoring, eye lock, that are becoming very relevant.

IEEE Spectrum: Is getting to lower power just a question of using a more advanced semiconductor technology node?

Luca Verre: Certainly using a more aggressive technology will help, but I think marginally. What will substantially help is to have some wake-up mode program in the sensor. For example, you can have an array where essentially only a few active pixels are always on. The rest are fully shut down. And then when you have reached a certain critical mass of events, you wake up everything else.
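The wake-up idea Verre describes can be sketched as a small state machine. This is only an illustration under stated assumptions, not a description of any Prophesee or Sony design: the class name `WakeUpArray`, the sliding time window, and the threshold values are all hypothetical, and "critical mass" is interpreted here as a count of events from the always-on pixels within a recent window.

```python
from collections import deque

class WakeUpArray:
    """Toy model: a few always-on 'sentinel' pixels watch the scene and
    the full array is powered up once enough of them fire close together."""

    def __init__(self, wake_threshold=5, window=10):
        self.wake_threshold = wake_threshold  # events needed to wake up (assumed)
        self.window = window                  # time window, arbitrary units (assumed)
        self.recent = deque()                 # timestamps of recent sentinel events
        self.awake = False                    # is the full array powered?

    def on_sentinel_event(self, timestamp):
        """Record one sentinel-pixel event and return whether the array is awake."""
        self.recent.append(timestamp)
        # Forget events that are older than the window.
        while self.recent and timestamp - self.recent[0] > self.window:
            self.recent.popleft()
        if len(self.recent) >= self.wake_threshold:
            self.awake = True
        return self.awake

sensor = WakeUpArray(wake_threshold=3, window=10)
for t in (0, 50, 100, 101, 102):
    print(t, sensor.on_sentinel_event(t))
```

Isolated events at times 0 and 50 leave the array asleep; the burst at 100 to 102 crosses the threshold and wakes it. A real design would also need a rule for going back to sleep, which this sketch leaves out.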

IEEE Spectrum: What's the journey been like from concept to commercial product?

Luca Verre: For me it has been a seven-year journey. For my co-founder, CTO Christoph Posch, it has been even longer because he started on the research in 2004.

To be honest with you, when I started I thought that the time to market would have been shorter. And over time, I realized that [the journey] was much more complex, for different reasons. The first and most important reason was the lack of an ecosystem. We are the pioneers, but being the pioneers also has some drawbacks. You are alone in front of everyone else, and you need to bring friends with you, because as a technology provider, you provide only a part of the solution. When I was pitching the story of Prophesee at the very beginning, it was a story of a sensor with huge benefit. And I was thinking, naively to be honest, that the benefits were so evident and straightforward that everyone would jump to it. But in reality, although everyone was interested, they were also seeing the challenge to integrate it: to build an algorithm, to put the sensor inside the camera module, to interface with a system on chip, to build an application. What we did with Prophesee over time was to work more at the system level. Of course, we kept developing the sensor, but we also developed more and more software assets.

By now, more than 1,500 unique users are experimenting with our software. Plus we implemented an evaluation camera and development kit, by connecting our sensor to an SoC platform. Today we are able to give to the ecosystem not only the sensor but much more than that. We can give them tools that can enable them to make the benefits clear.

The second part is more fundamental to the technology. When we started seven years ago, we had a chip that was huge; it was a 30-micrometer pixel pitch. And of course, we knew that the path to enter high volume applications was very long, and we needed to find this path using a stacked technology. And that's the reason why, four years ago, we convinced Sony to work together. Back then, the first backside-illumination 3D stacking technology was becoming available, but it was not really accessible. [In backside illumination, light travels through the back of the silicon to reach photosensors on the front. Sony's 3D stacking technology moves the logic that reads and controls the pixels to a separate silicon chip, allowing pixels to be more densely packed.] The two largest image sensor companies, Sony and Samsung, have their own process in-house. [Others' technologies] are not as advanced as the two market leaders. But we managed to develop a relationship with Sony, gain access to their technology, and manufacture the first industrial grade, commercially available, backside-illumination 3D stack event-based sensor that has a size that's compatible with larger volume consumer devices.

We knew from the beginning that this would have to be done, but the path to reach the point was not necessarily clear. Sony doesn't do this very often. The IMX636 is one of the only [sensors] that Sony has co-developed with an external partner. This, for me, is a reason for pride, because I think they believed in us, in our technology and our team.

This post was corrected on 15 October to clarify Prophesee's relationship with Sony.

Samuel K. Moore is the senior editor at IEEE Spectrum in charge of semiconductors coverage. An IEEE member, he has a bachelor's degree in biomedical engineering from Brown University and a master's degree in journalism from New York University.