Will China Attain Exascale Supercomputing in 2020?

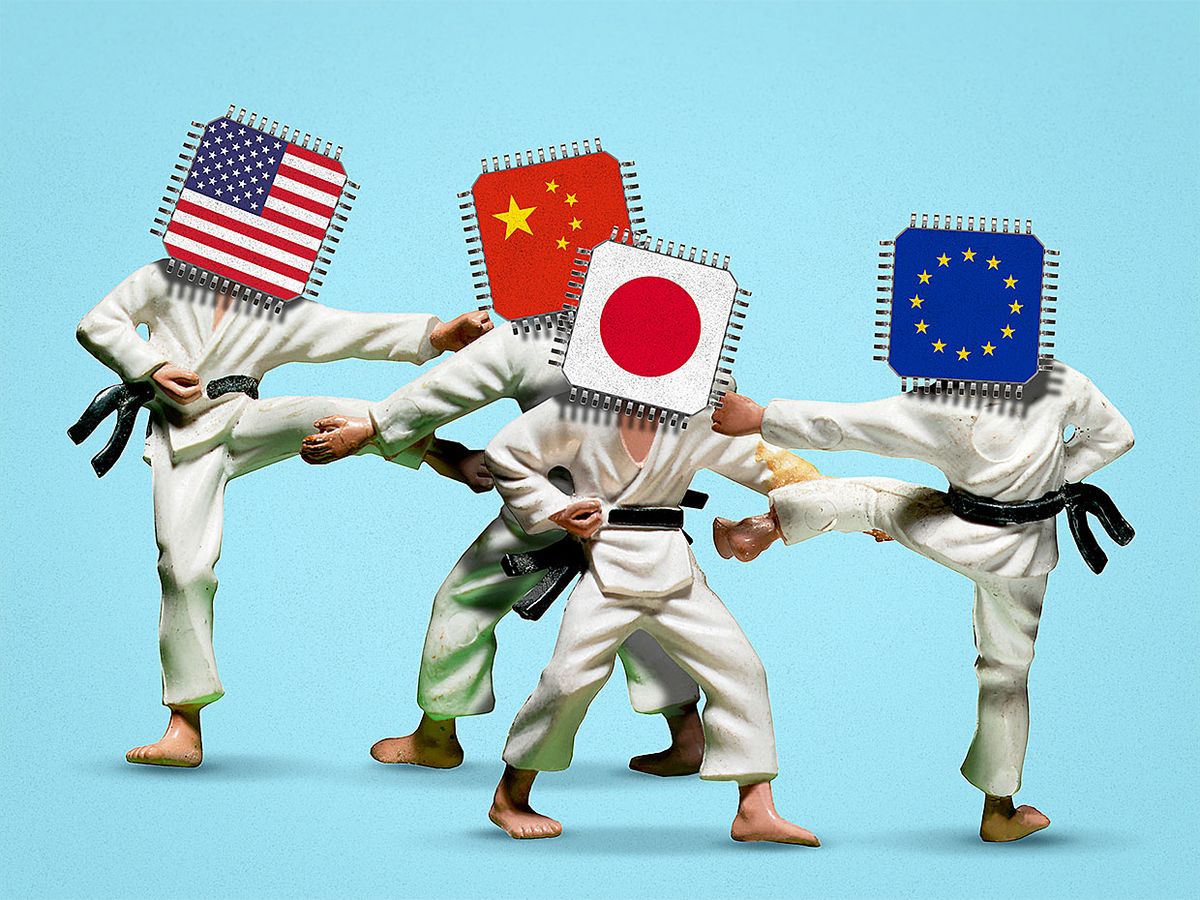

The U.S., China, Japan, and the EU are all striving to reach the next big milestone in supercomputing, but only China has claimed it will do so this year.

To the supercomputer world, what separates “peta” from “exa” is more than just three orders of magnitude.

As measured in floating-point operations per second (a.k.a. FLOPS), one petaflop (10¹⁵ FLOPS) falls in the middle of what might be called commodity high-performance computing (HPC). In this domain, hardware is hardware, and what matters most is increasing processing speed as cost-effectively as possible.

Now the United States, China, Japan, and the European Union are all striving to reach the exaflop (10¹⁸ FLOPS) scale. The Chinese have claimed they will hit that mark in 2020. But they haven't said so lately: Attempts to contact officials at the National Supercomputer Center, in Guangzhou; Tsinghua University, in Beijing; and Xi'an Jiaotong University yielded either no response or no comment.

It is also a fine question when exactly the exascale barrier should be deemed broken: when a computer's theoretical peak performance exceeds 1 exaflop, or when its maximum real-world compute speed hits that mark. Indeed, the sheer volume of compute power is less important than it used to be.
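The two definitions can give different answers for the same machine. The sketch below checks a machine against each; the machine itself is hypothetical, and the 70 percent ratio of sustained to peak speed is an assumed figure chosen for illustration, not a number from this article.

```python
# Thresholds in FLOPS (floating-point operations per second).
PETAFLOP = 10**15
EXAFLOP = 10**18


def crosses_exascale(peak_flops: float, sustained_flops: float) -> dict:
    """Report whether a machine clears 1 exaflop under each definition.

    peak_flops: theoretical peak performance of the hardware.
    sustained_flops: maximum real-world speed actually measured.
    """
    return {
        "peak": peak_flops >= EXAFLOP,
        "sustained": sustained_flops >= EXAFLOP,
    }


# Hypothetical machine: 1.2 exaflops peak, sustaining an assumed 70 percent of peak.
peak = 1.2 * EXAFLOP
sustained = 0.70 * peak  # 0.84 exaflop

print(EXAFLOP // PETAFLOP)  # 1000: three orders of magnitude
print(crosses_exascale(peak, sustained))  # {'peak': True, 'sustained': False}
```

Under these assumptions, the machine would count as exascale by its peak rating but not by its sustained speed.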

“Now it's more about customization, special-purpose systems,” says Bob Sorensen, vice president of research and technology with the HPC consulting firm Hyperion Research. “We're starting to see almost a trend towards specialization of HPC hardware, as opposed to a drive towards a one-size-fits-all commodity” approach.

The United States' exascale computing efforts, involving three separate machines, total US $1.8 billion for the hardware alone, says Jack Dongarra, a professor of electrical engineering and computer science at the University of Tennessee. He says exascale algorithms and applications may cost another $1.8 billion to develop.

And as for the electric bill, it's still unclear exactly how many megawatts one of these machines might gulp down. One recent ballpark estimate puts the power consumption of a projected Chinese exaflop system at 65 megawatts. If the machine ran continuously for one year, the electricity bill alone would come to about $60 million.
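The electricity figure follows from simple arithmetic. The sketch below reproduces it under one stated assumption: an electricity price of $0.10 per kilowatt-hour, which is a round illustrative rate and does not come from the estimate quoted above.

```python
def annual_electricity_cost(power_mw: float, price_per_kwh: float,
                            hours: float = 24 * 365) -> float:
    """Dollar cost of drawing power_mw megawatts continuously for the given hours."""
    energy_kwh = power_mw * 1_000 * hours  # 1 MW = 1,000 kW; 8,760 hours in a year
    return energy_kwh * price_per_kwh


# 65 MW is the quoted estimate; $0.10/kWh is an assumed rate for illustration.
cost = annual_electricity_cost(65, 0.10)
print(f"${cost / 1e6:.1f} million")  # $56.9 million
```

At that assumed rate, a year of continuous operation comes to roughly $57 million, consistent with the "about $60 million" estimate; a different electricity price scales the result proportionally.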

Dongarra says he's skeptical that any system, in China or anywhere else, will achieve one sustained exaflop anytime before 2021, or possibly even 2022. In the United States, he says, two exascale machines will be used for public research and development, including seismic analysis, weather and climate modeling, and AI research. The third will be reserved for national-security research, such as simulating nuclear weapons.

“The first one that'll be deployed will be at Argonne [National Laboratory, near Chicago], an open-science lab. That goes by the name Aurora or, sometimes, A21,” Dongarra says. It will have Intel processors, with Cray developing the interconnecting fabric between the more than 200 cabinets projected to house the supercomputer. A21's architecture will reportedly include Intel's Optane memory modules, a nonvolatile memory technology that sits between DRAM and flash memory in speed and cost. Peak capacity for the machine should reach 1 exaflop when it's deployed in 2021.

The other U.S. open-science machine, at Oak Ridge National Laboratory, in Tennessee, will be called Frontier and is projected to launch later in 2021 with a peak capacity in the neighborhood of 1.5 exaflops. Its AMD processors will be dispersed in more than 100 cabinets, with four graphics processing units for each CPU.

The third, El Capitan, will be operated out of Lawrence Livermore National Laboratory, in California. Its peak capacity is also projected to come in at 1.5 exaflops. Launching sometime in 2022, El Capitan will be restricted to users in the national security field.

China's three announced exascale projects, Dongarra says, also each have their own configurations and hardware. In part because of President Trump's China trade war, China will be developing its own processors and high-speed interconnects.

“China is very aggressive in high-performance computing,” Dongarra notes. “Back in 2001, the Top 500 list had no Chinese machines. Today they're dominant.” As of June 2019, China had 219 of the world's 500 fastest supercomputers, whereas the United States had 116. (Tally together the number of petaflops in each machine and the numbers come out a little different. In terms of performance, the United States has 38 percent of the world's HPC resources, whereas China has 30 percent.)
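Converting the June 2019 system counts into percentages makes the contrast with the performance shares explicit. The sketch below uses only the figures quoted above; the function name is a hypothetical helper introduced for illustration.

```python
TOTAL_SYSTEMS = 500  # size of the list

# Figures quoted above for June 2019.
system_counts = {"China": 219, "United States": 116}
performance_share_pct = {"China": 30, "United States": 38}


def system_share_pct(count: int, total: int = TOTAL_SYSTEMS) -> float:
    """Percentage of the listed systems held by one country."""
    return 100 * count / total


for country, count in system_counts.items():
    print(f"{country}: {system_share_pct(count):.1f}% of systems, "
          f"{performance_share_pct[country]}% of performance")
# China: 43.8% of systems, 30% of performance
# United States: 23.2% of systems, 38% of performance
```

China holds nearly twice as many of the listed machines, yet a smaller share of their combined performance, which implies the U.S. systems are, on average, considerably faster.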

China's three exascale systems are all built around CPUs manufactured in China. They are to be based at the National University of Defense Technology, using a yet-to-be-announced CPU; the National Research Center of Parallel Computer Engineering and Technology, using a nonaccelerated ARM-based CPU; and the Chinese HPC company Sugon, using an AMD-licensed x86 with accelerators from the Chinese company HyGon.

Japan's future exascale machine, Fugaku, is being jointly developed by Fujitsu and Riken, using ARM architecture. And not to be left out, the EU also has exascale projects in the works, the most interesting of which centers on a European processor initiative, which Dongarra speculates may use the open-source RISC-V architecture.

All four of the major players—China, the United States, Japan, and the EU—have gone all-in on building out their own CPU and accelerator technologies, Sorensen says. “It's a rebirth of interesting architectures," he says. “There's lots of innovation out there."