The logic chip industry is heading toward a fundamental change in the structure of transistors. Today’s transistors, called FinFETs, will give way to devices variously called nanosheet transistors, multibridge channel FETs, and gate-all-around transistors. Apart from the drive to make transistors that are both better performing and smaller, nanosheets add a degree of freedom to circuit design that FinFETs lack. Earlier this month at the IEEE International Solid-State Circuits Conference, Samsung engineers showed how that extra flexibility leads to on-chip memory cells that can be written to using hundreds of millivolts less potential, likely saving power on future chips.

Although Taiwan Semiconductor Manufacturing Co. (TSMC) plans to stay with FinFETs for its next-generation process, the 3-nanometer node, Samsung chose to forge ahead with its version of nanosheets, multibridge channel MOSFETs (MBCFETs). In FinFETs, the channel region, the part of the transistor through which current flows, is a vertical fin that protrudes up from the surrounding silicon. The gate drapes over the fin, covering it on three sides to control the flow of current through the channel. Nanosheets replace the fin with a stack of horizontal sheets of silicon. The gate completely surrounds each sheet.

“We have used FinFET transistors for about a decade, however at 3-nm we are using a gate all around transistor,” Samsung Electronics vice president Taejoong Song told attendees of the virtual conference. The new transistor “provides high speed, low power, and small area.”

But, as early nanosheet developers explained in IEEE Spectrum, the new device structure adds a degree of design flexibility that FinFETs lack. The key here is the “effective width”, or Weff, of the transistor channel. Generally, a wider channel can drive more current through it for a given voltage, effectively reducing its resistance. Because you can’t vary the height of the fin in a FinFET, the only way to boost Weff with today’s transistors is to add more fins per transistor. So with a FinFET you can double or triple Weff, but you can’t increase it by 25 percent or decrease it by 20 percent. You can, however, vary the width of the sheets in a nanosheet device, so a circuit using them can be composed of transistors with a variety of properties.
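The difference between stepping Weff in whole fins and tuning it continuously can be made concrete with a little arithmetic. The sketch below is only an illustration: it assumes the common first-order approximations that a fin contributes its two sidewalls plus its top to Weff, and that a sheet contributes its full perimeter. The function names and every dimension in it are hypothetical, not figures from Samsung's process.

```python
def finfet_weff(n_fins, fin_height_nm, fin_width_nm):
    """First-order Weff of a FinFET: the gate covers two sidewalls and the top of each fin."""
    return n_fins * (2 * fin_height_nm + fin_width_nm)


def nanosheet_weff(n_sheets, sheet_width_nm, sheet_thickness_nm):
    """First-order Weff of a nanosheet device: the gate wraps the full perimeter of each sheet."""
    return n_sheets * 2 * (sheet_width_nm + sheet_thickness_nm)


def sheet_width_for(target_weff_nm, n_sheets, sheet_thickness_nm):
    """Sheet width needed to hit a target Weff (inverse of nanosheet_weff)."""
    return target_weff_nm / (2 * n_sheets) - sheet_thickness_nm


# Hypothetical dimensions, chosen only for illustration.
FIN_HEIGHT, FIN_WIDTH = 50, 6
N_SHEETS, SHEET_THICKNESS = 3, 5

# FinFET: fin height is fixed, so Weff only moves in whole-fin steps.
finfet_steps = [finfet_weff(n, FIN_HEIGHT, FIN_WIDTH) for n in (1, 2, 3)]

# Nanosheet: start from a 25-nm-wide sheet and ask for 25 percent more Weff.
base = nanosheet_weff(N_SHEETS, 25, SHEET_THICKNESS)
wider = sheet_width_for(1.25 * base, N_SHEETS, SHEET_THICKNESS)

print("FinFET Weff options (nm):", finfet_steps)
print("Nanosheet base Weff (nm):", base)
print("Sheet width for +25% Weff (nm):", wider)
```

Under these assumed numbers, the FinFET can only reach integer multiples of its single-fin Weff, while the nanosheet reaches a 25 percent increase simply by drawing a wider sheet.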

“Recently, designers are facing many challenges for [achieving maximum device frequency] and low power,” Song said. “Due to this design flexibility SRAM… can be improved more.”

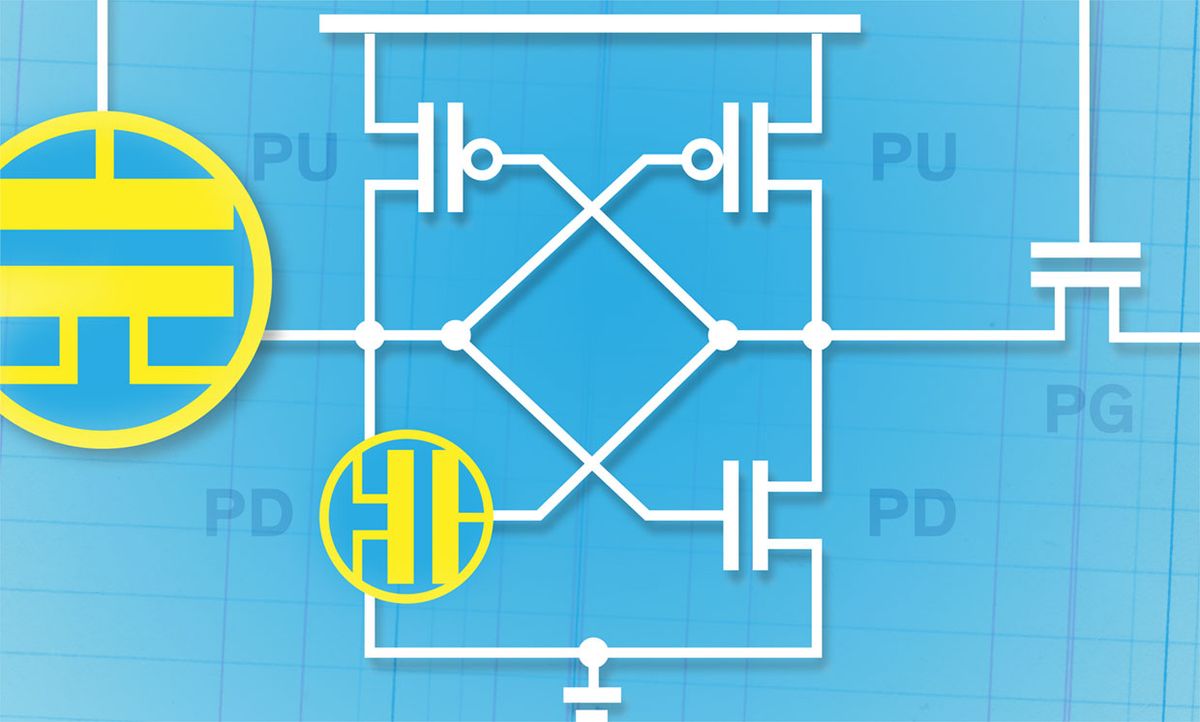

Song and his team took advantage of this flexibility to improve the performance of a potential next-generation SRAM. SRAM is a six-transistor memory cell predominantly used as cache memory on processors, and it’s also among the most densely packed parts of a logic chip. Samsung tested two schemes to improve SRAM’s write margin, the minimum voltage needed to switch the state of the cell. That value has been under pressure as chip interconnects have shrunk and their resistance has consequently increased.

SRAM’s six transistors can be divided into three pairs: the pass gates, the pull ups, and the pull downs. In a FinFET design, the Weff of all three types would be equal. But with nanosheet devices, the Samsung team was free to make alterations. In one scheme, they made the pass gates and the pull downs wider. In the other, they made the pass gates wider and the pull downs narrower.

The aim was to decrease the voltage needed to write to the SRAM cell without making the cell so unstable that reading it would accidentally flip a bit. The two schemes they came up with exploited these width adjustments, in particular widening the pass gate transistors relative to the pull up transistors, to arrive at an SRAM cell that writes at a voltage 230 mV lower than it otherwise would.
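The trade-off between easy writing and safe reading is often summarized in SRAM design by two width ratios: pass gate to pull up (bigger generally makes writing easier) and pull down to pass gate (bigger generally makes reading more stable). The sketch below applies those conventional ratios to the two schemes. It is a first-order illustration only: the `SramCell` class, the ratio names, and all the relative widths are assumptions for the sake of the example, since the actual widths Samsung used are not given here, and real cell behavior depends on much more than width.

```python
from dataclasses import dataclass


@dataclass
class SramCell:
    """Relative Weff of each transistor pair in a six-transistor cell (hypothetical units)."""
    pass_gate: float
    pull_up: float
    pull_down: float

    def write_ratio(self):
        # Pass gate vs. pull up: a larger value generally makes the cell easier to write.
        return self.pass_gate / self.pull_up

    def read_ratio(self):
        # Pull down vs. pass gate: a larger value generally makes reads less likely to flip a bit.
        return self.pull_down / self.pass_gate


# FinFET-style baseline: all three pairs share the same Weff.
baseline = SramCell(pass_gate=1.0, pull_up=1.0, pull_down=1.0)

# Scheme 1: wider pass gates and wider pull downs (widths are made up).
scheme_1 = SramCell(pass_gate=1.5, pull_up=1.0, pull_down=1.5)

# Scheme 2: wider pass gates and narrower pull downs (widths are made up).
scheme_2 = SramCell(pass_gate=1.5, pull_up=1.0, pull_down=0.75)

for name, cell in [("baseline", baseline), ("scheme 1", scheme_1), ("scheme 2", scheme_2)]:
    print(f"{name}: write ratio {cell.write_ratio():.2f}, read ratio {cell.read_ratio():.2f}")
```

With these assumed widths, both schemes raise the write ratio above the baseline, which is the width adjustment the text singles out. They differ in what happens to the read ratio, which is why read stability has to be checked alongside any gain in write margin.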

Samsung is expected to move to a 3-nm process with its MBCFET in 2022.

Samuel K. Moore is the senior editor at IEEE Spectrum in charge of semiconductors coverage. An IEEE member, he has a bachelor's degree in biomedical engineering from Brown University and a master's degree in journalism from New York University.