Researchers in Germany have tested a new form of high-speed data transfer that could boost connection speeds by five times or more. Laser light is still the carrier of information in this prototype technology, but the zeroes and ones are encoded according to the oscillating polarization in the light beam rather than its intensity.

The new technology also works at room temperature and consumes less power than the intensity method, in which a “1” is represented by a bright laser burst and a “0” by either a dim burst or no laser pulse at all.

Transmitting data via laser polarization, by contrast, would involve encoding a “1” as a burst of circularly polarized light whose corkscrew turns to the left, and a “0” as a burst whose corkscrew turns to the right.
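To make the difference between the two schemes concrete, here is a minimal Python sketch of the bit mappings described above. It models only the logical encoding, not the optics; the names `Handedness`, `encode_polarization`, `decode_polarization`, and `encode_intensity` are hypothetical and do not come from the researchers' work.

```python
from enum import Enum


class Handedness(Enum):
    """Direction in which the circularly polarized light 'corkscrews'."""
    LEFT = "L"
    RIGHT = "R"


def _check_bits(bits: str) -> None:
    # Reject anything that is not a string of '0' and '1' characters.
    if any(b not in "01" for b in bits):
        raise ValueError("bits must contain only '0' and '1'")


def encode_polarization(bits: str) -> list[Handedness]:
    """Map '1' to a left-handed burst and '0' to a right-handed burst."""
    _check_bits(bits)
    return [Handedness.LEFT if b == "1" else Handedness.RIGHT for b in bits]


def decode_polarization(bursts: list[Handedness]) -> str:
    """Recover the bit string from a sequence of polarized bursts."""
    return "".join("1" if h is Handedness.LEFT else "0" for h in bursts)


def encode_intensity(bits: str) -> list[float]:
    """Intensity scheme for comparison: '1' is a bright burst (1.0),
    '0' is no pulse (0.0). A dim burst would use a small nonzero value."""
    _check_bits(bits)
    return [1.0 if b == "1" else 0.0 for b in bits]


if __name__ == "__main__":
    message = "1011"
    bursts = encode_polarization(message)
    print([h.value for h in bursts])
    print(decode_polarization(bursts))
    print(encode_intensity(message))
```

In the polarization mapping every bit is sent as a burst of light, and only its handedness changes. In the intensity mapping, the brightness itself carries the bit.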

The laser polarization method, if it can scale beyond the lab bench, would provide potentially welcome relief to data centers and server farms, whose high-speed interconnects produce substantial waste heat that requires additional cooling.

By the researchers’ calculations, the polarization-based data transfer method can hit 240 gigabits per second but still generate only about 7 percent as much heat as a traditional connection running at 25 gigabits per second.
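Those two figures can be combined into a rough heat-per-bit comparison. The sketch below assumes that "7 percent as much heat" refers to the total heat output of each link running at its stated rate, which the reported numbers suggest but do not spell out. The function name `relative_heat_per_bit` is hypothetical.

```python
def relative_heat_per_bit(new_rate_gbps: float,
                          old_rate_gbps: float,
                          heat_fraction: float) -> float:
    """Heat per transmitted bit of the new link, relative to the old link.

    heat_fraction is the new link's total heat as a fraction of the old
    link's total heat (assumed interpretation of the reported 7 percent).
    """
    speedup = new_rate_gbps / old_rate_gbps  # how many times more bits per second
    return heat_fraction / speedup           # same time window, more bits, less heat


if __name__ == "__main__":
    ratio = relative_heat_per_bit(new_rate_gbps=240.0,
                                  old_rate_gbps=25.0,
                                  heat_fraction=0.07)
    print(f"speedup: {240.0 / 25.0:.1f}x")
    print(f"heat per bit relative to the 25 Gb/s link: {ratio:.2%}")
```

Under that assumption, the polarization link moves 9.6 times as many bits per second while producing 7 percent of the heat, so each bit costs roughly 0.7 percent of the heat it would on the traditional link.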

“Normally, you make the throughput faster by pumping the laser harder, which automatically means you consume a lot of power and produce a lot of heat,” says Nils Gerhardt, chair of photonics and terahertz technology at Ruhr-University Bochum in Germany. “Which is actually a big problem for today’s server farms. But the bandwidth in our concept does not depend on the power consumption. We have the same bandwidth even [at] low currents.”

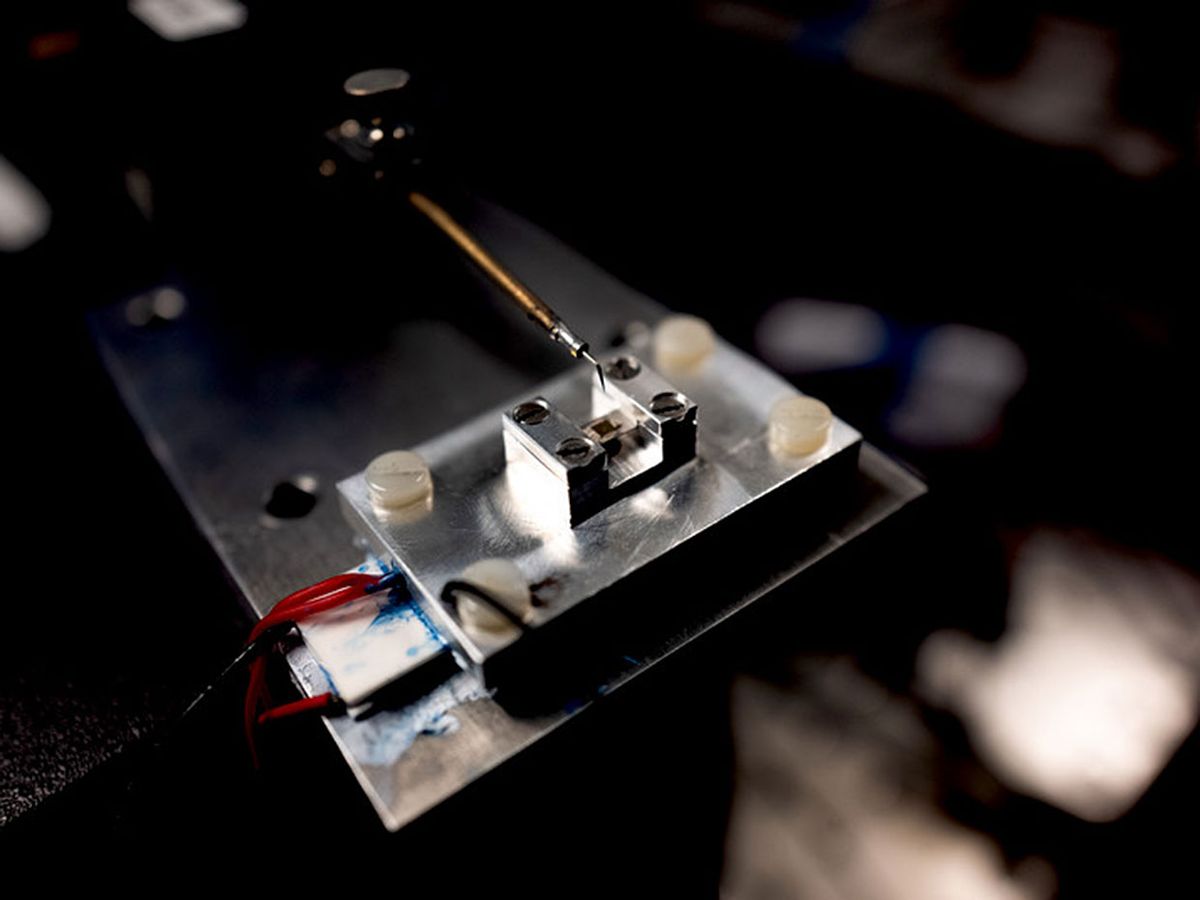

Gerhardt’s group, which published its work this month in the journal Nature (after posting an earlier draft to the preprint server arXiv), has so far developed only a proof-of-concept system in its lab. The current technology has a long backstory, too. Since 1997, researchers have been studying the connection between electron spin in certain semiconductor lasers and the polarization of the light they emit.

Yet, only in the past few years have the technologies converged to deliver data transfer speeds in excess of the fastest speeds provided by an intensity-based laser.

In 2016, for instance, Gerhardt’s group published findings from an earlier version of their technology that achieved 44-GHz polarization modulation—potentially translating into data transfer speeds of 44 gigabits per second. This is in the neighborhood of the known speed limit of current-generation light intensity technologies (estimated to be between 40 and 50 gigabits per second).

On the other hand, don’t write off the traditional methods just yet. There may be other ways for light-intensity-based data transmissions to compete in the hundreds-of-gigabits-per-second realm. But as Gerhardt and coauthors point out, even exotic technologies like “mode-locked semiconductor laser diodes” and “quantum cascade lasers” haven’t yet demonstrated intensity-based laser data transfer much above the 100-gigabit-per-second threshold.

By contrast, Gerhardt’s group estimates that 240 gigabits per second may be only a stepping stone to still higher data transfer speeds. For instance, their paper discusses gallium-arsenide-based semiconductor lasers that could theoretically achieve polarization data transfer speeds in excess of 500 gigabits per second.

Of course, proving that this technology could actually work in the real world and not just in the lab is an important next step.

“Every new server farm placed by Google or Facebook or Amazon needs to have higher bandwidth with less energy consumption,” Gerhardt says. “And in particular, the speed of the interconnect is a limiting factor right now.”

The technology Gerhardt’s group is testing, he says, would be more suitable for interconnecting nodes in a data center or server farm than, say, acting as an Internet backbone—which requires more time-tested technologies that can reliably run at high volumes.

As it is, they’re still trying to figure out how to modulate circular polarization back and forth at high rates with high reliability and repeatability. (At the moment, they achieve some of their clever high-speed polarization modulation by physically bending the circuit board without breaking it, which is more of a wonky lab trick than a manufacturing technique for mission-critical hardware in a data center.)

“Right now, this is a concept,” Gerhardt says. “We still have a lot of research before we can make a device that you can buy off the shelf. Many challenges to go.”

Margo Anderson is senior associate editor and telecommunications editor at IEEE Spectrum. She has a bachelor’s degree in physics and a master’s degree in astrophysics.