Using a new technique called “entanglement forging,” IBM researchers report in a new study that they can halve the number of qubits needed to run certain quantum simulations.

The nearest-term applications for quantum computers may be chemistry and physics simulations, such as simulating molecules to investigate novel battery designs or discover new drugs. However, today’s quantum hardware still has relatively few qubits and is error-prone, which limits its practical use.

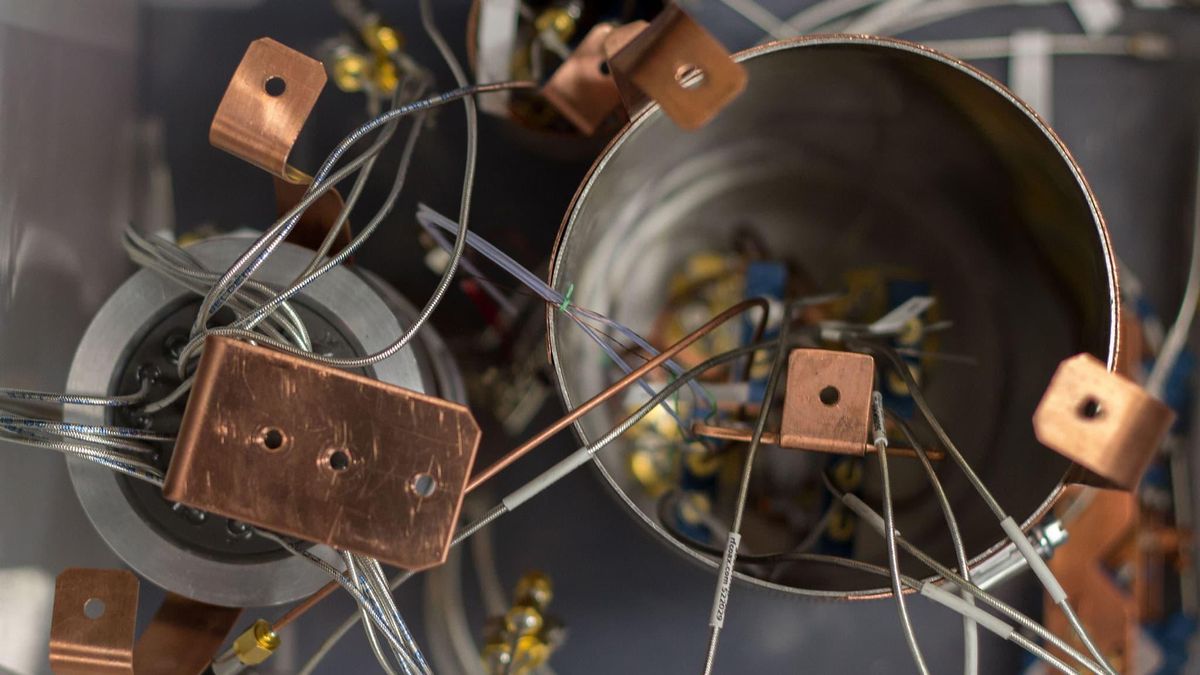

Now IBM scientists have developed a new method of quantum simulation, entanglement forging, that can simulate a quantum system such as a molecule using only half as many qubits as are typically needed. For instance, using IBM’s 27-qubit Falcon quantum processor, they showed that entanglement forging could accurately simulate the ground state of a water molecule (its lowest-energy state) using just five qubits instead of the standard 10.

Entanglement forging combines quantum and classical computing to effectively double the size of the system a quantum computer can simulate. Such hybrid approaches are already common in quantum computing. For instance, algorithms known as variational quantum eigensolvers combine quantum and classical computing to find optimal solutions to problems, such as a molecule’s ground state.

"The main idea is to tackle larger problems on given quantum hardware by absorbing part of the quantum computation into a classical computation," says study lead author Andrew Eddins, a research scientist at IBM Quantum in San Jose, Calif.

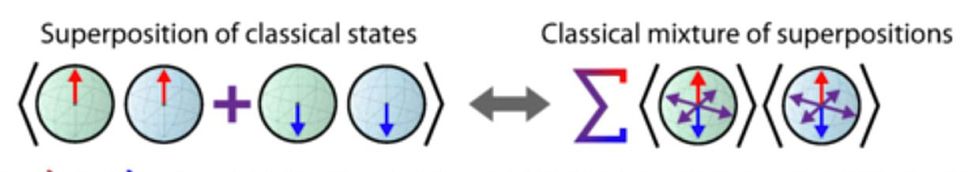

The new technique divides a quantum system into two halves. It models each half separately on a quantum computer, one after the other, and then uses classical computation to account for the entanglement between the two halves and knit the models together.
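The bookkeeping behind this split can be sketched classically. Assuming the simulated state is written in its Schmidt form |ψ⟩ = Σₙ λₙ |aₙ⟩ ⊗ |bₙ⟩ (a standard way to describe entanglement between two halves), the expectation value of an observable O_A ⊗ O_B acting on the two halves becomes Σₙ,ₘ λₙ λₘ ⟨aₙ|O_A|aₘ⟩ ⟨bₙ|O_B|bₘ⟩. Every matrix element involves only one half, and the coefficients λₙ tie them together. The NumPy sketch below checks that identity on a small random state. The function names are hypothetical and NumPy stands in for the quantum processor; it illustrates the idea rather than reproducing IBM’s implementation.

```python
import numpy as np


def schmidt_decompose(psi, dim_a, dim_b):
    """Return Schmidt coefficients and basis vectors of a bipartite pure state.

    Reshaping the state vector into a dim_a x dim_b matrix and taking its SVD
    gives |psi> = sum_n s[n] |a_n> (x) |b_n>, with |a_n> = u[:, n] and
    |b_n> = vh[n, :].
    """
    u, s, vh = np.linalg.svd(psi.reshape(dim_a, dim_b), full_matrices=False)
    return s, u, vh


def forged_expectation(psi, op_a, op_b):
    """<psi| op_a (x) op_b |psi> assembled from per-half matrix elements."""
    dim_a, dim_b = op_a.shape[0], op_b.shape[0]
    s, u, vh = schmidt_decompose(psi, dim_a, dim_b)
    # A[n, m] = <a_n| op_a |a_m>: depends on half A only
    mat_a = u.conj().T @ op_a @ u
    # B[n, m] = <b_n| op_b |b_m>: depends on half B only
    mat_b = vh.conj() @ op_b @ vh.T
    # Classical step: weight each (n, m) pair by s_n * s_m and sum
    return float(np.real(np.sum(np.outer(s, s) * mat_a * mat_b)))


def random_hermitian(dim, rng):
    """Random Hermitian matrix used as a stand-in observable."""
    x = rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))
    return (x + x.conj().T) / 2


rng = np.random.default_rng(seed=0)
dim = 4  # each half is two qubits
psi = rng.normal(size=dim * dim) + 1j * rng.normal(size=dim * dim)
psi /= np.linalg.norm(psi)
op_a, op_b = random_hermitian(dim, rng), random_hermitian(dim, rng)

# Reference value computed on the full (unsplit) state
direct = float(np.real(psi.conj() @ np.kron(op_a, op_b) @ psi))
forged = forged_expectation(psi, op_a, op_b)
print(direct, forged)
```

The two printed numbers agree to floating-point precision. Only the Schmidt coefficients and two small tables of per-half matrix elements enter the final sum, which is the step that would run on a classical computer.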

One drawback of entanglement forging is that it works best with quantum systems whose halves are only weakly entangled, meaning the quantum states of the two halves are only loosely correlated. The more strongly the two halves are entangled, the harder it becomes for the classical part of the computation to knit them back together accurately.
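Why weak entanglement helps can be read off the Schmidt coefficients. One common way to keep the classical bookkeeping small (an assumption here, not a detail reported in the article) is to keep only the k largest Schmidt terms. For a normalized state, the fidelity of the renormalized truncated state with the original equals the sum of the squared coefficients that were kept. The sketch compares a hypothetical weakly entangled state with a maximally entangled one; `truncate_schmidt` and `diagonal_state` are illustrative helpers, not part of any library.

```python
import numpy as np


def truncate_schmidt(psi, dim_a, dim_b, k):
    """Keep the k largest Schmidt terms of a bipartite pure state.

    Returns the renormalized truncated state and the total squared weight
    of the kept coefficients.
    """
    u, s, vh = np.linalg.svd(psi.reshape(dim_a, dim_b), full_matrices=False)
    kept = s[:k]  # numpy returns singular values in descending order
    approx = ((u[:, :k] * kept) @ vh[:k, :]).reshape(-1)
    return approx / np.linalg.norm(approx), float(np.sum(kept ** 2))


def fidelity(psi, phi):
    """Squared overlap |<psi|phi>|^2 between two normalized states."""
    return float(abs(np.vdot(psi, phi)) ** 2)


def diagonal_state(weights):
    """Hypothetical test state sum_n sqrt(w_n) |n>|n> with halves of size len(weights)."""
    d = len(weights)
    psi = np.zeros(d * d, dtype=complex)
    for n, w in enumerate(weights):
        psi[n * d + n] = np.sqrt(w)
    return psi


weak = diagonal_state([0.97, 0.02, 0.01, 0.0])      # nearly a product state
strong = diagonal_state([0.25, 0.25, 0.25, 0.25])   # maximally entangled halves

for name, state in [("weak", weak), ("strong", strong)]:
    for k in (1, 2):
        approx, kept = truncate_schmidt(state, 4, 4, k)
        print(name, k, round(fidelity(state, approx), 4), round(kept, 4))
```

With a single Schmidt term, the weakly entangled state keeps a fidelity of 0.97, while the maximally entangled state drops to 0.25; two terms give 0.99 and 0.5, respectively.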

Still, Eddins notes it may be possible to extend a different version of forging to more strongly entangled quantum systems. The IBM researchers are exploring a strategy where they essentially divide a system into its past and future states. “In theory, this approach, which we call ‘Heisenberg forging,’ could enable simulation of strongly entangled states,” Eddins says.

Another limitation of entanglement forging is run time. “Quantum computers are naturally efficient at simulating entangled states, and here we give up some of this efficiency to shift part of that task into a classical computation,” Eddins says. “The result is each circuit run on the quantum computer is smaller, but there are many more circuits to run, so the whole computation takes longer. Fortunately, when the two halves of the simulated system are only weakly entangled, this time cost can be relatively small.”
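The trade-off Eddins describes can be made concrete with a rough count. Assuming the state is truncated to its k largest Schmidt terms, the double sum in the expectation value has k diagonal terms plus k(k − 1)/2 distinct cross terms, so the number of per-half quantities grows roughly quadratically with k. This counts terms in the formula only; it is not IBM’s actual circuit count, which depends on details the article does not give. The weights, the 0.99 fidelity target, and both function names are hypothetical.

```python
def min_terms_for_fidelity(weights, target):
    """Smallest k whose k largest squared Schmidt coefficients sum to >= target.

    A small tolerance absorbs floating-point rounding in the running sum.
    """
    running = 0.0
    ordered = sorted(weights, reverse=True)
    for k, w in enumerate(ordered, start=1):
        running += w
        if running >= target - 1e-12:
            return k
    return len(ordered)


def pair_terms(k):
    """Distinct (n, m) pairs with n <= m in the double Schmidt sum.

    For Hermitian observables, the (m, n) term is the complex conjugate of
    the (n, m) term, so only the upper triangle needs to be evaluated.
    """
    return k * (k + 1) // 2


for name, weights in [("weak", [0.97, 0.02, 0.01, 0.0]),
                      ("strong", [0.25, 0.25, 0.25, 0.25])]:
    k = min_terms_for_fidelity(weights, target=0.99)
    print(name, "k =", k, "pair terms =", pair_terms(k))
```

Under these assumptions, the weakly entangled example needs two Schmidt terms (three distinct pairs) to reach the target, while the maximally entangled one needs all four (ten pairs), which mirrors why the time cost stays modest only when entanglement between the halves is weak.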

The scientists now aim to explore how well entanglement forging scales to larger quantum systems. Even if systems grow so large that the classical part of the computation becomes impractical, “entanglement forging may give useful approximate solutions that otherwise would not have been accessible with a given set of physical qubits,” Eddins says.

The scientists detailed their findings on 14 January in the journal PRX Quantum.

Charles Q. Choi is a science reporter who contributes regularly to IEEE Spectrum. He has written for Scientific American, The New York Times, Wired, and Science, among others.