Artificial intelligence driven by deep learning often runs on many computer chips working together in parallel. But the deep-learning algorithms, called neural networks, can run only so fast in this parallel computing setup because of the limited speed with which data flows between the different chips. The Japan-based multinational Fujitsu has come up with a novel solution that sidesteps this limitation by enabling larger neural networks to exist on a single chip.

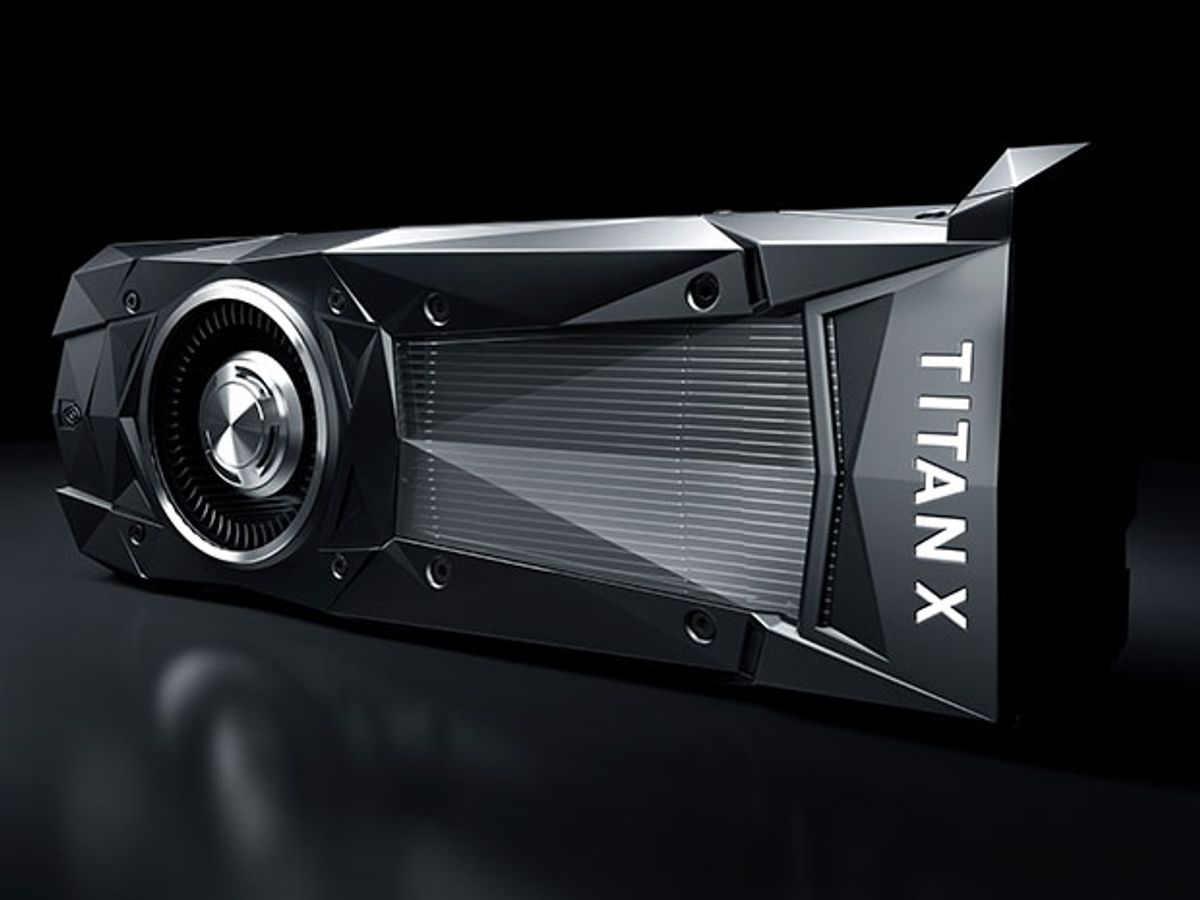

The neural networks used in deep learning typically run on graphics processing units (GPUs) that originated as components for generating and displaying images. By creating an efficiency shortcut in the calculations performed by neural networks, Fujitsu researchers reduced the amount of internal GPU memory used by 40 percent. Their solution allows for a larger and potentially more capable neural network to run on a single GPU.

“To the best of our knowledge, we are the first to propose this type of solution,” says Yasumoto Tomita, research manager of the Next-Generation Computer Systems Project at Fujitsu Laboratories Ltd.

Fujitsu announced its memory-efficiency technology at the 2016 IEEE International Workshop on Machine Learning for Signal Processing, held in Salerno, Italy, from 13 to 16 September.

Understanding Fujitsu’s solution requires a bit of a deep dive into deep learning. The neural networks involved in deep learning train on huge amounts of data in order to perform tasks such as distinguishing between faces or translating between languages. Such networks consist of many layers of artificial neurons that are connected to each other and can influence one another.

As training data flows through a neural network, each layer of neurons performs calculations that influence the next layer of neurons and, ultimately, the final result. “Weighted data” represents the connection strength between input and output neurons. The neurons themselves handle “intermediate data” that represent the data input to and output from each layer. A typical neural network must calculate error data for both the weighted data and intermediate data.
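For readers who think in code, the four kinds of data can be seen in a toy example. The sketch below assumes a single fully connected layer with no activation function; the function names, sizes, and numbers are illustrative and are not taken from Fujitsu's work.

```python
# Toy fully connected layer (illustrative only, not Fujitsu's implementation).

def forward(weights, inputs):
    """Compute the layer's output intermediate data, one value per output neuron."""
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]

def backward(weights, inputs, output_error):
    """Return (weight_error, input_error) for a linear layer."""
    # Weighted error data: built from the intermediate data fed into the layer.
    weight_error = [[e * x for x in inputs] for e in output_error]
    # Intermediate error data for the previous layer: built from the weights.
    input_error = [
        sum(weights[j][i] * output_error[j] for j in range(len(weights)))
        for i in range(len(inputs))
    ]
    return weight_error, input_error

weights = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]  # 3 output neurons, 2 inputs
inputs = [1.0, 2.0]                              # intermediate data entering the layer
outputs = forward(weights, inputs)               # [5.0, 11.0, 17.0]
output_error = [1.0, 0.0, -1.0]                  # error arriving from the next layer
weight_error, input_error = backward(weights, inputs, output_error)
print(weight_error)  # [[1.0, 2.0], [0.0, 0.0], [-1.0, -2.0]]
print(input_error)   # [-4.0, -4.0]
```

Note that the weight error never reads the weights and the input error never reads the inputs, so the two calculations do not depend on each other.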

The results of all those calculations must be held in the internal memory of the GPU running the neural network. Fujitsu figured out how to reuse certain parts of that limited memory by calculating intermediate error data from weighted data and generating weighted error data from intermediate data, with the two calculations done independently but at the same time.
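The general idea of reusing a buffer can be sketched in a few lines. The example below is an assumption-laden illustration, not Fujitsu's algorithm: it uses a hypothetical linear layer and simply overwrites the memory that held the layer's input with the intermediate error once the input is no longer needed.

```python
# Illustrative buffer reuse for one linear layer (hypothetical, not Fujitsu's method).

def backward_in_place(weights, buffer, output_error):
    """`buffer` holds the layer input on entry and the input error on exit."""
    # The weight error needs the original input, so compute it first.
    weight_error = [[e * x for x in buffer] for e in output_error]
    # The input error depends only on the weights and the output error,
    # so it can overwrite the input buffer without a separate allocation.
    for i in range(len(buffer)):
        buffer[i] = sum(
            weights[j][i] * output_error[j] for j in range(len(weights))
        )
    return weight_error

weights = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
buffer = [1.0, 2.0]           # intermediate data entering the layer
original_id = id(buffer)
weight_error = backward_in_place(weights, buffer, [1.0, 0.0, -1.0])
print(buffer)                 # [-4.0, -4.0], stored in the same list object
```

In this sketch the saving is one input-sized buffer per layer; how much memory a real system recovers depends on the network's structure.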

The 40 percent reduction in memory usage effectively allows a larger neural network with “roughly two times more layers or neurons” to operate on a single GPU, Tomita says. The greater neural network capability on a single GPU helps avoid some of the performance bottleneck that comes up when neural networks spread across many GPUs must exchange data during training. (The traditional method of spreading neural networks across many GPUs is an example of what is called “model parallelization.”)
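A back-of-the-envelope calculation shows how a memory saving translates into network size. The sketch below assumes, purely for illustration, that memory use grows in direct proportion to network size; real networks need not scale that way, and Tomita's “roughly two times” figure comes from Fujitsu, not from this arithmetic.

```python
def capacity_gain(memory_reduction):
    """How much larger a network fits in the same memory, assuming linear scaling."""
    return 1.0 / (1.0 - memory_reduction)

gain = capacity_gain(0.40)   # a 40 percent reduction in memory use
print(f"{gain:.2f}x")        # 1.67x
```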

Fujitsu has also been developing software technology that can speed up the sharing of data across multiple GPUs. Those software innovations may combine with this memory-efficiency tech to provide a significant boost to the company’s deep-learning ambitions.

“By combining the memory-efficiency technology developed by Fujitsu with GPU parallelization technology, fast learning on large-scale networks becomes possible, without model parallelization,” Tomita says.

By March 2017, Fujitsu aims to commercialize this memory-efficient deep learning within its consulting service, which goes by the name Human Centric AI Zinrai. That would allow Fujitsu to join the many other tech giants and startups trying to translate deep learning into online services for businesses that lack deep-learning expertise of their own.

Jeremy Hsu has been working as a science and technology journalist in New York City since 2008. He has written on subjects as diverse as supercomputing and wearable electronics for IEEE Spectrum. When he’s not trying to wrap his head around the latest quantum computing news for Spectrum, he also contributes to a variety of publications such as Scientific American, Discover, Popular Science, and others. He is a graduate of New York University’s Science, Health & Environmental Reporting Program.