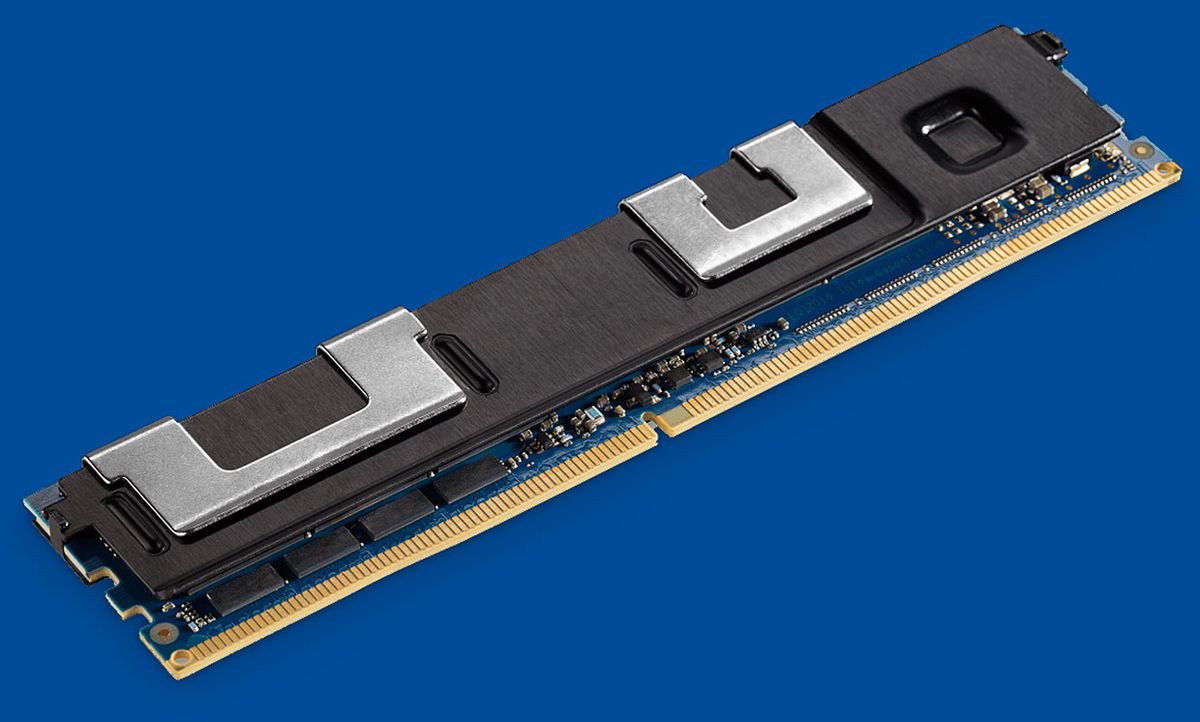

Intel has already released solid-state drives powered by the new 3D XPoint memory technology it pioneered with Micron Technology. But what got people excited was the potential to use the same technology as a computer’s main memory—potentially kicking aside DRAM and replacing it with something that doesn’t lose its data if the computer loses power.

Because 3D XPoint is nonvolatile, like flash memory, it should allow nearly instant recovery from power losses and software glitches. In addition, “this kind of memory is cheaper and denser than DRAM,” says Yan Solihin, professor of electrical and computer engineering at North Carolina State University. “So people are predicting—including myself—that eventually DRAM will gradually be replaced by this memory.”

But XPoint isn’t perfect. Writing data to it costs more energy and takes more time than writing to DRAM, Solihin points out. Last week at the 45th International Symposium on Computer Architecture, in Los Angeles, his team presented a way to cut down on the amount of writing needed, thus speeding up the memory.

Today, storing data that’s in a computer’s main memory, making it “persistent,” means converting it into a series of bytes for transport to a solid-state drive or hard disk drive, where it’s stored as a file. But if the memory itself is nonvolatile, just like the drive, there’s much less need for that time-consuming process.

Even so, you still need a process that prevents records from getting corrupted in the case of a crash. For example, you could be updating a mailing address. That would involve copying the original data from memory to the processor’s cache, which is SRAM that’s superfast because it’s integrated onto the processor chip. When the job is finished, the various fields have to be sent from the cache and stored in the nonvolatile memory. But what if, say, a crash occurred after all the address fields except the city field had made it to the nonvolatile memory? That would leave you with a record containing bad data when the program starts up again.
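The mailing-address scenario can be sketched in a few lines of Python. This is only an illustration: the addresses, the `update_with_crash` function, and the idea of modeling nonvolatile memory as a dictionary are all assumptions made for the example, not details from Solihin’s work.

```python
def update_with_crash(nvm_record, new_fields, crash_after):
    """Write new_fields into a copy of nvm_record one field at a time.

    crash_after is the number of fields that reach nonvolatile memory
    before the simulated crash; the rest are lost with the cache.
    """
    persisted = dict(nvm_record)
    for i, (key, value) in enumerate(new_fields.items()):
        if i == crash_after:
            break  # simulated crash: remaining fields never leave the cache
        persisted[key] = value
    return persisted


# Hypothetical record as it sits in nonvolatile memory before the update.
old = {"street": "1 Elm St", "zip": "27601", "city": "Raleigh"}
# Hypothetical new values, listed in the order they happen to be written back.
new = {"street": "9 Oak Ave", "zip": "90001", "city": "Los Angeles"}

# The crash hits after two of the three fields have been stored.
torn = update_with_crash(old, new, crash_after=2)
```

After the simulated crash, `torn` holds the new street and zip code but the old city, so it matches neither the old address nor the new one.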

The state-of-the-art method of preventing such a situation is called eager persistency, explains Solihin, a Fellow of the IEEE. Unfortunately, it carries a lot of overhead, adding about 9 percent to transaction times. It also increases the amount of writing to nonvolatile memory by about 21 percent, even when things are going well and nothing has crashed. Extra writing is a problem for nonvolatile memories generally, because they have a finite lifetime that’s measured in writes.

The NC State method, called lazy persistency, turns eager persistency’s method on its head. During normal operation, it adds very little overhead, but when something goes wrong, it needs a bit more work to set things right. Crashes are rare, says Solihin, “so we want to make the common case really fast.”

To understand how it works, you have to know a bit about how the cache ordinarily does its job. Solihin uses the analogy of a tool belt and a truck. If you’re a contractor on a job, you bring all your tools in the truck (main memory), but carry just what you’ll need right away on your belt (the cache). Then as you complete different jobs and need different tools, you go out to the truck and swap the least recently used tool on your belt for the next thing you need from the truck. The cache keeps the most recently used data and moves, or evicts, the least recently used data to main memory.
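The tool-belt analogy maps onto a least-recently-used cache, which can be sketched as follows. This is a simplified model, not a description of any real processor: the `LRUCache` class is hypothetical, main memory is an ordinary dictionary, and every evicted entry is written back, whereas real caches track which lines were actually modified.

```python
from collections import OrderedDict


class LRUCache:
    """Tiny write-back cache that evicts the least recently used entry."""

    def __init__(self, capacity, backing_store):
        self.capacity = capacity
        self.backing = backing_store  # the "truck": main memory
        self.lines = OrderedDict()    # the "belt": oldest entry first

    def read(self, key):
        if key in self.lines:
            self.lines.move_to_end(key)  # mark as most recently used
            return self.lines[key]
        value = self.backing[key]        # miss: fetch from main memory
        self._insert(key, value)
        return value

    def write(self, key, value):
        if key in self.lines:
            self.lines.move_to_end(key)
            self.lines[key] = value      # update stays in the cache for now
        else:
            self._insert(key, value)

    def _insert(self, key, value):
        if len(self.lines) >= self.capacity:
            # Natural eviction: the least recently used entry goes back
            # to main memory to make room for the new one.
            old_key, old_value = self.lines.popitem(last=False)
            self.backing[old_key] = old_value
        self.lines[key] = value


memory = {"a": 1, "b": 2, "c": 3}
cache = LRUCache(capacity=2, backing_store=memory)

cache.write("a", 10)                  # new value lives only in the cache
value_in_memory_before = memory["a"]  # main memory still holds the old value
cache.read("b")
cache.read("c")                       # cache is full, so "a" is evicted
```

The updated value of `a` reaches main memory only when the cache runs out of room and evicts it. That delay is the window in which a crash could lose data.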

In addition to this “natural eviction,” eager persistency adds artificial eviction at a high rate to guard against losing data during a crash. Rather than use eager persistency’s high-overhead scheme, lazy persistency just lets the existing cache system work, counting on the fact that the data in the cache will eventually be evicted to the nonvolatile XPoint memory. The difference is that lazy persistency also stores a number called a checksum, a small piece of data that can be used to determine whether a larger portion of data has changed. When things do go wrong, the processor calculates checksums for the data it still has and compares them to the checksums stored for the same data in the nonvolatile memory. If they don’t match up, the processor knows it has to go back and redo its work.
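The checksum comparison can be sketched roughly as follows. The article does not say which checksum the NC State team uses or how the work is divided up, so everything here is an assumption made for illustration: the CRC-32 checksum from Python’s `zlib` module, the `run_region` and `recover` functions, the region names, and the dictionary standing in for nonvolatile memory.

```python
import zlib


def checksum(values):
    """CRC-32 over a simple text encoding of the values (an assumed choice)."""
    return zlib.crc32(",".join(str(v) for v in values).encode())


def run_region(inputs):
    """Hypothetical unit of work: square each input."""
    return [x * x for x in inputs]


def recover(nvm, regions):
    """After a crash, redo every region whose stored data fails its checksum."""
    redone = []
    for name, inputs in regions.items():
        entry = nvm.get(name)
        if entry is None or checksum(entry["data"]) != entry["checksum"]:
            results = run_region(inputs)
            nvm[name] = {"data": results, "checksum": checksum(results)}
            redone.append(name)
    return redone


regions = {"r0": [4, 5, 6], "r1": [1, 2, 3]}

# Normal operation: each region's results and checksum end up in
# nonvolatile memory, modeled here as a plain dict.
nvm = {}
for name, inputs in regions.items():
    results = run_region(inputs)
    nvm[name] = {"data": results, "checksum": checksum(results)}

# Simulated crash: the last result of r1 never left the cache, so
# nonvolatile memory still holds a stale 0 in that slot.
nvm["r1"]["data"][2] = 0

redone = recover(nvm, regions)
```

Here only region `r1` fails its check and is recomputed, while `r0` is left alone. The cost of verifying and redoing work is paid only after a crash, which is the trade-off Solihin describes.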

Samuel K. Moore is the senior editor at IEEE Spectrum in charge of semiconductors coverage. An IEEE member, he has a bachelor's degree in biomedical engineering from Brown University and a master's degree in journalism from New York University.