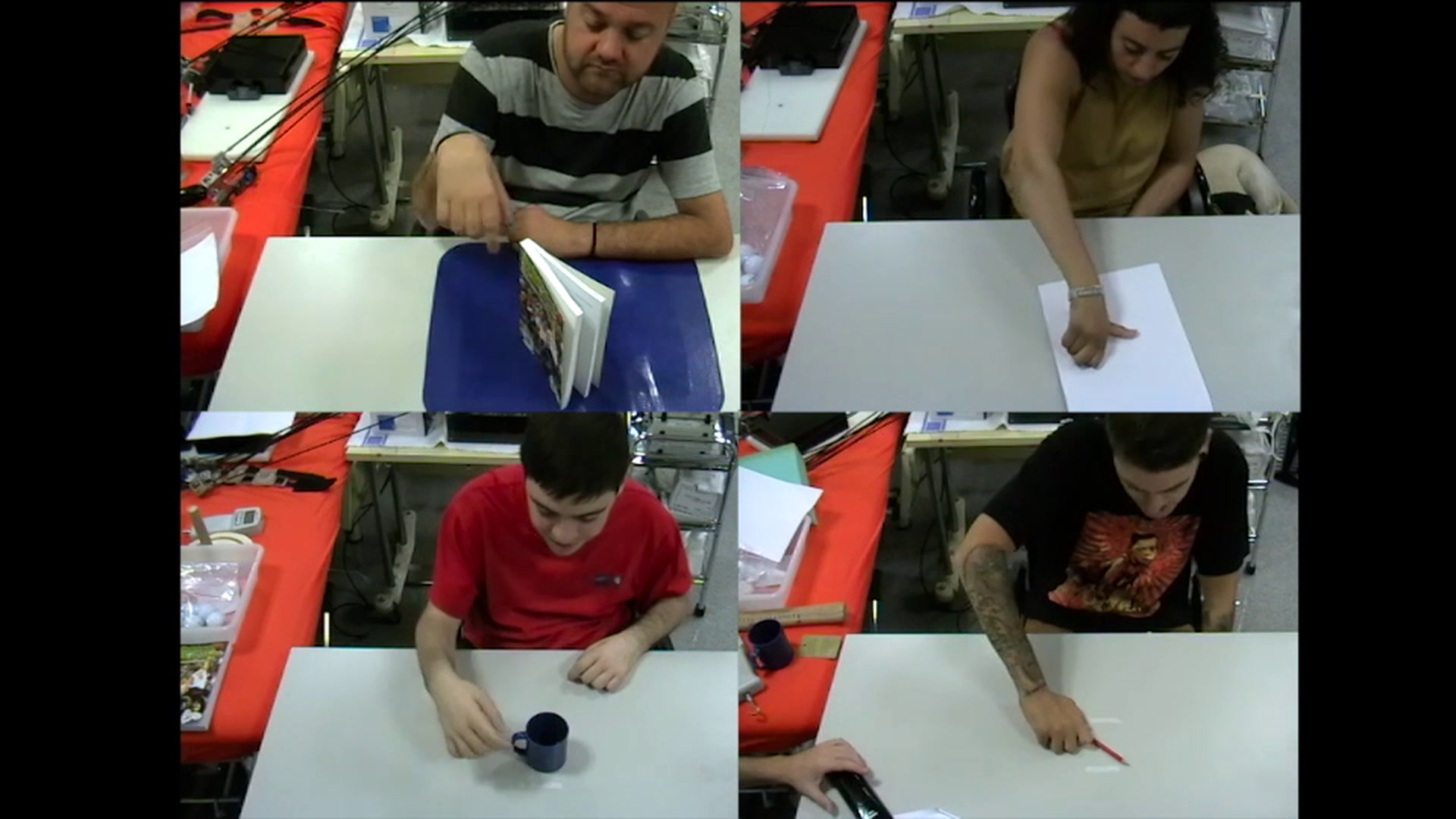

Researchers have developed a hand exoskeleton that can be controlled solely by thoughts and eye movements, according to a report published yesterday in the inaugural issue of Science Robotics. Six quadriplegic individuals tested the device in everyday situations; they successfully picked up coffee cups, ate donuts, squeezed sponges, and signed documents, the researchers reported.

The fact that the system functions outside the laboratory, in busy, unsupervised environments, is a dramatic improvement upon previous brain-controlled robotic limbs, says Surjo Soekadar, a neuroscientist and physician at the University Hospital of Tübingen in Tübingen, Germany. Soekadar led the study.

The system works by translating the brain’s electrical activity into actions in the robotic hand. The user, wearing a mesh cap with five electrodes, thinks about grasping an object. This produces a pattern of brain activity detected by electroencephalography, or EEG. An algorithm on a tablet computer identifies these specific brain activity patterns and translates them into control signals. A control box and a set of actuators then command the hand exoskeleton to carry out the coordinated movements that add up to the grasping motion.

The whole process—from intention to grasp—takes just over a second, says Soekadar. That’s probably not fast enough to catch a ball, but it’s suitable for picking up a cup or turning a knob. The hand exoskeleton itself was developed by Nicola Vitiello and Maria Chiara Carrozza at The BioRobotics Institute of the Scuola Superiore Sant’Anna in Pisa, Italy.

So, why did previous demonstrations of brain-machine interfaces (BMIs) keep participants stuck in the lab while this one allowed them to use the hand exoskeleton in real-world environments such as restaurants? The distractions and unexpected events in public spaces had made it difficult for a person to focus on the thoughts required to activate such a system.

Soekadar’s team overcame that by adding specific voluntary eye movements to the technique for controlling the system. Those movements were measured using electrooculography, or EOG, which is the measurement of electrical potential between electrodes placed near the eye. Participants could veto a grasp or unlock the grip by moving their eyes to the far right or far left—an uncommon motion unlikely to trip up the algorithms.
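To make the interplay between the two signals concrete, here is a minimal sketch of how a grasp intention from EEG and a veto from EOG might be combined in one control step. It is not the team's published algorithm: the thresholds, the normalized intent score, and the names `Sample`, `next_state`, and `run` are all assumptions made for illustration, and the sketch treats a far-left and a far-right eye movement identically.

```python
from dataclasses import dataclass
from enum import Enum


class HandState(Enum):
    OPEN = "open"
    CLOSED = "closed"


# Hypothetical thresholds, chosen only for illustration.
EEG_INTENT_THRESHOLD = 0.6      # normalized "grasp intention" score in [0, 1]
EOG_VETO_THRESHOLD_UV = 300.0   # horizontal EOG deflection, in microvolts


@dataclass
class Sample:
    eeg_intent: float         # output of some EEG classifier (assumed)
    eog_horizontal_uv: float  # signed horizontal EOG amplitude (assumed)


def next_state(state: HandState, sample: Sample) -> HandState:
    """Return the hand state after one control step."""
    # A large, deliberate eye movement to either side takes priority:
    # it cancels a pending grasp or releases an existing grip.
    if abs(sample.eog_horizontal_uv) >= EOG_VETO_THRESHOLD_UV:
        return HandState.OPEN
    # Otherwise, a sufficiently strong grasp intention closes an open hand.
    if state is HandState.OPEN and sample.eeg_intent >= EEG_INTENT_THRESHOLD:
        return HandState.CLOSED
    # No veto and no new intention: keep the current state.
    return state


def run(samples):
    """Feed a sequence of samples through the controller, starting open."""
    state = HandState.OPEN
    history = []
    for sample in samples:
        state = next_state(state, sample)
        history.append(state)
    return history
```

Checking the eye-movement veto first means a strong brain signal cannot override it. That ordering is a design choice in this sketch, in keeping with the article's description of the eye movement as a way to veto a grasp or unlock the grip.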

The device was tested by six people with spinal cord injuries at the neck’s C5 and C6 vertebrae, a common injury site. All could move their shoulders and elbows, and some had limited wrist mobility, but none of them had any control over their fingers.

The system falls short of enabling people to maneuver individual fingers, says Soekadar. That requires more precise recording of brain activity, which is currently possible only with implanted BMI devices. Implanting them is an invasive procedure with several drawbacks.

Soekadar says it might be possible to use the hand exoskeletons to restore full function to the hands. He points to a widely publicized experiment in which paraplegic participants who trained to walk using a BMI and a lower-body robotic exoskeleton regained voluntary leg movements and the sensation of pain after 12 months of training.

Perhaps the same could be true for the hand, Soekadar says. That’s another study, of course, but one that could be made cheaper with a system like Soekadar’s. The ability to take the device out of the lab and into the home would enable participants to train on their own time, reducing costs—not a bad sales pitch to the funding organizations.

Emily Waltz is a features editor at Spectrum covering power and energy. Prior to joining the staff in January 2024, Emily spent 18 years as a freelance journalist covering biotechnology, primarily for the Nature research journals and Spectrum. Her work has also appeared in Scientific American, Discover, Outside, and the New York Times. Emily has a master's degree from Columbia University Graduate School of Journalism and an undergraduate degree from Vanderbilt University. With every word she writes, Emily strives to say something true and useful. She posts on Twitter/X @EmWaltz and her portfolio can be found on her website.