No matter how efficient we make our transistors and memory cells, they will always consume a tiny, fixed minimum amount of energy set by the second law of thermodynamics, a new study suggests. The question now is how close real-world devices can get to this fundamental value.

The idea that there might be such a universal limit stems from a 1961 paper by Rolf Landauer of IBM. Landauer postulated that any time a bit of information is erased or reset, heat is released. At room temperature, such an irreversible computation, the sort used in today’s computers, dissipates at least about 3 × 10⁻²¹ joules, or 3 zeptojoules, per bit.
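Landauer's bound is usually written as E_min = k_B·T·ln 2, where k_B is Boltzmann's constant and T is the absolute temperature. As a quick check of the 3-zeptojoule figure, here is a minimal Python sketch of that arithmetic, assuming "room temperature" means 300 K:

```python
import math

# Boltzmann constant in J/K (exact under the 2019 SI redefinition)
K_B = 1.380649e-23

def landauer_limit(temperature_k: float) -> float:
    """Minimum heat, in joules, dissipated when one bit is erased at temperature T (kelvin)."""
    return K_B * temperature_k * math.log(2)

if __name__ == "__main__":
    # Assumption: 300 K stands in for "room temperature"
    e_min = landauer_limit(300.0)
    print(f"{e_min:.3e} J = {e_min / 1e-21:.2f} zJ")
```

At 300 K this prints about 2.87 zJ, which rounds to the article's figure of roughly 3 zeptojoules. Because the bound scales linearly with temperature, a colder erasure can, in principle, dissipate proportionally less heat.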

In 2012, Eric Lutz, now at the University of Erlangen-Nuremberg, and colleagues demonstrated that this limit could be reached by using a laser trap to move a 2-micrometer-wide glass bead between two potential wells.

Now a group led by Jeffrey Bokor at the University of California, Berkeley has shown that this limit also seems to hold for a system that’s of more practical relevance to computing: bits made of nanomagnets. Small magnetic patches are already the staple of hard disks. They also form the basis of the bits inside next-generation nanomagnetic memories like STT-MRAM and are being eyed as a possible form of energy-efficient logic.

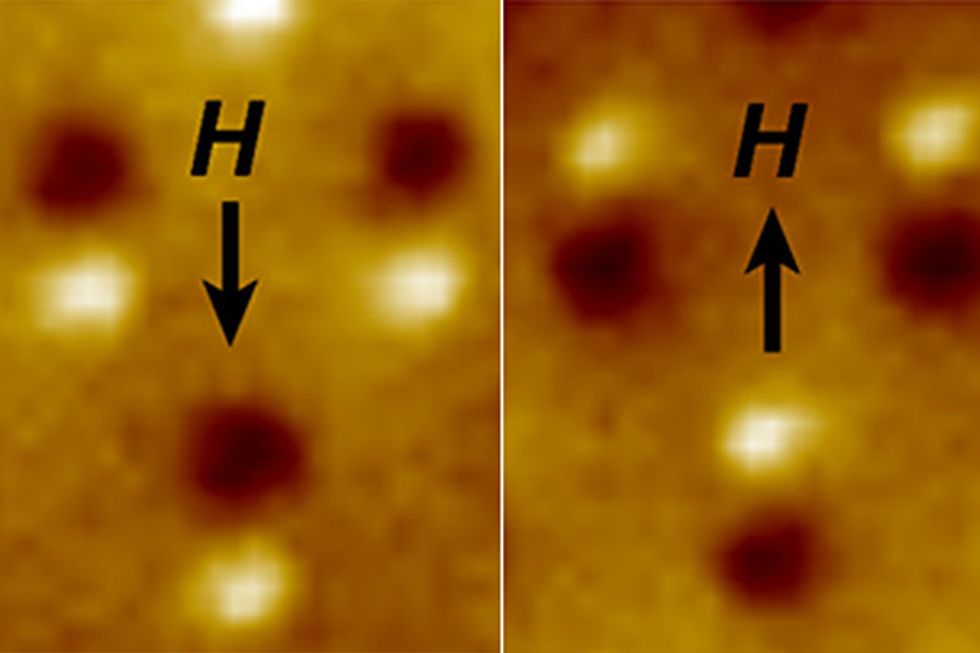

To see exactly how much energy is consumed in the switching process, Bokor and colleagues built an array of slightly elongated Permalloy magnetic dots less than 100 nanometers in radius. The nanomagnets were small enough that each had just one magnetic domain: the magnetic moments of all its atoms were aligned. The north pole of such an elongated nanomagnet naturally points in one of two directions, up or down, along its longer axis. These two orientations can represent the binary values “0” and “1”. (See our 2015 feature article on nanomagnetic computing for more on the basics of these devices.)

Bokor’s group used magnetic fields to rotate the orientation of the nanomagnets’ poles and so erase the information stored in them. By carefully measuring the strength and direction of the nanomagnets’ magnetization as a function of the applied magnetic field, the team found that the nanomagnets dissipated about 6 zeptojoules of energy on average near room temperature. That’s about twice the Landauer limit but consistent with it once the experiment’s uncertainty is taken into account. In a paper on the work, published on Friday in Science Advances, the team speculates that the somewhat higher value might have come from slight variations in the orientation of the nanomagnets; simulations suggest ideal nanomagnets should switch at the limit.
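One standard way to turn magnetization-versus-field measurements into an energy figure is to integrate the applied field over the change in magnetization: the energy dissipated per unit volume around a closed loop is μ₀∮H dM, the area of the M–H hysteresis loop scaled by μ₀. The Python sketch below is an illustrative assumption, not the Berkeley team's published analysis. It computes that area with the shoelace formula for a loop sampled as (H, M) points; the function names `loop_area` and `dissipated_energy` are hypothetical.

```python
import math
from typing import Sequence

# Illustrative sketch (assumption): not the Berkeley team's published analysis.
# Vacuum permeability in T·m/A; the revised-SI value differs from 4*pi*1e-7 by under a part per billion.
MU_0 = 4e-7 * math.pi

def loop_area(h: Sequence[float], m: Sequence[float]) -> float:
    """Area enclosed by the closed polygon (h[i], m[i]), via the shoelace formula.

    Approximates |∮ H dM| for a sampled loop; units are (A/m)^2 when H and M are in A/m.
    """
    if len(h) != len(m) or len(h) < 3:
        raise ValueError("need matching H and M sequences with at least 3 points")
    twice_area = 0.0
    for i in range(len(h)):
        j = (i + 1) % len(h)  # wrap around so the last point connects back to the first
        twice_area += h[i] * m[j] - h[j] * m[i]
    return abs(twice_area) / 2.0

def dissipated_energy(h: Sequence[float], m: Sequence[float], volume_m3: float) -> float:
    """Energy in joules dissipated per traversal of the loop: mu_0 * V * loop area."""
    return MU_0 * volume_m3 * loop_area(h, m)
```

For an idealized square loop with vertices (−H_c, −M_s), (H_c, −M_s), (H_c, M_s), (−H_c, M_s), `loop_area` returns 4·H_c·M_s. A real erasure protocol need not trace a full symmetric loop, so treat this only as an illustration of the bookkeeping, not of the measured 6 zJ.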

“At the end of the day, it confirms that Landauer’s theory seems to be correct, that there is a minimum amount of energy that one can’t get below,” says Bokor. Still, he adds, we’re nowhere close to zeptojoule switches: “The computers that we have today operate far, far from this Landauer limit, probably on the order of a million times more energy per operation than what Landauer showed.”

A nanomagnet or transistor might in principle dissipate as little as 3 zeptojoules when it switches, but there is a substantial amount of overhead: all the energy that must be put in to make that switch happen. In this experiment, for example, the zeptojoule figure doesn’t include the energy needed to create the magnetic fields that manipulate the nanomagnets.

The success of future devices will depend on driving down that overhead and so improving the overall energy efficiency of a switch. “How good you can do depends on how cleverly you can engineer your device,” Bokor says. At this point, he says, it’s not clear how low we could go.

Eric Lutz, who performed the earlier glass-bead work, says the new experiment is a departure from previous tests, which probed the Landauer limit in “systems that are far from direct technological relevance.” The nanomagnet experiment, he says, is “a significant step trying to bridge fundamental research and applied sciences like engineering.” He notes that another group, based at the University of Perugia, has also been exploring the Landauer limit in nanomagnets. The abstract of a paper from that group, published earlier this year, reports results ranging from about 10 to 1000 times the Landauer limit.

One hope is that we might circumvent the Landauer limit by moving from today’s computing paradigm to a new one: reversible computing. “The idea of reversible computing is that you do the operation and then you run it backwards and you get the energy back,” Bokor explains. In the end, though, he says, the success of that approach will also come down to having a “clever implementation,” one that can get that pesky overhead down to a minimum.
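The difference between ordinary and reversible logic can be illustrated by counting information. A conventional AND gate maps four possible inputs onto two outputs, so the inputs cannot be recovered from the result, and by Landauer's argument that lost information carries a heat cost. A reversible gate is a one-to-one mapping that can be run backwards. The Python sketch below uses the Toffoli (controlled-controlled-NOT) gate, a textbook reversible gate chosen here purely for illustration; neither the article nor the Berkeley team names it, and the helper `is_injective` is hypothetical.

```python
from itertools import product

# Illustration only: the Toffoli gate is a textbook reversible gate, not one named in the article.

def and_gate(a: int, b: int) -> int:
    """Conventional, irreversible AND: two input bits in, one bit out."""
    return a & b

def toffoli(a: int, b: int, c: int) -> tuple[int, int, int]:
    """Reversible Toffoli gate: flips c only when a and b are both 1."""
    return a, b, c ^ (a & b)

def is_injective(fn, n_inputs: int) -> bool:
    """True if fn maps every distinct n-bit input to a distinct output (no information lost)."""
    outputs = [fn(*bits) for bits in product((0, 1), repeat=n_inputs)]
    return len(set(outputs)) == len(outputs)

if __name__ == "__main__":
    # AND sends 4 inputs to only 2 distinct outputs, so information is erased
    print("AND reversible?", is_injective(and_gate, 2))
    # Toffoli permutes the 8 three-bit states, so nothing is erased
    print("Toffoli reversible?", is_injective(toffoli, 3))
    # Applying the gate a second time undoes it: "run it backwards"
    for bits in product((0, 1), repeat=3):
        assert toffoli(*toffoli(*bits)) == bits
    # With c = 0, the third output equals a AND b, computed without discarding the inputs
    print("AND via Toffoli:", [toffoli(a, b, 0)[2] for a, b in product((0, 1), repeat=2)])
```

Running it prints `False` for AND, `True` for Toffoli, and `[0, 0, 0, 1]` for the embedded AND. The price is extra output bits that carry the otherwise-discarded inputs; handling them without erasing them is one of the practical challenges of reversible designs.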

Follow Rachel Courtland on Twitter at @rcourt.

This post was updated on 4 April 2016 to correct the size of the glass bead used by Lutz and colleagues (thanks, Ken!).

Rachel Courtland, an unabashed astronomy aficionado, is a former senior associate editor at Spectrum. She now works in the editorial department at Nature. At Spectrum, she wrote about a variety of engineering efforts, including the quest for energy-producing fusion at the National Ignition Facility and the hunt for dark matter using an ultraquiet radio receiver. In 2014, she received a Neal Award for her feature on shrinking transistors and how the semiconductor industry talks about the challenge.