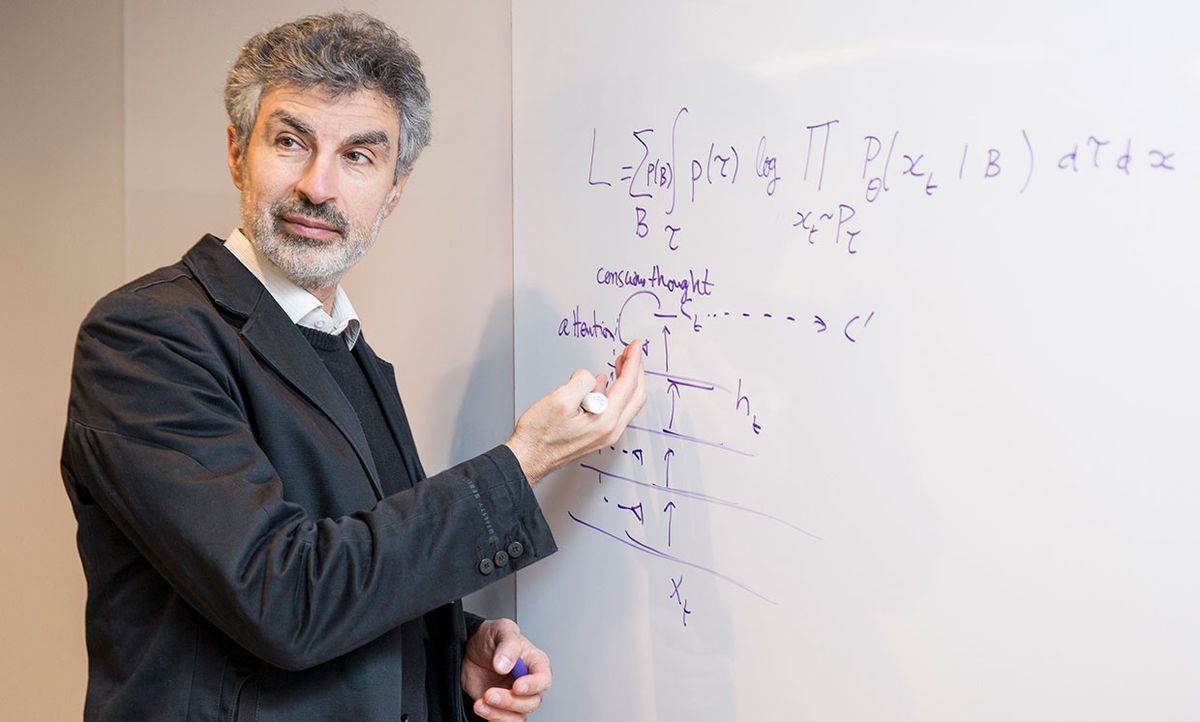

Yoshua Bengio is known as one of the “three musketeers” of deep learning, the type of artificial intelligence (AI) that dominates the field today.

Bengio, a professor at the University of Montreal, is credited with making key breakthroughs in the use of neural networks—and just as importantly, with persevering with the work through the long cold AI winter of the late 1980s and the 1990s, when most people thought that neural networks were a dead end.

He was rewarded for his perseverance in 2018, when he and his fellow musketeers (Geoffrey Hinton and Yann LeCun) won the Turing Award, which is often called the Nobel Prize of computing.

Today, there’s increasing discussion about the shortcomings of deep learning. In that context, IEEE Spectrum spoke to Bengio about where the field should go from here. He’ll speak on a similar subject tomorrow at NeurIPS, the biggest and buzziest AI conference in the world; his talk is titled “From System 1 Deep Learning to System 2 Deep Learning.”

Yoshua Bengio on . . .

- Deep learning and its discontents

- The dawn of brain-inspired computation

- Learning to learn

- “This is not ready for industry”

- Physics, language, and common sense

Deep learning and its discontents

IEEE Spectrum: What do you think about all the discussion of deep learning’s limitations?

Yoshua Bengio: Too many public-facing venues don’t understand a central thing about the way we do research, in AI and other disciplines: We try to understand the limitations of the theories and methods we currently have, in order to extend the reach of our intellectual tools. So deep learning researchers are looking to find the places where it’s not working as well as we’d like, so we can figure out what needs to be added and what needs to be explored.

This is picked up by people like Gary Marcus, who put out the message: “Look, deep learning doesn’t work.” But really, what researchers like me are doing is expanding its reach. When I talk about things like the need for AI systems to understand causality, I’m not saying that this will replace deep learning. I’m trying to add something to the toolbox. What matters to me as a scientist is what needs to be explored in order to solve the problems. Not who’s right, who’s wrong, or who’s praying at which chapel.

Spectrum: How do you assess the current state of deep learning?

Bengio: In terms of how much progress we’ve made in this work over the last two decades: I don’t think we’re anywhere close today to the level of intelligence of a two-year-old child. But maybe we have algorithms that are equivalent to lower animals, for perception. And we’re gradually climbing this ladder in terms of tools that allow an entity to explore its environment. One of the big debates these days is: What are the elements of higher-level cognition? Causality is one element of it, and there’s also reasoning and planning, imagination, and credit assignment (“what should I have done?”). In classical AI, they tried to obtain these things with logic and symbols. Some people say we can do it with classic AI, maybe with improvements. Then there are people like me, who think that we should take the tools we’ve built in the last few years to create these functionalities in a way that’s similar to the way humans do reasoning, which is actually quite different from the way a purely logical system based on search does it.

The dawn of brain-inspired computation

Spectrum: How can we create functions similar to human reasoning?

Bengio: Attention mechanisms allow us to learn how to focus our computation on a few elements, a set of computations. Humans do that—it’s a particularly important part of conscious processing. When you’re conscious of something, you’re focusing on a few elements, maybe a certain thought, then you move on to another thought. This is very different from standard neural networks, which are instead parallel processing on a big scale. We’ve had big breakthroughs on computer vision, translation, and memory thanks to these attention mechanisms, but I believe it’s just the beginning of a different style of brain-inspired computation. It’s not that we have solved the problem, but I think we have a lot of the tools to get started. And I’m not saying it’s going to be easy. I wrote a paper in 2017 called “The Consciousness Prior” that laid out the issue. I have several students working on this and I know it is a long-term endeavor.

Spectrum: What other aspects of human intelligence would you like to replicate in AI?

Bengio: We also talk about the ability of neural nets to imagine: Reasoning, memory, and imagination are three aspects of the same thing going on in your mind. You project yourself into the past or the future, and when you move along these projections, you’re doing reasoning. If you anticipate something bad happening in the future, you change course; that’s how you do planning. And you’re using memory too, because you go back to things you know in order to make judgments. You select things from the present and things from the past that are relevant. Attention is the crucial building block here. Let’s say I’m translating a book into another language. For every word, I have to carefully look at a very small part of the book. Attention allows you to abstract out a lot of irrelevant details and focus on what matters. Being able to pick out the relevant elements: that’s what attention does.

Spectrum: How does that translate to machine learning?

Bengio: You don’t have to tell the neural net what to pay attention to—that’s the beauty of it. It learns it on its own. The neural net learns how much attention, or weight, it should give to each element in a set of possible elements to consider.
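To make the idea of learned attention weights concrete, here is a minimal sketch of soft attention in plain Python. It is an illustration, not code from Bengio's work: the function names `softmax` and `attend`, the scaled dot-product scoring, and the hand-picked `query`, `keys`, and `values` are all assumptions made for the example. In a real network those vectors would be produced by trained parameters, which is how the system learns where to focus.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Soft attention over a set of elements (illustrative sketch).

    Each element has a key (used for scoring) and a value (its content).
    The score is a scaled dot product between the query and each key.
    """
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    # The output is the weighted average of the values.
    context = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, context

# Hypothetical toy data: three candidate elements, one query.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
values = [[10.0], [20.0], [30.0]]

weights, context = attend(query, keys, values)
print(weights)  # the element whose key best matches the query gets the most weight
print(context)  # a blend of the values, dominated by the most relevant element
```

The weights are soft rather than all-or-nothing, so every step stays differentiable. That is what would let a network adjust, through ordinary gradient-based training, the parameters that generate the query and keys.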

Learning to learn

Spectrum: How is your recent work on causality related to these ideas?

Bengio: The kind of high-level concepts that you reason with tend to be variables that are cause and/or effect. You don’t reason based on pixels. You reason based on concepts like door or knob or open or closed. Causality is very important for the next steps of progress of machine learning. And it’s related to another topic that is much on the minds of people in deep learning. Systematic generalization is the ability humans have to generalize the concepts we know, so they can be combined in new ways that are unlike anything else we’ve seen. Today’s machine learning doesn’t know how to do that. So you often have problems relating to training on a particular data set. Say you train in one country, and then deploy in another country. You need generalization and transfer learning. How do you train a neural net so that if you transfer it into a new environment, it continues to work well or adapts quickly?

Spectrum: What’s the key to that kind of adaptability?

Bengio: Meta-learning is a very hot topic these days: Learning to learn. I wrote an early paper on this in 1991, but only recently did we get the computational power to implement this kind of thing. It’s computationally expensive. The idea: In order to generalize to a new environment, you have to practice generalizing to a new environment. It’s so simple when you think about it. Children do it all the time. When they move from one room to another room, the environment is not static, it keeps changing. Children train themselves to be good at adaptation. To do that efficiently, they have to use the pieces of knowledge they’ve acquired in the past. We’re starting to understand this ability, and to build tools to replicate it. One critique of deep learning is that it requires a huge amount of data. That’s true if you just train it on one task. But children have the ability to learn based on very little data. They capitalize on the things they’ve learned before. But more importantly, they’re capitalizing on their ability to adapt and generalize.

“This is not ready for industry”

Spectrum: Will any of these ideas be used in the real world anytime soon?

Bengio: No. This is all very basic research using toy problems. That’s fine, that’s where we’re at. We can debug these ideas, move on to new hypotheses. This is not ready for industry tomorrow morning. But there are two practical limitations that industry cares about, and that this research may help. One is building systems that are more robust to changes in the environment. Two: How do we build natural language processing systems, dialogue systems, virtual assistants? The problem with the current state-of-the-art systems that use deep learning is that they’re trained on huge quantities of data, but they don’t really understand well what they’re talking about. People like Gary Marcus pick up on this and say, “That’s proof that deep learning doesn’t work.” People like me say, “That’s interesting, let’s tackle the challenge.”

Physics, language, and common sense

Spectrum: How could chatbots do better?

Bengio: There’s an idea called grounded language learning that has recently been attracting new attention. The idea is, an AI system should not learn only from text. It should learn at the same time how the world works, and how to describe the world with language. Ask yourself: Could a child understand the world if they were only interacting with the world via text? I suspect they would have a hard time. This has to do with conscious versus unconscious knowledge, the things we know but can’t name. A good example of that is intuitive physics. A two-year-old understands intuitive physics. They don’t know Newton’s equations, but they understand concepts like gravity in a concrete sense. Some people are now trying to build systems that interact with their environment and discover the basic laws of physics.

Spectrum: Why would a basic grasp of physics help with conversation?

Bengio: The issue with language is that often the system doesn’t really understand the complexity of what the words are referring to. For example, the statements used in the Winograd schema; in order to make sense of them, you have to capture physical knowledge. There are sentences like: “Jim wanted to put the lamp into his luggage, but it was too large.” You know that if this object is too large for putting in the luggage, it must be the “it,” the subject of the second phrase. You can communicate that kind of knowledge in words, but it’s not the kind of thing we go around saying: “The typical size of a piece of luggage is x by x.” We need language understanding systems that also understand the world. Currently, AI researchers are looking for shortcuts. But they won’t be enough. AI systems also need to acquire a model of how the world works.

Eliza Strickland is a senior editor at IEEE Spectrum, where she covers AI, biomedical engineering, and other topics. She holds a master’s degree in journalism from Columbia University.