Video Friday is your weekly selection of awesome robotics videos, collected by your melodramatic Automaton bloggers. We’ll also be posting a weekly calendar of upcoming robotics events for the next two months; here’s what we have so far (send us your events!):

MARSS 2016 – July 18-22, 2016 – Paris, France

IEEE WCCI 2016 – July 25-29, 2016 – Vancouver, Canada

RO-MAN 2016 – August 26-31, 2016 – New York, N.Y., USA

ECAI 2016 – August 29-September 2, 2016 – The Hague, Holland

NASA SRRC Level 2 – September 2-5, 2016 – Worcester, Mass., USA

ISyCoR 2016 – September 7-9, 2016 – Ostrava, Czech Republic

Let us know if you have suggestions for next week, and enjoy today’s videos.

I’m not entirely sure why being “targeted” by a bird robot like this would appeal to kids, but here you go:

Fun fact: this works with real seagulls too. Try it!*

* Do not try it.

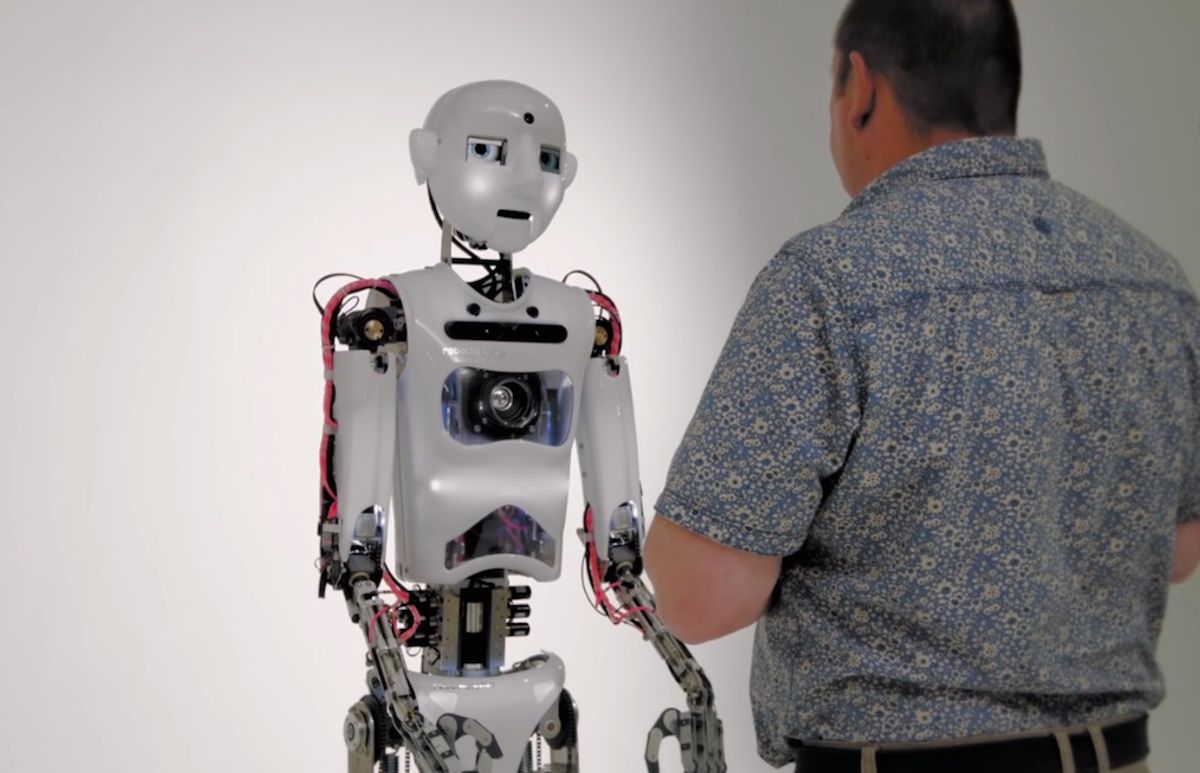

“This is a demonstration of Engineered Arts’ telepresence app on its Robothespian Humanoid robot. Although recorded multiple times to capture different camera angles, what you are seeing is a real conversation with Robothespian. It is being controlled and voiced remotely over the internet. There is no trickery here. Although we scripted some of the conversation to ensure it was repeatable, none of the content was pre-programmed. It was all created in real time using a headset and the telepresence app. It is an excellent way to ensure genuine human interaction, without being tripped up with its complexities.”

[ RoboThespian ]

Clearpath’s Kingfisher robot boat is now called Heron (don’t ask). Here’s one carrying an automated water sampling system:

I’m still waiting for a version that I can wakeboard behind.

[ Clearpath ]

Robots designed to assist humans do best when the robots learn how to assist from the humans themselves. Ideally, this lets robots be helpful in the way that you want them to be helpful, while knowing when to just stay out of it. At MIT, researchers have published a pair of papers about teaching robots to help nurses and doctors in a labor ward by making scheduling recommendations:

The robot also describes bad decisions to show that it understands the difference between a good recommendation and a bad one. This seems like more of an artificial intelligence project (in that having the physical robot may be slightly superfluous), but having a physical presence could help promote interactivity.

[ MIT ]

“Guided by the principle that interior space, particularly in high-density urban innovation centers around the world, has become too expensive to be static and unresponsive, Ori’s breakthrough innovation, technology and design create dynamic environments that act and feel as though they are substantially larger. Through architectural robotics, Ori’s systems promise to liberate urban design, provide new user experiences, and unlock the potential of the places we increasingly want to live, work and play. The robotic technologies come out of MIT Media Lab’s CityHome project, focused on utilizing technology to respond to the challenges of global urbanization.”

Get that? Yeah, me neither. It’s some interesting movable furniture:

Here’s an animated view showing the different configurations:

If it would somehow make the bed while it was under there, I’d be completely sold.

[ Ori ]

This is a fully autonomous demo from Pepper at RoboCup 2016:

Thanks to its capabilities (and relative affordability, we assume), Pepper will be the Standard Platform for the next RoboCup@Home competition.

This is a demonstration of the EU’s RECONFIG project, which is trying to teach robots how to work together. If you assign a robot a task, it’s going to try to complete that task, but it may be unable to do so itself. So, robots need to be able to ask for help, but they also need to be able to put their task aside for a sec and help other robots if necessary.

In this video, “two Youbots and a Pioneer are assigned tasks. Two objects simultaneously inserted create conflict of interest. Pioneer receives help request from right Youbot and informs left Youbot to leave its object and assist in transport of the other object, resolving the conflict.”

The project also worked on simple and intuitive visual communication and body language, like having one robot point at something it needs help picking up, which another robot can interpret. The project just ended, but there’s lots more information at the links below.

From CITEC Bielefeld:

“Adapting the concept of continuous tracks that are propelled and guided by wheels, a self-propelled continuous-track robot has been designed and built. The robot consists of actuated chain segments, thus enabling it to change its form, independent of guiding mechanisms. Using integrated sensors, the robot is able to adapt to the terrain and to overcome obstacles. This allows the robot to ‘roll’ and climb in two dimensions. Possible extensions of the concept to three dimensional navigation are presented as an outlook.”

“OUROBOT – A Self-Propelled Continuous-Track-Robot for Rugged Terrain,” by Jan Paskarbeit, Simon Beyer, Adrian Gucze, Johann Schroder, Matthaus Wiltzok, Manfred Fingberg, and Axel Schneider, was presented at ICRA 2016 in Stockholm, Sweden.

Everything you ever wanted to know about the latest version of McGill Robotics’ AUV:

[ McGill Robotics ]

Here’s KAIST’s third round video submission for DJI’s Developer Challenge, which has drones autonomously searching for objects and landing on moving vehicles:

The final competition will be held August 27-28.

Looks like RoboBoat is moving to Daytona Beach, Fla., for 2017:

[ AUVSI ]

I wish my TurtleBot was half as concerned about me as this Nao is about its owner:

It must be a truly horrible disease that requires this guy to medicate himself with a Brio brick.

[ Ed Sandoval ]

We usually prefer to post videos of real robots doing stuff as opposed to simulations, but the end of this video is impressive:

Because you never know when your robot is going to fall into a disjointed terrain.

“Robust Phase-Space Planning for Agile Legged Locomotion over Various Terrain Topologies,” by Y. Zhao, B. Fernandez, and L. Sentis, was presented at RSS 2016.

[ UT Austin ] via [ RSS 2016 ]

At a level of quality that’s forgivable considering how old some of these clips are, this is “Robots, a 50 Year Journey,” brought to you by Stanford University for ICRA 2000 (!).

Sixteen years later, robots still have a hard time doing back flips. We haven’t made much progress, apparently.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.

Erico Guizzo is the Director of Digital Innovation at IEEE Spectrum, and cofounder of the IEEE Robots Guide, an award-winning interactive site about robotics. He oversees the operation, integration, and new feature development for all digital properties and platforms, including the Spectrum website, newsletters, CMS, editorial workflow systems, and analytics and AI tools. An IEEE Member, he is an electrical engineer by training and has a master’s degree in science writing from MIT.