Dyson sure did deliver this week. They managed to put together a fun little teaser video with just the right amount of truthiness mixed in, and followed it up with an excitingly unique consumer robot. Is it too much to ask that we get this sort of thing every single week from now on?

Probably, yeah.

The consumer robotics market isn't quite at the level of, I dunno, the useless app market, where there are new product releases so relentlessly that they make me want to push that scary-looking "Destroy Universe" button on my Android phone. What, you don't have one of those? Weird. But anyway, we have hope that one day, we will have the privilege of making announcements about new robots that you can buy much more frequently than we do now.

And then it'll be time to retire! But we're not there yet, so on to Video Friday.

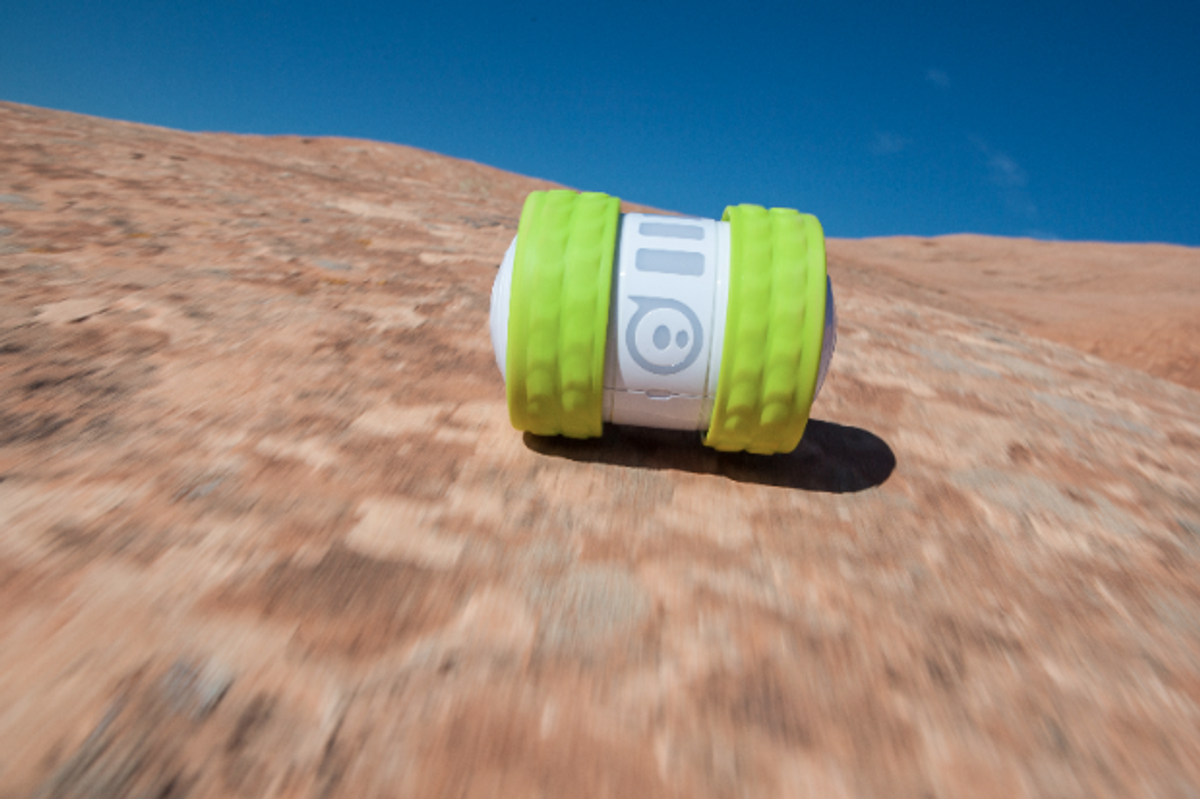

As it happens, there was another consumer robot launch this week. Orbotix, the maker of the Sphero robotic ball, officially unveiled Ollie, a smartphone-controlled wheeled bot. Here are two promotional vids:

[ Ollie ]

Too lazy to hand launch your flapping wing drone? You'd better get it its own robot:

[ Robo Raven ]

These ice bucket challenges are getting, um, involved. And it's probably not a coincidence that they're both from MIT.

CSAIL graduate student Ross Finman is nominated by his former colleague Hordur Johannsson to take the ALS Ice Bucket Challenge, but Ross soon discovers that Hordur is not the only one—or only thing—that wants him to participate...

And from MIT's department of mechanical engineering:

While we're on about MIT, here's a pair of videos from the MIT DRC team. The first shows ATLAS dragging a truss around like it's a comfort object, and the second shows a beautiful stereo depth fusion visualization:

This video demonstrates two features:

1) The use of stereo depth fusion using Kintinuous (originally used to build large maps with Kinect data) to a quality which matches LIDAR data. The heightmap shown was used to place the required footsteps a priori while stationary.

2) State estimation is provided by a highly tuned estimator developed by MIT. In this case it is running open loop (and not using any laser info). In open loop mode it drifts about 4 cm in this total walking motion.

[ MIT DRC ]

The IMAV 2014 conference included a competition for, you guessed it, MAVs. Tasks included mapping, recognizing and observing targets, rooftop landings, window entries, interior inspections, and acting as a flying WiFi relay, all autonomously:

[ IMAV 2014 ]

How do you make the sport of curling even weirder? Turtlebots:

This video demonstrates some of the basic capabilities of the Vaultbot, a mobile manipulation system in beginning stages of development at the University of Texas at Austin.

The Vaultbot has two UR5 manipulator arms mounted on a Clearpath A200 Husky base, and is equipped with a Sick LMS511 LIDAR. Other components to be added include an RGBD vision sensor and various tooling for the manipulator end effectors.

[ UT Austin ]

In collaboration with Lockheed Martin, a team of research students and staff from Warsaw University of Technology successfully demonstrated the first phase of flight test and integration of unmanned aircraft platforms with an autonomous mission control system. The purpose of the project is to optimize the performance of unmanned aerial vehicles when flying in fleets with manned aircraft, in order to make the best use of available assets for any given mission.

We cover a lot (all, if possible) of the research performed in Robert Wood's Harvard Microrobotics Lab, not least because he's working on ROBOT BEES. Usually, we don't ask roboticists personal questions, but the NSF has no qualms about doing so, so it's a bit of a different take on robotic research than we usually get.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.