A killer could be stopped cold—or at least be limited in its deadly toll—thanks to AI.

Apart from COVID-19, tuberculosis (TB) is the leading cause of death by an infectious disease worldwide, despite being largely preventable and treatable. While the World Health Organization (WHO) recommends using chest X-rays to help identify likely cases of TB, many health-care centers lack enough radiologists to interpret these X-rays. In a study published on 6 September in the journal Radiology, researchers at Google along with colleagues from India, South Africa, and Zambia showed that their deep-learning algorithm could identify cases of TB from chest X-rays as well as radiologists could.

“From a global health standpoint, understanding how to efficiently and effectively identify people living with TB” is extremely valuable, said Dr. Christopher Hoffmann—an associate professor of medicine in the division of infectious disease at the Johns Hopkins University School of Medicine—who was not involved in the study.

To train their algorithm, the researchers used more than 165,000 chest X-ray images from 22,000 patients in several European countries, China, India, South Africa, and Zambia. The AI itself had three components—a cropping system, which helped the algorithm analyze the correct part of the image, a smart image-analysis routine, and a final part that combined the two to make the final decision. The algorithm sorts the images into three categories: normal, TB, or abnormal but not TB. As a result it could potentially help even patients who might not have TB but still require medical care. Moreover, the researchers said the abnormal-but-not-TB category also improved the accuracy of their TB-screening algorithm, allowing the model to home in on abnormalities specific to TB.

The “distinction between abnormal but not TB and abnormal and TB was one of the creative solutions that we had” that helped make the model better, said Shruthi Prabhakara, a Google researcher and an author of the study.

To be clear, the authors noted that Google’s system would not be the first AI ever devised to accurately screen for TB. Indeed, the WHO already recommends that such AI systems be used for TB screening. Nevertheless, researchers say adopting systems like this one can help combat the devastating impact of TB all over the world, especially in underserved health-care systems.

To test the algorithm, researchers studied some 1,200 patients from four different countries as well as a separate data set of some 1,000 people from a gold-mining community in South Africa. The study was retrospective, meaning that the data in question was collected from medical records, not current patients. Researchers compared the AI’s accuracy with that of nine radiologists from India, where TB is endemic, and five from the United States, where it is not.

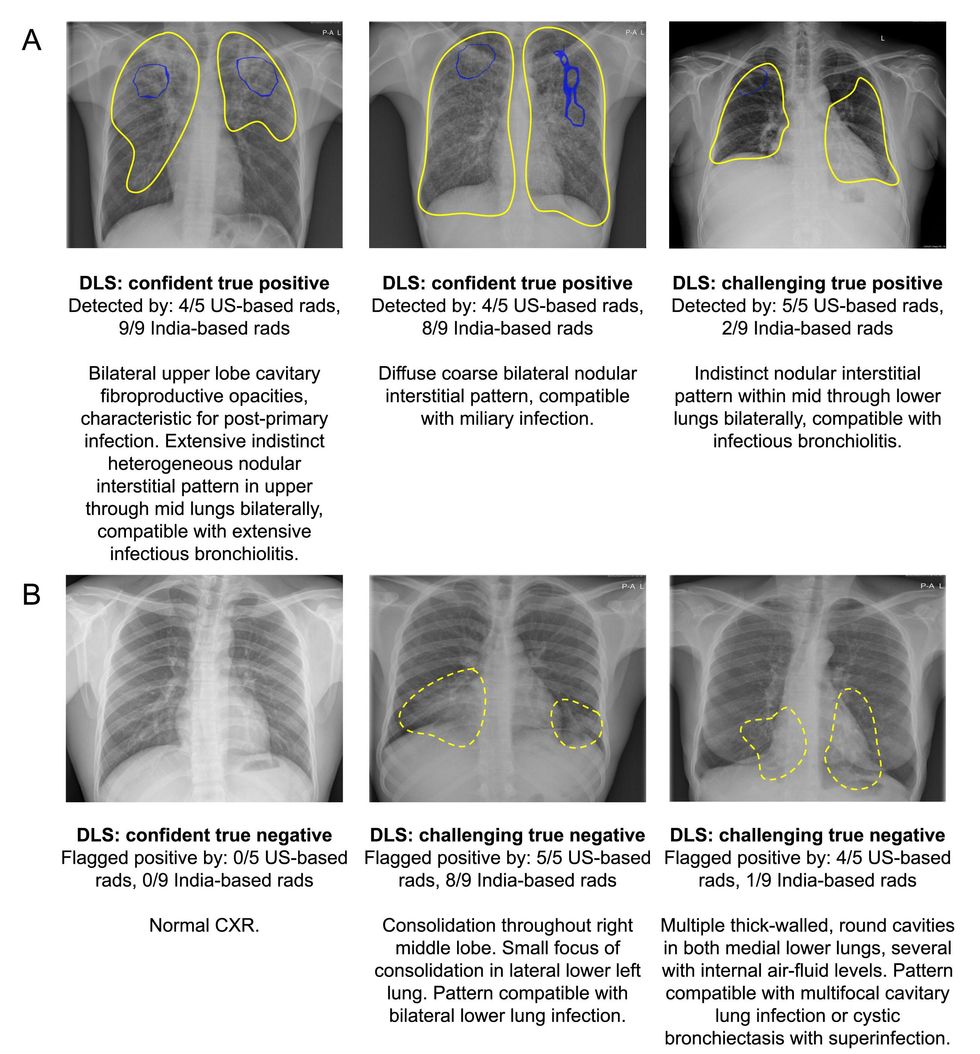

In most cases, the AI’s sensitivity and specificity were very similar to those of the radiologists. Sensitivity, or true positive rate, measures how well a system correctly identifies people who have TB, while specificity, or true negative rate, measures how well it rules out people who don’t have TB.

For most of the data sets, the AI was able to meet WHO requirements for TB screening tests of at least 90 percent sensitivity and 70 percent specificity. The AI did not meet these requirements for the South Africa and Zambia data, but neither did the radiologists. An analysis of some of the subgroups involved in the study helps highlight why. For both the AI and radiologists, patients with HIV, who made up most of the patients in the Zambia data set, were more difficult to screen correctly. Many of the false results, the researchers reported, occurred in patients with other lung conditions, which are more prevalent in populations such as the South African gold-mining community.
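To make the two metrics and the WHO targets concrete, here is a minimal sketch in Python. The patient counts are made up for illustration and are not taken from the study; the function names are likewise hypothetical.

```python
def sensitivity(true_pos: int, false_neg: int) -> float:
    """True positive rate: share of people with TB that the screen flags."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg: int, false_pos: int) -> float:
    """True negative rate: share of people without TB that the screen clears."""
    return true_neg / (true_neg + false_pos)


def meets_who_target(sens: float, spec: float,
                     min_sens: float = 0.90, min_spec: float = 0.70) -> bool:
    """Check the WHO screening targets cited in the article (90% / 70%)."""
    return sens >= min_sens and spec >= min_spec


# Hypothetical counts, NOT from the study: 100 people with TB, 1,000 without.
tp, fn = 92, 8
tn, fp = 750, 250

sens = sensitivity(tp, fn)
spec = specificity(tn, fp)
print(f"sensitivity={sens:.2f}, specificity={spec:.2f}, "
      f"meets WHO target: {meets_who_target(sens, spec)}")
```

A screen can clear one bar and miss the other: in this sketch, a system with 85 percent sensitivity would fail the check no matter how high its specificity.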

“There are mimics of TB, which means that there may be things that may look almost like TB on a chest X-ray, which then is not TB,” said Dr. Sanjay K. Jain, a professor at the Johns Hopkins University School of Medicine who studies TB imaging and who was not involved in the study.

Google’s algorithm is not the first AI to use chest X-rays to screen TB patients. As part of a 2021 update to WHO recommendations on screening for TB, the agency looked at three similar technologies—concluding that because the technologies were about as accurate as human radiologists, who are scarce in many areas, health-care systems can use these AIs for TB screening and triage in place of human experts and specialists. Though only a laboratory test can truly diagnose TB, AI systems can screen patients so that only those who are likely to have TB receive lab tests, which are typically more expensive than chest X-rays on their own. However, the researchers also found that while this method can save money, it saves the most when disease prevalence is low, becoming closer to the cost of simply giving everyone a laboratory test as prevalence increases.
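The link between prevalence and savings can be illustrated with a rough back-of-the-envelope model. This is a simplified sketch, not the cost analysis the researchers performed: the per-test costs are invented, the sensitivity and specificity are set to the WHO minimums, and the model ignores the cost of missed cases.

```python
def screen_positive_rate(prevalence: float, sens: float, spec: float) -> float:
    """Fraction of screened people the X-ray screen flags for a lab test."""
    true_positives = prevalence * sens
    false_positives = (1 - prevalence) * (1 - spec)
    return true_positives + false_positives


def cost_per_person_with_screening(prevalence: float, sens: float, spec: float,
                                   xray_cost: float, lab_cost: float) -> float:
    """Everyone gets an X-ray; only screen-positives get the lab test."""
    return xray_cost + screen_positive_rate(prevalence, sens, spec) * lab_cost


# Hypothetical inputs, NOT from the study.
XRAY_COST = 1.0   # assumed cost of one AI-read chest X-ray
LAB_COST = 10.0   # assumed cost of one confirmatory lab test
SENS, SPEC = 0.90, 0.70

for prevalence in (0.01, 0.10, 0.30, 0.50):
    cost = cost_per_person_with_screening(prevalence, SENS, SPEC,
                                          XRAY_COST, LAB_COST)
    print(f"prevalence={prevalence:.0%}: screening first costs {cost:.2f} "
          f"per person vs. {LAB_COST:.2f} for lab-testing everyone")
```

Under these assumptions, the share of people sent on for a lab test grows with prevalence, so the per-person cost of screening first climbs toward the cost of testing everyone.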

The study drew on diverse data sets but, because it was retrospective, did not include participants who were current patients.

“I think a study where the system is actually used by the people in the field is a logical next step,” said Dr. Ronald Summers, a radiologist who directs the Imaging Biomarkers and Computer-Aided Diagnosis Laboratory at the National Institutes of Health Clinical Center. In fact, the study’s researchers have already started doing that work, they said.

Although technology similar to the one used in the study already exists, Google researchers said they just want to provide another effective option for this technology. “Our goal is not to go out and try and prove superiority over other products,” said Daniel Tse, a Google researcher and one of the study’s authors.

Jain said that even if the system is not perfect, developing this sort of AI system is still important.

“This is moving in the right direction,” he said. “I think we should encourage this kind of system.”

Rebecca Sohn is a freelance science journalist. Her work has appeared in Live Science, Slate, and Popular Science, among others. She has been an intern at STAT and at CalMatters, as well as a science fellow at Mashable.