When the HBO show that became “Silicon Valley” was still in development, and its creators decided its fictional startup would be in the compression business, they turned to Stanford professor Tsachy Weissman to come up with some novel and at least somewhat plausible compression technology. Weissman brought in electrical engineering graduate student Vinith Misra to help; Misra went on to field many technical questions for the show in its first two years, first as a student and then as a researcher working for IBM on the Watson team.

IBM was just fine with that relationship. But last year Misra changed jobs—he is now a senior data scientist at Netflix—and with HBO a Netflix competitor, Netflix was not so fine with the consulting arrangement. It was time to pass the baton. And who else to give it to but another student in Weissman’s Stanford lab—the one now seated at Misra’s former desk?

That student, Dmitri Pavlichin, is having a great time with the job.

“The gig is pretty irregular,” he says, “a month or two of nothing, then an intense couple of days, in which I have to put together something that is going to be included in the show, like a paper, or a whiteboard. They’ll give me a snippet of dialog to look at, or tell me that someone finds a document and I have to make the document be kind of interesting.”

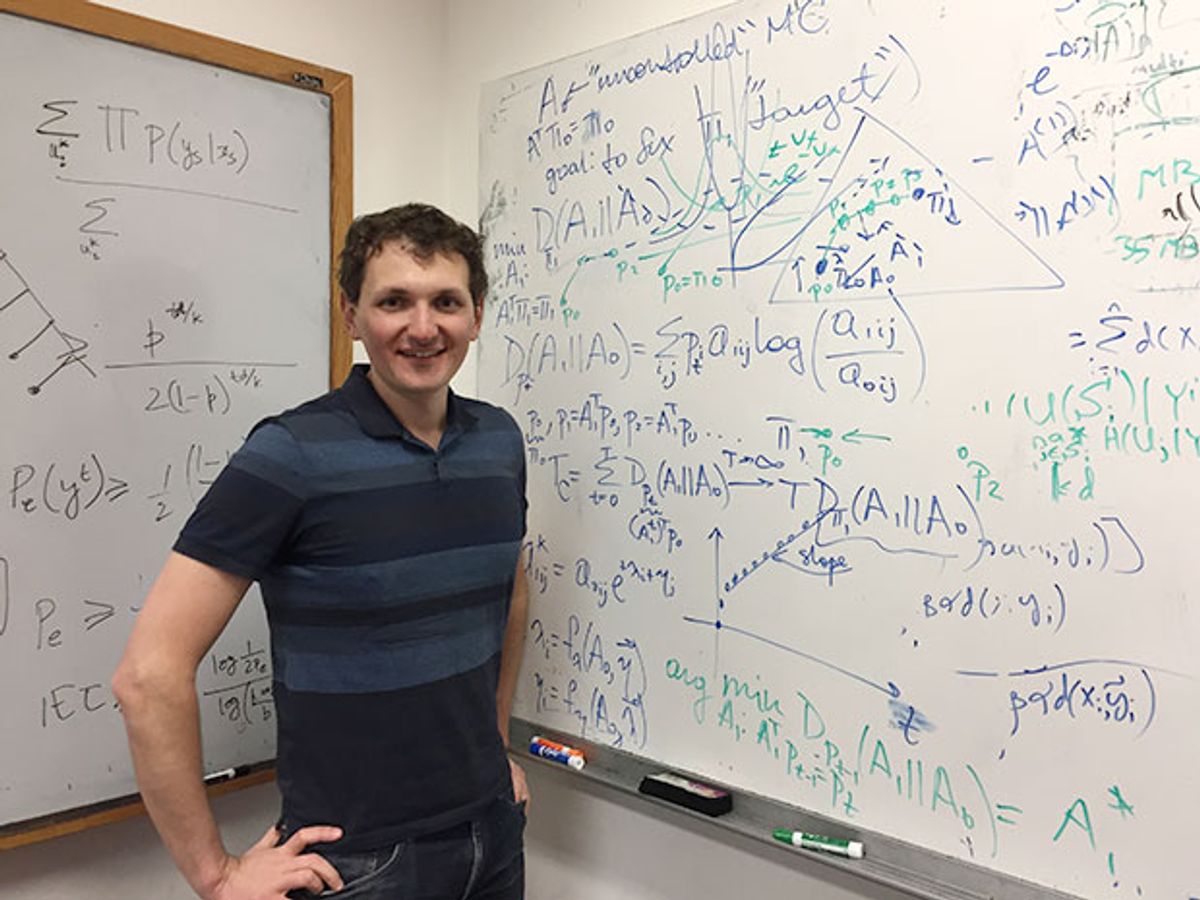

The whiteboards themselves are redrawn, based on Pavlichin’s text or sketches (the notes on the whiteboard in the photo above, however, are in Pavlichin’s own writing).

Pavlichin isn’t the only compression expert consulting for the show; the number of consultants, he says, has expanded since Season 1.

In real life, Pavlichin, who has a Ph.D. in physics and wrote a thesis on quantum optics, is now a postdoc researching genomic compression, that is, the most efficient ways to compress the explosion of genomic data created by DNA sequencers. Will any of that technology make it onto the show? Pavlichin can’t say anything specific about upcoming episodes, but he promises that this season, which starts Sunday, will have more technical content than Season 2, which focused more on the business issues involved in creating products based on Pied Piper’s compression algorithm than on the algorithm itself.

Tekla S. Perry is a senior editor at IEEE Spectrum. Based in Palo Alto, Calif., she's been covering the people, companies, and technology that make Silicon Valley a special place for more than 40 years. An IEEE member, she holds a bachelor's degree in journalism from Michigan State University.