Fingerprints can be stolen, iris scans spoofed, and facial recognition software fooled. It has become increasingly challenging to authenticate a person’s identity beyond doubt, so academic teams have turned to brain waves as the next step in biometric identification.

Many of these efforts seek to outdo one another, boasting how accurately and accessibly they can verify a person’s identity using electroencephalogram (EEG) data. In April, for example, a team in New York achieved 100 percent accuracy at identifying individuals using a skullcap with 30 electrodes. Last week, we reported on a simple set of earbud sensors that worked with 80 percent accuracy.

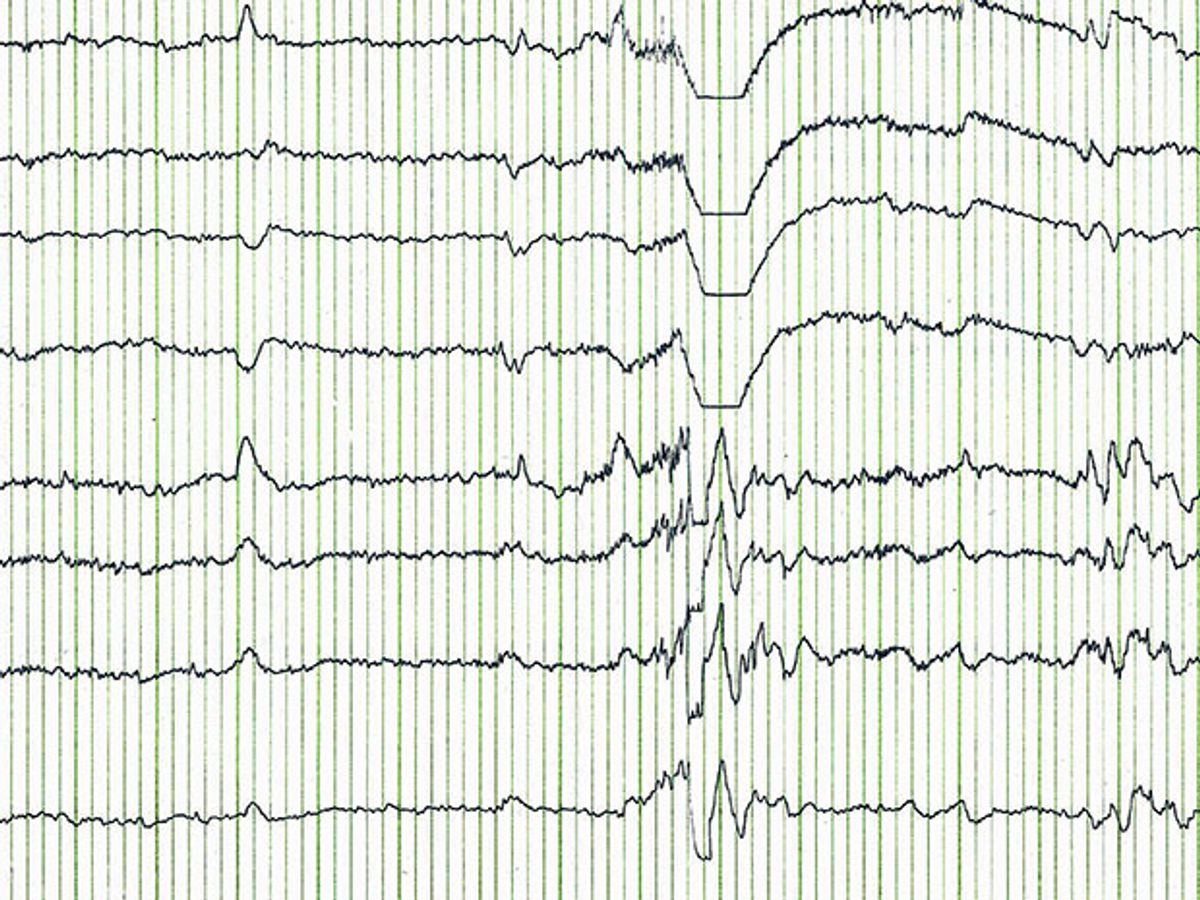

Yet our brains don’t produce a single, clear signal that can be checked like a fingerprint. Rather, they emit a messy, vibrant symphony of personal information, including one’s emotional state, learning ability, and personality traits. And as EEG headsets and technology become cheaper, more portable, and more ubiquitous (not only for identity authentication, but in apps for monitoring relaxation levels, playing games, and more), there’s a high likelihood that someone will tap into that concerto of information for malicious purposes.

“If you have these apps, you don’t know what the app is reading from your brain or what [the app's creators are] going to use that information for, but you do know they’re going to have a lot of information,” says Abdul Serwadda, a cybersecurity researcher at Texas Tech University.

Serwadda and graduate student Richard Matovu recently played devil’s advocate to see if they could glean sensitive personal information from brain data captured by two popular EEG-based authentication systems. Surprise, surprise: they did. Serwadda presented the results earlier this week at the 8th IEEE International Conference on Biometrics in Buffalo, New York.

Both systems the duo examined have claimed high levels of authentication accuracy: one from John Chuang and colleagues at the University of California, Berkeley, and the other adapted from the work of a research team at Binghamton University and the University at Buffalo. Such EEG-based authentication systems use specific features, or markers, of brain activity to identify a person, like isolating the melody of a specific orchestral instrument to identify a song.

Serwadda and Matovu wanted to see if those markers also contained sensitive personal information: in this case, a tendency toward alcoholism. They put each system to work analyzing an old medical dataset of EEG scans from a group of alcoholics and non-alcoholics. In a blind trial, a machine learning classifier, trained to recognize brain patterns associated with alcoholism, used the brain wave data from the authentication systems to accurately identify 25 percent of the alcoholics in the sample. That’s 25 percent of people who just lost their privacy.
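To make the idea concrete, here is a minimal Python sketch of that kind of leak, under loudly stated assumptions. It is not the researchers' method: the feature vectors are purely synthetic, the three "features" and the nearest-centroid classifier are hypothetical stand-ins, and the resulting numbers are not meant to reproduce the 25 percent figure. It only shows how features collected for one purpose can be reused to guess an unrelated attribute.

```python
import random


def make_synthetic_features(n_per_group, rng):
    """Build labeled feature vectors. These are synthetic stand-ins for
    whatever an authentication system extracts; they are NOT real EEG data."""
    data = []
    for label in (0, 1):  # 1 = has the sensitive attribute (assumption)
        shift = 2.0 if label == 1 else 0.0
        for _ in range(n_per_group):
            # Three hypothetical features; only the first varies with the label.
            features = [rng.gauss(shift, 1.0), rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)]
            data.append((features, label))
    rng.shuffle(data)
    return data


def centroid(rows):
    """Column-wise mean of a list of feature vectors."""
    return [sum(col) / len(rows) for col in zip(*rows)]


def train_nearest_centroid(train):
    """Learn one mean feature vector per label."""
    return {label: centroid([f for f, l in train if l == label]) for label in (0, 1)}


def predict(model, features):
    """Return the label whose centroid is closest in squared Euclidean distance."""
    def dist2(center):
        return sum((a - b) ** 2 for a, b in zip(features, center))
    return min(model, key=lambda label: dist2(model[label]))


def recall_for_label(model, test, label):
    """Fraction of held-out examples with `label` that the classifier flags."""
    positives = [f for f, l in test if l == label]
    hits = sum(predict(model, f) == label for f in positives)
    return hits / len(positives)


rng = random.Random(0)  # fixed seed so the sketch is repeatable
data = make_synthetic_features(200, rng)
train, test = data[:300], data[300:]  # held-out split, loosely mirroring a blind trial
model = train_nearest_centroid(train)
leak_rate = recall_for_label(model, test, label=1)
print(f"Flagged {leak_rate:.0%} of held-out synthetic positives")
```

The point of the toy is the split in roles: nothing in `predict` knows or cares that the features were gathered for authentication.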

“We weren’t surprised, because we know the brain signal is so rich in information,” says Serwadda. “But it is scary. [Wearable brain measurement] is an application that’s just about to go mainstream, and you can infer a lot of information about users.”

That information isn’t limited to just alcohol use. Malicious third parties could mine brain data to make inferences about learning disabilities, mental illnesses, and more, says Serwadda. “Imagine if you made these things public, and insurance companies became aware of them,” he adds. “It would be terrible.”

Unfortunately, the researchers do not yet have a solution for how to secure such information—though in the study, compromising a little on authentication accuracy did reduce the ability to detect who was an alcoholic. Serwadda hopes other research teams will now take privacy, and not just accuracy, into account when optimizing such systems.

That is especially important with functional near-infrared spectroscopy (fNIRS) right around the corner. Compared to EEG, fNIRS measures brain activity with a significantly higher signal-to-noise ratio. And though it is still relatively expensive, prices of systems based on the technology have begun to drop. “EEG pales in comparison to what will be seen once fNIRS hits the road,” adds Serwadda. “We have to prepare for the movement of brain wave [assessment] into our daily lives.”

Megan is an award-winning freelance journalist based in Boston, Massachusetts, specializing in the life sciences and biotechnology. She was previously a health columnist for the Boston Globe and has contributed to Newsweek, Scientific American, and Nature, among others. She is the co-author of a college biology textbook, “Biology Now,” published by W.W. Norton. Megan received an M.S. from the Graduate Program in Science Writing at the Massachusetts Institute of Technology and a B.A. from Boston College, and worked as an educator at the Museum of Science, Boston.