It’s not clear which is more challenging: constructing AIs that can collaboratively learn to manage radio spectrum more effectively than humans can, or presenting the results in a way that isn’t snooze-inducing. Yet both were accomplished during Phase 2 of the U.S. Defense Advanced Research Projects Agency’s (DARPA) Spectrum Collaboration Challenge (SC2), held on 12 December at the Johns Hopkins University Applied Physics Lab.

For three hours, presenters cracked jokes and gave a play-by-play rundown of test results, complete with hand-drawn circles and arrows, that would have made any sportscaster proud. While watching replays of the most high-stakes tests, the audience gasped and cheered and shouted encouragement at the AI programs.

AI-managed spectrum allocation might not seem important enough to celebrate with such zeal. But bandwidth is a finite resource, and engineers are searching for ways to squeeze as many bits as possible out of every hertz. One way to avoid a spectrum crunch is to teach machines to be better at managing bandwidth than humans could ever hope to be. That idea is important enough that DARPA has dedicated a Grand Challenge to it, which completed its Phase 1 competition in December 2017.

Rather than regulators chopping spectrum into defined, rigidly allocated bands, the hope is that devices can learn to autonomously manage their own spectrum use. That means training devices to listen for gaps in transmissions on viable frequencies, announce ahead of time which frequencies they intend to use, and perhaps most importantly, negotiate with nearby devices that also need to send and receive signals.
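To make the "listen for gaps" step concrete, here is a minimal hand-written sketch. It is not how any SC2 team's AI works; the competitors' systems learn their behavior, and nothing below is drawn from their designs. The channel model (a list of busy/idle flags), the function names, and the best-fit rule are all assumptions chosen for illustration.

```python
from typing import List, Optional, Tuple


def find_idle_runs(occupied: List[bool]) -> List[Tuple[int, int]]:
    """Return (start_index, length) for each contiguous run of idle channels."""
    runs: List[Tuple[int, int]] = []
    start: Optional[int] = None
    for i, busy in enumerate(occupied):
        if not busy and start is None:
            start = i                      # an idle run begins here
        elif busy and start is not None:
            runs.append((start, i - start))  # the idle run just ended
            start = None
    if start is not None:                  # an idle run reaches the band edge
        runs.append((start, len(occupied) - start))
    return runs


def pick_gap(occupied: List[bool], needed: int) -> Optional[Tuple[int, int]]:
    """Pick the smallest idle run that still fits `needed` channels (best fit).

    Returns (start_index, needed), or None if no gap is wide enough.
    """
    candidates = [run for run in find_idle_runs(occupied) if run[1] >= needed]
    if not candidates:
        return None
    start, _ = min(candidates, key=lambda run: (run[1], run[0]))
    return (start, needed)


# Hypothetical snapshot of a nine-channel band: True means "heard a transmission".
snapshot = [True, False, False, True, False, False, False, True, False]
print(pick_gap(snapshot, needed=2))
```

Choosing the tightest gap that fits leaves wider gaps free for neighbors that need more bandwidth, which is one simple way a device could be "polite" before any negotiation takes place.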

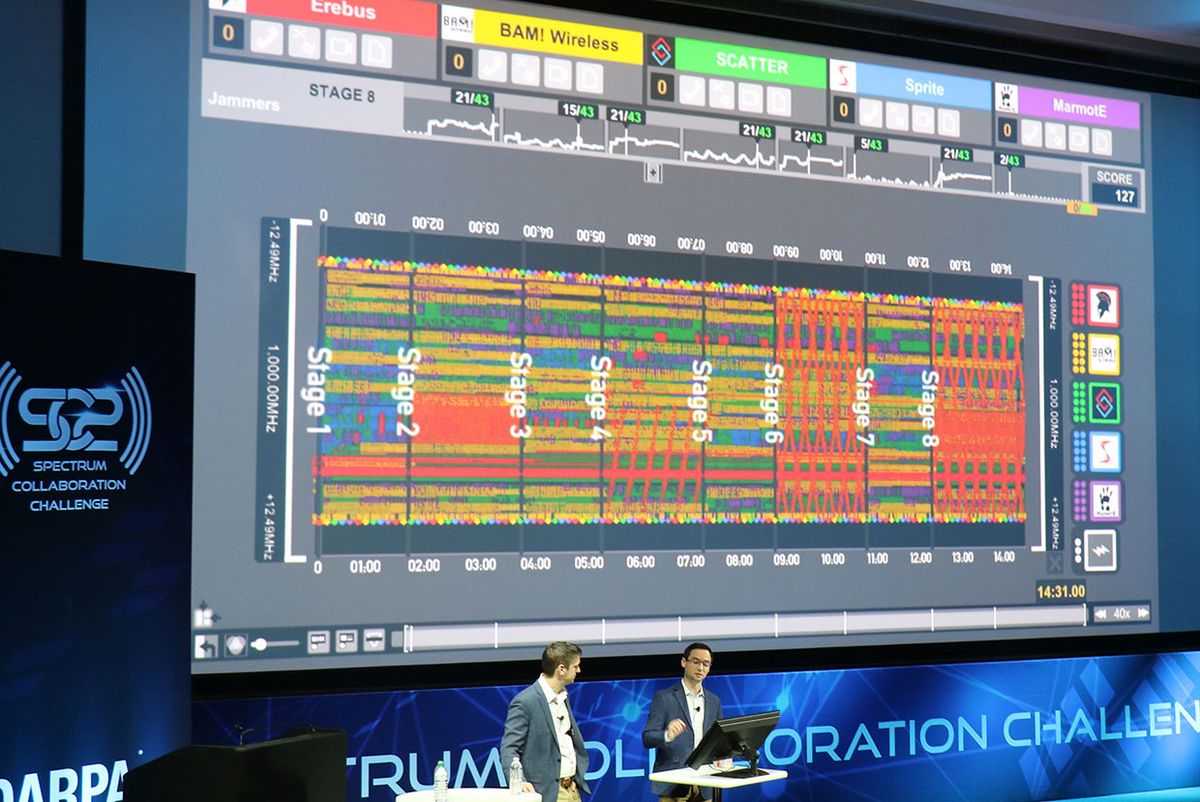

DARPA tested those skills in a series of matches representing scenarios that such an AI might encounter in the real world, like managing transmissions from squads of soldiers deployed in an urban environment, or from Wi-Fi routers trying to deliver service despite a nearby radio jammer.

Each AI scored points based on the number of connections it was able to deliver using the available spectrum—spectrum it was sharing with two, three, or four other AIs at a time. The kicker is that all the participating AIs in each match only earned as many points as the lowest-scoring AI in the group, to ensure the AIs were sharing resources fairly rather than battling for spectrum supremacy.
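The scoring rule is easy to state in code. The sketch below assumes each network's raw points are already known; the team names and point values are made up, and DARPA's actual scoring software is not described in this article.

```python
from typing import Dict


def match_scores(raw_points: Dict[str, int]) -> Dict[str, int]:
    """Award every network in a match the score of the lowest-scoring network."""
    if not raw_points:
        return {}
    floor = min(raw_points.values())
    return {team: floor for team in raw_points}


# Hypothetical raw points for a three-network match.
raw = {"team_a": 40, "team_b": 25, "team_c": 31}
print(match_scores(raw))  # {'team_a': 25, 'team_b': 25, 'team_c': 25}
```

Under this rule, a network that grabs spectrum at a neighbor's expense drags down its own score, so the only way to earn more points is to raise the weakest network's score.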

DARPA tallied the points each AI earned across the more than 400 matches played by 15 teams. At the end, six teams each walked away with US $750,000 by demonstrating that their AIs could manage spectrum more effectively than a human-designed system.

“AI comes up with results that don’t make sense to humans,” says Paul Tilghman, the project manager for SC2 and one of the presenters. “But they work.” To highlight the strange and wonderful solutions the AIs came up with, Tilghman and his co-presenter, Ben Hilburn, president of the GNU Radio Foundation, walked the audience through test results that showcased when things went well, when things fell apart, and when things got just plain weird.

In one scenario, for example, each AI represented an emergency response team deployed to combat a wildfire. The AIs were tasked with working together to ensure that each team’s radios and air tankers could communicate clearly on the same bands. The scenario was intended to test whether spectrum collaboration is viable in emergency, ad-hoc situations when manually re-allocating spectrum can take too long.

In one match-up, which Hilburn introduced as “Robin Hood,” two of the AIs started out by duking it out over the same spectrum. But it wasn’t long before one recognized it would be more effective to instead steal spectrum from the third AI, which was using more of the total available bandwidth. Ultimately the three programs settled into a stable, reasonably effective way of divvying up the available spectrum.

Another scenario showed how quickly things can go wrong, however. In this case, three AI-managed networks had to broadcast over the same frequencies as a nearby incumbent broadcaster. According to Tilghman, the challenge of broadcasting in an incumbent’s band without disturbing existing operations will be one of the most important applications for AI-managed spectrum allocation. The goal was to keep too many devices from broadcasting at the same time; if too many tried, the networks would incur a violation from the incumbent.
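As a rough illustration of that constraint, the sketch below flags the time slots in which too many devices transmit at once. The slot-based schedule, the device names, and the `max_simultaneous` limit are all assumptions; the article does not say how the incumbent's tolerance was actually measured.

```python
from typing import List, Set


def violating_slots(schedule: List[Set[str]], max_simultaneous: int) -> List[int]:
    """Return the indices of time slots where more than `max_simultaneous` devices transmit."""
    return [
        slot
        for slot, active_devices in enumerate(schedule)
        if len(active_devices) > max_simultaneous
    ]


# Hypothetical schedule: each set lists the devices transmitting in that slot.
schedule = [{"a1"}, {"a1", "b1"}, {"a1", "b1", "c1"}, set()]
print(violating_slots(schedule, max_simultaneous=2))  # [2]
```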

Yet a match-up Hilburn called “What Incumbent?” played out as the name suggests. The three AIs were initially successful in not perturbing the incumbent with their broadcasts. Once they incurred a violation, however, the AIs threw all concerns out the window and began broadcasting without a care in the world. “Once they started violating the incumbent,” Hilburn announced, “everyone stopped caring.” The teams scored nine out of a potential 210 points in that match-up.

And sometimes the AIs just made odd decisions, as Tilghman and Hilburn revealed in one match-up Hilburn called “4 Cooks in the Kitchen.” The match-up occurred during a scenario in which each AI managed the Wi-Fi router and clusters of connecting smartphones at a different store in an outdoor mall. As the match-up began, each AI announced its intention to broadcast on specific channels, but none went on to make use of those channels. Like too many cooks, the AIs jostled around the spectrum band, seemingly busy but getting nothing accomplished.

All of these tests were facilitated by the Colosseum, a 22-server emulator of real-world communications scenarios sitting in a room at Johns Hopkins. In the system, 128 radios are connected directly to the emulator, allowing SC2 participants to broadcast their signals directly into the machine. The Colosseum features 25.6 gigahertz of instantaneous bandwidth and can handle 420 terabits per second of digital radio frequency data.

The final challenge was a “payline” challenge. The goal was to prove not only that AIs could collaborate to manage spectrum, but that this collaboration was more effective than the current method of chopping spectrum into defined bands. To win a $750,000 prize, each team had to demonstrate that its AI could make more connections in a shared spectrum environment, without degrading other broadcasters in the process.

Six teams managed the task. Even so, Tilghman stressed that all 15 participants would be free to continue competing if they so desired. The money awarded to those six teams is intended to support the most promising designs, but the $2 million grand prize is still up for grabs. It will be awarded after a live competition at Mobile World Congress Americas in Los Angeles on 23 October 2019.

On the whole, Tilghman was delighted with the capabilities the AIs showed, both individually and collaboratively, even though, with few exceptions, the AIs managed to score only about half the available points at best. “These are grad school grades,” he said. “A lot of these scenarios are really hard. We’re not expecting a perfect run.”

“Any time we saw a 50 percent score, we sat back and said, ‘What’s happening here?’” Tilghman added.

In fact, what encouraged Tilghman the most is that in many scenarios, the 50 percent scores were achieved while the AIs were still being cautious and not using spectrum entirely effectively. “When we see the teams have gotten 50 percent, but inadvertently held each other back, that’s exciting,” he said.

There were other glimmers of hope throughout the Phase 2 competition. One of the more challenging scenarios, in which AIs had to work together to share spectrum while portions of it were affected by increasingly complex jammers, saw the AIs collaborating to jump to new bands of spectrum while still delivering service.

“Seeing three teams avoid the jammer as an ensemble—that’s new,” said Tilghman to the crowd after highlighting one such match. “That’s novel. We’ve never had radios that can do that before.”

“I think we saw the technology shift today,” he said.

Michael Koziol is an associate editor at IEEE Spectrum where he covers everything telecommunications. He graduated from Seattle University with bachelor's degrees in English and physics, and earned his master's degree in science journalism from New York University.