Sony today announced that it has developed and is distributing smart image sensors. These devices use machine learning to process captured images on the sensor itself. They can then select only relevant images, or parts of images, to send on to cloud-based systems or local hubs.

This technology, says Mark Hanson, vice president of technology and business innovation for Sony Corp. of America, means practically zero latency between the image capture and its processing; low power consumption enabling IoT devices to run for months on a single battery; enhanced privacy; and far lower costs than smart cameras that use traditional image sensors and separate processors.

Sony’s San Jose laboratory developed prototype products using these sensors to demonstrate to future customers. The chips themselves were designed at Sony’s technology center in Atsugi, Japan. Hanson says that while other organizations have similar technology in development, Sony is the first to ship devices to customers.

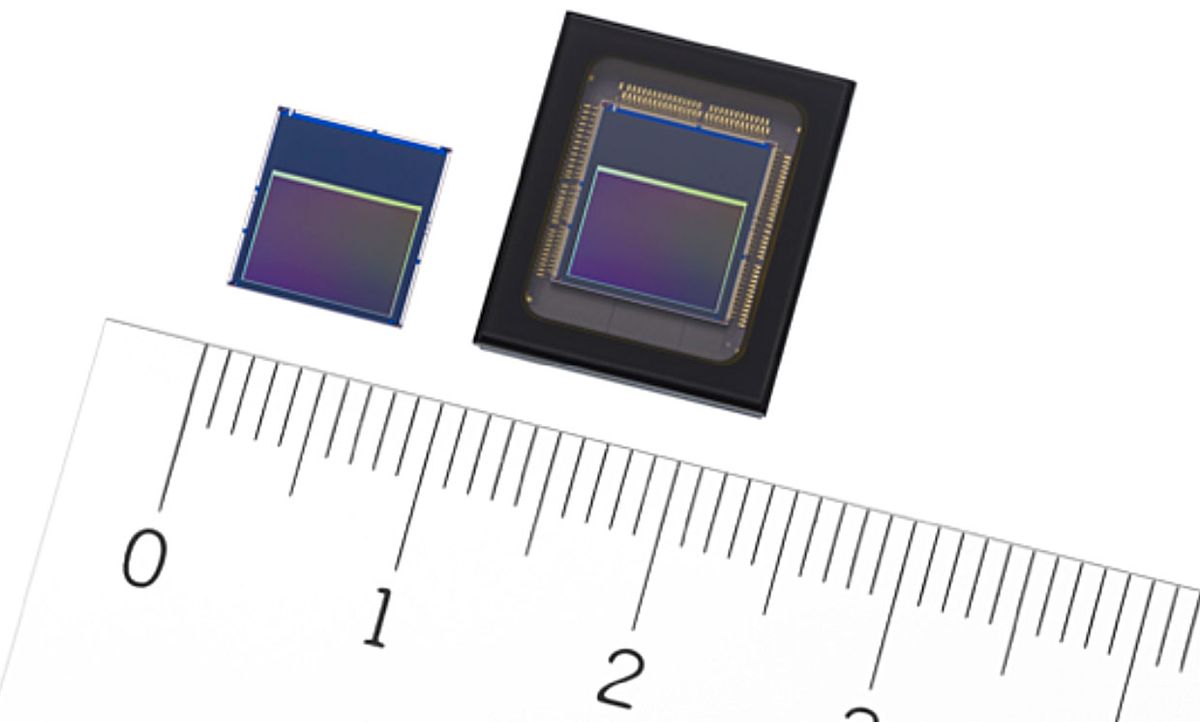

Sony builds these chips by thinning and then bonding two wafers—one containing chips with light-sensing pixels and one containing signal-processing circuitry and memory. This type of design is possible only because Sony is using a back-illuminated image sensor. In standard CMOS image sensors, the electronic traces that gather signals from the photodetectors are laid on top of the detectors. This makes them easy to manufacture but sacrifices efficiency, because the traces block some of the incoming light. Back-illuminated devices put the readout circuitry and the interconnects under the photodetectors, adding to the cost of manufacture.

“We originally went to backside illumination so we could get more pixels on our device,” says Hanson. “That was the catalyst to enable us to add circuitry; then the question was what were the applications you could get by doing that.”

Sony’s smart image processor can identify and track objects, sending data on to the cloud only when it spots an anomaly. Images: Sony

Hanson indicates that the initial applications for the technology will be in security, particularly in large retail situations requiring many cameras to cover a store. In this case, the amount of data being collected quickly becomes overwhelming, so processing the images at the edge would cut the volume of data sent onward and cost much less, he says. The sensors could, for example, be programmed to spot people, carve out the section of the image containing the person, and send only that on for further processing. Or, he indicates, they could simply send metadata instead of the image itself, such as the number of people entering a building. The smart sensors can also track objects from frame to frame as a video is captured, for example packages in a grocery store moving from cart to self-checkout register to bag.
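The two reporting modes Hanson describes, sending only the cropped region around a person or sending only a count, can be illustrated with a minimal Python sketch. This is not Sony's API or firmware. The `Detection` type, the `crop` and `build_payload` functions, and the toy frame are all hypothetical names made up for illustration, and the on-sensor detector that would produce the bounding boxes is assumed rather than shown.

```python
from dataclasses import dataclass
from typing import Dict, List

# A frame is modeled as rows of grayscale pixel values (an assumption
# made for illustration; a real sensor would expose its own format).
Frame = List[List[int]]


@dataclass
class Detection:
    """Hypothetical detector output: a label plus a bounding box."""
    label: str
    x: int       # left column of the box
    y: int       # top row of the box
    width: int
    height: int


def crop(frame: Frame, det: Detection) -> Frame:
    """Return only the pixels inside the detection's bounding box."""
    rows = frame[det.y:det.y + det.height]
    return [row[det.x:det.x + det.width] for row in rows]


def build_payload(frame: Frame, detections: List[Detection],
                  metadata_only: bool = False) -> Dict:
    """Build what would leave the device instead of the full frame."""
    people = [d for d in detections if d.label == "person"]
    payload: Dict = {"person_count": len(people)}
    if not metadata_only:
        # Send just the image regions containing people.
        payload["crops"] = [crop(frame, d) for d in people]
    return payload


# Toy 4x6 frame and two pretend detections.
frame = [[r * 10 + c for c in range(6)] for r in range(4)]
detections = [
    Detection("person", x=1, y=1, width=2, height=2),
    Detection("cart", x=4, y=0, width=2, height=2),
]

print(build_payload(frame, detections))
print(build_payload(frame, detections, metadata_only=True))
```

In metadata-only mode no pixel data leaves the device at all, which is the case that would most reduce bandwidth and privacy exposure.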

Remote surveillance, with the devices running on battery power, is another application that’s getting a lot of interest, Hanson says. So is factory safety: manufacturers whose plants mix robots and people are looking to use the image sensors to keep workers safe. Consumer gadgets that use the technology will come later, but he expects developers to begin experimenting with samples.

Tekla S. Perry is a former IEEE Spectrum editor. Based in Palo Alto, Calif., she's been covering the people, companies, and technology that make Silicon Valley a special place for more than 40 years. An IEEE member, she holds a bachelor's degree in journalism from Michigan State University.