Robocopters to the Rescue

The next medevac helicopter won’t need a pilot

We’re standing on the edge of the hot Arizona tarmac, radio in hand, holding our breath as the helicopter passes 50 meters overhead. We watch as the precious sensor on its blunt nose scans every detail of the area, the test pilot and engineer looking down with coolly professional curiosity as they wait for the helicopter to decide where to land. They’re just onboard observers. The helicopter itself is in charge here.

Traveling at 40 knots, it banks to the right. We smile: The aircraft has made its decision, probably setting up to do a U-turn and land on a nearby clear area. Suddenly, the pilot’s voice crackles over the radio: “I have it!” That means he’s pushing the button that disables the automatic controls, switching back to manual flight. Our smiles fade. “The aircraft turned right,” the pilot explains, “but the test card said it would turn left.”

The machine would have landed safely all on its own. But the pilot could be excused for questioning its, uh, judgment. For unlike the autopilot that handles the airliner for a good portion of most commercial flights, the robotic autonomy package we’ve installed on Boeing’s Unmanned Little Bird (ULB) helicopter makes decisions that are usually reserved for the pilot alone. The ULB’s standard autopilot typically flies a fixed route or trajectory, but now, for the first time on a full-size helicopter, a robotic system is sensing its environment and deciding where to go and how to react to chance occurrences.

It all comes out of a program sponsored by the Telemedicine & Advanced Technology Research Center, which paired our skills, as roboticists from Carnegie Mellon University, with those of aerospace experts from Piasecki Aircraft and Boeing. The point is to bridge the gap between the mature procedures of aircraft design and the burgeoning world of autonomous vehicles. Aerospace, meet robotics.

The need is great, because what we want to save aren’t the salaries of pilots but their lives and the lives of those they serve. Helicopters are extraordinarily versatile, used by soldiers and civilians alike to work in tight spots and unprepared areas. We rely on them to rescue people from fires, battlefields, and other hazardous locales. The job of medevac pilot, which originated six decades ago to save soldiers’ lives, is now one of the most dangerous jobs in America, with 113 deaths for every 100 000 employees. Statistically, only working on a fishing boat is riskier.

These facts raise the question: Why are helicopters such a small part of the boom in unmanned aircraft? Even in the U.S. military, out of roughly 840 large unmanned aircraft, fewer than 30 are helicopters. In Afghanistan, the U.S. Marines have used two unmanned Lockheed Martin K-Max helicopters to deliver thousands of tons of cargo, and the Navy has used some of its 20-odd shipborne Northrop Grumman unmanned Fire Scout helicopters to patrol for pirates off the coast of Africa.

So what’s holding back unmanned helicopters? What do unmanned airplanes have that helicopters don’t?

It’s fine for an unmanned plane to fly blind or by remote control; it takes off, climbs, does its work at altitude, and then lands, typically at an airport, under close human supervision. A helicopter, however, must often go to areas where there are either no people at all or no specially trained people—for example, to drop off cargo at an unprepared area, pick up casualties on rough terrain, or land on a ship. These are the scenarios in which current technology is most prone to fail, because unmanned aircraft have no common sense: They do exactly as they are told.

If you absentmindedly tell one to fly through a tree, it will attempt to do it. One experimental unmanned helicopter nearly landed on a boulder and had to be saved by the backup pilot. Another recently crashed during the landing phase. To avoid such embarrassments, the K-Max dangles cargo from a rope as a “sling load” so that the helicopter doesn’t have to land when making a delivery. Such work-arounds throw away much of the helicopter’s inherent advantage. If we want these machines to save lives, we must give them eyes, ears, and a modicum of judgment.

In other words, an autonomous system needs perception, planning, and control. It must sense its surroundings and interpret them in a useful way. Next, it must decide which actions to perform in order to achieve its objectives safely. Finally, it must control itself so as to implement those decisions.
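Here is a minimal Python sketch of a sense-plan-act loop that makes this three-part split concrete. It is not the ULB's software. The one-dimensional vehicle, the function names `perceive`, `plan`, and `act`, the proportional gain, and the 2-meter-per-second rate limit are all hypothetical. They were chosen only to show where each responsibility sits.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    height_above_ground_m: float


def perceive(raw_range_m: float) -> Estimate:
    # Perception: interpret a raw (possibly noisy or negative) reading as a usable estimate.
    return Estimate(max(raw_range_m, 0.0))


def plan(est: Estimate, target_m: float, max_rate_mps: float = 2.0) -> float:
    # Planning: pick a vertical speed toward the objective without exceeding a safe limit.
    rate = 0.5 * (target_m - est.height_above_ground_m)
    return max(-max_rate_mps, min(max_rate_mps, rate))


def act(height_m: float, commanded_rate_mps: float, dt_s: float) -> float:
    # Control: an idealized vehicle that tracks the commanded rate exactly for one step.
    return height_m + commanded_rate_mps * dt_s


def descend(start_m: float, target_m: float, steps: int, dt_s: float = 0.1) -> float:
    height = start_m
    for _ in range(steps):
        est = perceive(height)            # sense
        rate = plan(est, target_m)        # decide
        height = act(height, rate, dt_s)  # execute
    return height


print(round(descend(30.0, 0.0, 1000), 3))
```

Because the stages are separate, each one can be tested and replaced on its own. The rest of this article follows the same decomposition.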

A cursory search on YouTube will uncover videos of computer-controlled miniature quadcopters doing flips, slipping vertically through slots in a wall, and assembling structures. What these craft are missing, though, is perception: They perform inside the same kind of motion-capture lab that Hollywood uses to record actors’ movements for computer graphics animations. The position of each object is precisely known. The trajectories have all been computed ahead of time, then checked for errors by software engineers.

If you give such a quadcopter onboard sensors and put it outdoors, away from the rehearsed dance moves of the lab, it becomes much more hesitant. Not only will it sense its environment rather poorly, but its planning algorithms will barely react in time when confronted with an unusual development.

True, improvements in hardware are helping small quadcopters approach full autonomy, and somewhat larger model helicopters are already quite far along in that quest. For example, several groups have shown capabilities such as automated landing, obstacle avoidance, and mission planning on the Yamaha RMax, a 4-meter machine originally sold for remote-control crop dusting in Japan’s hilly farmlands. But this technology doesn’t scale up well, mainly because the sensors can’t see far enough ahead to manage the higher speeds of full-size helicopters. Furthermore, existing software can’t account for the aerodynamic limitations of larger craft.
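A back-of-the-envelope calculation shows why range matters so much. Assume, for simplicity, that the aircraft must be able to stop short of anything it detects. The sensor then has to see at least as far as the aircraft travels during the system's reaction time, plus its braking distance. The reaction time and deceleration below are hypothetical round numbers, not measured values for any helicopter.

```python
KNOT_TO_MPS = 1852.0 / 3600.0  # one knot is one nautical mile (1852 m) per hour


def required_sensing_range_m(speed_knots: float, reaction_time_s: float, decel_mps2: float) -> float:
    """Distance flown while the system reacts, plus the distance needed to brake to a stop."""
    v = speed_knots * KNOT_TO_MPS
    return v * reaction_time_s + v * v / (2.0 * decel_mps2)


# Hypothetical values: 2 s to perceive and decide, 2 m/s^2 of sustained deceleration.
for knots in (20, 40):
    print(f"{knots} kn -> {required_sensing_range_m(knots, 2.0, 2.0):.0f} m")
```

Under those assumptions, a machine flying at 20 knots needs about 47 meters of sensing range, while one at 40 knots needs about 147 meters. Doubling the speed roughly triples the required range, because the braking term grows with the square of speed.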

Another problem with the larger helicopters is that they don’t actually like to hover. A helicopter typically lands more like an airplane than most people realize, making a long, descending approach at a shallow angle at speeds of 40 knots (74 kilometers per hour) or more and then flaring to a hover and vertical descent. This airplane-like profile is necessary because hovering sometimes requires more power than the engines can deliver.

The need for fast flying has a lot to do with the challenges of perception and planning. We knew that making large, autonomous helicopters practical would require sensors with longer ranges and faster measurement rates than had ever been used on an autonomous rotary aircraft, as well as software optimized to make quick decisions. To solve the first problem, we began with ladar—laser detection and ranging—a steadily improving technology and one that’s already widely used in robotic vehicles.

Ladar measures the distance to objects by first emitting a tightly focused laser pulse and then measuring how long it takes for any reflections to return. It creates a 3-D map of the surroundings by pulsing 100 000 times per second, steering the beam to many different points with mirrors, and combining the results computationally.
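The time-of-flight arithmetic is simple enough to sketch directly. The pulse travels to the target and back, so the range is half the round-trip distance. The helper names here are illustrative. The unambiguous-range figure assumes a sensor that waits for each echo before firing the next pulse, which not every ladar does. The Cartesian conversion assumes a hypothetical sensor frame with x forward, y left, and z up.

```python
import math

SPEED_OF_LIGHT_MPS = 299_792_458.0  # exact, by definition of the meter


def range_from_round_trip(t_round_trip_s: float) -> float:
    """Distance to the reflecting surface: the pulse goes out and back, so halve the path."""
    return SPEED_OF_LIGHT_MPS * t_round_trip_s / 2.0


def max_unambiguous_range(pulse_rate_hz: float) -> float:
    """Farthest range whose echo returns before the next pulse fires (one pulse in flight)."""
    return SPEED_OF_LIGHT_MPS / (2.0 * pulse_rate_hz)


def to_cartesian(range_m: float, azimuth_deg: float, elevation_deg: float):
    """Sensor-frame point (x forward, y left, z up) from a range and mirror-steered angles."""
    az, el = math.radians(azimuth_deg), math.radians(elevation_deg)
    return (range_m * math.cos(el) * math.cos(az),
            range_m * math.cos(el) * math.sin(az),
            range_m * math.sin(el))


print(range_from_round_trip(1e-6), max_unambiguous_range(100_000))
```

An echo that arrives 1 microsecond after firing comes from roughly 150 meters away. At 100 000 pulses per second, a one-pulse-at-a-time design could range out to about 1.5 kilometers.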

The ladar system we constructed for the ULB uses a “nodding” scanner. A “fast-axis” mirror scans the beam in a horizontal line up to 100 times per second while another mirror nods up and down much more slowly. To search for a landing zone, the autonomous system points the ladar down and uses the fast-axis line as a “push broom,” surveying the terrain as the helicopter flies over it. When descending nearer to a possible landing site, the system points the ladar forward and nods up and down, thus scanning for utility wires or other low-lying obstacles.
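The scan pattern can be sketched as a function that maps each pulse number to a beam direction. The 100 000 pulses per second and the 100 lines per second come from the description above, which works out to 1000 measurements per fast-axis line. The field of view, the nod rate and amplitude, the sawtooth shape of the fast sweep, and the mode names are hypothetical.

```python
PULSE_RATE_HZ = 100_000                          # from the article
LINE_RATE_HZ = 100                               # fast-axis lines per second, from the article
PULSES_PER_LINE = PULSE_RATE_HZ // LINE_RATE_HZ  # 1000 measurements per line

FAST_HALF_FOV_DEG = 30.0       # hypothetical horizontal half-width of each line
NOD_RATE_HZ = 0.5              # hypothetical nod frequency
NOD_HALF_AMPLITUDE_DEG = 15.0  # hypothetical nod half-range


def triangle_wave(cycles: float) -> float:
    """Linear up-and-down ramp between -1 and 1, one period per cycle."""
    p = cycles % 1.0
    return 4.0 * p - 1.0 if p < 0.5 else 3.0 - 4.0 * p


def beam_angles(pulse_index: int, mode: str):
    """(azimuth, elevation) in degrees, relative to wherever the scanner is pointed."""
    # Fast axis: a hypothetical sawtooth sweep, left to right once per line.
    line_phase = (pulse_index % PULSES_PER_LINE) / PULSES_PER_LINE
    azimuth = -FAST_HALF_FOV_DEG + 2.0 * FAST_HALF_FOV_DEG * line_phase
    if mode == "push_broom":    # scanner pointed down; aircraft motion sweeps the line
        elevation = 0.0
    elif mode == "nod":         # scanner pointed forward; slow mirror nods up and down
        t = pulse_index / PULSE_RATE_HZ
        elevation = NOD_HALF_AMPLITUDE_DEG * triangle_wave(t * NOD_RATE_HZ)
    else:
        raise ValueError(f"unknown mode: {mode}")
    return azimuth, elevation


print(beam_angles(0, "nod"), beam_angles(500, "push_broom"))
```

In push-broom mode, the helicopter's forward motion supplies the second scan dimension, so the nod mirror can stay still. In nodding mode, the slow sweep fills in the forward-looking volume where a wire might hide.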

Because the helicopter is moving, the ladar measures every single point from a slightly different position and angle. Normally, vibration would blur these measurements, but we compensate for that problem by matching the scanned information with the findings of an inertial navigation system, which uses GPS, accelerometers, and gyros to measure position within centimeters and angles within thousandths of a degree. That way, we can properly place each ladar-measured reflection on an internal map.
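Compensation amounts to looking up the aircraft's pose at the instant each pulse was fired. That pose then carries the point from the sensor's frame into the map frame. This sketch assumes linear interpolation between two inertial samples and a yaw-pitch-roll attitude convention. It also assumes a ladar mounted at the body origin with no rotation; a real installation would add a calibrated mounting offset. The names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose:
    """One inertial-navigation sample: world-frame position (m) and attitude (rad)."""
    t: float
    x: float
    y: float
    z: float
    roll: float
    pitch: float
    yaw: float


def interpolate_pose(a: Pose, b: Pose, t: float) -> Pose:
    """Linear interpolation between two INS samples (angle wrap-around ignored here)."""
    s = (t - a.t) / (b.t - a.t)

    def lerp(p: float, q: float) -> float:
        return p + s * (q - p)

    return Pose(t, lerp(a.x, b.x), lerp(a.y, b.y), lerp(a.z, b.z),
                lerp(a.roll, b.roll), lerp(a.pitch, b.pitch), lerp(a.yaw, b.yaw))


def rotation_zyx(roll: float, pitch: float, yaw: float):
    """Body-to-world rotation R = Rz(yaw) * Ry(pitch) * Rx(roll), as nested lists."""
    cr, sr = math.cos(roll), math.sin(roll)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cy, sy = math.cos(yaw), math.sin(yaw)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]


def to_world(point_body, pose: Pose):
    """Place a body-frame ladar return on the map, using the pose at the pulse's timestamp."""
    R = rotation_zyx(pose.roll, pose.pitch, pose.yaw)
    origin = (pose.x, pose.y, pose.z)
    return tuple(origin[r] + sum(R[r][c] * point_body[c] for c in range(3)) for r in range(3))
```

Take a helicopter flying level at 20 m/s. A ground point 100 m ahead and 50 m below at t = 0 s appears only 90 m ahead at t = 0.5 s. Once each return is paired with its own interpolated pose, `to_world` places both returns at the same map coordinates.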

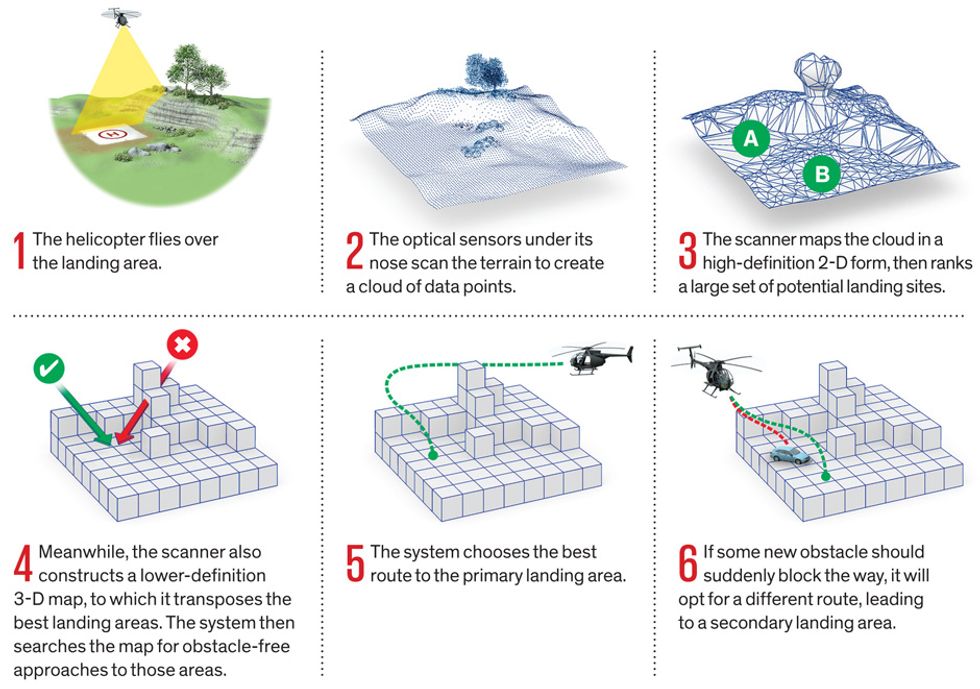

To put this stream into a form the planning software can use, the system constantly updates two low-level interpretations. One is a high-resolution, two-dimensional mesh that encodes the shape of the terrain for landing; the other is a medium-resolution, three-dimensional representation of all the things the robot wants to avoid hitting during its descent. Off-the-shelf surveying software can create such maps, but it may take hours back in the lab to process the data. Our software creates and updates these maps essentially as fast as the data arrive.
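One way to keep both maps current at ladar rates is to make every update constant-time. The sketch below keeps a running mean height per cell for the landing grid and a set of occupied voxels for obstacles. It is a simplified stand-in for the mesh described above, and the class name and resolutions are hypothetical.

```python
import math
from collections import defaultdict


class TerrainMaps:
    """Hypothetical incremental maps: a 2-D height grid and a 3-D obstacle voxel set."""

    def __init__(self, grid_res_m: float = 0.25, voxel_res_m: float = 1.0):
        self.grid_res = grid_res_m      # finer resolution for terrain shape (hypothetical)
        self.voxel_res = voxel_res_m    # coarser resolution for obstacles (hypothetical)
        self._sum = defaultdict(float)  # per-cell running sum of heights
        self._count = defaultdict(int)  # per-cell number of returns
        self.occupied = set()           # voxels containing at least one return

    def _cell(self, x: float, y: float):
        return (math.floor(x / self.grid_res), math.floor(y / self.grid_res))

    def add_point(self, x: float, y: float, z: float) -> None:
        """O(1) update per ladar return, so the maps keep pace with the incoming stream."""
        cell = self._cell(x, y)
        self._sum[cell] += z
        self._count[cell] += 1
        r = self.voxel_res
        self.occupied.add((math.floor(x / r), math.floor(y / r), math.floor(z / r)))

    def height(self, x: float, y: float):
        """Mean height of the returns in the cell containing (x, y), or None if unobserved."""
        cell = self._cell(x, y)
        n = self._count.get(cell, 0)
        return self._sum[cell] / n if n else None


maps = TerrainMaps()
maps.add_point(0.1, 0.1, 1.0)
maps.add_point(0.2, 0.2, 3.0)
print(maps.height(0.05, 0.05), sorted(maps.occupied))
```

Because each return touches only one grid cell and one voxel, the cost of an update does not grow with the size of the map. That property is what allows processing "essentially as fast as the data arrive."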

The system evaluates the mesh map by continually updating a list of numerically scored potential landing places. The higher the score, the more promising the landing site. Each site has a set of preferred final descent paths as well as clear escape routes should the helicopter need to abort the attempt (for example, if something gets in the way). The landing zone evaluator makes multiple passes on the data, refining the search criteria as it finds the best locations. The first pass quickly eliminates areas that are too steep or rough. The second pass places a virtual 3-D model of the helicopter in multiple orientations on the mesh map of the ground to check for rotor and tail clearance, good landing-skid contact, and the predicted tilt of the body on landing.
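The two-pass evaluation might look something like the following sketch, which works on a small height grid. The first pass is a cheap test of height spread. The second pass places a crude four-point skid model at several headings and checks that no skid end hangs in the air. It then estimates body tilt and checks the ground under the rotor disk. Every threshold and dimension, the scoring rule (flatter is better), and the function names are hypothetical. Tail clearance is left out for brevity.

```python
import math

CELL_M = 0.5               # hypothetical grid spacing of the height map
FOOTPRINT_RADIUS_M = 2.0   # hypothetical area that must be smooth under the skids
MAX_SPREAD_M = 0.5         # hypothetical first-pass limit on height variation
SKID_HALF_LENGTH_M = 1.0   # hypothetical skid geometry
SKID_HALF_SPACING_M = 1.0
MAX_TWIST_M = 0.15         # hypothetical: beyond this, one skid end would hang in the air
MAX_TILT_DEG = 6.0         # hypothetical body-tilt limit on the ground
ROTOR_RADIUS_M = 4.0       # hypothetical rotor disk radius
ROTOR_CLEARANCE_M = 2.0    # hypothetical: nothing under the disk may rise more than this above the skids


def height_at(grid, x, y):
    """Nearest-cell lookup in a row-major height grid; None if outside the map."""
    i, j = round(y / CELL_M), round(x / CELL_M)
    if 0 <= i < len(grid) and 0 <= j < len(grid[0]):
        return grid[i][j]
    return None


def disk_heights(grid, x, y, radius):
    """Heights of all grid cells within `radius` of (x, y)."""
    steps = int(radius / CELL_M)
    out = []
    for di in range(-steps, steps + 1):
        for dj in range(-steps, steps + 1):
            dx, dy = dj * CELL_M, di * CELL_M
            if dx * dx + dy * dy <= radius * radius:
                out.append(height_at(grid, x + dx, y + dy))
    return out


def first_pass(grid, x, y):
    """Cheap filter: reject unmapped, steep, or rough ground under the footprint."""
    hs = disk_heights(grid, x, y, FOOTPRINT_RADIUS_M)
    return None not in hs and max(hs) - min(hs) <= MAX_SPREAD_M


def second_pass(grid, x, y):
    """Try a simplified skid model at several headings; return (score, heading) or None."""
    disk = disk_heights(grid, x, y, ROTOR_RADIUS_M)
    if None in disk:
        return None
    best = None
    for heading_deg in range(0, 180, 30):
        a = math.radians(heading_deg)
        fx, fy = math.cos(a), math.sin(a)  # forward unit vector
        lx, ly = -fy, fx                   # left unit vector
        corners = {}
        for name, fwd, side in (("fl", 1, 1), ("rl", -1, 1), ("fr", 1, -1), ("rr", -1, -1)):
            px = x + fwd * SKID_HALF_LENGTH_M * fx + side * SKID_HALF_SPACING_M * lx
            py = y + fwd * SKID_HALF_LENGTH_M * fy + side * SKID_HALF_SPACING_M * ly
            corners[name] = height_at(grid, px, py)
        if None in corners.values():
            continue
        c = corners
        twist = abs((c["fl"] - c["rl"]) - (c["fr"] - c["rr"]))
        pitch = math.degrees(math.atan2(c["fl"] + c["fr"] - c["rl"] - c["rr"], 4 * SKID_HALF_LENGTH_M))
        roll = math.degrees(math.atan2(c["fl"] + c["rl"] - c["fr"] - c["rr"], 4 * SKID_HALF_SPACING_M))
        tilt = max(abs(pitch), abs(roll))
        if twist > MAX_TWIST_M or tilt > MAX_TILT_DEG:
            continue
        skid_level = sum(c.values()) / 4
        if max(disk) > skid_level + ROTOR_CLEARANCE_M:
            continue  # something under the rotor disk would strike the blades
        score = -tilt  # flatter is better in this sketch
        if best is None or score > best[0]:
            best = (score, heading_deg)
    return best


def evaluate_sites(grid, candidates):
    """Run both passes and return (score, heading, site) tuples, best first."""
    scored = []
    for x, y in candidates:
        if not first_pass(grid, x, y):
            continue
        result = second_pass(grid, x, y)
        if result is not None:
            scored.append((result[0], result[1], (x, y)))
    return sorted(scored, key=lambda s: s[0], reverse=True)
```

Running the cheap test first keeps the more expensive per-heading model away from ground that could never work.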

In the moments before the landing, the autonomous system uses these maps to generate and evaluate hundreds of potential trajectories that could bring the helicopter from its current location down to a safe landing. The trajectory includes a descending final approach, a flare—the final pitch up that brings the helicopter into a hover—and the touchdown. Each path is evaluated for how close it comes to objects, how long it would take to fly, and the demands it would place on the aircraft’s engine and physical structure. The planning system picks the best combination of landing site and trajectory, and the path is sent to the control software, which actually flies the helicopter. Once a landing site is chosen, the system continuously checks its plan against new data coming from the ladar and makes adjustments if necessary.
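The trajectory search can be sketched as generating straight-in approaches from many headings and scoring each one. The cost below has three penalties: flight time, steep descent (a crude proxy for engine and structural demand), and proximity to obstacles, which grows as the path gets closer. It also subtracts a bonus for a better-rated landing site. Paths inside a hard clearance margin are discarded. The approach geometry, weights, and names are hypothetical. The trajectories described above also include the flare and touchdown, which this sketch omits.

```python
import math

MIN_CLEARANCE_M = 10.0     # hypothetical hard margin around obstacles
APPROACH_SPEED_MPS = 20.0  # about 40 knots
W_TIME, W_DEMAND, W_CLEAR, W_SITE = 1.0, 100.0, 200.0, 10.0  # hypothetical weights


def approach_path(site, heading_deg, start_dist_m=300.0, glide_deg=6.0, n=20):
    """Straight, shallow descending approach ending over `site`, flying along `heading_deg`."""
    sx, sy, sz = site
    a = math.radians(heading_deg)
    slope = math.tan(math.radians(glide_deg))
    path = []
    for k in range(n + 1):
        d = start_dist_m * (1 - k / n)  # horizontal distance still to go
        path.append((sx - d * math.cos(a), sy - d * math.sin(a), sz + d * slope))
    return path


def point_segment_distance(p, a, b):
    """Shortest distance from point p to the segment a-b."""
    ab = [bi - ai for ai, bi in zip(a, b)]
    ap = [pi - ai for ai, pi in zip(a, p)]
    denom = sum(c * c for c in ab)
    t = 0.0 if denom == 0 else max(0.0, min(1.0, sum(u * v for u, v in zip(ap, ab)) / denom))
    return math.dist(p, [ai + t * c for ai, c in zip(a, ab)])


def min_clearance(path, obstacles):
    return min((point_segment_distance(o, a, b) for a, b in zip(path, path[1:]) for o in obstacles),
               default=math.inf)


def steepest_gradient(path):
    """Largest height change per horizontal meter along the path."""
    worst = 0.0
    for (x0, y0, z0), (x1, y1, z1) in zip(path, path[1:]):
        horizontal = math.hypot(x1 - x0, y1 - y0)
        if horizontal > 0:
            worst = max(worst, abs(z1 - z0) / horizontal)
    return worst


def trajectory_cost(path, obstacles, site_score):
    clearance = min_clearance(path, obstacles)
    if clearance < MIN_CLEARANCE_M:
        return None  # infeasible: too close to an obstacle
    length = sum(math.dist(a, b) for a, b in zip(path, path[1:]))
    return (W_TIME * length / APPROACH_SPEED_MPS
            + W_DEMAND * steepest_gradient(path)
            + W_CLEAR / clearance
            - W_SITE * site_score)


def plan_landing(sites, obstacles, headings=range(0, 360, 15)):
    """sites maps an id to (position, site_score); returns (cost, id, heading, path) or None."""
    best = None
    for site_id, (site, site_score) in sites.items():
        for heading in headings:
            path = approach_path(site, heading)
            cost = trajectory_cost(path, obstacles, site_score)
            if cost is not None and (best is None or cost < best[0]):
                best = (cost, site_id, heading, path)
    return best
```

Suppose a crane stands directly on the straight-in path from the west. That heading is rejected outright, and the planner picks an approach from another direction: a toy version of the choice the ULB made in the flight test described below.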

That’s how it worked in simulations. The time had come to take our robocopter out for a spin.

So it was that we found ourselves on a sunny spring afternoon in Mesa, Ariz. Even after our system had safely landed itself more than 10 times, our crew chief was skeptical. He had spent decades as a flight-test engineer at Boeing and had seen many gadgets and schemes come and go in the world of rotorcraft. So far, the helicopter had landed itself only in wide-open spaces, and he wasn’t convinced that our system was doing anything that required intelligence. But today was different: Today he would match wits with the robot pilot.

Our plan was to send the ULB in as a mock casualty evacuation helicopter. We’d tell it to land in a cleared area and then have it do so again after we’d cluttered up the area. The first pass went without a hitch: The ULB flew west to east as it surveyed the landing area, descended in a U-turn, completed a picture-perfect approach, and landed in an open area close to where the “casualty” was waiting to be evacuated. Then our crew chief littered the landing area with forklift pallets, plastic boxes, and a 20-meter-high crane.

This time, after the flyover the helicopter headed north instead of turning around. The test pilot shook his head in disappointment and prepared to push the button on his stick to take direct control. But the engineer seated next to him held her hand up. After days of briefings on the simulator, she had begun to get a feel for the way the system “thought,” and she realized that it might be trying to use an alternative route that would give the crane a wider berth. And indeed, as the helicopter descended from the north, it switched the ladar scanner from downward to forward view, checking for any obstacles such as power lines that it wouldn’t have seen in the east-west mapping pass. It did what it needed to do to land near the casualty, just as it had been commanded.

This landing was perfect, except for one thing: The cameras had been set up ahead of time to record an approach from the east rather than the north. We’d missed it! So our ground crew went out and added more clutter to try to force the helicopter to come in from the east but land farther away from the casualty. Again the helicopter approached from the north and managed to squeeze into a tighter space nearby, keeping itself close to the casualty. Finally, the ground crew drove out onto the landing area, intent on blocking all available spaces and forcing the machine to land from the east. Once again the wily robot made the approach from the north and managed to squeeze into the one small (but safe) parking spot the crew hadn’t been able to block. The ULB had come up with perfectly reasonable solutions, solutions we had deliberately tried to stymie. As our crew chief commented, “You could actually tell it was making decisions.”

That demonstration program ended three years ago. Since then we’ve launched a spin-off company, Near Earth Autonomy, which is developing perception sensors and algorithms for two U.S. Navy programs. One of these programs, the Autonomous Aerial Cargo/Utility System (AACUS), aims to enable many types of autonomous rotorcraft to deliver cargo and pick up casualties at unprepared landing sites; it must be capable of making “hot” landings, that is, high-speed approaches without precautionary overflight of the landing zone. The other program will develop technology to launch and recover unmanned helicopters from ships.

It took quite a while for our technology to win the trust of our own professional test team. We must clear even higher hurdles before we can get nonspecialists to agree to work with autonomous aircraft in their day-to-day routines. With that goal in view, the AACUS program calls for simple and intuitive interfaces to allow nonaviator U.S. Marines to call in for supplies and work with the robotic aircraft.

In the future, intelligent aircraft will take over the most dangerous missions for air supply and casualty extraction, saving lives and resources. Besides replacing human pilots in the most dangerous jobs, intelligent systems will guide human pilots through the final portions of difficult landings, for instance by sensing and avoiding low-hanging wires or tracking a helipad on the pitching deck of a ship. We are also working on rear-looking sensors that will let a pilot keep constant tabs on the dangerous rotor at the end of a craft’s unwieldy tail.

Even before fully autonomous flight is ready for commercial aviation, many of its elements will be at work behind the scenes, making life easier and safer, just as they are doing now in fixed-wing planes and even passenger cars. Robotic aviation will not come in one fell swoop—it will creep up on us.

About the Authors

Lyle Chamberlain is a founder of Near Earth Autonomy, and Sebastian Scherer is a Systems Scientist at Carnegie Mellon University; both organizations are helping the U.S. Navy develop an autonomous flight-control package for helicopters.