Positron emission tomography (PET) imaging uses radioactive tracers to detect metabolic activity in the body and brain, in order to detect cancers, cardiovascular diseases, and more. PET relies on a process called tomographic reconstruction, in which algorithms use statistical methods to compensate for limited data and form images. This causes PET scans to have relatively poor spatial resolution. While new advances have improved this resolution, they haven't eliminated the need for iterative reconstruction. Now, in a new study published in Nature Photonics, a group of scientists in the US and Japan has created a technique that eliminates the need for the guessing game of tomographic reconstruction.

"This has been a huge holy grail in our field for decades," said Simon Cherry, a professor of biomedical engineering and radiology at the University of California, Davis and senior author of the new study.

As the name implies, PET scans rely on positron emission. Before the scan, a patient is injected with a glucose radiotracer, and the radioactive elements in the sugar release positrons. As soon as a positron encounters an electron in the body, the two particles annihilate each other, producing two high-energy photons, called gamma rays, that travel in opposite directions along a line. PET scanners work by detecting these photons and identifying roughly where they originated by finding this line. That allows them to identify areas that took up more tracer, such as cancer cells.

The problem is, a straight line by itself doesn't do much to narrow down where the photons originated, said Michael King, a professor of radiology at the University of Massachusetts Chan Medical School who was not involved with the study. So an algorithm combines all the data it has on other photon lines with statistical models to guess where each positron originated.

"The end result is that you get something which is pretty good," said King. "But it's still a guess."

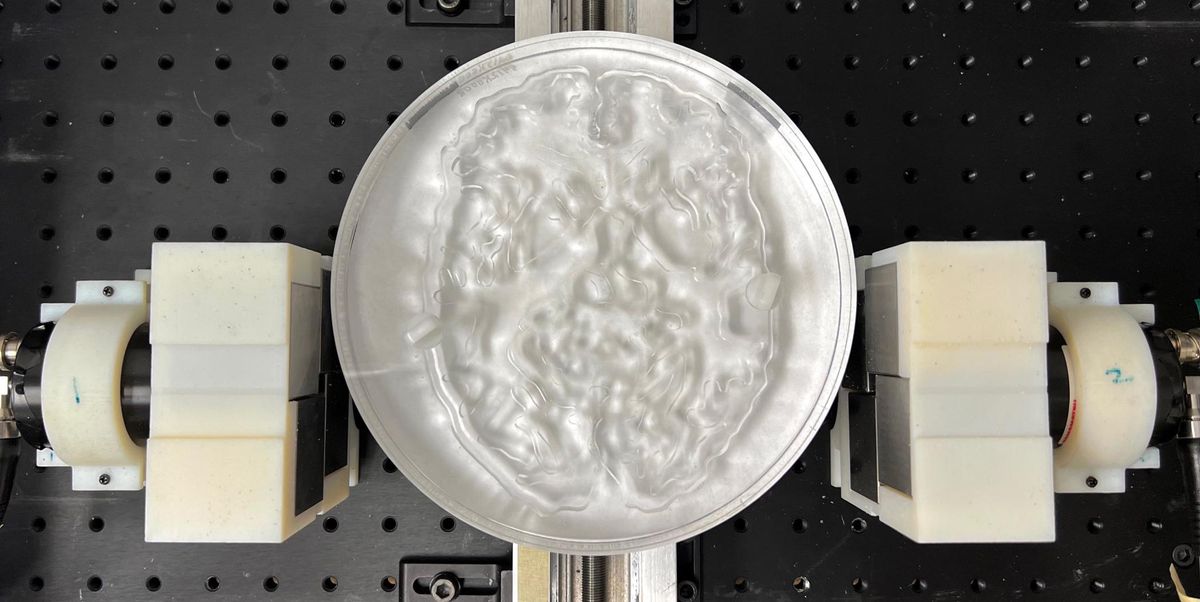

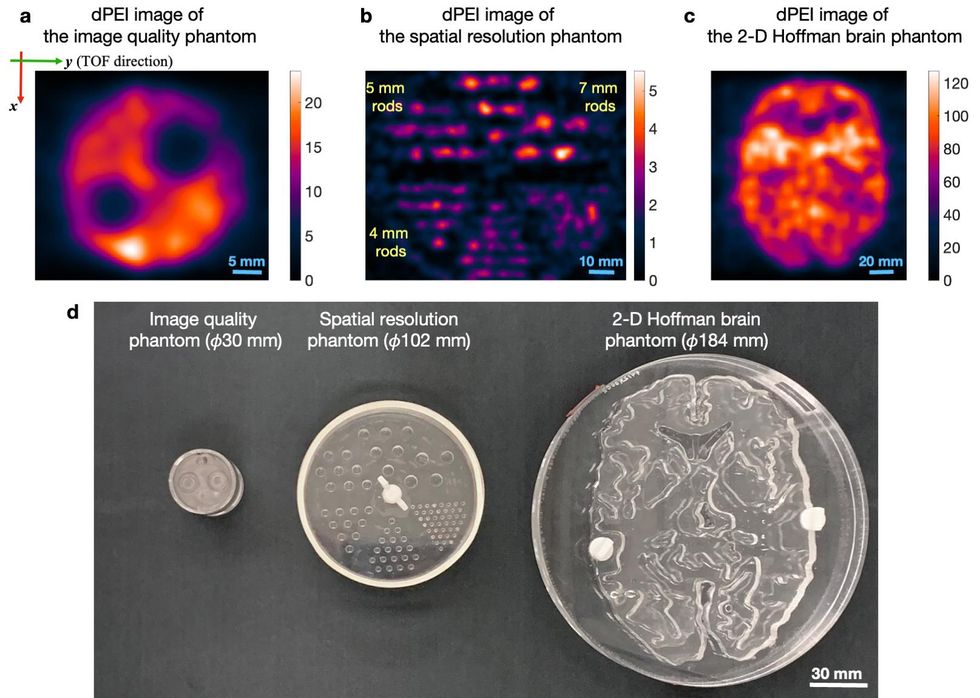

In recent years, detectors have gotten fast enough at detecting photons that they can estimate where the photons originated based on the difference in when they arrive at the PET scanner's sensors. This is called time-of-flight PET, and it makes scans more accurate, but not accurate enough to avoid tomographic reconstruction. The new study took time-of-flight PET to its logical conclusion: the researchers created a system in which a sensor identified photons so fast that reconstruction became unnecessary.

The researchers used three different methods to accomplish this. They used a very fast method of converting gamma rays into visible light by utilizing vacuum tubes, and placed this mechanism inside the machine's photodetector, eliminating the time it would take for light to travel between them. They also used a convolutional neural network to predict timing.

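Previous time-of-flight PET used sensors that took around 200 picoseconds to register photons. In that time, light travels about 6 centimeters, which pins down the origin of the photons only to within about 3 centimeters along the line, because the position estimate depends on half the difference in arrival times. The sensor in the new study, by contrast, has a lag time of just 32 picoseconds, which narrows that uncertainty to about 4.8 millimeters.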
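The distances quoted above are consistent with the usual time-of-flight relation, in which the uncertainty in position along the photon line is half the distance light covers during the timing uncertainty. The short Python sketch below works through that arithmetic. The function name and constant are illustrative and do not come from the study, and the calculation assumes photons moving at the vacuum speed of light.

```python
# Speed of light in vacuum, expressed in millimeters per picosecond.
C_MM_PER_PS = 0.299792458


def position_uncertainty_mm(timing_ps: float) -> float:
    """Approximate position uncertainty along the photon line.

    Illustrative helper (not from the study). The two photons travel in
    opposite directions, so shifting the origin by x changes the difference
    in arrival times by 2x / c. Hence x = c * dt / 2.
    """
    return C_MM_PER_PS * timing_ps / 2.0


# Timing figures quoted in the article, in picoseconds.
for label, timing_ps in [("earlier time-of-flight PET", 200.0),
                         ("sensor in the new study", 32.0)]:
    print(f"{label}: {position_uncertainty_mm(timing_ps):.1f} mm")
```

Run as written, this gives roughly 30 mm for 200 picoseconds and 4.8 mm for 32 picoseconds, matching the article's 3 centimeters and 4.8 millimeters.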

King described the research as "not unique to [the researchers] in terms of striving towards this goal." Ever since time-of-flight PET was invented, radiologists have known that this method, which the researchers here call direct positron emission imaging, would be much more accurate if it were possible.

The method could also have other benefits, said Lacey McIntosh, the division chief of oncologic and molecular imaging at UMass Memorial Medical Center and an assistant professor of radiology at UMass Chan Medical School. These might include a lower dose of radiotracer for the same quality of image. Although the radiation that a single dose of radiotracer exposes the body to is tiny, any radiation exposure has the potential to be harmful. Scanners would also not need to have sensors arranged in a ring, which would help claustrophobic patients. And scans could be done faster, which might enable doctors to do several scans in a session and would help children who struggle to stay still.

However, it would likely not be possible to create a system with all of these benefits at once, said Cherry. For example, the increase in the signal could be used to decrease the radiation dose, make the scan faster, or increase the image quality. Which option you choose would depend on the patient, their preferences, and their situation.

"You're going to be able to tailor what you do to the specific clinical situation," he said.

It's also possible that such a system would be less expensive, said Cherry, because it may need fewer detectors. But he also said it may be the case that just as many detectors would be necessary to produce a higher-quality image than today's scanners can create.

This technology has a long way to go before it can be used in a medical setting. Cherry describes this study as a proof-of-concept, with the researchers' prototype design being impractical in a number of ways. For instance, the images took 10 to 24 hours to produce, and objects the researchers imaged were exposed to large amounts of radiation. Nevertheless, Cherry said the study shows the technique is possible, and there aren't any theoretical barriers—only technological ones.

"Some people thought there might be some other effects that might come into play at this very fast timing precisions that might make this not work the way that we thought it would," Cherry said. "I think we've put that to rest."

Rebecca Sohn is a freelance science journalist. Her work has appeared in Live Science, Slate, and Popular Science, among others. She has been an intern at STAT and at CalMatters, as well as a science fellow at Mashable.