This article is part of our exclusive IEEE Journal Watch series in partnership with IEEE Xplore.

Medical imaging techniques such as MRIs and CT scans have revolutionized the medical field, helping to advance novel therapies and provide hard data and new perspectives on the health of patients. Such noninvasive approaches allow doctors to peer into the brains and bodies of patients, detecting anything from fractured bones to brain tumors.

Yet while these imaging techniques are critical for modern-day health care, they can sometimes be deceptive—suggesting that an unusual phenomenon is present in the brain or body when in fact it is not.

This is because medical imaging devices do not record images directly. Instead, the raw data collected by the devices is analyzed by a computer, and machine-learning algorithms are used to reconstruct the images that doctors and radiologists use for diagnosing a health complication. Image reconstruction is done based on the known physics of the imaging device, along with a set of assumptions about how the final image should appear.

“However, if certain assumptions are wrong during image reconstruction, false structures may be introduced into the final image,” explains Mark Anastasio, a professor of bioengineering at the University of Illinois at Urbana–Champaign.

As one could imagine, the presence of false structures (also called “hallucinations”) in medical images could have serious consequences in diagnosing patients. “For example, a hallucination that resembles a tumorlike structure may influence a radiologist to conclude that a lesion is present in the tissue or organ being imaged, when it is actually not,” explains Anastasio.

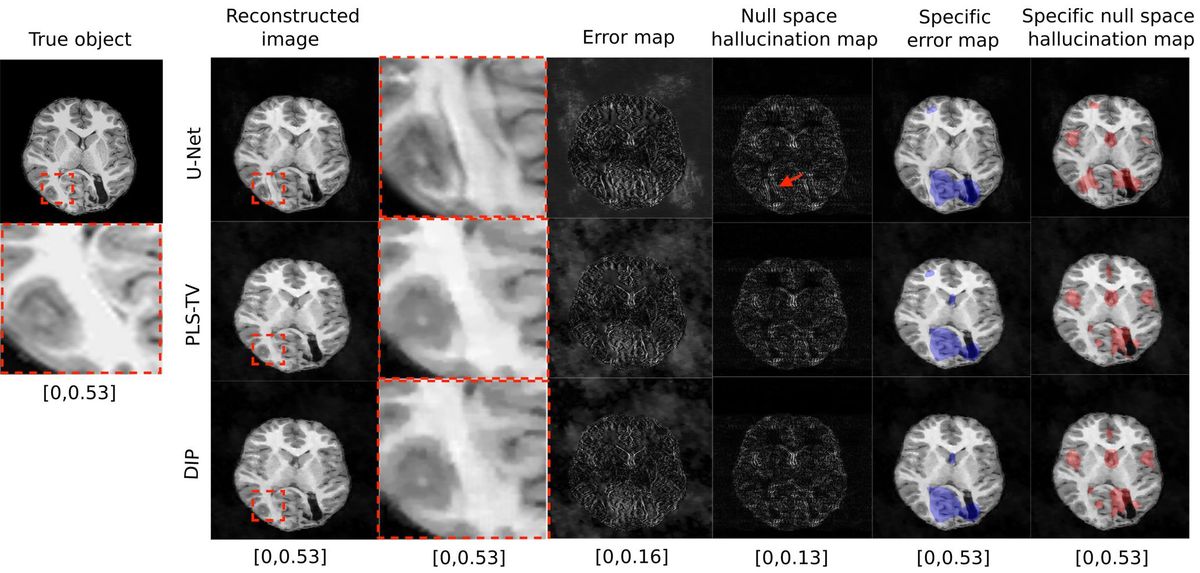

To address this issue, Anastasio and his colleagues have been working on a new technique that can identify when algorithms for image reconstruction are likely creating false structures. It works by mapping out errors in the reconstructed image that are not attributable to the raw image data.
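One way to picture "errors that are not attributable to the raw data" is with a toy linear model. The sketch below is an illustrative assumption, not the method from the study: it imagines a scanner that records only the sum of each adjacent pixel pair, so any change that leaves those sums untouched is invisible to the measurements. The function names (`measure`, `null_space_part`, `hallucination_map`) and the forward model are hypothetical. The sketch also needs the true image, which is only known in simulation.

```python
def measure(image):
    """Toy forward model (assumed): record the sum of each adjacent pixel pair."""
    return [image[i] + image[i + 1] for i in range(0, len(image), 2)]


def null_space_part(vector):
    """Return the part of `vector` that the toy scanner cannot see.

    Subtracting each pair's mean leaves a component whose pair sums are
    zero, so it produces no signal in the measurements.
    """
    out = []
    for i in range(0, len(vector), 2):
        mean = (vector[i] + vector[i + 1]) / 2
        out += [vector[i] - mean, vector[i + 1] - mean]
    return out


def hallucination_map(reconstruction, truth):
    """Reconstruction error restricted to what the data could not reveal."""
    error = [r - t for r, t in zip(reconstruction, truth)]
    return null_space_part(error)


# Simulated ground truth and a reconstruction that invents contrast
# inside the second pixel pair.
truth = [1.0, 1.0, 2.0, 2.0, 0.0, 0.0]
reconstruction = [1.0, 1.0, 3.0, 1.0, 0.0, 0.0]

# Both images are consistent with the same raw data...
same_data = measure(truth) == measure(reconstruction)

# ...so the invented contrast can only have come from the algorithm's assumptions.
hmap = hallucination_map(reconstruction, truth)
```

In this toy case the two images yield identical measurements, and the map is nonzero only at the two pixels where the reconstruction added structure that the data never supported.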

The research team tested their approach against three different image-reconstruction models through numerous simulations. The results, which show that the new mapping technique is effective at detecting hallucinations, are described in a study published in November in IEEE Transactions on Medical Imaging.

As new data-driven image-reconstruction algorithms are designed, the hallucination map can be used to find and analyze the errors they introduce, ultimately helping researchers identify and build more accurate methods for reconstructing medical images.

Anastasio notes that, although the hallucination map helps identify the existence of false structures in medical images, it does not tell us how harmful—or even useful—these structures are with respect to a given diagnosis. “Extending the proposed hallucination maps to incorporate such information is an exciting topic for future study,” he says.

Michelle Hampson is a freelance writer based in Halifax. She frequently contributes to Spectrum's Journal Watch coverage, which highlights newsworthy studies published in IEEE journals.