Engineers at Purdue University and the University of California at Berkeley have discovered that a simple combination of commonly available devices creates a computing element that could lead to some truly strange circuits: logic that can run in reverse, performing its own inverse operation. Some important aspects of modern computing, notably encryption, depend on there being a significant difference in difficulty between multiplication and its inverse, factoring. But the engineers are cautious about the technology’s potential for code breaking. They describe the device in this month’s issue of IEEE Electron Device Letters.

Purdue electrical engineering and computer science professor Supriyo Datta and his colleagues had been exploring new ways of computing using simulated networks of devices that have a “tunable” randomness. That is, the devices output a string of random bits but can be tuned to produce more of one bit value than the other. Such a device, which they called a p-bit, could then represent a probability, say 40 percent, as a bit string of any length, so long as roughly 40 of every 100 bits are 1s.
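A minimal sketch of the encoding idea, assuming an ideal software random-number generator stands in for the device: the hypothetical helper `p_bit_stream` emits bits that are 1 with probability `p`, and `decode` recovers the probability by counting 1s. Nothing here models the physical p-bit; it only illustrates how a bit string can carry a probability.

```python
import random


def p_bit_stream(p, n, seed=None):
    """Return n random bits, each equal to 1 with probability p.

    A software stand-in for a tunable-randomness device (hypothetical helper).
    """
    rng = random.Random(seed)
    return [1 if rng.random() < p else 0 for _ in range(n)]


def decode(bits):
    """Recover the encoded probability as the fraction of bits that are 1."""
    return sum(bits) / len(bits)


stream = p_bit_stream(0.4, 10_000, seed=1)
# Each 100-bit window holds *about* 40 ones, not exactly 40.
window_counts = [sum(stream[i:i + 100]) for i in range(0, len(stream), 100)]
print(decode(stream))                          # near 0.4; exact value depends on the seed
print(min(window_counts), max(window_counts))  # spread around 40
```

Longer streams pin the estimate down more tightly; the bit string itself never has to be any particular length.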

Many computing problems can be streamlined by computing with probabilities; Datta’s group showed their use in solving the traveling salesman problem and other important problems of optimization and inference. But Datta, postdoctoral researcher Kerem Yunus Camsari, and some colleagues discovered something new about p-bits: They can be combined into logic and arithmetic circuits that also perform their inverse operations. Adders can subtract, multipliers can factor, and potentially stranger things can happen.
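To make the “multipliers can factor” idea concrete, here is a toy sketch, not the group's circuit. It assumes a penalty energy E(a, b) = (a·b − N)², whose zero-energy states are exactly the factor pairs of N, and resamples the bits of a and b one at a time with the product held (clamped) at N. Each bit update uses a p-bit-style rule: the bit becomes 1 with probability (1 + tanh I)/2, where I = β(E₀ − E₁)/2 compares the energies with that bit at 0 and at 1. This is ordinary Gibbs sampling; the function name `factor_by_sampling`, the inverse temperature `beta`, and the energy function are all illustrative assumptions.

```python
import math
import random


def factor_by_sampling(N, nbits=2, beta=1.0, sweeps=20_000, seed=0):
    """Gibbs-sample the bits of a and b with the product clamped to N.

    Energy (an illustrative penalty, not a gate-level multiplier):
    E(a, b) = (a * b - N) ** 2, so exact factor pairs have E = 0.
    Returns how often each (a, b) pair was visited.
    """
    rng = random.Random(seed)
    # Free bits: a's bits (least significant first), then b's bits.
    bits = [rng.randint(0, 1) for _ in range(2 * nbits)]

    def unpack(bs):
        a = sum(bit << k for k, bit in enumerate(bs[:nbits]))
        b = sum(bit << k for k, bit in enumerate(bs[nbits:]))
        return a, b

    def energy(bs):
        a, b = unpack(bs)
        return (a * b - N) ** 2

    counts = {}
    for _ in range(sweeps):
        for i in range(len(bits)):
            bits[i] = 0
            e0 = energy(bits)
            bits[i] = 1
            e1 = energy(bits)
            # p-bit-style update: P(bit = 1) = (1 + tanh(I)) / 2
            # = 1 / (1 + exp(beta * (e1 - e0))), the Gibbs conditional.
            I = beta * (e0 - e1) / 2
            bits[i] = 1 if math.tanh(I) > rng.uniform(-1.0, 1.0) else 0
        pair = unpack(bits)
        counts[pair] = counts.get(pair, 0) + 1
    return counts


counts = factor_by_sampling(6)
best = max(counts, key=counts.get)
print(best)  # the most frequently visited (a, b) pair
```

With the defaults, the most frequently visited pair should be (2, 3) or (3, 2). For larger numbers, single-bit updates can get trapped in local minima of this energy, so treat the sketch as an illustration of running multiplication backward rather than as a practical factoring method.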

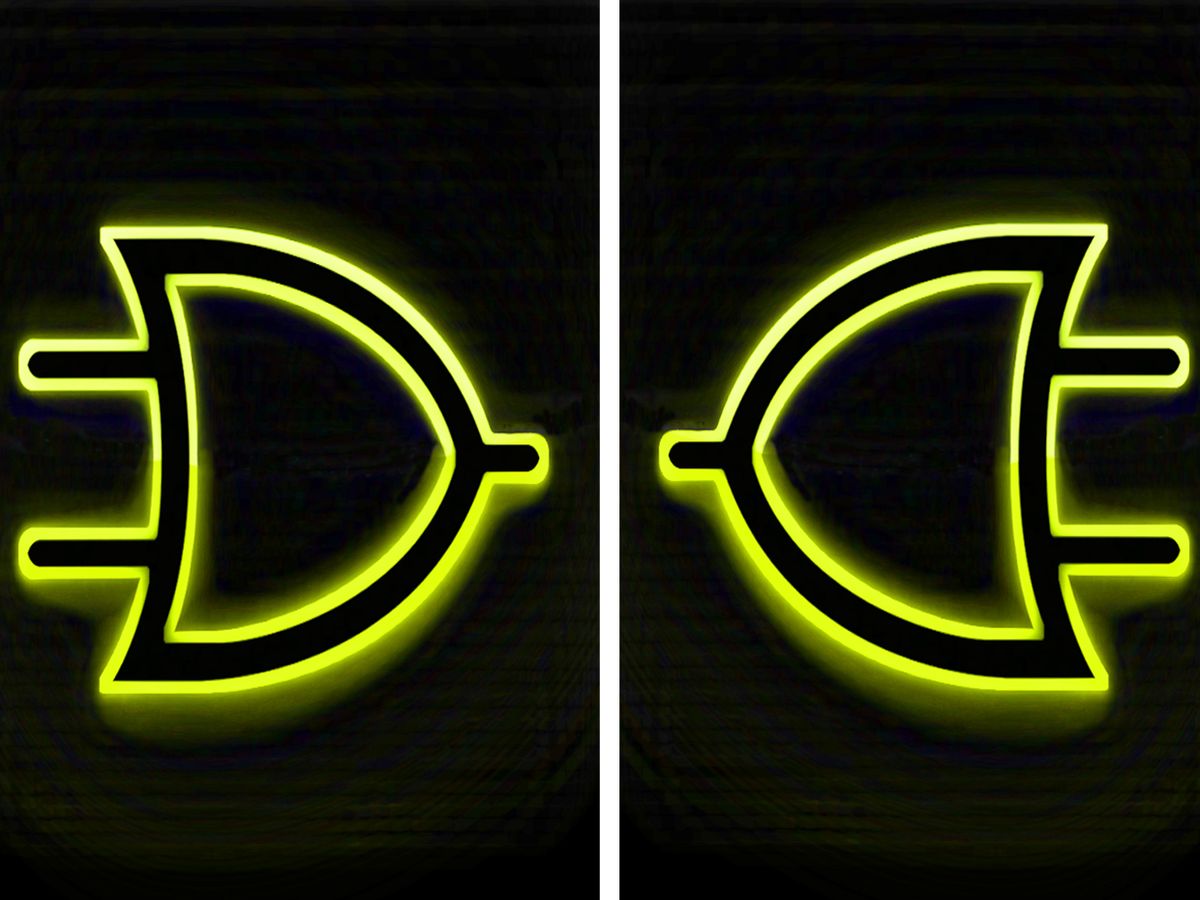

The inputs to such a logic gate would be random streams of bits, but the gate would still obey its logic: Whenever a two-input AND gate saw a 1 on both inputs, it would output a 1, and any other combination would produce a 0. What p-bit-based gates can do that others can’t is run in reverse. Feed a stream of random bits into what was the AND gate’s output, and its inputs will produce two random streams that satisfy the AND gate’s logic: Whenever the output is 1, both inputs produce a 1. But whenever it is 0, the inputs produce a random sequence of 00, 01, and 10.
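The forward and reverse behavior of the AND gate can be sketched with three simulated p-bits A, B, and C. The sketch assumes a formulation of the kind used in p-bit simulations: each p-bit holds +1 (logic 1) or −1 (logic 0) and becomes +1 with probability (1 + tanh I)/2, where its input I = β(Σ J·m + h) sums weighted contributions from the other p-bits. The weights `J`, biases `h`, and `beta` below are an illustrative choice, not published values; they simply give the four rows of the AND truth table the lowest energy. Clamping A and B runs the gate forward; clamping C runs it in reverse.

```python
import math
import random

# Illustrative weights (an assumption): with m = +1/-1 for nodes [A, B, C],
# E(m) = -sum_{i<j} J[i][j]*m_i*m_j - sum_i h[i]*m_i equals -3 on the four
# AND truth-table rows and is at least +1 on every other state.
J = [[0, -1, 2],
     [-1, 0, 2],
     [2, 2, 0]]
h = [1, 1, -2]


def sample_and_gate(clamped, beta=2.0, sweeps=20_000, seed=0):
    """Update the unclamped p-bits repeatedly; count visited (A, B, C) states.

    `clamped` maps a node index (0=A, 1=B, 2=C) to a fixed bit (0 or 1).
    """
    rng = random.Random(seed)
    m = [rng.choice((-1, 1)) for _ in range(3)]
    for i, bit in clamped.items():
        m[i] = 1 if bit else -1
    counts = {}
    for _ in range(sweeps):
        for i in range(3):
            if i in clamped:
                continue
            # Local input to p-bit i, scaled by the inverse temperature beta.
            I = beta * (sum(J[i][j] * m[j] for j in range(3)) + h[i])
            # p-bit rule: output +1 with probability (1 + tanh(I)) / 2.
            m[i] = 1 if math.tanh(I) > rng.uniform(-1.0, 1.0) else -1
        state = tuple((x + 1) // 2 for x in m)  # map +1/-1 back to 1/0
        counts[state] = counts.get(state, 0) + 1
    return counts


print(sample_and_gate({0: 1, 1: 1}))  # forward: inputs clamped to A = B = 1
print(sample_and_gate({2: 0}))        # reverse: output clamped to C = 0
```

In the forward run nearly every sample should be (1, 1, 1). In the reverse run with C held at 0, the counts should split roughly evenly among (0, 0, 0), (0, 1, 0), and (1, 0, 0), with (1, 1, 0) rare, which is the random mix of 00, 01, and 10 on the inputs.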

The problem was this: there was no such thing as a p-bit. “Six months ago I’d have said we’re not close to building even one, because we’d have to build a whole new device,” says Datta.

Sayeef Salahuddin at UC Berkeley suggested to Datta and Camsari that a magnetic RAM cell combined with a transistor might be the solution. Datta and Camsari have now shown, in simulation, that it is. Exactly that combination of components recently became available on processors and systems-on-a-chip from GlobalFoundries and TSMC.

An MRAM cell is basically a two-terminal nanoscale device called a magnetic tunnel junction. It’s made up of two ferromagnetic layers sandwiching a thin non-ferromagnetic layer. If the two magnetic layers are magnetized in the same direction, current can tunnel across the junction with little resistance. If they point in opposite directions, the resistance rises sharply. MRAM stores a bit by keeping one layer’s orientation fixed and making the other switchable by a particular type of current, a spin-polarized one. MRAM cells have now been engineered so well that the switchable layer will stay stable for a decade or more.
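How stable a magnetic layer is can be estimated with the Néel-Arrhenius relation τ = τ₀·exp(Δ), where Δ is the energy barrier between the two magnetic orientations in units of kT and τ₀ is an attempt time. The sketch below assumes τ₀ = 1 ns, a commonly used order-of-magnitude value, and ignores everything else about a real cell (temperature variation, array size, read and write disturbance). The helper names are hypothetical.

```python
import math

TAU0_S = 1e-9  # assumed attempt time of about 1 ns (order-of-magnitude value)
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def retention_time_s(delta_kT, tau0_s=TAU0_S):
    """Neel-Arrhenius estimate of the mean time between thermal flips, in seconds."""
    return tau0_s * math.exp(delta_kT)


def barrier_for(target_s, tau0_s=TAU0_S):
    """Barrier height (in units of kT) that gives the requested mean retention."""
    return math.log(target_s / tau0_s)


ten_years = 10 * SECONDS_PER_YEAR
print(f"barrier for 10-year retention: {barrier_for(ten_years):.1f} kT")
for delta in (0, 10, 20, 40, 60):
    print(f"delta = {delta:2d} kT -> mean flip time {retention_time_s(delta):.3g} s")
```

Under these assumptions, a barrier of roughly 40 kT gives a mean flip time of about ten years, while letting Δ fall toward zero shrinks the flip time toward τ₀, about a nanosecond. That low-barrier limit is the regime a deliberately unstable magnet would need for gigahertz-rate fluctuations.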

To make a p-bit, however, you essentially need a bad MRAM cell. Instead of requiring a high current to flip the magnetic layer, you make the layer so unstable that random thermal fluctuations knock its magnetization back and forth at gigahertz rates. The transistor in the p-bit pulls the output toward the 1 or 0 state as needed, tuning the randomness to encode a probability.
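One way to model the tunable randomness, assuming a behavioral description of the kind used in p-bit simulations rather than any physics of the magnet or transistor: the output is m = sgn[tanh(I) − r], with r uniform on [−1, 1] and I an input standing in for the transistor’s drive. Reading m = +1 as 1 and m = −1 as 0, the output is 1 with probability (1 + tanh I)/2, so its average is tanh(I). The names `p_bit` and `mean_output` are hypothetical.

```python
import math
import random


def p_bit(I, rng):
    """One update of a behavioral p-bit: returns +1 (logic 1) or -1 (logic 0).

    r is uniform on [-1, 1], so P(+1) = (1 + tanh(I)) / 2 and the
    long-run average output equals tanh(I).
    """
    r = rng.uniform(-1.0, 1.0)
    return 1 if math.tanh(I) > r else -1


def mean_output(I, n=50_000, seed=0):
    """Average many p-bit updates at a fixed input I."""
    rng = random.Random(seed)
    return sum(p_bit(I, rng) for _ in range(n)) / n


for I in (-2.0, -0.5, 0.0, 0.5, 2.0):
    print(f"I = {I:+.1f}: measured <m> = {mean_output(I):+.3f}, tanh(I) = {math.tanh(I):+.3f}")

# Input that makes the output 1 about forty percent of the time.
I_40 = math.atanh(2 * 0.4 - 1)
print(f"I for 40 percent ones: {I_40:.3f}")
```

To encode a 40 percent probability, the input would be I = atanh(2 × 0.4 − 1) ≈ −0.203. Large positive or negative inputs pin the output near 1 or 0, which is the “pulling” role described for the transistor.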

Datta’s group hasn’t built p-bits yet, but they’ve simulated them and are seeking access to a foundry that can do the job.

Samuel K. Moore is the senior editor at IEEE Spectrum in charge of semiconductors coverage. An IEEE member, he has a bachelor's degree in biomedical engineering from Brown University and a master's degree in journalism from New York University.