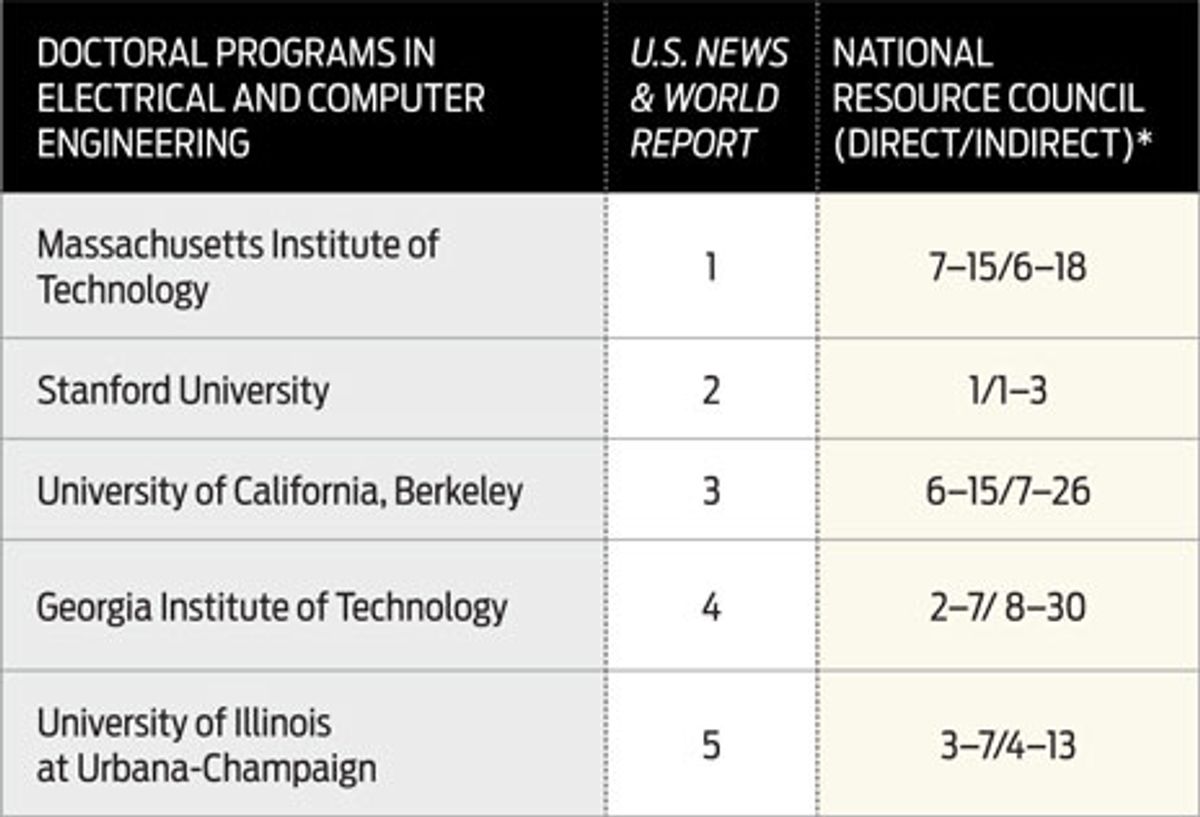

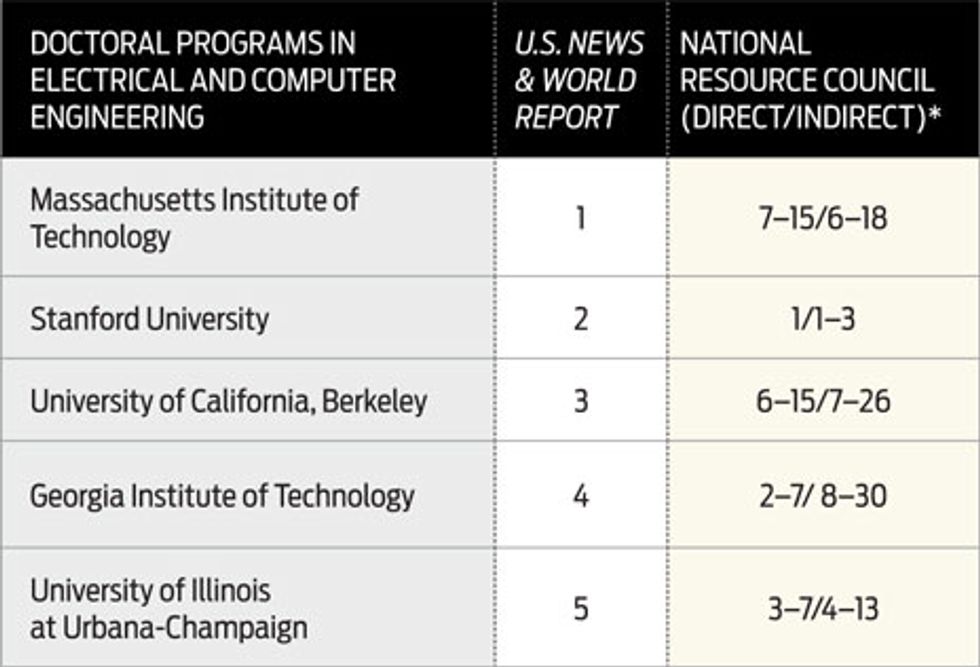

* For the direct ranking, faculty raters assign numerical weights directly. In the indirect method, rankings are derived from faculty evaluations of a graduate program's characteristics.

NOTE: U.S. News rankings were for the 2010–11 academic year. The NRC data concern 2005–06.

In an article published by The New Yorker in February, Malcolm Gladwell rips apart college rankings for their subjective, and even arbitrary, criteria. "Who comes out on top," he concludes, "is really about who is doing the ranking."

Gladwell would surely be thrilled by the National Research Council's assessment of U.S. doctoral programs, published last September, which acknowledges the uncertainty of its results in the very way it presents them. Instead of ranking the programs No. 1, 2, 3, and so on, it merely puts each one within a range.

For example, the Massachusetts Institute of Technology's electrical and computer engineering program falls somewhere between 6th and 18th place among the 50 such programs the report considers. "What this tells people is that it's not an exact science and that there is a great deal of variability built into the process," says James Voytuk, a senior program officer at the NRC.

By contrast, MIT is consistently No. 1 in the rankings done by U.S. News & World Report, the target of much of Gladwell's ire. In many cases, the NRC's rankings depart not just from U.S. News but from conventional wisdom. MIT's electrical and computer engineering program isn't even in the top 5 of the NRC's rankings, while those of Princeton University and the University of California, Santa Barbara—which don't even make U.S. News' top 10—are.

The NRC's factors—there are 20 in all—include characteristics of the faculty (such as number of publications and citations), students (average GRE, percentage with full financial support), and the program in general (diversity, average time to degree, student activities offered). The data come from responses to questionnaires sent to faculty, administrators, and students about the 2005–2006 academic year. A typical ranking method would normalize these values, multiply them by a weight, and add them together to get a score. But the NRC's computation is much more sophisticated.
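The "typical" method described above can be sketched in a few lines of Python. This is only an illustration of the normalize, weight, and sum recipe, not the NRC's or U.S. News' actual code; the factor names, values, and weights are made up, and standardizing to z-scores is just one of several possible normalizations.

```python
from statistics import mean, pstdev

def standardize(values):
    """Rescale one factor across programs to mean 0, standard deviation 1."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]  # factor is identical everywhere
    return [(v - mu) / sigma for v in values]

def weighted_scores(factors, weights):
    """factors: {name: [value per program]}; weights: {name: weight}."""
    n_programs = len(next(iter(factors.values())))
    scores = [0.0] * n_programs
    for name, values in factors.items():
        for i, z in enumerate(standardize(values)):
            scores[i] += weights[name] * z
    return scores

# Hypothetical data for three programs (indices 0, 1, 2).
factors = {
    "publications_per_faculty": [2.0, 4.0, 6.0],
    "average_gre": [700.0, 760.0, 730.0],
}
weights = {"publications_per_faculty": 0.6, "average_gre": 0.4}

scores = weighted_scores(factors, weights)
# Program indices ordered from highest score to lowest.
ranking = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
```

A scheme like this produces a single ordered list, which is exactly the kind of false precision the NRC set out to avoid.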

For one, it takes into account that the numbers fluctuate year to year. Its algorithm takes the mean and standard deviation, creates a Gaussian probability distribution, and then randomly generates a sample of 500 values from the distribution. This represents the data set that would result if the questionnaire were repeated 500 times.
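As a rough sketch of that resampling step, assuming a single factor whose mean and standard deviation are already known: the function name and the numbers below are hypothetical, and the article does not say how the NRC estimated those two quantities.

```python
import random

def simulate_factor(mean_value, std_dev, n_draws=500, seed=None):
    """Draw n_draws plausible values for one factor from a Gaussian."""
    rng = random.Random(seed)  # a seed makes the draws reproducible
    return [rng.gauss(mean_value, std_dev) for _ in range(n_draws)]

# Hypothetical factor: 3.2 publications per faculty member, give or take 0.4.
draws = simulate_factor(3.2, 0.4, seed=0)
```

Each of the 500 draws stands in for the value that factor might have taken had the questionnaire been run again.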

It also uses two methods to weight the 20 factors. One method involves directly asking faculty raters to assign numerical weights. The other is implicit, inferring the weights from the faculty evaluations of the programs. This yields two sets of ranking ranges, which can differ considerably for the same school [see table, "Range Finder"].

Finally, the team also incorporates the uncertainty in the weights themselves by applying the "random-halves" technique. For each of the 20 factors, the algorithm takes a random subset of weights assigned by half the faculty raters and computes the average. It repeats this process 500 times.
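A minimal sketch of the random-halves idea for one factor, assuming the raters' weights are available as a plain list. The function name and the sample weights are invented for illustration; the NRC's own implementation may differ in its details.

```python
import random

def random_halves_weights(rater_weights, n_repeats=500, seed=None):
    """Average the weights from a random half of the raters, n_repeats times."""
    half = len(rater_weights) // 2
    if half < 1:
        raise ValueError("need at least two raters")
    rng = random.Random(seed)
    return [
        sum(rng.sample(rater_weights, half)) / half  # sample without replacement
        for _ in range(n_repeats)
    ]

# Hypothetical weights that eight raters gave to a single factor.
rater_weights = [0.10, 0.12, 0.08, 0.15, 0.11, 0.09, 0.13, 0.10]
factor_weights = random_halves_weights(rater_weights, seed=1)
```

The spread among the 500 averages reflects how much the weight depends on which raters happened to be asked.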

In the end, each Ph.D. program has a set of 500 different rankings. The NRC discards the lowest and highest 5 percent and reports the remaining range. "What we said was maybe there's some outliers," Voytuk says. "That's the most honest way of doing it."
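The final trimming step might look like the following sketch. It assumes "lowest and highest 5 percent" means 5 percent is cut from each end of the sorted list, and the sample rankings are fabricated purely to show the mechanics.

```python
def reported_range(rankings, trim_percent=5):
    """Drop trim_percent of rankings from each end and return (best, worst)."""
    ordered = sorted(rankings)
    k = len(ordered) * trim_percent // 100  # number to cut from each end
    kept = ordered[k:len(ordered) - k]
    return kept[0], kept[-1]

# Fabricated example: 500 simulated rankings spread evenly over places 1 to 20.
rankings = [1 + i % 20 for i in range(500)]
low, high = reported_range(rankings)
```

With 500 rankings, 25 are discarded at each end, so here the reported range runs from 2nd to 19th place rather than 1st to 20th.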

U.S. News' college rankings are clean, simple lists placing academic institutions in order. But, as Geoff Davis, a Google senior researcher and founder of PhDs.org, points out, that's exactly their problem. "[They're] not very nuanced," he says. "If your needs are exactly the same as Robert Morse's, then by all means U.S. News is right." Morse is the man behind the U.S. News rankings.

Is having more data necessarily better? Looking at two sets of rankings with ranges does not make deciding on a grad school easy. But, says Davis, it's a tough decision.

The PhDs.org website uses the NRC data in its Grad School Guide. The guide lets students choose attributes that are important to them, assign weights to these factors, and create a customized list of rankings. "The key premise is there's no best program; there's a best program for you," Davis says. "It's kind of like getting married. There's no best spouse; there's just a best spouse for you."

About the Author

Prachi Patel-Predd is an IEEE Spectrum Contributing Editor who regularly writes about careers, energy, and the environment. In January she wrote “The Other MEMs: The Master of Engineering Management Degree.”