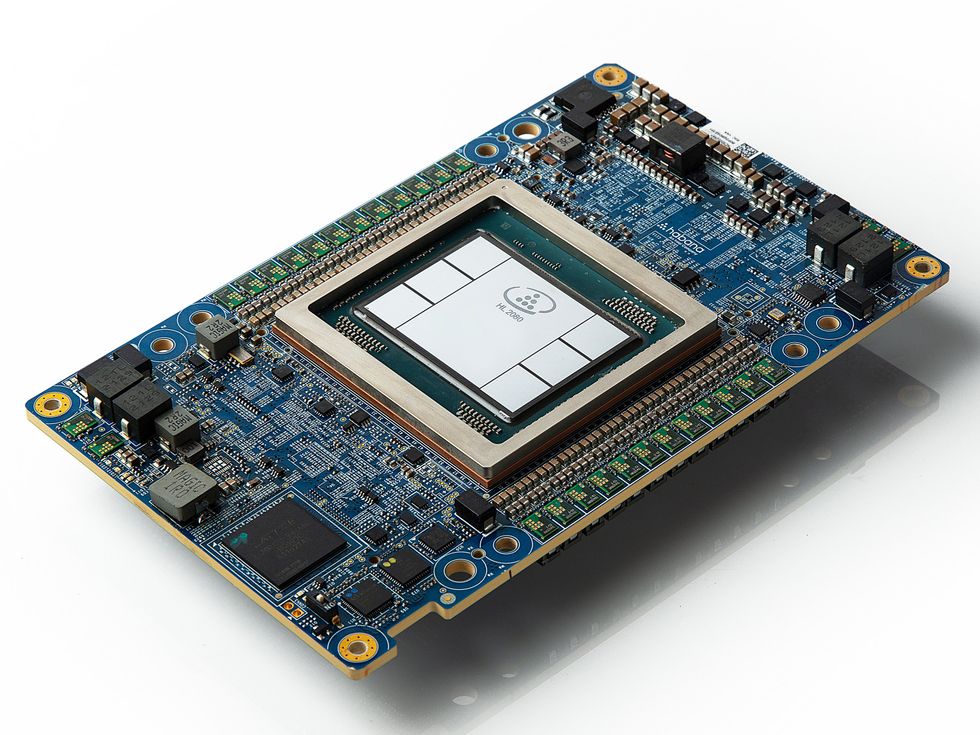

For the first time, a large language model, a key driver of recent AI hype and hope, has been added to MLPerf, a set of neural-network training benchmarks that have previously been called the Olympics of machine learning. Computers built around Nvidia’s H100 GPU and Intel’s Habana Gaudi2 chips were the first to be tested on how quickly they could perform a modified training run of GPT-3, the large language model behind ChatGPT.

A 3,584-GPU computer run as a collaboration between Nvidia and cloud provider CoreWeave performed this task in just under 11 minutes. The smallest entrant, a 256-Gaudi2 system, did it in a little over 7 hours. On a per-chip basis, H100 systems were 3.6 times as fast at the task as Gaudi2. However, the Gaudi2 computers were operating “with one hand tied behind their back,” says Jordan Plawner, senior director of AI products at Intel, because a capability called mixed precision has not yet been enabled on the chips.

Computer scientists have found that for GPT-3’s type of neural network, called a transformer network, training can be greatly accelerated by doing parts of the process using less-precise arithmetic. Versions of 8-bit floating point numbers (FP8) can be used in certain layers of the network, while more precise 16-bit or 32-bit numbers are needed in others. Figuring out which layers are which is the key. Both H100 and Gaudi2 were built with mixed-precision hardware, but it’s taken time for each company’s engineers to discover the right layers and enable them. Nvidia’s system in the H100 is called the transformer engine, and it was fully engaged for the GPT-3 results.
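To give a rough feel for what "less-precise arithmetic" means, here is a minimal Python sketch that rounds a number to the significand width of three formats. The mantissa widths (3 bits for the E4M3 flavor of FP8, 10 for FP16, 23 for FP32) are standard, but everything else is an assumption made for illustration: the helper names are hypothetical, exponent range is ignored, and the layer-to-format plan is invented rather than taken from Nvidia's or Habana's software.

```python
import math

# Explicit mantissa bits per format; sign and exponent bits are not modeled.
MANTISSA_BITS = {"fp8_e4m3": 3, "fp16": 10, "fp32": 23}

def round_to_format(x: float, fmt: str) -> float:
    """Round x to the significand precision of fmt (exponent range ignored)."""
    if x == 0.0:
        return 0.0
    bits = MANTISSA_BITS[fmt]
    mantissa, exponent = math.frexp(x)   # x == mantissa * 2**exponent, 0.5 <= |mantissa| < 1
    scale = 2 ** (bits + 1)              # +1 accounts for the implicit leading bit
    return math.ldexp(round(mantissa * scale) / scale, exponent)

def relative_error(x: float, fmt: str) -> float:
    """Relative rounding error introduced by storing x in fmt."""
    return abs(round_to_format(x, fmt) - x) / abs(x)

# Hypothetical plan: which layers tolerate FP8 is an assumption, not a published recipe.
LAYER_PRECISION = {
    "attention_matmul": "fp8_e4m3",
    "layer_norm": "fp16",
    "loss": "fp32",
}

value = 1.1
for layer, fmt in LAYER_PRECISION.items():
    stored = round_to_format(value, fmt)
    print(f"{layer:18s} {fmt:9s} stores {stored!r} (rel. error {relative_error(value, fmt):.2e})")
```

The coarser the format, the larger the rounding error on each value, which is why the choice of layers matters: the speedup is only useful where the network can absorb that error.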

Habana engineers will have Gaudi2’s FP8 capabilities ready for GPT-3 training in September, says Plawner. At that point, he says, Gaudi2 will be “competitive” with H100, and he expects Gaudi2 to beat H100 on the combination of price and performance. Gaudi2, for what it’s worth, is made using the same process technology—7 nanometers—as the H100’s predecessor, the A100.

Making GPT-3 work

Large language models “and generative AI have fundamentally changed how AI is used in the market,” says Dave Salvator, Nvidia’s director of AI benchmarking and cloud computing. So finding a way to benchmark these behemoths was important.

But turning GPT-3 into a useful industry benchmark was no easy task. A complete training of the full 175-billion-parameter network with an entire training dataset could take weeks and cost millions of dollars. “We wanted to keep the runtime reasonable,” says David Kanter, executive director of MLPerf’s parent organization, MLCommons. “But this is still far and away the most computationally demanding of our benchmarks.” Most of the benchmark networks in MLPerf can be run on a single processor, but GPT-3 takes 64 at a minimum, he says.

Instead of training on an entire dataset, participants trained on a representative portion. And they did not train to completion, or convergence, in industry parlance. Instead, the systems trained to a point that indicated further training would lead to convergence.

Figuring out that point, the right fraction of data, and other parameters so that the benchmark is representative of the full training task took “a lot of experiments,” says Ritika Borkar, senior deep-learning architect at Nvidia and chair of the MLPerf training working group.

On Twitter, Abhi Venigalla, a research scientist at MosaicML, estimated that Nvidia and CoreWeave’s 11-minute record would scale up to about two days of full-scale training.
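Taking those two rounded figures at face value, a few lines of Python show what the estimate implies. This is a back-of-the-envelope sketch, not Venigalla's own calculation: it assumes the same system and perfectly linear scaling from the benchmark to a full run.

```python
benchmark_minutes = 11        # "just under 11 minutes", rounded
full_training_days = 2        # the estimate quoted above, rounded

full_training_minutes = full_training_days * 24 * 60
scale_factor = full_training_minutes / benchmark_minutes
implied_fraction = benchmark_minutes / full_training_minutes

print(f"full run is about {scale_factor:.0f} times the benchmark")
print(f"benchmark is about {implied_fraction:.2%} of a full run")
```

In other words, under these assumptions the benchmark exercises well under 1 percent of the work of a complete GPT-3 training run.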

H100 training records

This round of MLPerf wasn’t just about GPT-3, of course; the contest consists of seven other benchmark tests: image recognition; medical-imaging segmentation; two versions of object detection; speech recognition; natural-language processing; and recommendation. Each computer system is evaluated on the time it takes to train the neural network on a given dataset to a particular accuracy. They are placed into three categories: cloud-computing systems, available on-premises systems, and preview systems, which are scheduled to become available within six months.
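The time-to-train idea can be sketched in a few lines of Python. This is an illustration only, not MLPerf's reference harness: the function `time_to_target`, the `ToyModel` class, and the loss threshold are all hypothetical, and a one-weight toy problem stands in for a neural network. The target here is a loss to get under; a benchmark scored on accuracy would flip the comparison.

```python
import time
from typing import Callable, Tuple

def time_to_target(train_step: Callable[[], None],
                   evaluate: Callable[[], float],
                   target_loss: float,
                   max_steps: int) -> Tuple[int, float, bool]:
    """Train until evaluate() <= target_loss; return (steps, seconds, reached)."""
    start = time.perf_counter()
    for step in range(1, max_steps + 1):
        train_step()
        if evaluate() <= target_loss:
            return step, time.perf_counter() - start, True
    return max_steps, time.perf_counter() - start, False

class ToyModel:
    """Stand-in for a network: one weight w, trained to minimize (w - 3)^2."""
    def __init__(self) -> None:
        self.w = 0.0

    def train_step(self, lr: float = 0.1) -> None:
        self.w -= lr * 2.0 * (self.w - 3.0)   # one gradient-descent update

    def loss(self) -> float:
        return (self.w - 3.0) ** 2

model = ToyModel()
steps, seconds, reached = time_to_target(model.train_step, model.loss,
                                         target_loss=1e-3, max_steps=1000)
print(f"reached={reached} after {steps} steps in {seconds:.6f} s")
```

The clock stops the moment the quality target is met, so the score rewards systems that get there fastest rather than those that train longest.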

For these other benchmarks, Nvidia was largely involved in a proxy fight against itself. Most of the entrants were from system makers such as Dell, Gigabyte, and the like, but they nearly all used Nvidia GPUs. Eighty of 88 entries were powered by them, and about half of those used the H100, a chip made using Taiwan Semiconductor Manufacturing Co.’s 5-nanometer process that went to customers in the fourth quarter of 2022. Either Nvidia computers or those of CoreWeave set the records for each of the eight categories.

In addition to adding GPT-3, MLPerf significantly upgraded its recommender system test to a benchmark called DLRM DCN-V2. “Recommendation is really a critical thing for the modern era, but it’s often an unsung hero,” says Kanter. Because of the risk surrounding identifiable personal information in the dataset, “recommendation is in some ways the hardest thing to make a benchmark for,” he says.

The new DLRM DCN-V2 is meant to better match what industry is using, he says. It requires five times the memory operations, and the network is similarly more computationally complex. The size of the dataset it’s trained on is about four times as large as the 1 terabyte its predecessor used.

The full results are available from MLCommons.

Samuel K. Moore is the senior editor at IEEE Spectrum in charge of semiconductors coverage. An IEEE member, he has a bachelor's degree in biomedical engineering from Brown University and a master's degree in journalism from New York University.