An update to IBM’s quantum-computing road map now says the company is on course to build a 4,000-qubit machine by 2025. But getting the device to do anything useful will require the development of a powerful new software stack that will help manage errors, share the load with classical hardware, and simplify the programming process.

Since IBM first unveiled its quantum hardware plans in 2020, the company has largely kept to the schedule; the most recent milestone to come to fruition was the release of the company’s 127-qubit Eagle processor last November. The first iteration of the road map topped out with the 1,121-qubit Condor processor, which is scheduled for release in 2023, but now the company has revealed plans for a 1,386-qubit processor, called Flamingo, to appear in 2024 and for a 4,158-qubit device called Kookaburra to make its debut in 2025.

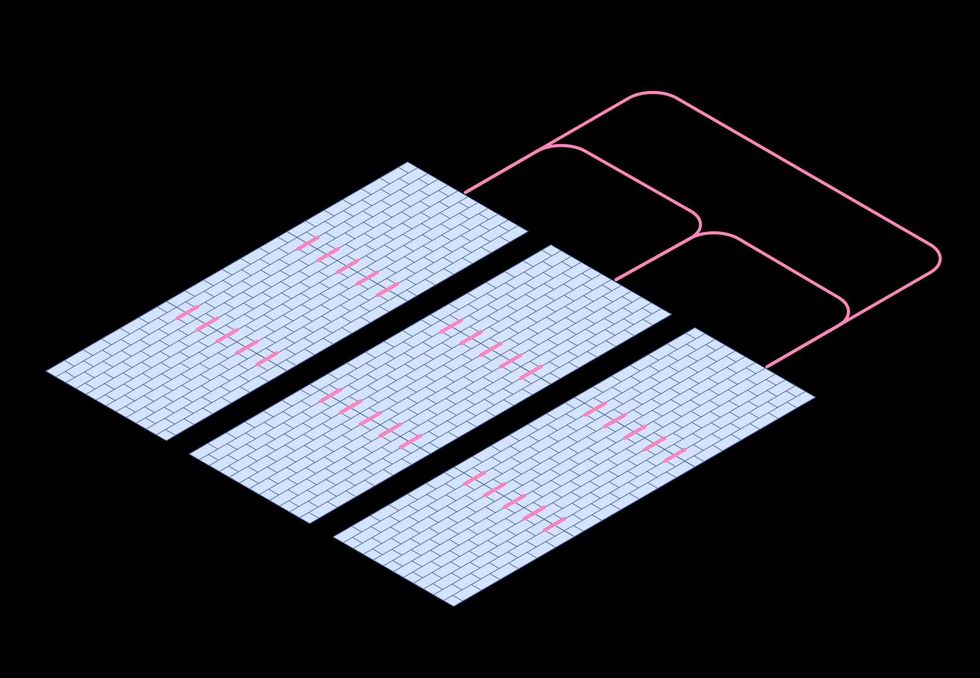

Key to building these devices will be a new modular architecture in which multiple chips are connected to create a single large processor. This will be made possible by new short-range couplers, which allow communication between qubits on adjacent chips, and cryogenic microwave cables, which allow longer-range connections between different processors.

But quantum hardware is error-prone and fiendishly complex, so simply wiring large numbers of qubits together doesn’t necessarily mean you can do much practical work with them. IBM thinks the key to tapping the power of these extra qubits will be an “intelligent software layer” that augments its quantum chips with classical tools that can help manage noise and amplify their processing power.

“We believe that classical resources can really enhance what you can do with quantum and get the most out of that quantum resource,” says Blake Johnson, IBM’s quantum platform lead. “And so we need to build tooling, an orchestration layer if you will, that can allow quantum and classical computations to work together in a seamless way.”

The most fundamental challenge for quantum hardware remains its inherent noisiness, and Johnson says there is “a furious amount of research activity” going on to scale up error-mitigation techniques. So far, most innovations have been tested only on smaller systems. But Johnson says early results suggest that an approach known as probabilistic error cancellation will work on devices the size of those envisioned by IBM at the end of its quantum road map. The technique involves deliberately running noisy versions of quantum circuits to learn the contours of that noise, then using postprocessing on classical computers to reduce the level of error in answers.
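To make the idea concrete, here is a minimal sketch of probabilistic error cancellation on a single qubit, tracked as a Bloch vector in plain Python. It is not IBM's implementation. It assumes the noise is a simple depolarizing channel of strength `p`, and every function name is hypothetical. The sketch first "learns" how much the noise shrinks a known state, then builds weights (some of them negative) for Pauli-corrected runs whose classical combination undoes that shrinkage.

```python
# Toy probabilistic error cancellation (PEC) on one qubit, tracked as a Bloch vector.
# Assumption: the noise is a depolarizing channel of strength p. Real devices
# need a far richer noise model that has to be learned from experiments.

# How each Pauli correction flips the (x, y, z) components of a Bloch vector.
PAULI_SIGNS = {
    "I": (1, 1, 1),
    "X": (1, -1, -1),
    "Y": (-1, 1, -1),
    "Z": (-1, -1, 1),
}


def apply_pauli(bloch, pauli):
    """Conjugate the state by a Pauli operator."""
    return tuple(s * c for s, c in zip(PAULI_SIGNS[pauli], bloch))


def depolarize(bloch, p):
    """Depolarizing noise shrinks the Bloch vector by 1 - 4p/3."""
    shrink = 1 - 4 * p / 3
    return tuple(shrink * c for c in bloch)


def learn_shrink_factor(p):
    """Calibration run: send |0> (Bloch vector (0, 0, 1)) through the noise, read <Z>."""
    return depolarize((0.0, 0.0, 1.0), p)[2]


def inverse_quasi_probabilities(shrink):
    """Weights for Pauli corrections whose combination inverts the learned noise.

    The weights sum to 1, but the X, Y, and Z weights are negative, so this is
    a quasi-probability distribution rather than an ordinary one.
    """
    b = (1 - 1 / shrink) / 4
    return {"I": 1 - 3 * b, "X": b, "Y": b, "Z": b}


def mitigated_z(bloch, p):
    """Combine noisy, Pauli-corrected runs classically to estimate the ideal <Z>."""
    coeffs = inverse_quasi_probabilities(learn_shrink_factor(p))
    noisy = depolarize(bloch, p)
    return sum(c * apply_pauli(noisy, name)[2] for name, c in coeffs.items())


ideal = (0.0, 0.8, 0.6)                # ideal state, with <Z> = 0.6
noisy_z = depolarize(ideal, 0.1)[2]    # what the noisy device would report
fixed_z = mitigated_z(ideal, 0.1)      # estimate after classical postprocessing
print(f"noisy <Z> = {noisy_z:.3f}, mitigated <Z> = {fixed_z:.3f}")
```

The sketch evaluates the weighted sum exactly. On real hardware the corrections would instead be sampled at random according to the size of each weight, and the negative weights increase the number of circuit runs needed to reach a given precision.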

Applying these kinds of techniques requires considerable specialist knowledge, though, which limits their usefulness for the average developer. That’s why, from 2024 forward, IBM plans to build error mitigation directly into its Qiskit Runtime software development platform so users can build programs without having to think specifically about how to reduce the noise. “We want these things to be automatic for the user,” says Johnson. “You shouldn’t have to be a quantum control expert in order to get meaningful results out of a quantum computer.”

Beyond suppressing errors, IBM is also planning new software tools designed to speed up users’ applications and help them tackle larger problems. Last month, the company released two “primitives” (predefined basic programs that carry out core quantum operations) that users of Qiskit Runtime can use to build applications. Starting in 2023, IBM will make it possible to run these primitives in parallel across multiple quantum processors, significantly speeding up users’ programs.

IBM’s ambitions also include letting developers build complex programs that combine both classical and quantum elements. That’s because many developers would like to bring quantum capabilities into specific parts of their existing workflows, says Johnson, and also because it could help tackle problems larger than the quantum hardware is capable of solving by itself.

Central to this quantum-classical mashup idea is the concept of “circuit knitting.” This is a family of techniques that split quantum computing problems into chunks that can be run on multiple processors in parallel, then use classical computers to stitch the results back together. In a proof of concept last year, IBM researchers showed that they could use a circuit-knitting approach called entanglement forging to double the size of the quantum system that can be simulated on a given number of qubits.
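The flavor of that stitching can be shown with a toy calculation. The sketch below is not IBM's method. It assumes a two-qubit state of the form cos(θ)|00⟩ + sin(θ)|11⟩, uses hypothetical function names, and computes the expectation value of a two-qubit observable from one-qubit pieces only, which ordinary arithmetic then combines. A direct calculation on the full two-qubit state is included as a check.

```python
import math

# One-qubit basis states and real-valued Pauli matrices.
ZERO, ONE = (1.0, 0.0), (0.0, 1.0)
X = ((0.0, 1.0), (1.0, 0.0))
Z = ((1.0, 0.0), (0.0, -1.0))


def matrix_element(bra, op, ket):
    """<bra|op|ket> for real one-qubit vectors and a real 2x2 matrix."""
    return sum(bra[i] * op[i][j] * ket[j] for i in range(2) for j in range(2))


def knitted_expectation(theta, op_a, op_b):
    """<op_a (x) op_b> assembled from one-qubit pieces.

    Assumption: the state is cos(theta)|00> + sin(theta)|11>, so each term
    is (weight, state of qubit A, state of qubit B).
    """
    terms = [(math.cos(theta), ZERO, ZERO), (math.sin(theta), ONE, ONE)]
    total = 0.0
    for w_n, a_n, b_n in terms:
        for w_m, a_m, b_m in terms:
            # Each factor involves one qubit only; the products and the sum
            # are the classical "stitching" step.
            total += (w_n * w_m
                      * matrix_element(a_n, op_a, a_m)
                      * matrix_element(b_n, op_b, b_m))
    return total


def direct_expectation(theta, op_a, op_b):
    """The same quantity computed on the full two-qubit state, as a check."""
    psi = [math.cos(theta), 0.0, 0.0, math.sin(theta)]  # amplitudes of 00, 01, 10, 11
    total = 0.0
    for i in range(2):
        for k in range(2):
            for j in range(2):
                for m in range(2):
                    total += psi[2 * i + k] * op_a[i][j] * op_b[k][m] * psi[2 * j + m]
    return total


theta = 0.3
print(knitted_expectation(theta, X, X), direct_expectation(theta, X, X))
```

In this sketch the one-qubit matrix elements are computed directly. On hardware they would have to be estimated from measurements on the smaller circuits, which is where the extra classical and quantum work comes in.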

By 2025, the company plans to introduce a toolbox of circuit-knitting methods, which developers will be able to use to build algorithms that make the best of both classical and quantum resources. To support this, IBM will also adopt a “quantum serverless” approach in which developers don’t need to think about what hardware is required to run their code, and quantum or classical resources are provided automatically as needed.

“The serverless model allows the developer to focus on their code, use resources on demand when they need them, and have access to a variety of infrastructure resources without being an expert in the infrastructures themselves,” says Johnson.

The company’s road map predicts that all those things will likely come together by 2025, letting developers start producing full-blown applications for things like machine learning, optimization, and physical simulations.


Edd Gent is a freelance science and technology writer based in Bengaluru, India. His writing focuses on emerging technologies across computing, engineering, energy and bioscience. He's on Twitter at @EddytheGent and email at edd dot gent at outlook dot com. His PGP fingerprint is ABB8 6BB3 3E69 C4A7 EC91 611B 5C12 193D 5DFC C01B.