Robots that deal with fast-moving objects tend to handle them in one of two ways. Way one is to assume that the object is going to keep doing whatever it's been doing, which lets you predict what's going to happen to it without having to work too hard. Way two is to constantly watch what the object is doing and continually update your prediction of what's going to happen to it, which means working very hard. Way one is unreliable because the Universe is unreliable and assumptions are dangerous, and way two is very computationally intensive, which often makes it too slow to feed useful instructions through a controller to a robot.

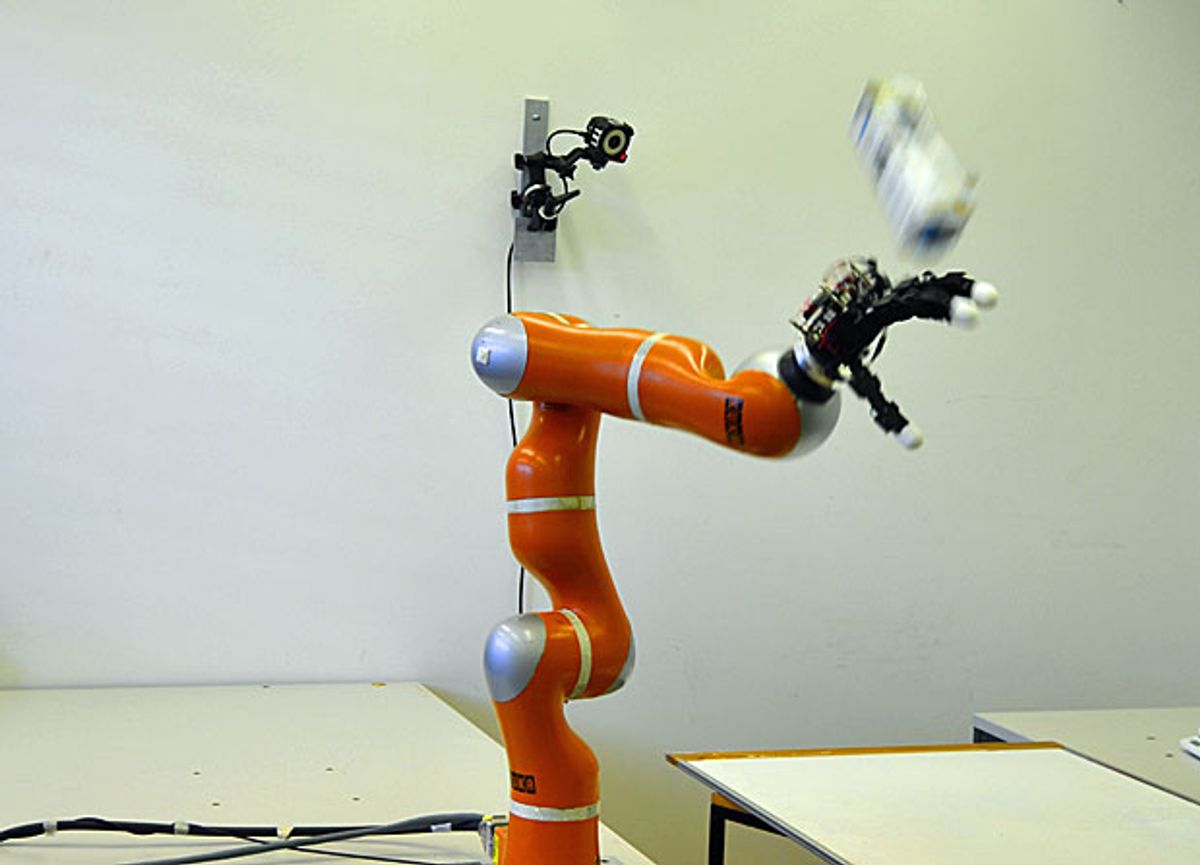

At the Learning Algorithms and Systems Laboratory at EPFL, researchers are combining fast vision, fast computers, fast controllers, fast motors, programming by demonstration, and object modeling to snatch unpredictably unbalanced flying objects straight out of the air.

The most impressive thing here is that the robot is able to catch objects that are both statically and dynamically unbalanced. A baseball is balanced, in that when you throw it, it will (with a few exceptions) follow a predictable trajectory that depends on its speed, its direction, and gravity. It's relatively easy to model. A hammer is statically unbalanced, in that when you throw it, the fact that it's substantially heavier on one end will cause it to tumble somewhat erratically, making it harder to model. And something like a half-full bottle of water is dynamically unbalanced, in that its changing center of gravity (caused by the mass of the water sloshing around inside it) can cause it to spin in all sorts of crazy ways. Have fun trying to model that.
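For the balanced case (the baseball), the model really is about as short as it sounds. Here's a minimal sketch, assuming no air drag and no spin, with gravity pulling straight down along the z axis. The function names, the coordinate frame, and the idea of a vertical "catch plane" at a fixed x position are all made up for illustration; none of this is taken from the EPFL system.

```python
G = 9.81  # gravitational acceleration in m/s^2 (assumed to act along -z)


def time_to_plane(p0, v0, x_catch):
    """Time in seconds until the object crosses the vertical plane x = x_catch.

    p0 and v0 are (x, y, z) tuples: position in meters, velocity in m/s.
    Hypothetical helper: assumes constant horizontal velocity (no drag).
    """
    vx = v0[0]
    if vx == 0:
        raise ValueError("object is not moving toward the catch plane")
    t = (x_catch - p0[0]) / vx
    if t < 0:
        raise ValueError("object is moving away from the catch plane")
    return t


def predict_position(p0, v0, t):
    """Position after t seconds under gravity only (no drag, no spin)."""
    x0, y0, z0 = p0
    vx, vy, vz = v0
    return (
        x0 + vx * t,
        y0 + vy * t,
        z0 + vz * t - 0.5 * G * t * t,  # gravity only affects height
    )


# Example throw: released 1.5 m up, moving 4 m/s forward and 2 m/s upward,
# with a catch plane 2 m away from the release point.
release_position = (0.0, 0.0, 1.5)
release_velocity = (4.0, 0.0, 2.0)
t_catch = time_to_plane(release_position, release_velocity, 2.0)
catch_point = predict_position(release_position, release_velocity, t_catch)
print(t_catch, catch_point)
```

With these numbers the ball reaches the catch plane half a second after release, at a height of roughly 1.27 meters. A tumbling hammer or a sloshing bottle breaks the assumptions baked into this sketch, which is exactly why they're so much harder to catch.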

To catch things like this, the robot creates a model of the object that it's about to try to catch, while the object is flying through the air after having been thrown from just a few feet away. Loyal readers of Automaton will have likely noticed the motion capture system (an OptiTrack system by NaturalPoint) in the background of the video, and the fact that all of these objects have reflective markers plastered all over them.

But even with such fast and detailed data, it's still impressive that the system has time to generate a model and then plan and execute a trajectory for the arm (and hand and fingers) that leads to a catch. Let's be honest: from such a close distance, it's unlikely that any of us could execute a one-handed grasp of a half-full water bottle flung at our heads.

UPDATED 22 May 2014: Motion tracking system used is OptiTrack, not Vicon.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.