The Creepy New Digital Afterlife Industry

These companies could use your data to bring you back—without your consent

It’s sometime in the near future. Your beloved father, who suffered from Alzheimer’s for years, has died. Everyone in the family feels physically and emotionally exhausted from his long decline. Your brother raises the idea of remembering Dad at his best through a startup “digital immortality” program called 4evru. He promises to take care of the details and get the data for Dad ready.

After the initial suggestion, you forget about it until today, when 4evru emails to say that your father’s bot is available for use. After some trepidation, you click the link and create an account. You slide on the somewhat unwieldy VR headset and choose the augmented-reality mode. The familiar walls of your bedroom briefly flicker in front of you.

Your father appears. It’s before his diagnosis. He looks healthy and slightly brawny, as he did throughout your childhood, sporting a salt-and-pepper beard, a checkered shirt, and a grin. You’re impressed with the quality of the image and animation. Thanks to the family videos your brother gave 4evru, the bot sounds like him and moves like he did. This “Dad” puts his weight more heavily on his left foot, the result of a high school football injury, just like your father.

“Hey, kiddo. Tell me something I don’t know.”

The familiar greeting brings tears to your eyes. After a few tentative exchanges to get a feel for this interaction—it’s weird—you go for it.

“I feel crappy, really down. Teresa broke up with me a few weeks ago,” you say.

“Aw. I’m sorry to hear it, kiddo. Breakups are awful. I know she was everything to you.”

Your dad’s voice is comforting. The bot’s doing a good job conveying empathy vocally, and the face moves like your father’s did in life. It feels soothing to hear his full and deep voice, as it sounded before he got sick. It almost doesn’t matter what he says as long as he says it.

You look at the time and realize that an hour has passed. As you start saying goodbye, your father says, “Just remember what Adeline always says to me when I am down: ‘Sometimes good things fall apart so better things can come together.’”

Your ears prick up at the sound of an unfamiliar name—your mother’s name is Frances, and no one in your family is named Adeline. “Who,” you ask shakily, “is Adeline?”

Over the coming weeks, you and your family discover much more about your father through his bot than he revealed to you in life. You find out who Adeline—and Vanessa and Daphne—are. You find out about some half-siblings. You find out your father wasn’t who you thought he was, and that he reveled in living his life in secrecy, deceiving your family and other families. You decide, after some months of interacting with 4evru’s version of your father, that while you are somewhat glad to learn who your father truly was, you’re mourning the loss of the person you thought you knew. It’s as if he died all over again.

What is the digital afterlife industry?

While 4evru is a fictional company, the technology described isn’t far from reality. Today, a “digital afterlife industry” is already making it possible to create reconstructions of dead people based on the data they’ve left behind.

Harry Campbell

Consider that Microsoft has a patent for creating a conversational chatbot of a specific person using their “social data.” Microsoft reportedly decided against turning this idea into a product, but not for legal or rights-based reasons. Most of the 21-page patent is highly technical and procedural, documenting how the software and hardware system would be designed. The idea was to train a chatbot—that is, “a conversational computer program that simulates human conversation using textual and/or auditory input channels”—using social data, defined as “images, voice data, social media posts, electronic messages,” and other types of information. The chatbot would then talk “as” that person. The bot might have a corresponding voice, or 2D or 3D images, or both.

Although it’s notable that Big Tech has made a foray into the field, most of the activity isn’t coming from big corporate players. More than five years ago, researchers identified a digital afterlife industry of 57 firms. The current players include a company that offers interactive memories in the loved one’s voice (HereAfter); an entity that sends prescheduled messages to loved ones after the user’s death (MyWishes); and a robotics company that made a robotic bust of a departed woman based on “her memories, feelings, and beliefs,” which went on to converse with humans and even took a college course (Hanson Robotics).

Some of us may view these options as exciting. Others may recoil. Still others may simply shrug. No matter your reaction, though, you will almost certainly leave behind digital traces. Almost everyone who uses technology today is subject to “datafication”: the recording, analysis, and archiving of our everyday activities as digital data. And the intended or unintended consequences of how we use data while we’re living have implications for every one of us after we die.

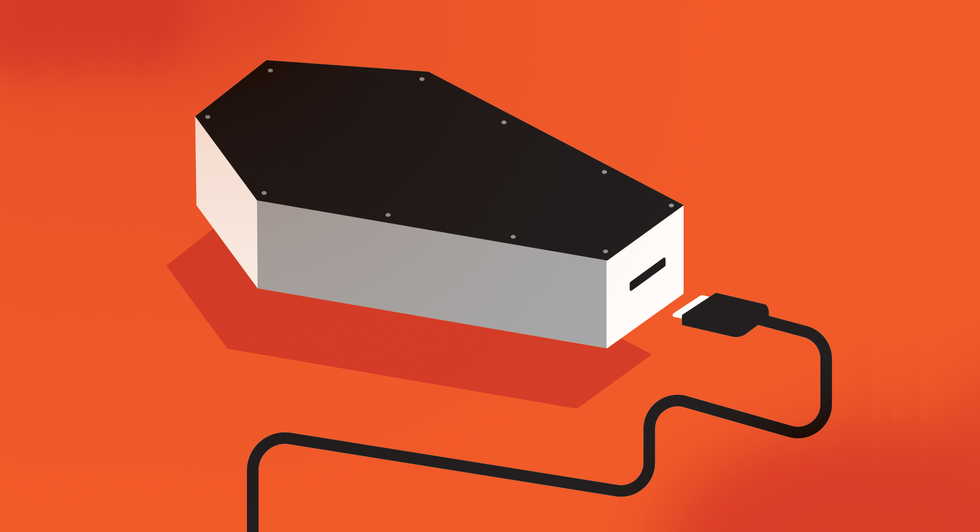

As humans, we all have to confront our own mortality. The datafication of our lives means that we now must confront the fact that data about us will very likely outlive our physical selves. The discussion about the digital afterlife thus raises several important, interrelated questions. First, should we be entitled to define our posthumous digital lives? The decision not to persist in a digital afterlife should be our choice. Yet could the decision to opt out really be enforced, given how “sticky” and distributed data are? Is deletion, for which some have advocated, even possible?

Data are essentially forever; we are most certainly not.

Digital estate planning: A checklist

- Do an inventory of your digital assets. These may include:

  - hardware like computers, cellphones, and external drives and the data stored within, including files and browser history;

  - data stored on the cloud;

  - online accounts for things such as email, social media, photo and video sharing, gaming sites, shopping sites, money management sites, and cryptocurrency wallets;

  - any websites or blogs that you manage;

  - intellectual property such as copyrighted material and code;

  - business assets such as domain names, mailing lists, and customer information.

- Decide what you want done with each of these assets. Do you want accounts deleted, or preserved for your loved ones? Should revenue-generating assets like online stores be shut down, or continue to operate under someone else’s guidance? Write down the plan, including necessary login and password information.

- Name a digital executor—someone you trust—who will carry out your wishes.

- Store the plan in a secure location, either in digital or paper form. Make sure your next of kin know where your plan is and how to access it.

- Formalize it by adding the information about your executor and your plan to your will. Don’t make the plan part of your will itself, because wills become public records, and you don’t want sensitive information available to everyone.
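
The checklist above can also be kept as a small machine-readable inventory. What follows is a minimal Python sketch under stated assumptions, not a legal tool: the class names (`DigitalAsset`, `EstatePlan`), the three possible actions, and the sample entries are all hypothetical. It records only a pointer to where login details are stored rather than the secrets themselves, in keeping with the advice to keep sensitive information out of public records.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict, List


class Action(Enum):
    """What the executor should do with an asset (hypothetical categories)."""
    DELETE = "delete"
    PRESERVE = "preserve"
    TRANSFER = "transfer"


@dataclass
class DigitalAsset:
    name: str                   # e.g. "Personal email"
    category: str               # e.g. "hardware", "cloud", "online account"
    action: Action              # the owner's wish for this asset
    credentials_location: str   # where the login is kept, never the login itself
    notes: str = ""


@dataclass
class EstatePlan:
    executor: str               # the trusted person who carries out the plan
    assets: List[DigitalAsset] = field(default_factory=list)

    def add(self, asset: DigitalAsset) -> None:
        self.assets.append(asset)

    def by_action(self, action: Action) -> List[DigitalAsset]:
        """Return every asset that should receive the given treatment."""
        return [a for a in self.assets if a.action is action]

    def summary(self) -> Dict[str, int]:
        """Count assets per action so gaps in the plan are easy to spot."""
        return {act.value: len(self.by_action(act)) for act in Action}
```

A plan built this way could be printed and stored alongside a will, while the will itself names only the executor and the location of the plan.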

Postmortem digital possibilities

Many of us aren’t taking the necessary steps to manage our digital remains. What will happen to our emails, text messages, and photos on social media? Who can claim them after we’re gone? Is there something we want to preserve about ourselves for our loved ones?

Some people may prefer that their digital presence vanish with their physical body. Those who are organized and well prepared might give their families access to passwords and usernames in the event of their deaths, allowing someone to track down and delete their digital selves as much as possible. Of course, in a way this careful preparation doesn’t really matter, since the deceased won’t experience whatever postmortem digital versions of themselves are created. But for some, the idea that someone could actually make them live again will feel wrong.

For those who are more bullish on the technology, there are a growing number of apps to which we can contribute while we’re alive so that our “datafied” selves might live on after we die. These products and possibilities, some creepier, some more harmless, blur the boundaries of life and death.

Our digital profiles—our datafied selves—provide the means for a life after death and possible social interactions outside of what we physically took on while we were alive. As such, the boundaries of human community are changing, as the dead can now be more present in the lives of the living than ever before. The impact on our autonomy and dignity hasn’t yet been adequately considered in the context of human rights because human rights are primarily concerned with physical life, which ends with death. Thanks to datafication and AI, we no longer die digitally.

We must also consider how bots—software applications that interact with users or systems online—might post in our stead after we’re gone. It is indeed a curious twist if a bot uses data we generated to produce our anticipated responses in our absence: Who is the creator of that content?

The story of Roman Mazurenko

In 2015, Roman Mazurenko was hit and killed by a car in Moscow. He died young, just on the precipice of something new. Eugenia Kuyda met him when they were both coming of age, and they became close friends through a fast life of fabulous parties in Moscow. They also shared an entrepreneurial spirit, supporting one another’s tech startups. Mazurenko led a vibrant life; his death left a huge hole in the lives of those he touched.

In grief, Kuyda led a project to build a text bot based on an open-source, machine-learning algorithm, which she trained on text messages she collected from Mazurenko’s family and friends, as well as on her own exchanges with Mazurenko during his life. The bot learned “to be” Mazurenko, using his own words. The data Mazurenko created in life could now continue as himself in death.

Mazurenko did not have the opportunity to consent to the ways in which data about him were used posthumously—the data were brought back to life by loved ones. Can we say that there was harm done to the dead or his memory?

This act was at least a denial of autonomy. When we’re alive, we’re autonomous and move through the world under our own will. When we die, we no longer move bodily through the world. According to conventional thinking, that loss of our autonomy also means the loss of our human rights. But can’t we still decide, while living, what to do with our artifacts when we’re gone? After all, we have designed institutions to ensure that the transaction of bequeathing money or objects happens through defined legal processes; it’s straightforward to see if bank account balances have gotten bigger or whose name ends up on a property deed. These are things that we transfer to the living.

With data about us after we die, this gets complicated. These data are “us,” which is different from our possessions. What if we don’t want to appear posthumously in text, image, or voice? Kuyda reconstructed her friend through texts he exchanged with her and others. There is no way to stop someone from deploying or sharing these kinds of data once we’re dead. But what would Mazurenko have wanted?

What can go wrong with digital immortals

The possibility of creating bots based on specific persons has tremendous implications for autonomy, consent, and privacy. If we do not create standards that give the people who created the original data the right to say yes or no, we have taken away their choice.

If technology like the Microsoft chatbot patent is executed, it also has implications for human dignity. The idea of someone “bringing us back” might seem acceptable if we think about data as merely “by-products” of people. But if data are more than what we leave behind, if they are our identities, then we should pause before we allow the digital reproduction of people. Like Microsoft’s patent, Google’s attempts to clone someone’s “mental attributes” (also patented), Soul Machines’ “digital twins,” or startup Uneeq’s marketing of “digital humans” to “re-create human interaction at infinite scale” should give us pause.

Players in the digital afterlife industry

Dozens of companies exist to help people manage their social media accounts and other digital assets, to communicate with loved ones after death, and to memorialize the deceased. Here is a small sampling of the services on offer.

Bcelebrated: You can create your own autobiographical website, to which your “activators” can add funeral and memorial information when you die. Automated emails alert your contacts and invite them to the site.

Directive Communication Systems: DCS organizes all of your online accounts (including “confidential accounts”) and executes your directives to shut them down, transfer them, or memorialize them.

GhostMemo: If you fail to reply to periodic “proof of life” emails from the company, it sends out your prewritten final messages.

HereAfter: You use an app to record stories about your life; when you’re gone, your loved ones can ask questions and hear the responses in your own voice.

Lifenaut: You provide a DNA sample and fill out a “mindfile” with biographical photos, videos, and documents in case it someday becomes possible to create a “conscious analogue” of you.

MyWishes: In addition to helping you make both a traditional will and a digital estate plan, this site lets you schedule messages to loved ones on dates after your death so you can send birthday greetings and the like.

My Wonderful Life: You can plan your own funeral, including the eulogists, music, and food, as well as leave letters for loved ones.
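
The “proof of life” mechanism described for GhostMemo is a form of what engineers call a dead man’s switch. The Python sketch below illustrates the general idea only; it is not GhostMemo’s implementation, and the class name, the check-in interval, and the missed-reply threshold are all assumptions made for illustration. Prewritten messages are released once, and only after a set number of consecutive check-ins have gone unanswered.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import List


@dataclass
class ProofOfLifeSwitch:
    check_interval: timedelta   # how often a reply is expected (assumed value)
    max_missed: int             # unanswered check-ins tolerated before release
    last_reply: datetime        # when the user last confirmed they are alive
    final_messages: List[str] = field(default_factory=list)
    released: bool = False

    def record_reply(self, when: datetime) -> None:
        """The user answered a check-in, so the countdown restarts."""
        self.last_reply = when

    def missed_checks(self, now: datetime) -> int:
        """Whole check-in intervals that have passed since the last reply."""
        return max(0, (now - self.last_reply) // self.check_interval)

    def poll(self, now: datetime) -> List[str]:
        """Return the final messages exactly once, when the threshold is hit."""
        if self.released or self.missed_checks(now) < self.max_missed:
            return []
        self.released = True
        return list(self.final_messages)
```

The threshold matters: set too low, a long vacation could trigger a premature farewell; set too high, the messages may arrive long after they are needed.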

Part of what drives people to consider digital immortality is to give future generations the ability to interact with them. To preserve us forever, however, we need to trust the data collectors and the service providers helping us achieve that goal. We need to trust them to safeguard those data and to faithfully represent us going forward.

However, we can also imagine a situation where malicious actors corrupt the data by inserting inauthentic data about a person, driving outcomes that are different from what the person intended. There’s a risk that our digital immortal selves will deviate significantly from who we were, but how would we (or anyone else) really know?

Could a digital immortal be subject to degrading treatment or interact in ways that don’t reflect how the person behaved in real life? We don’t yet have a human rights language to describe the wrong this kind of transgression might be. We don’t know if a digital version of a person is “human.” If we treat these immortal versions of ourselves as part of who a living person is, we might think about extending the same protections from ill treatment, torture, and degradation that a living person has. But if we treat data as detritus, is a digital person also a by-product?

There might also be technical problems with the digital afterlife. Algorithms and computing protocols are not static, and changes could make the rendering of some kinds of data illegible. Social scientist Carl Öhman sees the continued integrity of a digital afterlife as largely a software concern. Because software updates can change the way data are analyzed, the predictions generated by the AI programs that undergird digital immortality can also change. We may not be able to anticipate all of these different kinds of changes when we consent.

In the 4evru scenario, the things that were revealed about the father actually made him odious to his family. Should digital selves and persons be curated, and, if so, by whom? In life, we govern ourselves. In death, data about our activities and thoughts will be archived and ranked based not on our personal judgment but on whatever priorities are set by digital developers. Data about us, even embarrassing data, will be out of our immediate grasp. We might have created the original data, but data collectors have the algorithms to assemble and analyze those data. As they sort through the messiness of reality, algorithms carry the values and goals of their authors, which may be very different from our own.

Technology itself may get in the way of digital immortality. In the future, data format changes that allow us to save data more efficiently may lead to the loss of digital personas in the transfer from one format to another. Data might be lost in the archive, creating incomplete digital immortals. Or data might be copied, creating the possibility of digital clones. Digital immortals that draw their data from multiple sources may create more realistic versions of people, but they are also more vulnerable to possible errors, hacks, and other problems.

The dead could be more present in the lives of the living than ever before.

A digital immortal may be programmed such that it cannot take on new information easily. Real people, however, do have opportunities to learn and adjust to new information. Microsoft’s patent does specify that other data would be consulted and thus opens the way for current events to infiltrate. This could be an improvement in that the bot won’t increasingly sound like an irrelevant relic or a party trick. However, the more data the bot takes in, the more it may drift away from the lived person, toward a version that risks looking inauthentic. What would Abraham Lincoln say about contemporary race politics? Does it matter?

And how should we think about this digital immortal? Is digital Abe a “person” who deserves human rights protections? Should we protect this person’s freedom of expression, or should we shut it down if their expression (based on the actual person who lived in a different time) is now considered hate speech? What does it mean to protect the right to life of a digital immortal? Can a digital immortal be deleted?

Life after death has been a question and fascination from the dawn of civilization. Humans have grappled with their fears of death through religious beliefs, burial rites, spiritual movements, artistic imaginings, and technological efforts.

Today, our data exist independent of us. Datafication has enabled us to live on, beyond our own awareness and mortality. Without putting in place human rights to prevent the unauthorized uses of our posthumous selves, we risk becoming digital immortals that others have created.

This article appears in the November 2023 print issue as “The Creepy New Digital Afterlife Industry.”
