Contact Tracing Apps Struggle to Be Both Effective and Private

Google, Apple, and governments are asking people to opt in to unproven technologies

In June, as the coronavirus swept across the United States, Paloma Beamer spent hours each day helping her university plan for a September reopening. Beamer, an associate professor of public health at the University of Arizona, was helping to test a mobile app that would notify users if they crossed paths with confirmed COVID-19 patients.

A number of such “contact tracing” apps have recently had their trials by fire, and many of the developers readily admit that the technology has not yet proven that it can slow the spread of the virus. But that caveat has not stopped national governments and local communities from using the apps.

“Right now, in Arizona, we’re in the full-blown pandemic phase,” Beamer said, speaking in June, well before the new-case count had peaked. “And even manual contact tracing is very limited here—we need whatever tool we can get right now to curb our epidemic.”

Traditionally, tracers would ask newly diagnosed patients to list the people they’d spent time with recently, then ask those people to provide contacts of their own. Such legwork has helped to control other infectious-disease outbreaks, such as syphilis in the United States and Ebola in West Africa. However, while these methods can extinguish the first spark or the last embers of an epidemic, they’re no good in the wildfire stage, when the caseload expands exponentially.

That’s the reason to automate the job. Digital contact tracing may also jog fuzzy memories by dredging up relevant information on where a patient has been, and with whom. Some technologies can go further by automatically alerting people who have been in close proximity to a patient and thus may need to get tested or go into isolation. Speedy notification is particularly important during the COVID-19 pandemic, given that asymptomatic people seem capable of transmitting the virus.

Automatic alerts may sound great, but there are “limited real-world use cases” and “limited evidence for their effectiveness,” says Joseph Ali, associate director for global programs at the Johns Hopkins Berman Institute of Bioethics and coauthor of the book Digital Contact Tracing for Pandemic Response, published in May. Rushed deployment of unproven technologies runs the risk of misidentifying moments of exposure that in fact never happened—false positives—and missing moments that did happen, or false negatives.

Some governments have embraced these apps; others have struggled with the decision. The United Kingdom, for example, initially spent millions developing an app that would collect data and send it to a centralized data storage system run by the National Health Service. But privacy advocates raised concerns about the system, and in June the government announced that it would abandon that effort and switch to a less-centralized alternative built on technology from the tech giants Apple and Google.

The U.K.’s indecision shows how the choice of strategy revolves around privacy trade-offs. Some countries have staked everything on effectiveness and nothing on privacy.

Wuhan, the Chinese city at the heart of the pandemic, squashed the virus, eased the lockdown, then saw a small resurgence of the contagion in May. Public-health authorities went all out: They tested the entire population of 11 million and instituted the tracking of each person’s movements. Would-be customers could enter a shop only by having their temperature taken and exchanging personal bar codes, displayed on their phones, with the shop’s own identifying bar code. They then had to repeat the exchange upon leaving. That way, if anyone in the shop ended up testing positive, the authorities would be able to find whoever was in the same place at the same time, test those people, and, if necessary, quarantine them.

It worked. As of mid-July, Wuhan was reporting that no new cases of the virus had been recorded for 50 consecutive days. But such a gargantuan effort is not always an option. In many parts of the world, most people will willingly participate only if they trust in the system.

This may prove especially challenging in the United States, where early apps rolled out by states such as Utah and North Dakota failed to catch on. Making matters even more awkward, an independent security analysis found that the North Dakota app violated its own privacy policy by sharing location data with the company Foursquare.

In an online survey of Americans conducted by Avira, a security software company, 71 percent of respondents said they don’t plan to use a COVID contact-tracing app. Respondents cited privacy as their main concern. In a telling contrast, 32 percent said they would trust apps from Google and Apple to keep their data secure and private—but just 14 percent said they would trust apps from the government.

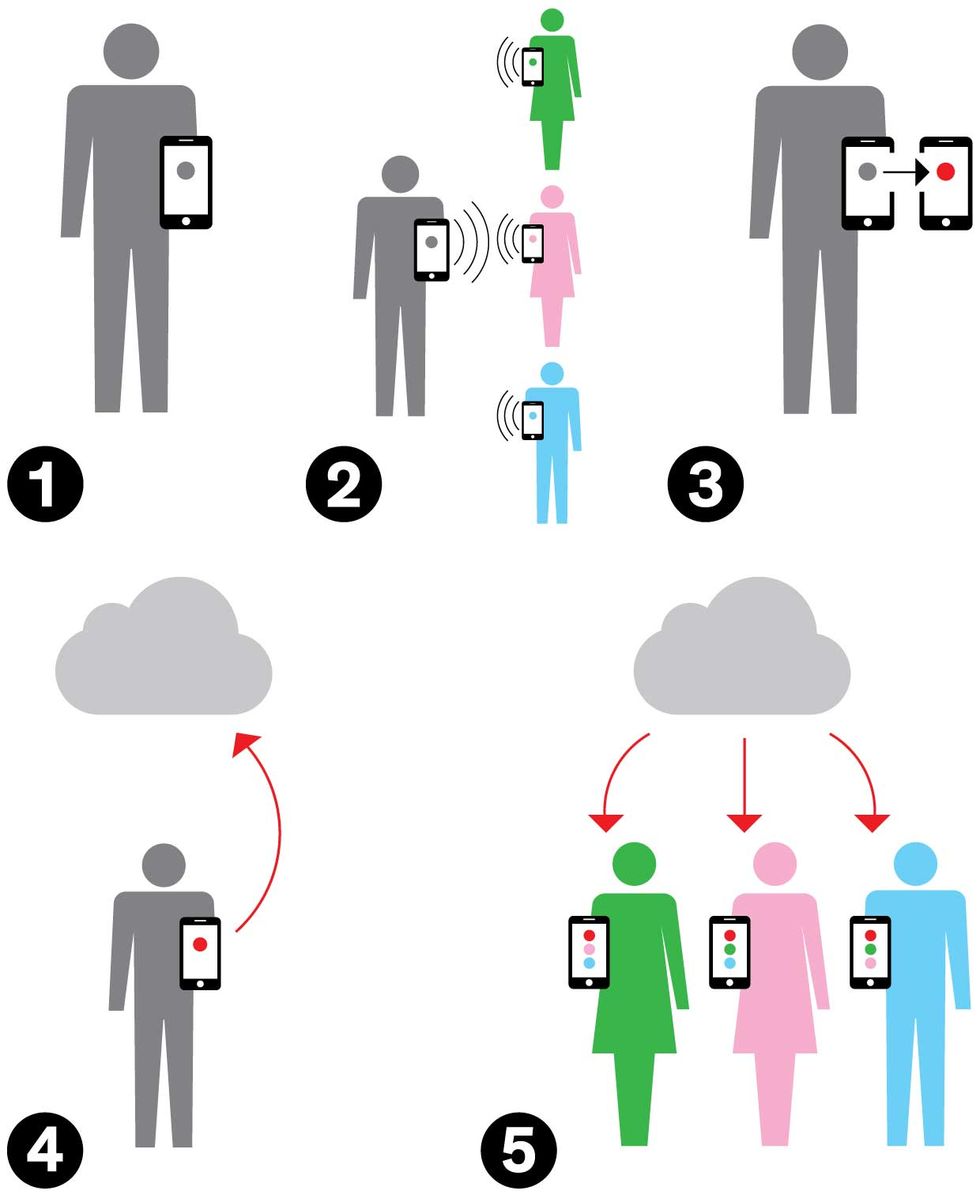

Tell It to the Cloud

[1] Person A commutes to work, bringing a Bluetooth-enabled phone with a digital key, which is used to communicate with other phones. [2] Person A comes in contact with persons B, C, and D; all their phones exchange key codes. [3] Person A later learns he’s infected and enters his new status in the app. [4] By agreeing to share his status with the database, A instructs the app to send the data to the cloud. [5] Meanwhile, B’s, C’s, and D’s phones regularly query the cloud database for the status of their users’ contacts. When B, C, and D discover that A has reported himself infected, they all know they should get tested for the virus.
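The five steps in the figure can be sketched in a few lines of Python. This is a toy simulation, not the code of any real app: the `Phone` class, its method names, and the use of a plain `set` as the "cloud" are all assumptions made for illustration, and real systems add key rotation, cryptographic derivation, and expiry that are left out here.

```python
import secrets


class Phone:
    """Hypothetical model of one phone running a contact-tracing app."""

    def __init__(self, owner):
        self.owner = owner
        self.my_keys = []        # random codes this phone has broadcast
        self.heard_keys = set()  # codes received from nearby phones

    def new_key(self):
        # Step 1: each broadcast uses a fresh random code, not an identity.
        key = secrets.token_hex(16)
        self.my_keys.append(key)
        return key

    def meet(self, other):
        # Step 2: two phones in proximity exchange codes.
        self.heard_keys.add(other.new_key())
        other.heard_keys.add(self.new_key())

    def report_positive(self, cloud):
        # Steps 3 and 4: the user opts in to upload the codes they broadcast.
        cloud.update(self.my_keys)

    def was_exposed(self, cloud):
        # Step 5: compare locally stored codes with the published ones.
        return not self.heard_keys.isdisjoint(cloud)


cloud = set()  # stand-in for the shared database
a, b, c, d, e = (Phone(name) for name in "ABCDE")

for contact in (b, c, d):
    a.meet(contact)

a.report_positive(cloud)

exposed = [p.owner for p in (b, c, d, e) if p.was_exposed(cloud)]
print(exposed)  # ['B', 'C', 'D']
```

Person E, who never met A, gets no alert, and the cloud holds only random codes rather than names or places.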

One shining example of effective digital contact tracing is South Korea, which built a centralized system that scrutinized patients’ movements, identified people who had been in contact with patients, and used apps to monitor people under quarantine. To date, South Korea has successfully contained its COVID-19 outbreaks without closing national borders or imposing local lockdowns.

The South Korean government’s system gave contact tracers access to multiple information sources, including footage from security cameras, mobile base station data, and credit card transaction data, says Uichin Lee, an associate professor of industrial and systems engineering at the Korea Advanced Institute of Science and Technology (KAIST), in Daejeon. “This system helps them to quickly identify hot spots and close contacts,” Lee says.

But South Korea’s system also publicly shares patients’ contact-trace data—including pseudonymized information on demographics, infection information, and travel logs. This approach raises serious privacy concerns, as Lee and his colleagues outlined in the journal Frontiers in Public Health. The travel logs alone could enable observers to infer where a patient lives and works.

By comparison, public-health authorities in Europe and the United States have shied away from publicly sharing such patient data. There’s also a middle way: A person’s phone may store data identifying people or location, and it may be left to the owner of the phone whether to share that information with public-health officials.

And then there’s the radical idea of not storing such data at all. That’s the approach taken by the Google/Apple Exposure Notification (GAEN) system. As these tech giants own, respectively, the Android and iOS smartphone platforms, the GAEN system enables independent developers to build apps that can run on either platform. The system records Bluetooth transmissions between phones in close proximity to one another, and stores that data as anonymized beacons on each phone for a limited time. If one phone user tests positive for COVID-19 and enters that positive status in a mobile app built upon GAEN, the system will alert other phone users who have been in close proximity within the potentially infectious time period.

To protect user privacy, the system does all these things without ever recording the exact location of such encounters. It also limits the reported exposure time for each encounter to 5-minute increments, with a maximum possible total of 30 minutes. That constraint makes it more difficult for users to guess the source of their exposure.
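The coarsening of exposure time can be illustrated with a short Python sketch. The function name and the choice to round down are assumptions; the description above specifies only the 5-minute increments and the 30-minute ceiling, not how partial increments are handled.

```python
INCREMENT_MIN = 5   # reported durations come in 5-minute steps
CAP_MIN = 30        # no reported duration exceeds 30 minutes


def reported_exposure_minutes(actual_minutes: float) -> int:
    """Coarsen a true encounter length into the value a user would see.

    Rounding down to the nearest increment is an assumption for this sketch.
    """
    if actual_minutes < 0:
        raise ValueError("duration cannot be negative")
    steps = int(actual_minutes // INCREMENT_MIN)
    return min(steps * INCREMENT_MIN, CAP_MIN)


for actual in (7, 29.9, 45, 180):
    print(actual, "->", reported_exposure_minutes(actual))
# 7 -> 5
# 29.9 -> 25
# 45 -> 30
# 180 -> 30
```

A 45-minute lunch and a 3-hour meeting both show up as 30 minutes, which is what makes it harder to work out which encounter triggered an alert.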

The GAEN system also appeals to those wary of increased surveillance in the name of public health. Germany, Italy, and Switzerland have already deployed exposure-notification apps based on GAEN, and other countries will likely follow. In the United States, Virginia was the first to introduce one.

“If you collect identifying information along with Bluetooth data, it could potentially lead to new forms of surveillance,” says Tina White, founder and executive director of the nonprofit COVID Watch and a Ph.D. candidate at the Stanford Institute for Human-Centered Artificial Intelligence. “And that’s exactly what we don’t want to see.” COVID Watch is working with the University of Arizona on a privacy-centric app based on the GAEN system. Preliminary testing involving two phones placed at different indoor locations has ramped up to more real-life campus scenarios inside classrooms, dining halls, and the Cat Tran student shuttle, followed by a campuswide rollout in mid-August.

There’s one big hitch: Repurposing Bluetooth from its original communication function poses serious technical difficulties. At Trinity College Dublin, researchers found that Bluetooth can perform poorly on the crucial task of proximity detection when a phone is in the presence of reflective metal surfaces. In one experiment on a commuter bus, a Swiss COVID-19 app built on the GAEN system failed to trigger exposure notifications even though the phones were within 2 meters (a little over 6 feet) of each other for 15 minutes.

“Public transport, which seems kind of mundane, is actually one of the core use cases for contact-tracing apps, but it’s also a terrible radio environment,” says Douglas Leith, a professor of computer science and statistics at Trinity College. “All our measurements suggest that it probably won’t work on buses and trains.”

Another problem is the variation in antenna configuration over the thousands of Android phone models. Engineers must calibrate the software to make up for any loss in signal strength, Leith explains. And although Bluetooth-signal “chirps” require minimal power, simply listening for such chirps requires that the main phone processors be turned on, which can quickly drain battery power unless the apps are restricted to short listening periods.
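To see why calibration matters, consider a minimal Python sketch that turns received signal strength into a distance estimate using the textbook log-distance path-loss model. This is not the GAEN algorithm: the model choice, the function name, the reference power of -59 dBm at 1 meter, and the 6-decibel offset are all illustrative assumptions.

```python
def estimate_distance_m(rssi_dbm, ref_power_dbm=-59.0,
                        path_loss_exponent=2.0, calibration_db=0.0):
    """Estimate distance from a Bluetooth signal-strength reading.

    ref_power_dbm:      assumed signal strength measured at 1 meter
    path_loss_exponent: 2.0 models open space; real rooms differ
    calibration_db:     per-model correction for antenna losses (hypothetical)
    """
    corrected = rssi_dbm + calibration_db
    return 10 ** ((ref_power_dbm - corrected) / (10 * path_loss_exponent))


# A well-matched phone reads -65 dBm at roughly 2 meters.
print(round(estimate_distance_m(-65), 2))                      # 2.0

# A phone whose antenna loses 6 dB reads -71 dBm at the same spot.
print(round(estimate_distance_m(-71), 2))                      # 3.98
print(round(estimate_distance_m(-71, calibration_db=6.0), 2))  # 2.0
```

Without the correction, the second phone would place a contact about 4 meters away instead of 2 and could miss the exposure. The model also assumes a clean radio path, which is exactly what a metal-walled bus or train does not provide.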

Beyond the technical challenges faced by Bluetooth-based apps, all contact-tracing apps suffer from the same general problem: Unless a certain percentage of the population installs an app, it can’t do its work. People won’t opt in unless they believe in the public-health strategy behind an app and in the personal advantages they can hope to gain from it. Making that sale has been tough. In Germany, which has had some of the best results of any country in containing the virus, only 41 percent of the population has said it was willing to download what is known as the Corona-Warn-App.

Some researchers point to a University of Oxford study that modeled the coronavirus’s spread through a simulated city of 1 million people; it found that 60 percent adoption is needed to stop the pandemic and keep countries out of lockdown (although the study suggested that lower rates of adoption could still prove helpful).
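A back-of-the-envelope calculation shows why adoption rates weigh so heavily. An encounter can be logged only if both people carry the app, so if adoption is spread evenly and independently across the population (a simplifying assumption, and not the Oxford model itself), the share of encounters covered is roughly the adoption rate squared. The function name below is hypothetical.

```python
def covered_contact_fraction(adoption: float) -> float:
    """Share of two-person encounters in which both phones run the app,
    assuming adoption is uniform and independent across the population."""
    if not 0.0 <= adoption <= 1.0:
        raise ValueError("adoption must be between 0 and 1")
    return adoption ** 2


for rate in (0.41, 0.60, 0.80):
    print(f"{rate:.0%} adoption -> "
          f"{covered_contact_fraction(rate):.0%} of encounters covered")
# 41% adoption -> 17% of encounters covered
# 60% adoption -> 36% of encounters covered
# 80% adoption -> 64% of encounters covered
```

Under this rough assumption, even the 60 percent adoption figure would capture only about a third of encounters, which is one way to see why lower adoption can help but falls well short of full coverage.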

The tech giants are making widespread adoption easier by deploying an app-less Exposure Notifications Express function for iOS and Android devices. If a phone user opts in, the phone begins listening for nearby Bluetooth beacons from other phones. And later, if a stored Bluetooth beacon proves to be a match for someone confirmed to be positive for COVID-19, the system will prompt the user to download an exposure-notification app for more information.

Bluetooth is not the only way forward. The COVID Safe Paths project led by the nonprofit PathCheck Foundation, an MIT spin-off, has been developing and fielding a mobile app that uses GPS location data instead. The GPS approach provides more location data than Bluetooth does, in exchange for less user privacy. But Safe Paths also aims to build a Bluetooth-based version with the GAEN system. “We have been mostly agnostic to the technology we want to use,” says Abhishek Singh, a Ph.D. candidate in machine learning at the MIT Media Lab and a member of Safe Paths.

“It matters less what’s actually happening in the back end, and more about communication and perception,” says Kyle Towle, a member of the technology team at Safe Paths and former senior director of cloud technology at PayPal. The crucial component, he says, is the “appeal to our community members to gain that trust in the first place.”

The best path to success may come from ample preparation. South Korea’s experience with an outbreak of Middle East respiratory syndrome (MERS) in 2015 prompted the government to update national laws and lay the bureaucratic and technological foundations for an efficient contact-tracing system. The resulting public-private partnership enables human contact tracers to pull together digital data on a suspected or confirmed case’s travel history within 10 minutes.

“A country like South Korea, maybe because they went through this before with other viruses five years ago, really got a head start, and they didn’t mess around,” says Marc Zissman, associate head of the cybersecurity and information sciences division at MIT Lincoln Laboratory. The lab is among those in the PACT (Private Automated Contact Tracing) project, which is testing GAEN’s Bluetooth-based app performance.

Zissman says that developing digital contact tracing during a pandemic is like building a plane and flying it at the same time while also measuring how well everything works. “In a perfect world, something like this would have taken a couple years to implement,” he says. “There just isn’t the time, so instead what’s happening is people are doing the best they can, and making the best engineering judgments they can, with the data they have and the time that they have.”

This article appears in the October 2020 print issue as “The Dilemma of Contact-Tracing Apps.”

Editor's note: This story originally stated that the Korean system made use, among other things, of GPS data from mobile phones; in fact, it uses mobile base station data.