Climate Control

We will be able to engineer the Earth to our liking—but we’d better start now

In the last century or so, humankind has rescripted its role in the natural world. We have learned to treat many dread diseases, feed billions of people, cut canals between continents, and harness the power of the atom. We’ve bent much of nature to our will.

But we still can’t do a darn thing about the weather.

Though we are clearly learning to adapt to extreme weather, it still killed 19 000 people per year on average between 2000 and 2004, according to data gathered at the Center for Research on the Epidemiology of Disasters at the Université Catholique de Louvain, in Brussels. In the United States, just one huge storm, Hurricane Katrina in 2005, killed at least 1600 people and caused damage estimated at US $81.2 billion. Climate change, the alteration in the established weather patterns, is likely to bring still more economic, political, and social havoc. Even the less extreme climate-change scenarios predict stronger and more frequent storms leading to more deaths and greater damage to property. In the more severe scenarios, cities—even entire nations—could disappear under rising oceans; once-productive farmlands parch; and vast swathes of ocean become increasingly acidic, sending out ripples of extinction.

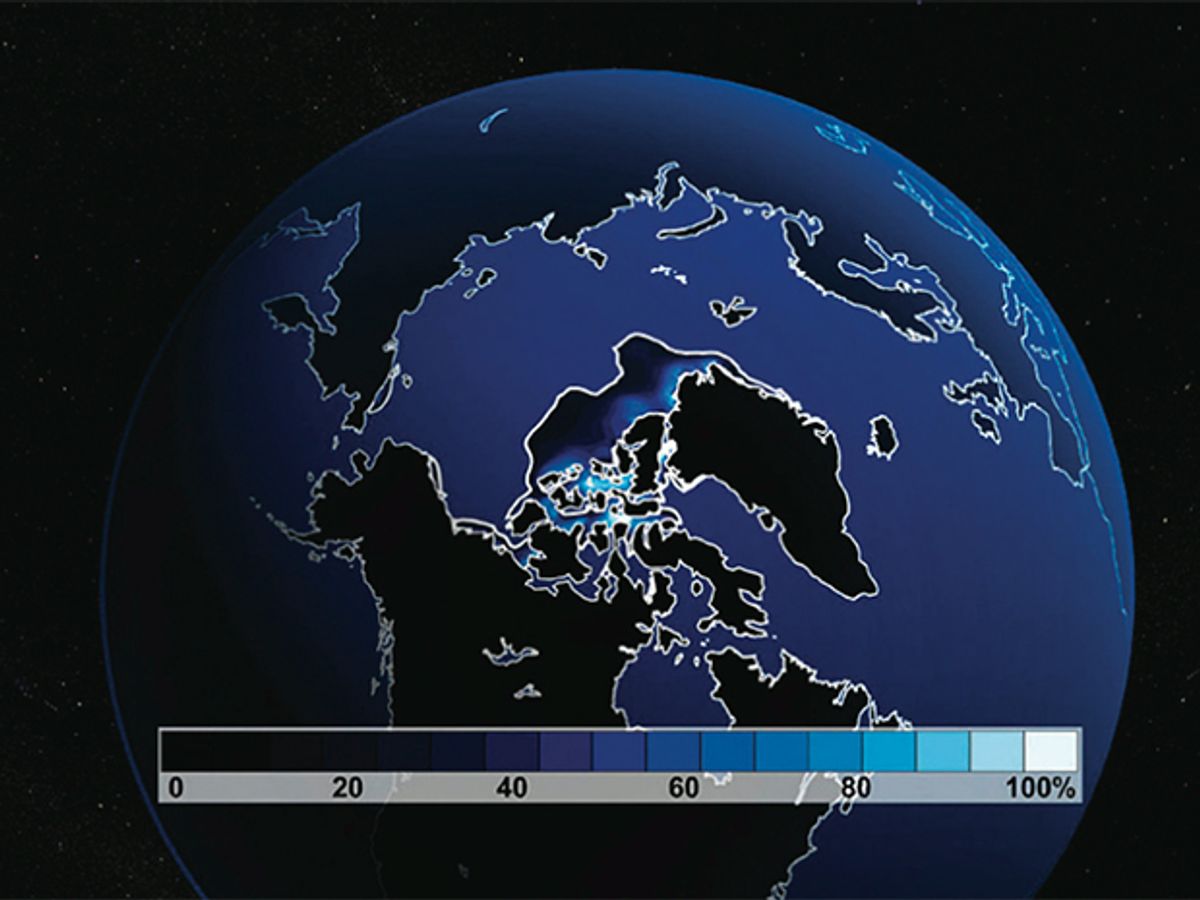

Overwhelming scientific evidence indicates that the Earth has warmed noticeably over the past century and a half. Eleven of the last 12 years were among the warmest since global records began in 1850. The global average temperature is up almost 1°C since that time. Sea level is rising by about 1 centimeter every three years, in part because the oceans absorb much of the increased heat and expand.

What is less clear is the extent of humankind’s role in the change. The latest consensus of climate scientists, summarized in last February’s report of the Intergovernmental Panel on Climate Change, is that by far the biggest component of the forces currently warming the Earth is the increasing concentration of carbon dioxide, and the source of that carbon dioxide is us.

Even if you differ with the panel’s conclusion, you undoubtedly agree that variations in climate don’t occur without a reason. Briefly, the observed global warming prior to 1950 is best explained by natural variations in the sun’s brightness and volcanic activity. In contrast, the warming since 1950 defies explanation by any known natural cause. Yet it fits quite closely with what we would expect from the well-documented human contribution to increased carbon dioxide. Among the strongest evidence is that the climate is changing with a geographic and altitude-specific pattern consistent with explanations based on greenhouse gases but not with other possible explanations, including such oft-suggested alternatives as variations in the sun’s brightness and in the intensity of cosmic rays.

Our influence on climate may be inadvertent, but it is a milestone in civilization’s progress. We have, for the first time, the technological capacity to noticeably alter climate on a global basis within a person’s lifetime. History suggests that our expanding population and increasing technological ability will cause this capacity to grow with time, not decline. If not because of greenhouse gas emissions, it will be because of something else, such as changes in land cover or the acidification of the ocean. The question now is: Should we strive to channel this capacity to our benefit, or should we struggle perpetually to avoid having any impact, for better or worse?

I believe the choice is clear. Whether we start today or in a decade, it is inevitable that we will begin to apply our newfound capabilities to actively manage—even engineer—climate. In fact, it could be argued that our limited efforts to reduce greenhouse gases through the Kyoto Protocol represent a primitive form of engineering. It may be many decades before we have sufficient confidence in our skills to apply them more broadly, but there are moral as well as practical reasons to begin doing so. We are wise to invest in technologies that will help us adapt to a changing climate. But by themselves, they will still leave us vulnerable. Engineering the climate could help transform the remaining risks into benefits: increasing global crop yields through longer and more predictable growing seasons, altering large-scale weather patterns to deliver rainfall where it is needed, and limiting the frequency and magnitude of deadly floods and other natural disasters. Such engineering might also mitigate the natural climate change that has been a large and sometimes destructive force in human history—such as the Little Ice Age that is linked to many famines in Europe between the 14th and 19th centuries. Providing food and water to a growing global population and shielding them to the greatest extent possible against the ravages of severe weather is both a moral and a practical obligation. If society has the tools to do this within acceptable risk levels, it should apply them.

Still, you may think the idea of intentionally modifying the climate is frightening or even repellent. If so, you’re not alone: it would be a high-risk endeavor, and the idea raises the ire of environmentalists and atmospheric scientists alike. Some of our feelings about intentional modification (climate management) stem from the noble but naive expectation that all human influence on the climate can be eliminated. In truth, we have likely already introduced irreversible ecological changes around the world. Among other things, as warming drives species poleward, those in the polar regions have nowhere to go and may be long extinct before our efforts can cool the planet enough for them to survive.

The real debate is not how to eliminate all human influence—that is an unrealistic and perhaps even meaningless endeavor. The more relevant issue is how much human influence our planet and its inhabitants can tolerate. Surprisingly, the “less is better” conventional wisdom on this point is overly simplistic.

Suppose we accept, as many climate experts have, that a realistic goal for the next few decades is to halt the growth of greenhouse gas levels rather than to reduce them. In choosing the carbon dioxide level at which to stop, we may well find that an atmospheric concentration of 550 parts per million (about twice the preindustrial amount, and a level we’re likely to surpass before the end of this century) would alter precipitation patterns over some huge swath of the globe to the relative benefit of farmlands, population centers, and globally important ecosystems, compared with a little less or a little more carbon dioxide, say, 500 ppm or 600 ppm. Or we might find something else entirely. The point is, we won’t know unless we ask the question and do the research.

Becoming climate managers will be one of the most difficult things human beings have ever done. It took relatively simple technology—cars and fossil-fuel power plants—to get to this point. It will take far more sophisticated stuff to get us to where we can confidently start deliberately altering climate. Such “geoengineering” technologies are mostly just fantasy now. The roster of possibilities ranges from the aeronautical to the agricultural: putting up space shields that cover billions of square meters; using chemicals to reflect sunlight or increase Earth’s cloud cover; stimulating massive growth of phytoplankton in the oceans; and huge reforestation projects [see illustration, "Nine Ways to Cool the Planet"]. These sound farfetched, but as is true of most multigenerational technology development efforts, our early ideas probably offer only a glimpse of what will eventually unfold.

There is now a small but growing will to support geoengineering research, despite entrenched reservations about the idea. Yet, as difficult as it would be to develop geoengineering technologies, deploying them would be a far more challenging affair, requiring not just the engineering technologies but breakthroughs in climate forecasting, systems management, global politics, economics, and social sciences.

Our tools and skills are clearly insufficient for climate management today. The consequences of mismanaging climate would certainly be global and could be catastrophic. We lack the scientific understanding to accurately predict the results of intentionally modifying the climate. And today we are missing the political system even to decide what sort of climate we want to strive for. But now is the time to commit to developing the tools we’ll need. One of the hidden dangers of delaying such a commitment is that gaining too much knowledge about the coming climate may be an impediment to making a better one. Our current scientific understanding is just beginning to support reliable climate predictions at spatial scales needed to determine which nations will benefit overall from the climate we are heading for, and which will suffer.

But within a decade or two—perhaps sooner—advances in our understanding of climate and the inevitable increase in computational power will likely let us predict who wins and who loses in considerable detail. At that point, those nations that believe they are winners will lose the incentive to support climate management efforts that lessen their advantage. Any international consensus for action will dissolve. And progress toward global solutions will cease. The upcoming 10- or 20-year time frame may well provide us our last opportunity to establish a long-term international agreement on how to manage climate. It is a grace period we mustn’t squander.

The Earth is a complicated planet. There is plenty we don’t understand about it and a lot more we’d need to know to manage its climate. Nevertheless, we have acquired a lot of confidence regarding the key elements of climate science. As far back as the late 1800s, for example, scientists knew that a minute increase in the amount of carbon dioxide in the atmosphere can have a tremendous influence on the average global temperature. Carbon dioxide is only 0.04 percent of the atmosphere, yet without it and similar greenhouse gases the global average temperature of 15 °C would be at least 20 °C colder! Human-induced global warming occurs on top of this already substantial natural effect.

The basic theory behind the greenhouse effect is simple. The sun’s energy—about 344 watts per square meter on average—arrives largely in the visible portion of the electromagnetic spectrum. The Earth’s surface and atmosphere absorb this energy and reradiate it, largely in the far-infrared part of the spectrum. In thermal equilibrium, the power level of the incoming radiation matches what is going out; Earth’s temperature rises or falls until it reaches this radiation balance. Carbon dioxide, which is at its highest atmospheric concentration in at least 650 000 years, modifies this simple balance. It is a strong absorber of infrared wavelengths, so it traps energy that would otherwise escape to space and nudges upward the temperature at which the radiation balance occurs.
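The radiation balance described here can be turned into a back-of-envelope calculation. The sketch below uses the 344 W/m² global-average input quoted above. It also assumes two things that are not from this article: a planetary albedo of about 0.30 and simple blackbody (Stefan-Boltzmann) emission. It solves for the temperature at which absorbed sunlight equals emitted infrared on a planet with no greenhouse gases.

```python
# Back-of-envelope radiation balance for a planet with no greenhouse effect.
# Assumptions (not from the article): a planetary albedo of 0.30 and
# blackbody emission. The 344 W/m^2 global-average input is the article's figure.

SIGMA = 5.670e-8          # Stefan-Boltzmann constant, W m^-2 K^-4
MEAN_SOLAR_INPUT = 344.0  # W m^-2, sunlight averaged over the whole globe
ALBEDO = 0.30             # assumed fraction of sunlight reflected back to space


def equilibrium_temperature(solar_input: float, albedo: float) -> float:
    """Temperature (K) at which emitted infrared balances absorbed sunlight."""
    absorbed = solar_input * (1.0 - albedo)
    # Radiation balance: SIGMA * T**4 == absorbed, so T = (absorbed / SIGMA) ** 0.25
    return (absorbed / SIGMA) ** 0.25


t_no_greenhouse = equilibrium_temperature(MEAN_SOLAR_INPUT, ALBEDO)
t_observed = 15.0 + 273.15  # observed global mean surface temperature, K

print(f"Without greenhouse gases: {t_no_greenhouse - 273.15:.1f} °C")
print(f"Natural greenhouse warming: {t_observed - t_no_greenhouse:.1f} °C")
```

Under these assumptions the no-greenhouse temperature comes out near −18 °C. That is roughly 33 °C below the observed 15 °C, which is consistent with the statement that Earth would be at least 20 °C colder without greenhouse gases.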

In reality, of course, the situation is more complicated. Warmer air holds more water vapor, which is itself an important greenhouse gas. If we add carbon dioxide to the atmosphere, water vapor becomes more abundant and amplifies the temperature increase that would result from the carbon dioxide alone. Indeed, somewhat more than half of any human-induced global warming comes from this and other feedback processes. (One critical difference between the effect of carbon dioxide and the effect of water vapor is that carbon dioxide lingers for decades to centuries in the atmosphere, but water vapor readily rains out when conditions change.) Scientists are working to further refine the theory through better understanding of positive and negative feedback loops introduced by clouds and aerosols, the impact of other greenhouse gases such as methane, and the role of land-cover changes such as deforestation, among other things.
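The amplification described here is often summarized with a simple linear feedback model. In that model a direct warming ΔT0 grows to ΔT0 / (1 − f), where f is the fraction of the final warming that is supplied by water vapor and other feedbacks. The numbers below are illustrative assumptions, not estimates from this article. The sketch shows that whenever f exceeds 0.5, feedbacks account for more than half of the total warming.

```python
# Simple linear feedback model (illustrative values, not measurements).
# Feedbacks add f degrees for every degree of final warming, so
# total = direct + f * total, which rearranges to total = direct / (1 - f).


def total_warming(direct_warming: float, feedback_fraction: float) -> float:
    """Equilibrium warming once feedbacks have acted (requires 0 <= f < 1)."""
    if not 0.0 <= feedback_fraction < 1.0:
        raise ValueError("feedback fraction must be in [0, 1) for a stable climate")
    return direct_warming / (1.0 - feedback_fraction)


direct = 1.2  # assumed warming (°C) from the added carbon dioxide alone
for f in (0.0, 0.4, 0.6):
    total = total_warming(direct, f)
    share = (total - direct) / total  # fraction of the warming due to feedbacks
    print(f"f = {f:.1f}: total {total:.2f} °C, feedback share {share:.0%}")
```

In this simplified model the feedback share always equals f. The model says nothing about how large f actually is, and that is the quantity climate scientists are working to pin down.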

Indeed, these feedback loops are what make it challenging to determine how rapidly climate is changing and what is causing the change. We know that the atmospheric abundance of carbon dioxide has increased by more than a fifth since scientists first made direct measurements in the late 1950s, and by roughly a third since preindustrial times. By the basic greenhouse theory, this fact alone would make us expect changes in climate. But how do we conclusively relate changing temperature and rising sea levels to carbon dioxide or any other cause, given all of the feedback mechanisms involved? And, even more important, how do we reliably distinguish human impacts from those that occur naturally?
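The claim that rising carbon dioxide "alone would make us expect changes in climate" can be made quantitative with a widely used simplified expression for carbon dioxide's radiative forcing: ΔF ≈ 5.35 ln(C/C0) W/m² (Myhre and colleagues, 1998). The formula is an addition here, not something taken from this article. So are the approximate concentrations used: about 280 ppm before industrialization and about 380 ppm in the mid-2000s. The sketch simply evaluates the expression for a few levels.

```python
import math

# Simplified CO2 radiative-forcing expression (Myhre et al., 1998):
#   delta_F = 5.35 * ln(C / C0)   [W/m^2]
# Concentrations are approximate and used only for illustration.
ALPHA = 5.35           # W/m^2, empirical coefficient
PREINDUSTRIAL = 280.0  # ppm, approximate preindustrial CO2


def co2_forcing(concentration_ppm: float, reference_ppm: float = PREINDUSTRIAL) -> float:
    """Extra energy (W/m^2) trapped relative to the reference concentration."""
    return ALPHA * math.log(concentration_ppm / reference_ppm)


for ppm in (380.0, 500.0, 550.0, 600.0):
    print(f"{ppm:.0f} ppm: {co2_forcing(ppm):.2f} W/m^2, "
          f"{ppm / PREINDUSTRIAL - 1:.0%} above preindustrial")
```

Because the dependence is logarithmic, each additional part per million traps slightly less extra energy than the one before, although the total keeps rising.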

As with any field of science, the answers lie in finding the theory that best fits the evidence. Basic physics tells us that added carbon dioxide must warm the atmosphere and oceans unless something else changes to counteract the additional trapped heat. It is entirely possible that natural global warming, due to solar variation or other mechanisms, could be occurring as well. But that doesn’t let us off the hook: our emissions and other activities must still be trapping heat and affecting the climate.

Here’s where things get challenging. The many feedback mechanisms, including absorption of atmospheric carbon into the ocean and changes in cloud patterns, mean that our seemingly simple question about additional heat turns rapidly into a complex question about how the climate responds. Computer models are the only practical means of bringing together all of the interactions and feedback mechanisms to produce an answer. What scientists find is that they can account for the observed patterns of temperature change around the world only if they build in human carbon dioxide emissions; natural variations such as those of solar output, volcanism, and cosmic rays just don’t do it. It is possible to find situations for which the greenhouse theory fails. But it succeeds far more often than any competing theory, and its success rate keeps improving.

If you accept that climate management could be desirable, the next reasonable question is whether it is feasible. The first step on the road to climate management is to improve our ability to forecast the climate. That won’t be easy, because climate is chaotic—in the mathematical sense. Chaotic systems are characterized by high sensitivity to initial conditions, meaning that their future evolution can be accurately predicted only if their present state is known with great precision. To make detailed predictions of climate, we’d need to know recent climate with a level of precision unobtainable in practice. What saves us is that we do not need detailed climate predictions, but rather averages and trends, which are readily seen in chaotic systems. For example, it would be futile to try to accurately forecast the average temperature in a city during a single day a year from now. But predicting the average temperature in that city during the month of August is a realistic challenge, and averages are what’s really needed for climate forecasting.
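The distinction between unpredictable details and predictable averages can be demonstrated with the Lorenz equations, a three-variable toy model of convection published in 1963. This is a swapped-in illustration, not a climate model. The parameters (σ = 10, ρ = 28, β = 8/3) are the classic textbook values. The run length, time step, and one-part-in-a-billion perturbation are arbitrary choices. Two runs that start almost identically soon disagree completely, yet their long-run averages of z nearly match.

```python
# Toy demonstration of chaos using the Lorenz (1963) equations. It is NOT a
# climate model; it only shows that individual trajectories are unpredictable
# while long-run averages are robust.

SIGMA, RHO, BETA = 10.0, 28.0, 8.0 / 3.0  # classic Lorenz parameters
DT = 0.01                                  # integration time step


def lorenz(state):
    x, y, z = state
    return (SIGMA * (y - x), x * (RHO - z) - y, x * y - BETA * z)


def rk4_step(state, dt=DT):
    """Advance the state by one fourth-order Runge-Kutta step."""
    k1 = lorenz(state)
    k2 = lorenz(tuple(s + 0.5 * dt * k for s, k in zip(state, k1)))
    k3 = lorenz(tuple(s + 0.5 * dt * k for s, k in zip(state, k2)))
    k4 = lorenz(tuple(s + dt * k for s, k in zip(state, k3)))
    return tuple(s + dt / 6.0 * (a + 2 * b + 2 * c + d)
                 for s, a, b, c, d in zip(state, k1, k2, k3, k4))


def run(initial, steps=50_000, spin_up=1_000):
    """Return the x-trajectory and the time-averaged z after a spin-up period."""
    state, xs, z_sum = initial, [], 0.0
    for i in range(steps):
        state = rk4_step(state)
        xs.append(state[0])
        if i >= spin_up:
            z_sum += state[2]
    return xs, z_sum / (steps - spin_up)


# Two runs whose starting points differ by one part in a billion.
xs_a, mean_z_a = run((1.0, 1.0, 1.0))
xs_b, mean_z_b = run((1.0 + 1e-9, 1.0, 1.0))

# "Weather": the individual trajectories end up completely different...
max_gap = max(abs(a - b) for a, b in zip(xs_a, xs_b))
# ...while "climate", the long-run average, nearly agrees.
print(f"largest difference in x: {max_gap:.1f}")
print(f"average z: {mean_z_a:.2f} vs {mean_z_b:.2f}")
```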

Simulations run on supercomputers are the key to both weather and climate forecasts. Software simulates the planet as a giant grid, incorporating the influences of the atmosphere, oceans, and terrain at each grid point. Scientists initialize the models with today’s climatic conditions and then run the simulation over and over, tweaking the initial conditions each time, and averaging the results. In this way they can predict how both natural and human-caused forces will alter the climate in coming decades. But climate forecasting’s challenges go beyond those of weather forecasting; among other things, effective climate forecasting also requires that scientists estimate such things as the number of cars and factories in the year 2050, quantities that are strongly influenced by economic cycles and public policy and are therefore very hard to predict. With all of these considerations, there is no simple metric to tell us how quickly our climate forecasting capabilities will improve. Best estimates from several scientists suggest that it will be a decade or two before we will know in detail, for any given country, whether the long-term impact will be more rainfall or less, a temperate climate or an extreme one.
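The run-it-many-times-and-average procedure can be sketched with an even simpler chaotic system, the logistic map x → 4x(1 − x), standing in for a climate model. The map, the perturbation size, the member count, and the function names are all illustrative assumptions. A real model perturbs a vastly larger state.

```python
import random

# Toy ensemble forecast. The logistic map x -> 4x(1 - x) stands in for a
# climate model here; it is an illustrative assumption, not a real model.


def toy_model(x0: float, steps: int) -> float:
    """Run the toy chaotic model forward and return its final state."""
    x = x0
    for _ in range(steps):
        x = 4.0 * x * (1.0 - x)
    return x


def ensemble_forecast(x0, members, spread, steps, seed=0):
    """Run many copies from slightly perturbed starts; return results and mean."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    finals = [toy_model(x0 + rng.uniform(-spread, spread), steps)
              for _ in range(members)]
    return finals, sum(finals) / len(finals)


finals, mean = ensemble_forecast(x0=0.3, members=1000, spread=1e-6, steps=50)
print(f"members range from {min(finals):.3f} to {max(finals):.3f}")
print(f"ensemble mean: {mean:.3f}")
```

Individual members scatter across almost the whole interval from 0 to 1, so no single run is a useful forecast of step 50. The ensemble mean, however, lands near 0.5, the long-run average of this particular map.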

Before we can make forecasts accurate enough to let us engineer the global climate, we will need three things: much more powerful supercomputers, better observations for initializing and testing the models they run, and deeper understanding of climate physics. The one thing we can really count on in this list is the steady advance of computational power, which will allow scientists to run models faster and with finer detail. The most powerful supercomputer in 1993 could perform 59.7 billion floating-point operations per second, or 59.7 gigaflops. Last year’s top number cruncher, the IBM BlueGene/L at Lawrence Livermore National Laboratory, in California, ran nearly five thousand times as fast, at 280 teraflops. And supercomputer makers are on target to reach 10 000 teraflops by 2012. The advance will let scientists simulate Earth at finer scales and incorporate more realistic physics while keeping the time it takes to run a simulation to a matter of months.
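A rough way to translate the jump from 280 to 10 000 teraflops into "finer scales" is to assume that a model's cost grows with the cube of its horizontal resolution. Under that assumption, halving the grid spacing quadruples the number of grid columns and, because of numerical stability limits, roughly halves the usable time step. That cubic scaling is a common rule of thumb and an assumption here, not a figure from the article. It ignores vertical levels and added physics. The 200 km starting grid is likewise a hypothetical example.

```python
# Rule-of-thumb sketch (assumption): model cost scales as (1 / grid_spacing)**3,
# i.e. 4x more grid columns plus a 2x shorter time step per halving of spacing.


def affordable_refinement(old_flops: float, new_flops: float, exponent: float = 3.0) -> float:
    """Factor by which grid spacing can shrink while run time stays the same."""
    return (new_flops / old_flops) ** (1.0 / exponent)


speedup = 10_000 / 280  # teraflops figures quoted in the text
factor = affordable_refinement(280.0, 10_000.0)
print(f"speedup: {speedup:.1f}x")
print(f"grid spacing can shrink by about {factor:.2f}x")
# Hypothetical example: a 200 km grid refined by that factor.
print(f"a 200 km grid could become roughly {200 / factor:.0f} km")
```

Under this assumption, a roughly 36-fold speedup buys only about a 3.3-fold finer grid. This is one reason steady gains in computing power translate into fairly gradual improvements in spatial detail.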

But even if computing power continues to advance for the foreseeable future at its present pace, and Moore’s Law suggests it will, a lack of data, rather than our ability to process them, will quickly become the fundamental limitation to our forecasting ability. Today’s climate-observing systems were designed primarily to monitor weather and lack the instruments we’ll need for reliable forecasting. We have only recently developed, for example, the first satellites to measure such critical climate parameters as the vertical distribution of clouds and the global concentrations of aerosols—small liquid or solid particles such as soot and dust. Clouds both trap Earth’s radiated energy, warming the planet, and reflect sunlight, cooling it. Knowing their vertical distribution is crucial, because the higher clouds are in the atmosphere, the more opportunity they have to reflect the sun’s energy before it is absorbed. Aerosols also play an important role in reflecting sunlight, again depending on their altitude.

So how do we advance from prediction to manipulation? Very carefully. A report in 1992 by the U.S. National Research Council summarized a host of geoengineering methods for countering global warming [see illustration, "Nine Ways to Cool the Planet"]. It described armadas of reflective balloons, aircraft fleets dispersing anti-greenhouse-gas chemicals, and space-based mirrors. Fifteen years later, most of them still seem a little wacky. Indeed, our current view of geoengineering is probably analogous to Jules Verne’s 1860s view of spaceflight. Verne’s vision was prophetic, but the technology needed to really get us to the moon required another century of innovation. Our ideas today about geoengineering aren’t likely to be much more realistic than were Verne’s about spaceflight. But a century is a long time for engineers, and our early ideas are almost certain to be only a hint of the solutions that we will ultimately implement.

The first serious thinking about geoengineering began at the close of World War II, according to the companion Web site to the book The Discovery of Global Warming (Harvard University Press, 2003), by science historian Spencer Weart. After the war, some scientists were claiming that land use, such as felling forests, could alter local weather, and General Electric scientist Irving Langmuir was developing the chemical cloud-seeding techniques still in use today, though to questionable effect. Concerned that climate or weather could become weapons in whatever war came next, none other than computing pioneer John von Neumann took action. He brought together a group of top scientists at Princeton University who agreed that it was possible to alter the weather and the climate, launching geoengineering research in earnest. During this time, the Soviets even sought to melt Arctic sea ice to open up navigation. One strategy was to build a dam across the Bering Strait and pump Arctic water into the Pacific, thereby drawing warm Atlantic water in to melt the ice. For its part, the United States went so far as to try to flood North Vietnamese supply lines in the 1970s using rainmaking technology. None of these schemes did anything more than prompt the United Nations in 1978 to ban “environmental modification techniques” in warfare.

Geoengineering faded into the background as the efficacy of rainmaking technology came into question. Scientists realized they could not reliably predict the outcome of geoengineering attempts, and society became skeptical of big technological solutions. Even in the more recent debates over global warming, climate scientists have been reluctant to discuss geoengineering solutions.

But an important shift in perception began just last August. The impetus was an article by Paul J. Crutzen in the respected journal Climatic Change. Crutzen, an atmospheric chemist at the Max Planck Institute for Chemistry, in Mainz, Germany, shared the 1995 Nobel Prize for explaining the formation and destruction of ozone. He proposed injecting sulfate particles into the stratosphere—the region between 15 and 50 kilometers up—to reflect sunlight and cool the planet. This approach mimics the natural global cooling known to take place when volcanoes erupt. We have excellent examples of just how this works; the June 1991 eruption of Mount Pinatubo, in the Philippines, dropped the global average temperature by 0.5 °C for the following year.

Burning fossil fuels throws 55 billion metric tons per year of sulfur into the air, according to research by David I. Stern, an economist at Rensselaer Polytechnic Institute, in Troy, N.Y. About half of that turns to sulfate. But Crutzen thinks we could compensate for even a doubling of carbon dioxide with just about 10 percent of that, or 5.3 billion metric tons per year, and at a cost of $25 per metric ton of sulfur or $132.5 billion total. The key is to inject sulfur into the stratosphere near the equator instead of near Earth’s surface as polluting factories and power plants do. If that’s done, the sulfate will stay aloft and will reflect the sun for up to two years. Of course, there would be side effects. Acid rain would increase, and the ozone layer that we have been so carefully repairing would likely thin again.

There are, certainly, other ways besides intentionally polluting the atmosphere to put Earth in the shade. J. Roger P. Angel, an astronomer at the University of Arizona, in Tucson, and a premier optical telescope designer, proposed placing a system of 1-meter-wide refractive screens in space that would divert almost 2 percent of the sun’s rays. These screens would have to be located at the so-called inner Lagrange point, a spot where a satellite would remain essentially stationary between Earth and the sun. Angel estimates that getting all 20 million metric tons of the screens there would cost about $4 trillion, making Crutzen’s sulfate-seeding concept seem like a bargain.
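A quick consistency check on the "almost 2 percent" figure: blocking a fraction of incoming sunlight removes roughly that fraction of the sunlight Earth absorbs. The sketch uses the 344 W/m² global-average input quoted earlier in the article. It also assumes an albedo of 0.30 and the commonly cited forcing of about 3.7 W/m² for a doubling of carbon dioxide (5.35 ln 2). Both of those are assumptions added here, and the 1.8 percent value is simply a stand-in for "almost 2 percent."

```python
import math

MEAN_SOLAR_INPUT = 344.0                 # W/m^2, globally averaged (from the text)
ALBEDO = 0.30                            # assumed planetary albedo
DOUBLING_FORCING = 5.35 * math.log(2.0)  # ~3.7 W/m^2, simplified CO2 expression

absorbed = MEAN_SOLAR_INPUT * (1.0 - ALBEDO)  # sunlight absorbed, ~241 W/m^2
needed_fraction = DOUBLING_FORCING / absorbed  # share of sunlight to block


def cooling_from_shade(blocked_fraction: float) -> float:
    """Reduction (W/m^2) in absorbed sunlight if a fraction of it is blocked."""
    return absorbed * blocked_fraction


print(f"fraction needed to offset doubled CO2: {needed_fraction:.1%}")
print(f"cooling from blocking 1.8%: {cooling_from_shade(0.018):.1f} W/m^2")
```

On these assumptions, offsetting a doubling of carbon dioxide takes about 1.5 percent of the sunlight. A shade diverting almost 2 percent therefore has a modest margin.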

Some have suggested that the best solution is to enhance Earth’s cloud cover, nature’s own solar reflector. Researchers showed in the late 1980s that an increase of 4 percent in the reflectivity of marine stratocumulus clouds would offset a doubling of carbon dioxide. Clouds both reflect sunlight and trap Earth’s reradiated heat, so the trick would be to ensure that reflection dominated trapping.

For that reason, the process works best over oceans, where the increased reflection predominates. John Latham, at the National Center for Atmospheric Research, in Boulder, Colo., suggests improving the reflectivity and longevity of low-lying marine clouds by blowing seawater droplets into the air. The salt particles left behind act as nuclei on which water vapor in the air condenses, making the clouds brighter and more stable. Stephen Salter, a professor of engineering design at the University of Edinburgh, has even designed a multimillion-dollar ship to do the job. He estimates that it would take more than 5000 such vessels, each spewing 10 kilograms per second of seawater spray, to counter the expected increase in carbon dioxide over the coming decades. Implementing such techniques would require us to better understand cloud dynamics, including how clouds form, evolve, and dissipate in response to such artificial stimulation.
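The scale of the spray fleet follows directly from the two numbers quoted here. The sketch takes exactly 5000 ships as a lower bound and assumes a 365.25-day year of continuous operation. It says nothing about how much of the spray actually reaches the clouds.

```python
# Scale of the spray fleet described in the text: at least 5000 ships,
# each delivering 10 kg of salt water per second (continuous operation assumed).
SHIPS = 5000
SPRAY_RATE_KG_PER_S = 10.0
SECONDS_PER_YEAR = 365.25 * 24 * 3600

fleet_rate = SHIPS * SPRAY_RATE_KG_PER_S                # kg of salt water per second
annual_tonnes = fleet_rate * SECONDS_PER_YEAR / 1000.0  # metric tons per year

print(f"fleet output: {fleet_rate / 1000:.0f} metric tons per second")
print(f"about {annual_tonnes / 1e9:.1f} billion metric tons of salt water a year")
```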

Another ocean-centered idea, though an ecologically questionable one, is to use blooms of photosynthetic organisms called phytoplankton to pull carbon dioxide out of the air. Phytoplankton use some of the carbon dioxide they take up to build their bodies. When they die, they sink to the ocean floor, carrying away some carbon.

Puzzled by the vast stretches of open ocean where few phytoplankton live, oceanographer John Martin hypothesized in a 1989 Nature article that those areas had too little iron to support life. Several groups of scientists have since tested the hypothesis by spreading iron dust in the oceans. The iron indeed triggered a temporary bloom of phytoplankton, and a significant amount of carbon (thousands of tons in one experiment) sank to the ocean bottom. However, some scientists are concerned that in a full-scale geoengineering project, the ocean’s circulation would not be strong enough to keep the sea surface from becoming saturated with dead plankton, choking off the growth of new plankton, limiting the amount of carbon taken in, and, quite possibly, killing untold millions of other sea creatures.

Admittedly, many of these proposals seem fantastically expensive, risky, or both. But they are neither the last nor the best ideas science and engineering will have to offer. For example, in coming up with new ways to cool the planet, the chaotic nature of climate itself may rescue us. We know that the climate system amplifies some otherwise small influences. Greenhouse gases are one such amplifier. Recall that carbon dioxide makes up only 0.04 percent of the atmosphere, yet it is largely the cause of the crisis we find ourselves in now. What other such amplifiers can we find? It is possible, for example, that we might identify chemical “antidotes” that could rapidly break down certain greenhouse gases, enabling us to fine-tune their abundances. We might also find we can selectively modify how much solar energy reaches Earth’s surface through something as simple as directing commercial airline traffic to create contrails over one region but not another, and leveraging the ensuing cloud generation. If we could find such subtle amplification or alteration mechanisms, and discover how to use them safely, we might have a shot at controlling climate.

If and when we find we can alter climate to our needs, we may discover that our big challenges are just beginning. First, there would be the matter of choosing the climate. Who would get to pick? Climate affects everyone, so perhaps the best way to decide on one is by vote. A vote could, for instance, produce the mandate that our chosen climate should be one that most effectively protects the world’s ecosystems as we know them now. In that case, we might then decide to counteract natural climate changes. Or perhaps the mandate would establish a goal of complementing increasing urbanization through climate management that balances the impact of cities.

Before we picked a climate, we would need to evolve the political, commercial, and academic institutions to get us there. International institutions, in particular, would need to be strengthened to support the inevitably global solutions. The new technical discipline of Earth systems engineering would have to be expanded and countless practitioners trained. We would have to develop complex new computer models, not only to forecast climate but also to understand how today’s costs should be balanced against tomorrow’s benefits. The private sector would need to envision climate change as opportunity, not impediment. The complete transition will take decades, if not centuries, but it can be accomplished in small steps.

The risks, of course, would be enormous. Virtually no significant technological breakthrough has ever occurred that nations did not find a way to apply to warfare, and the possibilities of global-scale climate alteration for military purposes would be staggering. Even putting those aside, the temptation of nations to use climate to gain economic advantage will be great. All human institutions suffer from mismanagement to some extent—those associated with climate will be no different. Any approach to climate management would have to be very robust to compensate for such failings.

Some may argue that humankind will never be able to manage projects that are so big and risky. Much the same was said about nuclear weapons, yet civilization has so far succeeded in controlling their enormous risk. In the case of climate change, the risks of not acting—relying on the belief that human climate influence can be eliminated soon and forever-after avoided—could be even more dangerous.

About the Author

William B. Gail is director of strategic development at Microsoft’s Virtual Earth unit. He serves on the U.S. National Research Council’s “Decadal Study” for Earth science and applications and has worked on various aspects of Earth science and technology policy.

To Probe Further

The Intergovernmental Panel on Climate Change (https://www.ipcc.ch) is producing its Fourth Assessment Report, “Climate Change 2007.” This month the portion that deals with mitigating climate change will be released.

The essay that recently raised geoengineering’s standing among atmospheric scientists is “Albedo Enhancement by Stratospheric Sulfur Injections: A Contribution to Resolve a Policy Dilemma?” by Paul J. Crutzen, Climatic Change, August 2006, pp. 211-19.