Those thrilling moments when a soccer player kicks home the winning goal in the World Cup final or Beyoncé debuts new dance choreography in concert might someday be recreated in full 3-D motion, down to the smallest piece of confetti, and played back from almost any angle. Such a possibility comes from a new motion-capture technique capable of reconstructing scenes captured by more than 500 video cameras mounted inside a two-story geodesic dome.

The new technique comes from Carnegie Mellon University researchers working in the Panoptic Studio—a video lab with a camera system capable of capturing 100,000 different points in motion at any time. Researchers developed a technique that uses consistent motion patterns as a cue for identifying and tracking certain points on an object captured by cameras. And it all works without the need for physical markers, such as those used by Hollywood motion-capture systems to translate the acting performance of Andy Serkis into the movements of the ape leader Caesar in the newest "Planet of the Apes" films.

"At some point, extra camera views just become 'noise,'" said Hanbyul Joo, a Ph.D. student in the Robotics Institute at Carnegie Mellon University, in a press release. "To fully leverage hundreds of cameras, we need to figure out which cameras can see each target point at any given time."

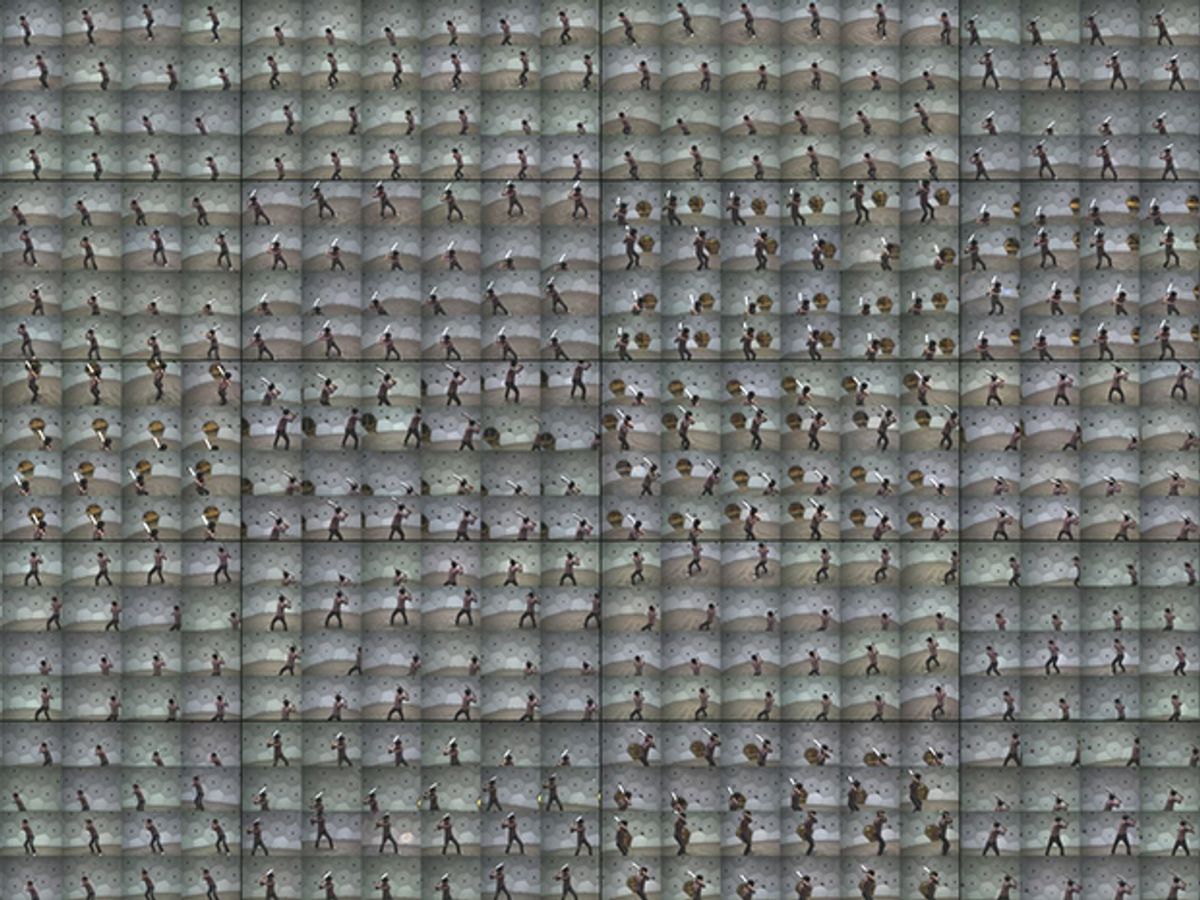

The camera system uses the motion patterns to figure out which of the Panoptic Studio's cameras (480 video cameras plus an additional 30 high-definition video cameras) arrayed around the geodesic dome have the best views of a particular moving object. If many cameras are tracking a particular point on a person's body as moving to the right, a camera that detects motion in the opposite direction is likely picking up another object or can't see that particular point from its angle. The system can then figure out which video feeds to use or ignore to reconstruct the 3-D motion of that particular point.
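The idea of keeping only the cameras whose observed motion agrees with the majority can be illustrated with a small Python sketch. This is not the researchers' actual method: the function name `select_consistent_cameras`, the `min_cosine` threshold, and the assumption that every camera's motion estimate is already expressed in one shared 2-D frame are all hypothetical simplifications made for illustration.

```python
import math
from statistics import median


def unit(v):
    """Scale a 2-D vector to length 1; a zero vector stays (0.0, 0.0)."""
    x, y = v
    n = math.hypot(x, y)
    if n == 0:
        return (0.0, 0.0)
    return (x / n, y / n)


def select_consistent_cameras(motions, min_cosine=0.5):
    """Split cameras into (keep, ignore) by agreement with the consensus motion.

    motions maps a camera id to the (dx, dy) motion that camera observed for
    one tracked point. Assumption: all vectors share a common frame.
    """
    units = {cam: unit(v) for cam, v in motions.items()}
    # Component-wise median direction: a few outlier cameras barely move it.
    consensus = unit((
        median(u[0] for u in units.values()),
        median(u[1] for u in units.values()),
    ))
    keep, ignore = [], []
    for cam, u in units.items():
        # Cosine of the angle between this camera's direction and the consensus.
        cosine = u[0] * consensus[0] + u[1] * consensus[1]
        (keep if cosine >= min_cosine else ignore).append(cam)
    return keep, ignore


# Three cameras see the point moving right; one sees motion to the left.
keep, ignore = select_consistent_cameras({
    "cam_a": (1.0, 0.1),
    "cam_b": (0.9, -0.1),
    "cam_c": (1.1, 0.0),
    "cam_d": (-1.0, 0.05),
})
print(keep, ignore)
```

In this toy case the three cameras that agree are kept and `cam_d` is set aside, mirroring the use-or-ignore decision described above. A real multi-camera system would have to account for each camera's viewpoint before comparing motions, which this sketch deliberately leaves out.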

Such technology could prove a huge help on feature film sets. Current motion-capture systems used by Hollywood films such as "Dawn of the Planet of the Apes" also use an array of many cameras, but they specifically track markers placed on the bodies of actors. Carnegie Mellon's system needs no such markers, which could enable future Hollywood productions to create both digital actors and digital scenes.

Other researchers have shown that they can reconstruct 3-D still scenes using a large number of cameras, including the cameras of smartphones. But Carnegie Mellon's system has proven capable of reconstructing incredibly detailed, full-motion scenes, such as a person tossing confetti in the air, while tracking the individual confetti pieces as they get fed into a fan.

The researchers also raised the possibility of a similar system harnessing the video cameras in spectators' phones at concerts and sporting events. But that would depend on somehow getting permission to access everyone's personal devices.

Jeremy Hsu has been working as a science and technology journalist in New York City since 2008. He has written on subjects as diverse as supercomputing and wearable electronics for IEEE Spectrum. When he’s not trying to wrap his head around the latest quantum computing news for Spectrum, he also contributes to a variety of publications such as Scientific American, Discover, Popular Science, and others. He is a graduate of New York University’s Science, Health & Environmental Reporting Program.