Silicon has given us the computers we have today by allowing billions of transistors to be packed onto a single chip. And it may one day lead to far more powerful computers, now that researchers have demonstrated a quantum photonic processor: a silicon chip that manipulates individual photons.

“We made a photonic quantum processor, which creates and manipulates two qubits encoded in photons for universal two-qubit quantum computation,” says Xiaogang Qiang, a research associate at the National University of Defense Technology in Changsha, China. Xiaogang was the lead author of the paper describing the work, published in the September issue of Nature Photonics.

Quantum computing is based on the weird rules of quantum mechanics, which give it the potential to perform computations that traditional computers could not complete in any practical amount of time, such as quickly breaking cryptographic codes or simulating the big bang. Quantum computers are built from qubits, the quantum analogue of the bits in classical computing. But unlike a classical bit, which is either 1 or 0, a qubit can be in a superposition of both states at once, which is part of what gives quantum computers their calculating power. Qubits can also be entangled, so that measuring one qubit provides information about the state of another.
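To make superposition and entanglement a little more concrete, here is a minimal sketch in plain Python; it is not the researchers' code. It assumes the textbook picture of a two-qubit state as four complex amplitudes and uses the standard recipe for entangling two qubits: a Hadamard gate on the first qubit followed by a controlled-NOT. The function names are illustrative choices.

```python
import math

# Two-qubit state as four complex amplitudes, in the basis order
# |00>, |01>, |10>, |11> (left digit = qubit 0, right digit = qubit 1).

def hadamard_q0(state):
    """Put qubit 0 into an equal superposition:
    |0x> -> (|0x> + |1x>)/sqrt2 and |1x> -> (|0x> - |1x>)/sqrt2."""
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]

def cnot_q0_to_q1(state):
    """Flip qubit 1 when qubit 0 is 1, i.e. swap the |10> and |11> amplitudes."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]

def probabilities(state):
    """Born rule: each measurement outcome has probability |amplitude|^2."""
    return [abs(a) ** 2 for a in state]

def prob_q1_is_1_given_q0(state, q0_value):
    """Probability that qubit 1 reads 1, given qubit 0 was measured as q0_value.
    Assumes that q0_value has a nonzero probability of being observed."""
    p = probabilities(state)
    p_q1_0 = p[2 * q0_value]      # outcome |q0_value, 0>
    p_q1_1 = p[2 * q0_value + 1]  # outcome |q0_value, 1>
    return p_q1_1 / (p_q1_0 + p_q1_1)

start = [1 + 0j, 0j, 0j, 0j]        # both qubits in |0>
superposed = hadamard_q0(start)     # qubit 0 now holds 0 and 1 at once
bell = cnot_q0_to_q1(superposed)    # entangled state (|00> + |11>)/sqrt2

print([round(p, 3) for p in probabilities(bell)])  # [0.5, 0.0, 0.0, 0.5]
print(prob_q1_is_1_given_q0(bell, 0), prob_q1_is_1_given_q0(bell, 1))  # 0.0 1.0
```

Each qubit on its own is a 50/50 coin, yet once qubit 0 has been measured, qubit 1's value is certain: that correlation is what the article means by measuring one qubit revealing information about the other.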

Companies such as IBM and Google are hard at work trying to develop devices with enough linked qubits to perform powerful calculations, but so far they have reached only a few dozen qubits. The leading qubit technologies are superconducting circuits chilled to near absolute zero and trapped ions held in place by electromagnetic fields and manipulated with lasers. The trouble is that the more qubits a system has, the more likely they are to interact with the outside world and lose their fragile quantum state, or coherence, which renders them useless.

But photons shouldn’t have that problem, says Xiaogang, who built the chip with a team of researchers based primarily at the University of Bristol, in the United Kingdom. “Photons do not interact with [the] environment, so we do not suffer with short coherence time,” he says. Photons can also be manipulated with ultrahigh precision, he says. And, of course, they travel at the speed of light. On top of that, a photonic chip can take advantage of the entire silicon-manufacturing infrastructure that the computer industry has built up.

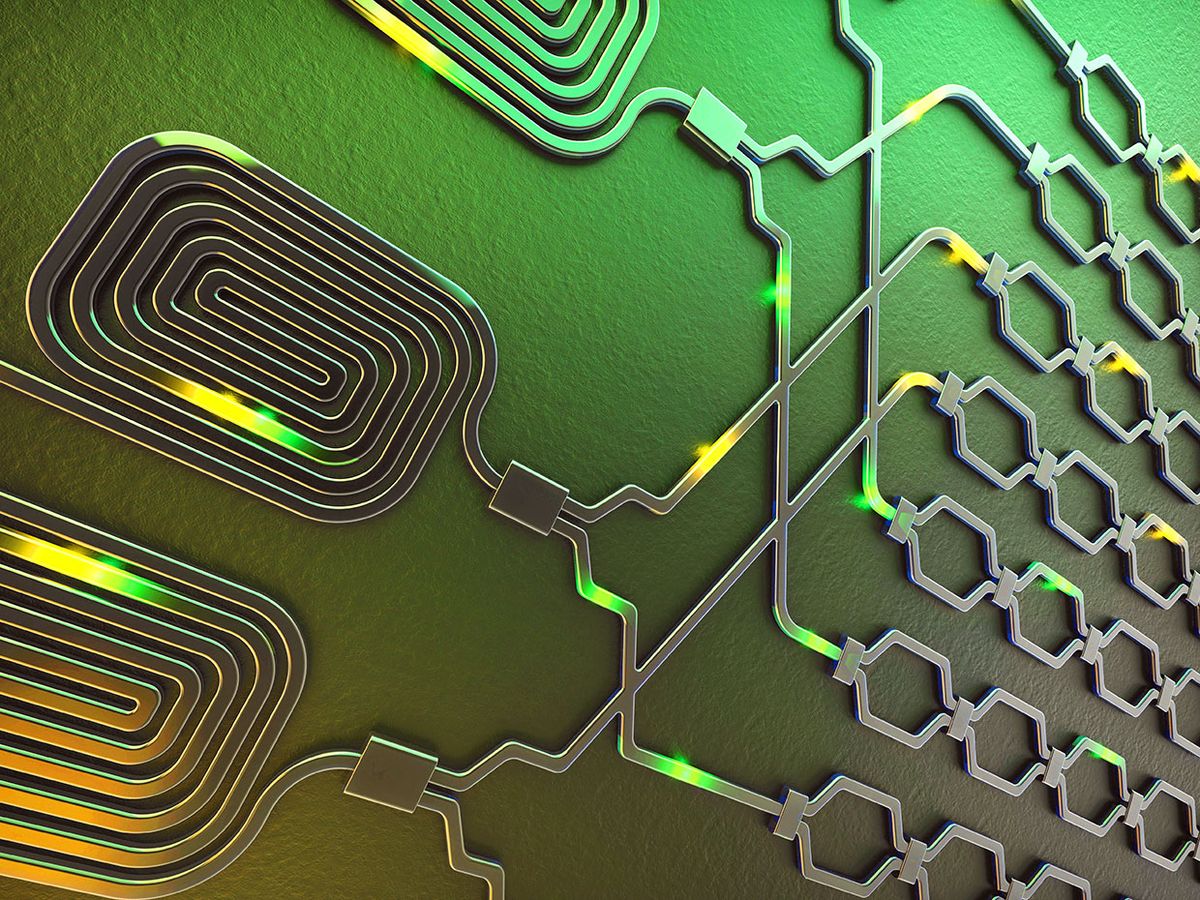

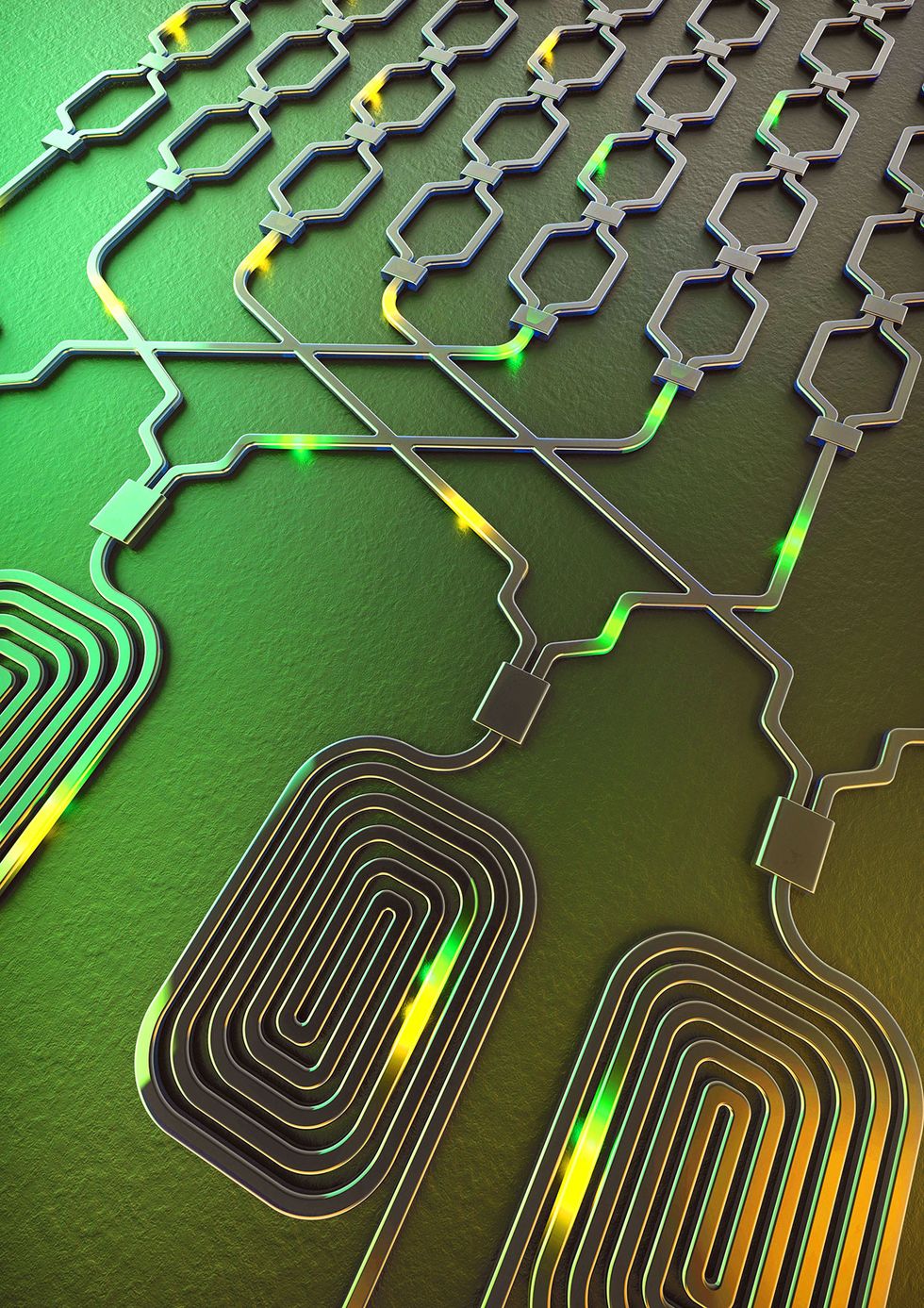

The chip contains many interferometers, which split the photons into different spatial modes. Each mode travels through its own waveguide, so a photon in one waveguide represents a 1, while a photon in the other represents a 0. Because the photons are entangled, measuring which path one photon takes tells you which path its partner is on.
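The path encoding described above can be sketched in a few lines of Python. This is a simplified illustration, not the team's design: it models a single photon as two complex amplitudes, one per waveguide, and uses an idealized lossless 50:50 beam splitter written in the common symmetric convention (1/√2)[[1, i], [i, 1]]. Which waveguide is labeled 0 and which is 1, and the names `LOGICAL_0`, `beam_splitter`, and `detection_probabilities`, are arbitrary choices made for illustration.

```python
import math

# A path-encoded qubit: one photon shared between two waveguides.
# amps = (amplitude in waveguide 0, amplitude in waveguide 1).
# Which physical waveguide is called 0 or 1 is an arbitrary labelling choice.
LOGICAL_0 = (1 + 0j, 0j)   # photon definitely in waveguide 0
LOGICAL_1 = (0j, 1 + 0j)   # photon definitely in waveguide 1

def beam_splitter(amps):
    """Ideal, lossless 50:50 beam splitter,
    symmetric convention (1/sqrt2) * [[1, i], [i, 1]]."""
    a0, a1 = amps
    s = 1 / math.sqrt(2)
    return (s * (a0 + 1j * a1), s * (1j * a0 + a1))

def detection_probabilities(amps):
    """Probability of detecting the photon in waveguide 0 and in waveguide 1."""
    return tuple(abs(a) ** 2 for a in amps)

print(detection_probabilities(LOGICAL_0))   # (1.0, 0.0)
split = beam_splitter(LOGICAL_0)            # photon now in a superposition of both paths
print([round(p, 3) for p in detection_probabilities(split)])  # [0.5, 0.5]
```

A photon launched into waveguide 0 is the logical state 0; after one beam splitter it is equally likely to be found in either waveguide, which is a superposition of the two path states.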

The qubit states are encoded using thermo-optic phase shifters, which heat short sections of waveguide to change their refractive index and are controlled by electrical voltages. “Different settings of the phase shifters control the photon’s transmission behaviors in the interferometers, enabling different qubit-state encoding and different quantum operations,” Xiaogang says.
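How a phase setting changes a photon's transmission can be illustrated with a Mach-Zehnder interferometer: two beam splitters with a phase shifter on one arm between them, a common building block in silicon photonic circuits. The sketch below simulates one ideal, lossless interferometer. It is an assumption-laden illustration rather than a model of this particular chip; in particular, `phase_from_voltage` is a hypothetical heater model (phase proportional to dissipated power, so roughly to V²), scaled by an assumed calibration constant `v_pi`, since the article does not describe how the chip is calibrated.

```python
import cmath
import math

def beam_splitter(a0, a1):
    """Ideal 50:50 beam splitter, symmetric convention (1/sqrt2) * [[1, i], [i, 1]]."""
    s = 1 / math.sqrt(2)
    return s * (a0 + 1j * a1), s * (1j * a0 + a1)

def phase_from_voltage(voltage, v_pi):
    """HYPOTHETICAL heater model: phase grows with dissipated power (~V^2),
    scaled so that a drive of v_pi gives a phase of pi. Real devices are
    calibrated individually; this is only an illustrative assumption."""
    return math.pi * (voltage / v_pi) ** 2

def mzi(a0, a1, theta):
    """Mach-Zehnder interferometer: beam splitter, phase theta on arm 0, beam splitter."""
    b0, b1 = beam_splitter(a0, a1)
    b0 *= cmath.exp(1j * theta)   # phase shift applied to one arm only
    return beam_splitter(b0, b1)

def output_probabilities(a0, a1, theta):
    """Probabilities of the photon leaving through waveguide 0 or waveguide 1."""
    c0, c1 = mzi(a0, a1, theta)
    return abs(c0) ** 2, abs(c1) ** 2

# Photon enters waveguide 0; sweep a few phase settings.
for theta in (0.0, math.pi / 2, math.pi, phase_from_voltage(1.0, v_pi=2.0)):
    p0, p1 = output_probabilities(1, 0, theta)
    print(f"theta={theta:.2f} rad: P(out 0)={p0:.2f}, P(out 1)={p1:.2f}")
```

For a photon entering waveguide 0, this idealized interferometer sends it out of waveguide 0 with probability sin²(θ/2) and out of waveguide 1 with probability cos²(θ/2). So a phase of 0 routes the photon entirely to waveguide 1, a phase of π routes it entirely to waveguide 0, and π/2 splits it evenly, which is the sense in which different phase settings produce different operations.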

To scale up the system to something truly useful, the researchers will have to figure out a way to generate many more identical, entangled photons on the chip. There’s also the engineering challenge of fitting enough phase shifters, beam splitters, and other optical components onto the chip to handle all those photons. But Xiaogang says silicon photonics has shown the capacity for cramming many devices into tight spaces and getting them all to work with high precision, “and thus it in fact is the practical way to implement the ultimate large-scale photonic quantum processor.”

Neil Savage is a freelance science and technology writer based in Lowell, Mass., and a frequent contributor to IEEE Spectrum. His topics of interest include photonics, physics, computing, materials science, and semiconductors. His most recent article, “Tiny Satellites Could Distribute Quantum Keys,” describes an experiment in which cryptographic keys were distributed from satellites released from the International Space Station. He serves on the steering committee of New England Science Writers.