Quantum Computers Strive to Break Out of the Lab

Tech giants and startups alike want to bring quantum computing into the mainstream, but success is uncertain

Schrödinger’s cat you’ve met—the one that is both alive and dead at the same time. Now say hello to Schrödinger’s scientists, researchers who are in an eerie state of being simultaneously delighted and appalled.

Schrödinger’s famous thought experiment has come to life in a new form because quantum researchers are on the cusp of a long-sought accomplishment: creating a quantum computer that can do something no traditional computer can match. They’ve spent years battling naysayers who insisted that a quantum computer was an unachievable sci-fi fantasy, and now these researchers are finally beginning to indulge in some well-deserved self-congratulation.

But they are simultaneously cringing at a torrent of press accounts that wildly overstate the progress they’ve made. Exhibit A: Time magazine’s quantum-computing feature of 17 February 2014, with the editors declaring on the cover that “the Infinity Machine” is so revolutionary that it “promises to solve some of humanity’s most complex problems.” And since then, many press accounts have been equally hyperbolic.

Graeme Smith, a quantum-computing researcher at the University of Colorado Boulder, explains the conundrum now facing the field. “It used to be that if you were working in this area, you were the optimist telling everyone how great it’s going to be. But then things shifted, and now researchers like me can’t believe the things we’re hearing about how quantum computers will very soon be able to solve every problem blazingly fast. There’s almost a race to the bottom in making claims about what a quantum computer can do.”

The reason for the current excitement is that sometime this year, quantum computing is expected to reach an important milestone. Led by a research group at Google with another at IBM giving chase, scientists are expected to demonstrate “quantum supremacy.” That means the system will be able to solve a problem that no existing traditional computer has the memory or processing power to tackle.
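To get a feel for why memory becomes the limiting factor, consider the most direct way to imitate a quantum computer on a classical one: store one complex number for every possible configuration of the qubits. The sketch below assumes that brute-force approach and 16 bytes per stored number. Both are illustrative assumptions, not details of the Google or IBM experiments, and cleverer simulation methods exist. The function name `statevector_bytes` is hypothetical.

```python
def statevector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes needed to hold all 2**n_qubits complex amplitudes.

    Assumes a brute-force simulation that stores every amplitude
    explicitly, at 16 bytes each (two 64-bit floats).
    """
    return (2 ** n_qubits) * bytes_per_amplitude


# Each extra qubit doubles the memory a brute-force simulator needs.
for n in (20, 30, 40, 50):
    gib = statevector_bytes(n) / 2 ** 30
    print(f"{n} qubits: {gib:,.2f} GiB")
```

Under these assumptions, 30 qubits already need 16 gibibytes, and 50 qubits need 2^54 bytes, or 16 pebibytes. That doubling with every added qubit is why a machine with only a few dozen well-behaved qubits can outrun a straightforward classical simulation.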

But despite the clickbait proclaiming “the arrival of quantum computing” that this event will inevitably generate, the accomplishment will be less significant than popular accounts might lead you to believe. For one thing, the algorithm Google is running to demonstrate quantum supremacy doesn’t do anything of practical importance: The problem is designed so that it is just beyond the computational reach of any current conventional computer.

Building quantum computers that can solve the sorts of real-world computing problems people actually care about will require many more years of research. Indeed, engineers working on quantum computing at both Google and IBM say that a quantum “dream machine” capable of solving computing’s most vexing problems might still be decades away.

And even then, virtually no one in the field is expecting quantum computers to replace traditional ones—despite popular accounts about how, with Moore’s Law of conventional computing losing steam, quantum stands ready to take over. All current designs for quantum computers involve pairing them with classical ones, which carry out myriad pre- and postprocessing steps. What’s more, many everyday programming tasks that can now be executed quickly on traditional computers might actually run more slowly on a quantum one, given the hardware and software overhead associated with getting a quantum computer to work in the first place.

“I don’t think anyone expects quantum computers to replace classical ones,” says Stephen Jordan, a quantum researcher who worked for many years at the National Institute of Standards and Technology (NIST) and recently joined Microsoft Research in Redmond, Wash. Rather, quantum machines are likely to be useful only for a select group of computing jobs that have massive payoffs but that can’t be readily handled by today’s computers.

The idea for a quantum computer is usually traced to a 1981 speech by the Nobel Prize–winning physicist Richard Feynman, who speculated about the possibility of using the peculiar properties of subatomic particles to model the behavior of other subatomic particles. But a better starting point is a remarkable 1994 paper by Peter Shor, then of AT&T Bell Laboratories and now of MIT, which showed how a quantum computer, assuming one could be built, could quickly find the prime factors of large numbers, thus defeating commonly used public-key encryption systems. Such a computer would have basically broken the Internet.
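Shor’s insight rests on a piece of number theory that can be tried out on an ordinary computer: factoring a number reduces to finding how many times a chosen base must be multiplied by itself before the result, taken modulo that number, returns to 1. The sketch below is a classical illustration of that reduction only. The function names are hypothetical, and `find_order` uses slow brute force in place of the quantum step that gives Shor’s algorithm its speed.

```python
import math


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    This is the step a quantum computer would accelerate; here it takes
    time that grows rapidly with n. Requires gcd(a, n) == 1.
    """
    r, x = 1, a % n
    while x != 1:
        x = (x * a) % n
        r += 1
    return r


def factor_via_order(n: int, a: int):
    """Try to split n using base a; return (p, q) or None if a is unlucky."""
    g = math.gcd(a, n)
    if g != 1:
        return g, n // g          # a already shares a factor with n
    r = find_order(a, n)
    if r % 2 == 1:
        return None               # odd order: pick another base
    y = pow(a, r // 2, n)         # y*y % n == 1 by construction
    if y == n - 1:
        return None               # trivial square root: pick another base
    p = math.gcd(y - 1, n)
    return p, n // p


print(factor_via_order(15, 7))
```

For tiny numbers such as 15 the brute-force search finishes instantly. For the numbers hundreds of digits long used in public-key encryption, no known classical method finds the order in a practical amount of time, which is the gap a large quantum computer would close.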

Many people took notice, especially the U.S. security agencies involved with encryption, which quickly began investing in quantum hardware research. Billions have been spent over the past two decades, mainly by governments. Now that the technology is closer to being commercialized, venture capitalists are getting in on the act as well, a phenomenon very likely correlated with the extent of the current quantum hype.

So how exactly do quantum computers work?

Providing a brief and user-friendly explanation is a forbidding task, which is why Canadian prime minister Justin Trudeau became a geek hero in April of 2016 when he did the job about as well as any layperson ever has. In a press conference appearance that quickly went viral, Trudeau explained that with “normal computers...it’s 1 or a 0. They’re binary systems. What quantum states allow for is much more complex information to be encoded into a single bit.”

If Trudeau had had more time, he might have gone on to say that the main building block of a quantum computer is a “qubit,” which is a quantum object and thus can occupy any of an infinite number of states. Each of those states determines the probability of finding the qubit as a 0 or as a 1, the only two values it can assume when it is measured. Anything with quantum properties, like an electron or photon, can serve as a qubit, as long as the computer can isolate and control it.
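One common way to write this down, sketched here under textbook conventions rather than anything specific to a particular machine, is to describe a qubit by two complex numbers called amplitudes. The squared magnitudes of the amplitudes give the odds of reading a 0 or a 1. The names `qubit` and `measurement_probabilities`, and the two angles used to pick a state, are illustrative choices.

```python
import cmath
import math
from typing import Tuple


def qubit(theta: float, phi: float = 0.0) -> Tuple[complex, complex]:
    """Return amplitudes (alpha, beta) for the state chosen by two angles.

    Any real theta and phi give a valid state, which is the sense in
    which a qubit has infinitely many possible states.
    """
    alpha = complex(math.cos(theta / 2))
    beta = cmath.exp(1j * phi) * math.sin(theta / 2)
    return alpha, beta


def measurement_probabilities(state: Tuple[complex, complex]) -> Tuple[float, float]:
    """Probabilities of reading 0 and 1 when the qubit is measured."""
    alpha, beta = state
    return abs(alpha) ** 2, abs(beta) ** 2


# A few of the infinitely many states, from "certainly 0" to "certainly 1".
for theta in (0.0, math.pi / 3, math.pi / 2, math.pi):
    p0, p1 = measurement_probabilities(qubit(theta))
    print(f"theta={theta:.3f}: P(0)={p0:.3f}, P(1)={p1:.3f}")
```

However the angles are chosen, the two probabilities add up to 1, and a measurement still yields only a single 0 or 1.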

Once fashioned inside a computer, each qubit is attached to some mechanism capable of transmitting electromagnetic energy to it. To run a particular program, the computer zaps the qubit with a carefully scripted sequence of, say, microwave transmissions, each at a certain frequency and for a certain duration. Those pulses amount to the “instructions” of the quantum program. Each instruction causes the unmeasured state of the qubit to evolve in a specific way.

These pulsing operations are done not just on one qubit but on all the qubits in the system, often with each qubit or group of qubits receiving a different pulsed “instruction.” The qubits in a quantum computer interact through a process known as entanglement, which, in a manner of speaking, links their fates. The important point here is that quantum researchers have figured out how to use these successive changes to the state of the qubits in the computer to perform useful computations.
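A toy simulation can make the idea of successive state changes concrete. The sketch below tracks the amplitudes of two idealized, noise-free qubits and applies two standard textbook operations, the Hadamard and controlled-NOT gates. Neither is named in this article; they stand in for the pulse sequences a real machine would use, and the function names are hypothetical.

```python
import math


def apply_hadamard(state, target, n_qubits):
    """Put qubit `target` (0 = rightmost bit) into an equal mix of 0 and 1."""
    s = 1 / math.sqrt(2)
    new = list(state)
    bit = 1 << target
    for i in range(2 ** n_qubits):
        if i & bit == 0:
            a, b = state[i], state[i | bit]
            new[i] = s * (a + b)
            new[i | bit] = s * (a - b)
    return new


def apply_cnot(state, control, target, n_qubits):
    """Flip qubit `target` wherever qubit `control` is 1."""
    new = list(state)
    cbit, tbit = 1 << control, 1 << target
    for i in range(2 ** n_qubits):
        if i & cbit and not i & tbit:
            new[i], new[i | tbit] = state[i | tbit], state[i]
    return new


# Amplitudes are indexed by the bit strings 00, 01, 10, 11.
state = [1, 0, 0, 0]                      # both qubits start as 0
state = apply_hadamard(state, 0, 2)       # first "instruction"
state = apply_cnot(state, 0, 1, 2)        # second "instruction" entangles them

for index, amplitude in enumerate(state):
    print(format(index, "02b"), round(abs(amplitude) ** 2, 3))
```

After the two steps, only the outcomes 00 and 11 have nonzero probability, each with probability one-half. Neither qubit has a definite value on its own, yet the two will always agree when measured, which is one way to picture their fates being linked.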

A Peek Into Five Quantum Computers

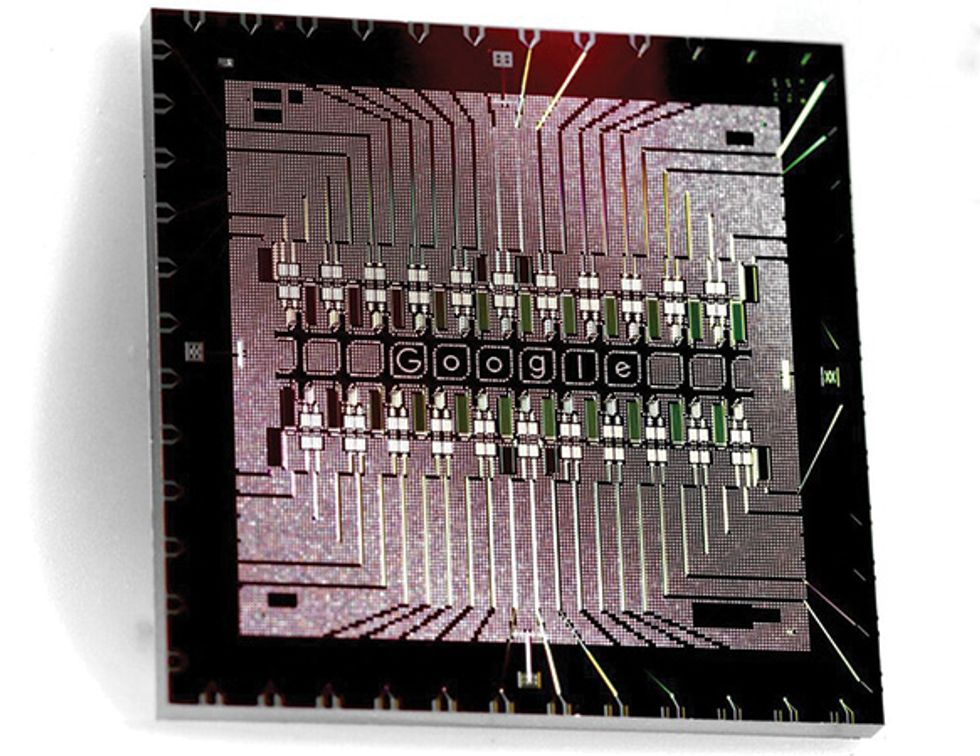

Google: Google is using superconducting quantum processors such as this design, with its 22 qubit elements arranged in two rows. Photo: Google

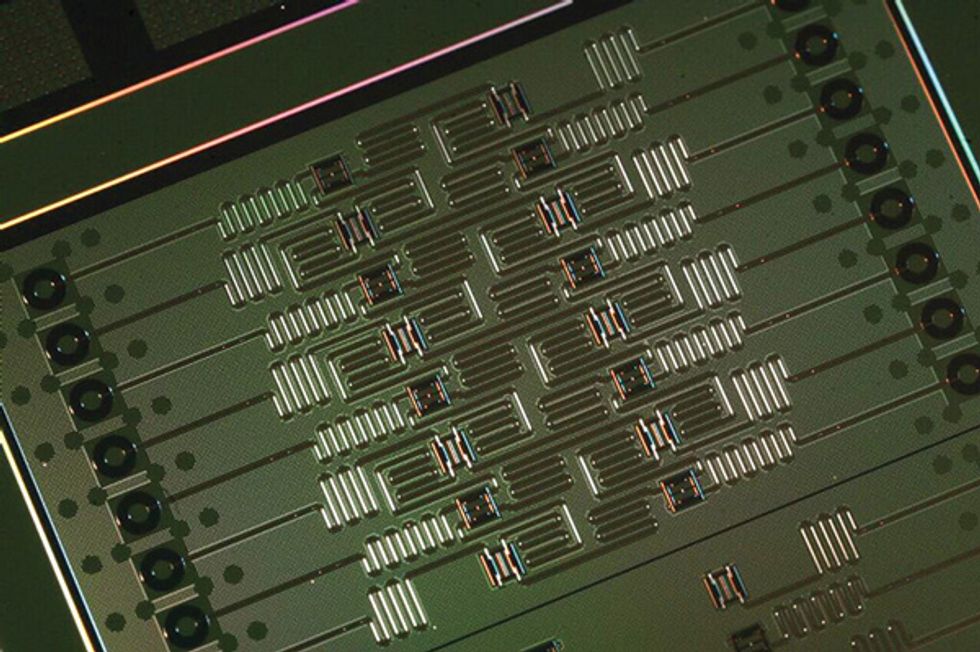

IBM: This 16-qubit superconducting processor powers IBM’s publicly available platform for exploring quantum computing. Photo: IBM

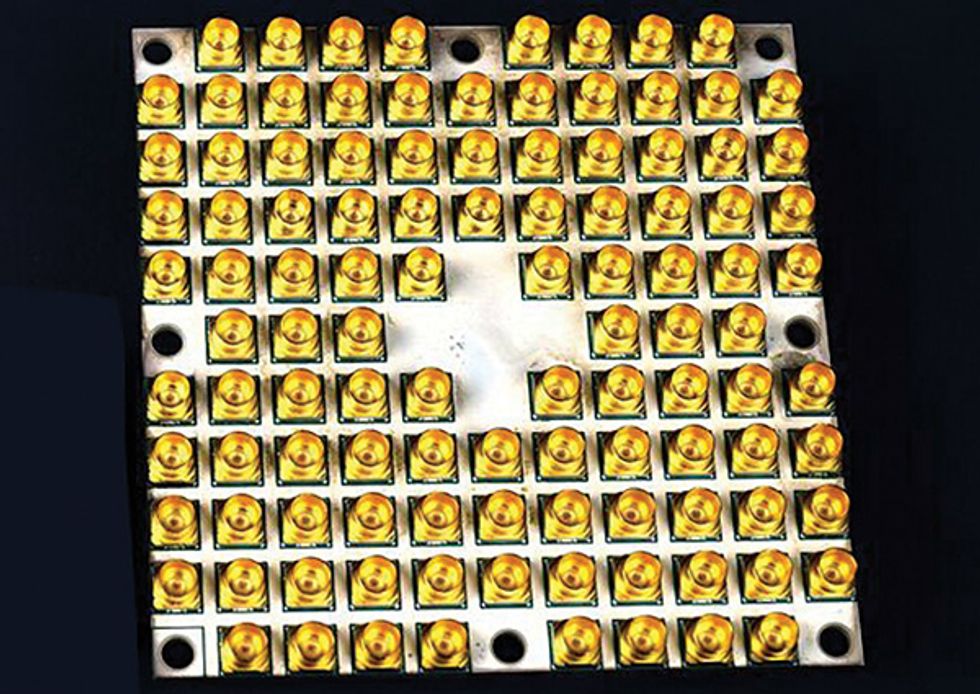

Intel: This past January, Intel announced the fabrication of a 49-qubit superconducting quantum-computing chip, dubbed Tangle Lake. Photo: Intel

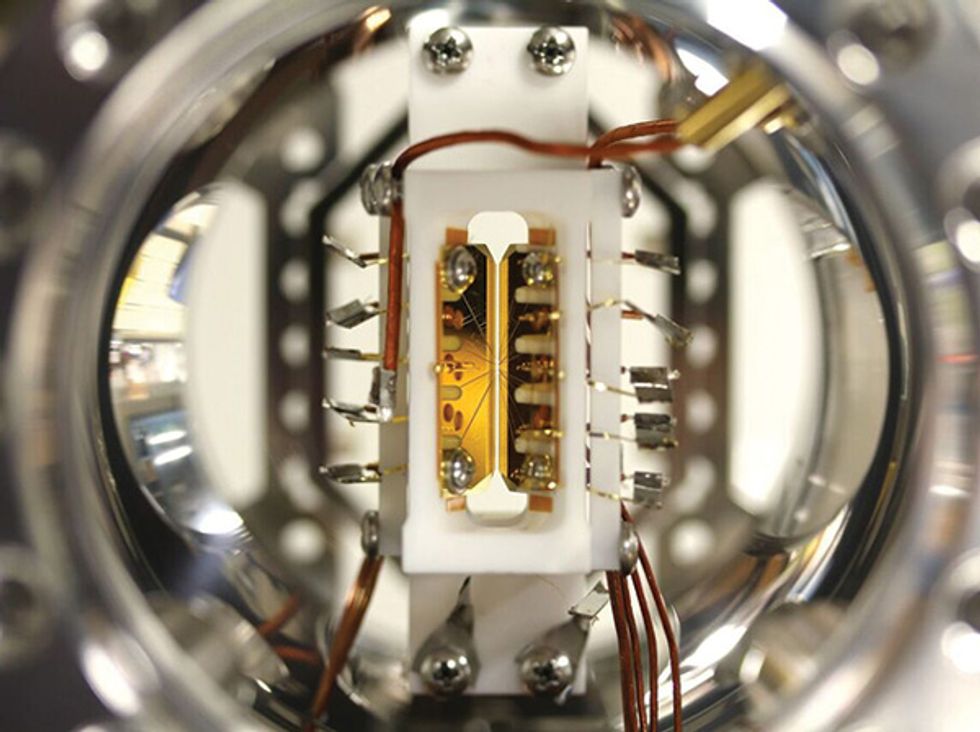

IonQ: In 2016, IonQ demonstrated a working 5-qubit computer using lasers to manipulate ytterbium ions trapped in this device. Photo: Shantanu Debnath

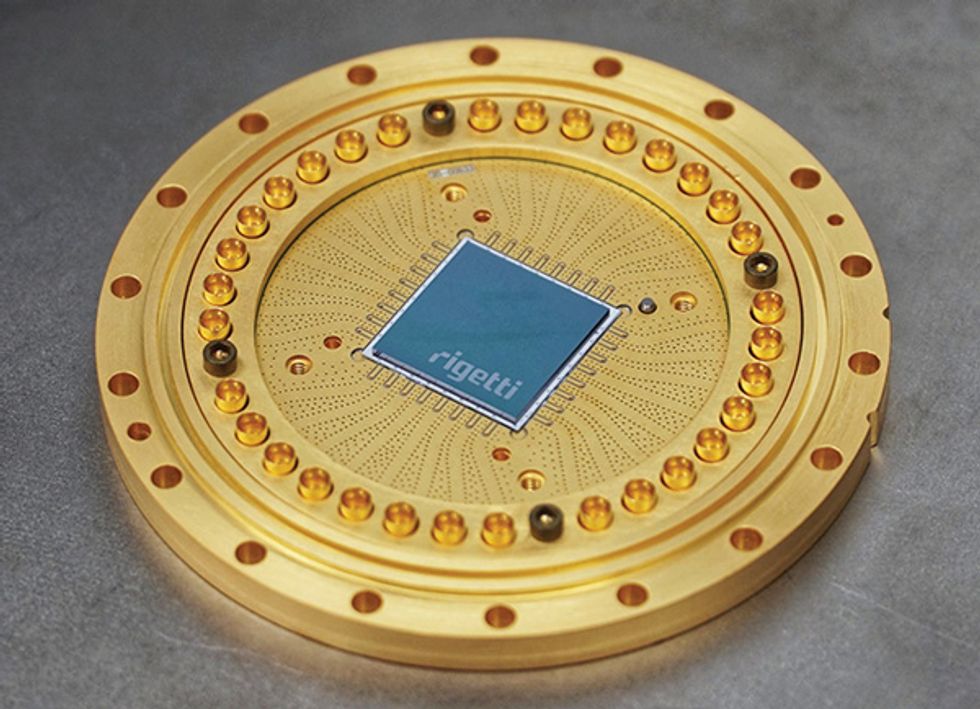

Rigetti: Rigetti, a Berkeley, Calif., startup, has recently begun fabricating 19-qubit superconducting processor chips. Photo: Rigetti

Once the program is finished—thousands or even millions of pulses later—the qubits are measured to reveal the final result of the computation. Doing so causes each qubit to become either a 0 or a 1, the famous wave function collapse of quantum mechanics.
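The measurement step can be imitated with a weighted random draw. The sketch below assumes an idealized pair of qubits whose description gives equal weight to the outcomes 00 and 11 and none to 01 or 10. The amplitudes, the function name `measure_all`, and the fixed random seed are illustrative assumptions.

```python
import math
import random
from collections import Counter


def measure_all(state, n_qubits, rng):
    """Sample one measurement outcome and return it as a bit string."""
    probabilities = [abs(amplitude) ** 2 for amplitude in state]
    index = rng.choices(range(len(state)), weights=probabilities, k=1)[0]
    return format(index, f"0{n_qubits}b")


s = 1 / math.sqrt(2)
entangled_pair = [s, 0, 0, s]   # amplitudes for outcomes 00, 01, 10, 11

# One run yields a single definite answer; repeating the whole program
# many times reveals the underlying probabilities.
rng = random.Random(2018)
counts = Counter(measure_all(entangled_pair, 2, rng) for _ in range(1000))
print(counts)
```

Each run produces one definite bit string, so a quantum program is typically repeated many times and the tallies are used to estimate the answer.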

This would be a straightforward piece of engineering were it not for the fact that qubits must be kept isolated from even the most minute amount of outside interference, at least for as long as it takes for the computation to be completed. The difficulty of doing so is the main reason why, until a few years ago, the biggest quantum machines had only one or two dozen qubits and were capable of only the simplest arithmetic.

Because of all the noise that surrounds them, qubits tend to be error prone. To deal with this problem, quantum computers need to have extra qubits standing by as backups. If one qubit goes off-kilter, the system consults with the backups to restore the errant qubit to its proper state.
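The flavor of this backup scheme can be seen in its classical cousin, the repetition code, sketched below with hypothetical function names. It is only an analogy: quantum states cannot simply be copied, so real quantum error correction spreads information across qubits and checks them indirectly rather than storing literal duplicates.

```python
def encode(bit: int, copies: int = 3) -> list:
    """Store one bit as several identical copies."""
    return [bit] * copies


def flip(codeword: list, position: int) -> list:
    """Simulate noise by flipping the bit at one position."""
    damaged = list(codeword)
    damaged[position] ^= 1
    return damaged


def decode(codeword: list) -> int:
    """Recover the stored bit by majority vote."""
    return int(sum(codeword) * 2 > len(codeword))


stored = encode(1)
noisy = flip(stored, 0)
print(noisy, "->", decode(noisy))
```

With three copies, any single flipped bit is outvoted by the other two, but two simultaneous flips would fool the vote. Tolerating more errors requires more copies, which hints at why the overhead grows so quickly.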

Such error correction occurs in regular computers too. But the number of required backups is much greater in quantum systems. Engineers estimate that for a reliable quantum computer, every qubit used might need 1,000 or more backups. Because many advanced algorithms require thousands of qubits to begin with, the total number of qubits necessary for a useful quantum machine, including those involved with error correction, could easily run into the millions.
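The arithmetic behind that estimate is simple enough to check directly. The sketch below takes the 1,000-backups figure from the engineers’ estimate above. The algorithm sizes fed into it are illustrative, and `total_qubits` is a hypothetical name.

```python
def total_qubits(algorithm_qubits: int, backups_per_qubit: int = 1000) -> int:
    """Qubits needed once every working qubit gets its share of backups."""
    return algorithm_qubits * (1 + backups_per_qubit)


for needed in (1_000, 5_000):
    print(f"{needed:,} working qubits -> {total_qubits(needed):,} in total")
```

An algorithm that needs a few thousand working qubits therefore calls for a machine with several million qubits in all.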

Compare that with Google’s recently announced quantum-computing chip, which contains only 72 qubits. Just how valuable those qubits will prove to be for computation will depend on how error prone they are.

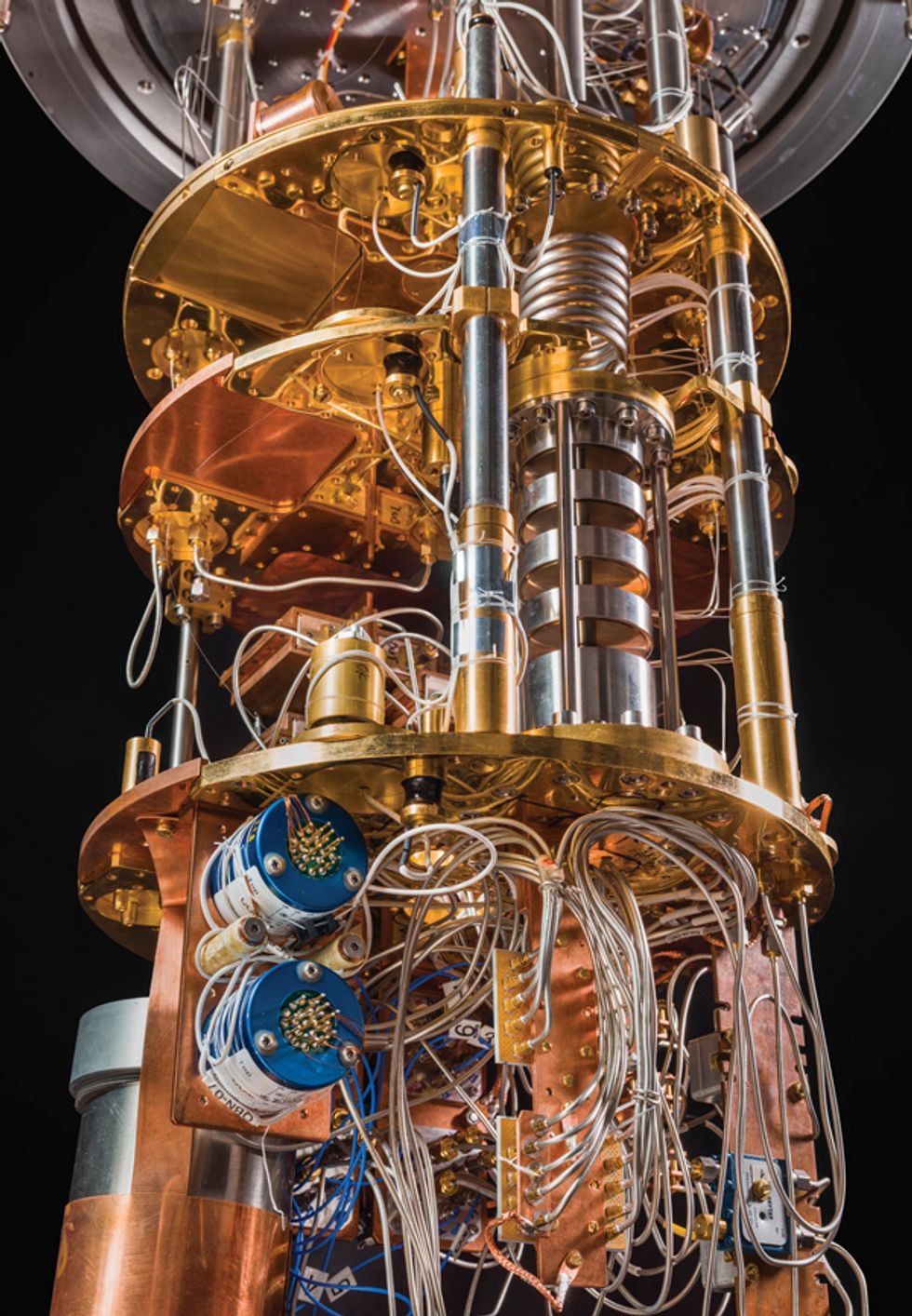

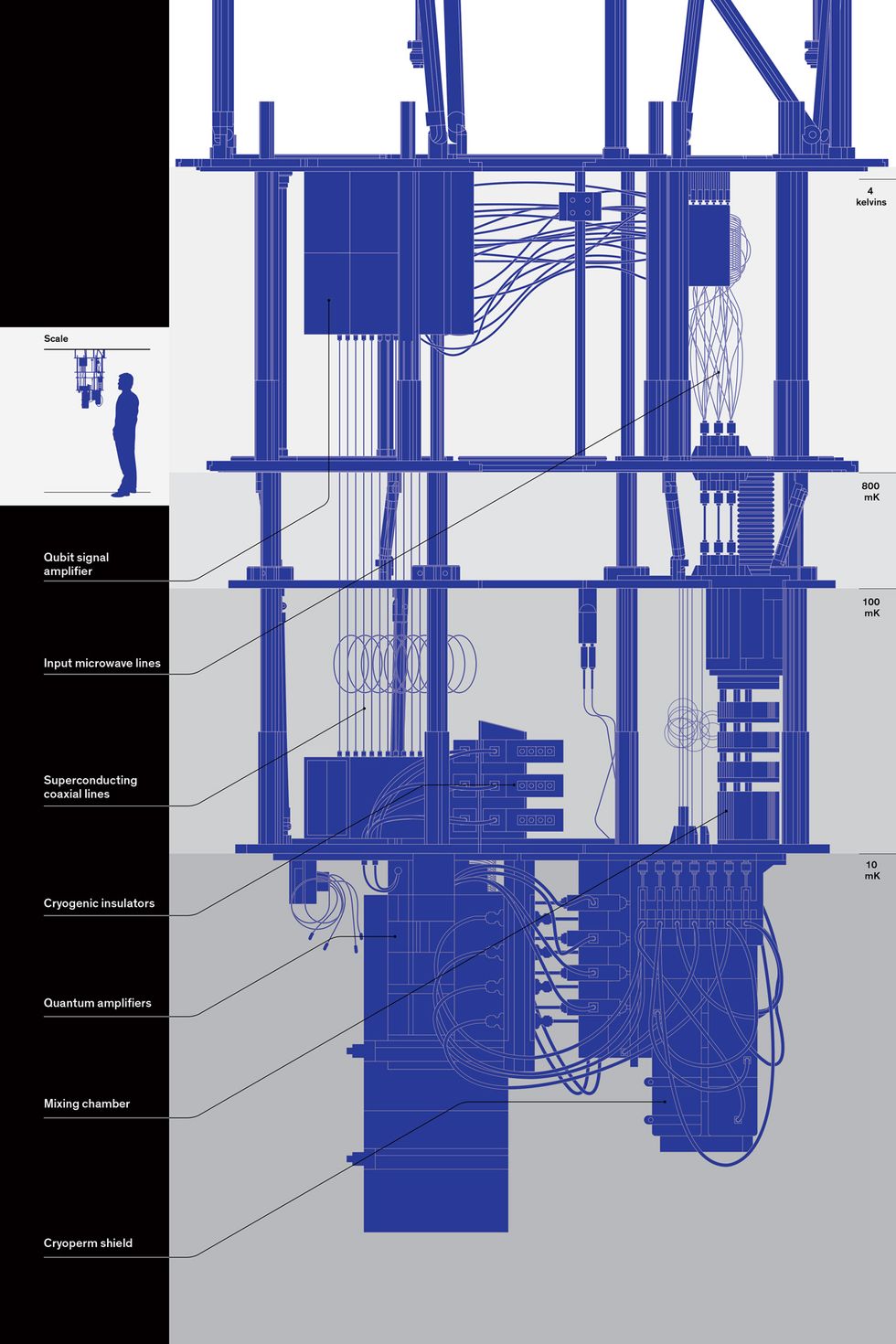

Work on Google’s quantum computer is spearheaded by a team hired en masse in 2014 from the University of California, Santa Barbara. And this past November, IBM announced that it had constructed a 50-qubit quantum computer. The two companies, along with Rigetti Computing, a startup in Berkeley, Calif., and Intel, which recently announced a 49-qubit array, rely on chips specially designed to have quantum properties by virtue of the superconducting circuit loops they contain. These chips must be kept at very low temperatures, necessitating elaborate cooling mechanisms that look like Hollywood sci-fi props and make for closet-size systems.

There is an entirely different quantum hardware architecture in which an actual quantum particle, an ion, is suspended in a system that runs at room temperature. IonQ, a startup in College Park, Md., cofounded by Duke University physicist Jungsang Kim and Christopher Monroe of the University of Maryland, is working to build a machine based on this approach, using ytterbium ions.

Microsoft is pursuing a third strategy, known as topological quantum computing. It holds theoretical promise, but no working hardware has yet been built.

None of those systems bears much resemblance to the quantum-related computer platform that has received the most publicity in recent years, from Canada’s D-Wave Systems. While D-Wave’s machines have been installed at such high-profile companies as Google and Volkswagen, a significant portion of the quantum research community views these devices with skepticism. Those scientists doubt that the D-Wave system will ever be able to do anything a traditional computer can’t, and indeed, they question whether it achieves any quantum speedup at all.

The Google-IBM-Rigetti superconducting strategy appears to be leading the hardware horse race, but it’s not yet clear which form of hardware will ultimately prove the most advantageous, or whether all three approaches might end up coexisting. For their part, quantum programmers say they don’t care which design wins, as long as they get their qubits to play with.

One of the many unknowns about quantum computing is how rapidly the machines will be able to offer additional qubits. With traditional computer technology, Moore’s Law long guaranteed a doubling of transistor counts every two years or so. But because of the complex electronics associated with quantum machines, no such predictions are yet possible. Many engineers anticipate that for the intermediate future, we’ll be limited to machines with a relatively small number of qubits, perhaps in the few hundreds. Because bare-bones demonstrations of quantum supremacy probably can’t provide any useful results, and because mature systems are still many years away, engineers are focusing on algorithms that will work with the modestly sized quantum systems expected to be available in the near future.

The emerging consensus: While surprises are always possible, expect progress to be gradual.

“I don’t think anyone who claims that quantum computers will soon be able to solve real-world problems, or that you will be able to make any money with them, is being entirely honest,” says Wim van Dam, a physicist at UC Santa Barbara. “You’re going to need much bigger systems for those to happen. But that doesn’t mean that the field isn’t incredibly exciting right now.”

In the two decades since MIT’s Shor developed his factoring algorithm, quantum computing has been closely linked with cryptography. But concern about broken Internet encryption has abated in recent years, partly because the quantum community realized that a Shor-worthy machine is still a long way off and partly because of the rise of “postquantum cryptography” designed to be impervious to any form of quantum attack. Even now, NIST is evaluating various candidates for a postquantum cryptographic infrastructure.

Instead of being preoccupied with encryption, researchers these days tend to focus on using the machines to model atoms and molecules, in the spirit of Feynman’s original insight about quantum computing. Algorithms that simulate physics and chemistry are the most numerous in NIST’s Quantum Algorithm Zoo, and the payoffs could be substantial, researchers say. Imagine, for example, metals that are superconductive at close to room temperature.

Here, too, irrational exuberance should be avoided. Andrew Childs, a physicist and computer scientist at the University of Maryland, predicts that the first generation of quantum computers will be able to tackle only relatively simple physics and chemistry problems. “You can answer questions that people in the condensed-matter physics community would like to have the answer to with a reasonably small number of qubits,” he says. “But understanding high-temperature superconductivity, for example, is going to require many more.”

While researchers warn against excessive optimism about fresh-out-of-the-box quantum computers, they also don’t rule out the prospect of breakthroughs that will allow the machines to do much more with less. The more practice programmers get, the better their algorithms are likely to be, which is why IBM currently has its quantum machines online for researchers to tinker with.

“I could write down on this whiteboard the names of every single quantum algorithm researcher on the planet. And that’s a problem,” declares Chad Rigetti, of the eponymous Berkeley quantum-computing company. “We need more advances in algorithms, and having machines available for tens of thousands of students to learn on will help to catalyze the field.”

For their part, those students seem to relish being present at the dawn of a new era, with all its potential for surprising discoveries. Daniel Freeman, a physics graduate student at the University of California, Berkeley, says the fact that quantum machines are still in their very early days is a feature of the research field, not a bug.

“We’re essentially at the point that classical computing was at 100 years ago,” he says. “We’re not even at vacuum tubes yet. But I think that’s actually very cool.”

This article appears in the April 2018 print issue as “Quantum Computing: Both Here and Not Here.”

A correction to this article was made on 26 March 2018. The caption to the first image was changed to indicate that the computer’s maker is IBM.
