Sure, artificial intelligence is transforming the world’s societies and economies—but can an AI come up with plausible ideas for a Halloween costume?

Janelle Shane has been asking such probing questions since she started her AI Weirdness blog in 2016. She specializes in training neural networks (which underpin most of today’s machine learning techniques) on quirky data sets such as compilations of knitting instructions, ice cream flavors, and names of paint colors. Then she asks the neural net to generate its own contributions to these categories—and hilarity ensues. AI is not likely to disrupt the paint industry with names like “Ronching Blue,” “Dorkwood,” and “Turdly.”

Shane’s antics have a serious purpose. She aims to illustrate the serious limitations of today’s AI, and to counteract the prevailing narrative that describes AI as well on its way to superintelligence and complete human domination. “The danger of AI is not that it’s too smart,” Shane writes in her new book, “but that it’s not smart enough.”

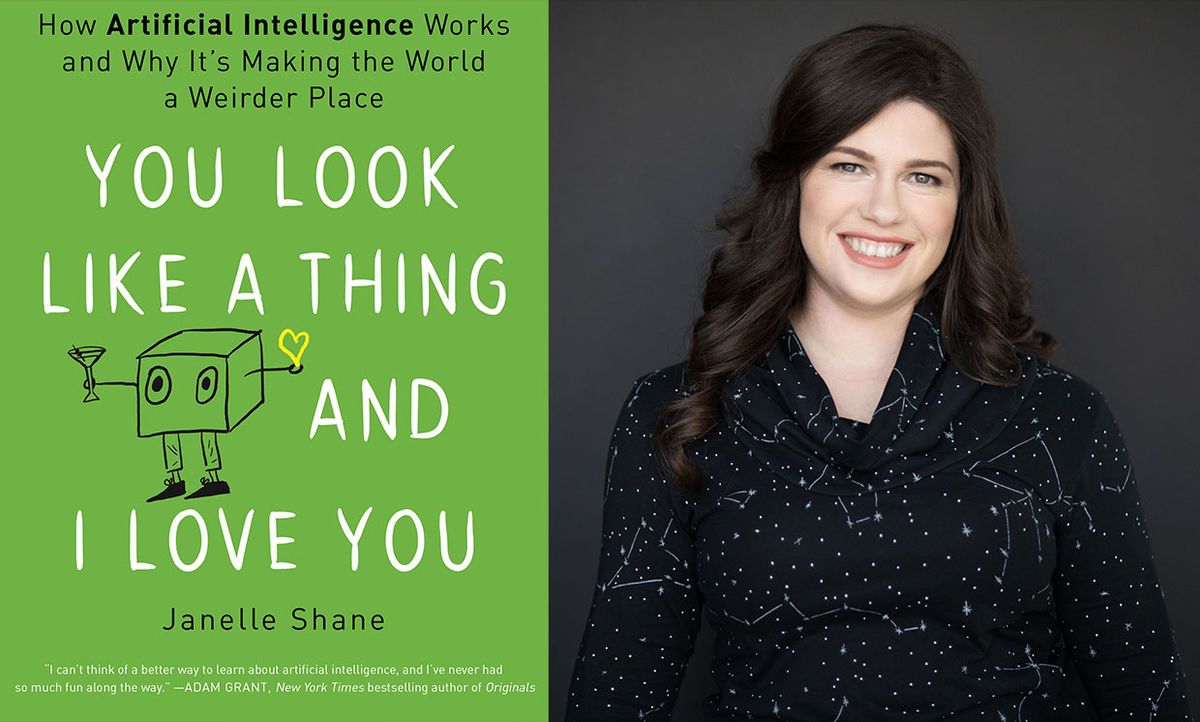

The book, which came out on Tuesday, is called You Look Like a Thing and I Love You. It takes its odd title from a list of AI-generated pick-up lines, all of which would at least get a person’s attention if shouted, preferably by a robot, in a crowded bar. Shane’s book is shot through with her trademark absurdist humor, but it also contains real explanations of machine learning concepts and techniques. It’s a painless way to take AI 101.

She spoke with IEEE Spectrum about the perils of placing too much trust in AI systems, the strange AI phenomenon of “giraffing,” and her next potential Halloween costume.

- The un-delicious origin of her blog

- “The narrower the problem, the smarter the AI will seem”

- Why overestimating AI is dangerous

- Giraffing!

- Machine and human creativity

The un-delicious origin of her blog

IEEE Spectrum: You studied electrical engineering as an undergrad, then got a master’s degree in physics. How did that lead to you becoming the comedian of AI?

Janelle Shane: I’ve been interested in machine learning since freshman year of college. During orientation at Michigan State, a professor who worked on evolutionary algorithms gave a talk about his work. It was full of the most interesting anecdotes, some of which I’ve used in my book. He told an anecdote about people setting up a machine learning algorithm to do lens design, and the algorithm did end up designing an optical system that works… except one of the lenses was 50 feet thick, because they didn’t specify that it couldn’t do that. I started working in his lab on optics, doing ultra-short laser pulse work. I ended up doing a lot more optics than machine learning, but I always found it interesting. One day I came across a list of recipes that someone had generated using a neural net, and I thought it was hilarious and remembered why I thought machine learning was so cool. That was in 2016, ages ago in machine learning land.

Spectrum: So you decided to “establish weirdness as your goal” for your blog. What was the first weird experiment that you blogged about?

Shane: It was generating cookbook recipes. The neural net came up with ingredients like: “Take ¼ pounds of bones or fresh bread.” That recipe started out: “Brown the salmon in oil, add creamed meat to the mixture.” It was making mistakes that showed the thing had no memory at all.

Spectrum: You say in the book that you can learn a lot about AI by giving it a task and watching it flail. What do you learn?

Shane: One thing you learn is how much it relies on surface appearances rather than deep understanding. With the recipes, for example: It got the structure of title, category, ingredients, instructions, yield at the end. But when you look more closely, it has instructions like “Fold the water and roll it into cubes.” So clearly this thing does not understand water, let alone the other things. It’s recognizing certain phrases that tend to occur, but it doesn’t have a concept that these recipes are describing something real. You start to realize how very narrow the algorithms in this world are. They only know exactly what we tell them in our data set.

“The narrower the problem, the smarter the AI will seem”

Spectrum: That makes me think of DeepMind’s AlphaGo, which was universally hailed as a triumph for AI. It can play the game of Go better than any human, but it doesn’t know what Go is. It doesn’t know that it’s playing a game.

Shane: It doesn’t know what a human is, or if it’s playing against a human or another program. That’s also a nice illustration of how well these algorithms do when they have a really narrow and well-defined problem. The narrower the problem, the smarter the AI will seem. If it’s not just doing something repeatedly but instead has to understand something, coherence goes down. For example, take an algorithm that can generate images of objects. If the algorithm is restricted to birds, it could do a recognizable bird. If this same algorithm is asked to generate images of any animal, if its task is that broad, the bird it generates becomes an unrecognizable brown feathered smear against a green background.

Spectrum: That sounds… disturbing.

Shane: It’s disturbing in a weird amusing way. What’s really disturbing is the humans it generates. It hasn’t seen them enough times to have a good representation, so you end up with an amorphous, usually pale-faced thing with way too many orifices. If you ask it to generate an image of a person eating pizza, you’ll have blocks of pizza texture floating around. But if you give that image to an image-recognition algorithm that was trained on that same data set, it will say, “Oh yes, that’s a person eating pizza.”

Why overestimating AI is dangerous

Spectrum: Do you see it as your role to puncture the AI hype?

Shane: I do see it that way. Not a lot of people are bringing out this side of AI. When I first started posting my results, I’d get people saying, “I don’t understand, this is AI, shouldn’t it be better than this? Why doesn’t it understand?” Many of the impressive examples of AI have a really narrow task, or they’ve been set up to hide how little understanding it has. There’s a motivation, especially among people selling products based on AI, to represent the AI as more competent and understanding than it actually is.

Spectrum: If people overestimate the abilities of AI, what risk does that pose?

Shane: I worry when I see people trusting AI with decisions it can’t handle, like hiring decisions or decisions about moderating content. These are really tough tasks for AI to do well on. There are going to be a lot of glitches. I see people saying, “The computer decided this so it must be unbiased, it must be objective.”

That’s another thing I find myself highlighting in the work I’m doing. If the data includes bias, the algorithm will copy that bias. You can’t tell it not to be biased, because it doesn’t understand what bias is. I think that message is an important one for people to understand. If there’s bias to be found, the algorithm is going to go after it. It’s like, “Thank goodness, finally a signal that’s reliable.” Take a tough problem like looking at resumes and deciding who’s best for the job: If its task is to replicate human hiring decisions, it’s going to glom onto gender bias and race bias. There’s an example in the book of a hiring algorithm that Amazon was developing that discriminated against women, because the historical data it was trained on had that gender bias.
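The point about copied bias can be illustrated with a toy sketch. Everything below is hypothetical and invented for illustration: the `historical_decision` rule, the groups "A" and "B", and the skill scores are assumptions, not data or methods from the book or from any real hiring system. The "model" is just a lookup table of past hire rates, which is enough to show that a learner asked to replicate past decisions reproduces whatever bias those decisions contained.

```python
from collections import defaultdict

def historical_decision(skill, group):
    """Hypothetical biased past decisions: group B needed a higher
    skill score than group A to be hired."""
    threshold = 5 if group == "A" else 8
    return skill >= threshold

# Synthetic training set: one past decision per (skill, group) pair.
history = [
    (skill, group, historical_decision(skill, group))
    for skill in range(1, 11)
    for group in ("A", "B")
]

def train(records):
    """Learn the past hire rate for each (skill, group) by counting."""
    counts = defaultdict(lambda: [0, 0])  # [hired, total]
    for skill, group, hired in records:
        counts[(skill, group)][0] += int(hired)
        counts[(skill, group)][1] += 1
    return {key: hired / total for key, (hired, total) in counts.items()}

def predict(model, skill, group):
    """Recommend hiring when most similar past candidates were hired."""
    return model[(skill, group)] >= 0.5

model = train(history)

# Two candidates with identical skill get different recommendations,
# because the model has faithfully copied the bias in its training data.
print(predict(model, 6, "A"))
print(predict(model, 6, "B"))
```

Nothing in the training step mentions bias, and nothing could: the model only sees that group membership predicts the past outcome, so it uses it.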

Spectrum: What are the other downsides of using AI systems that don’t really understand their tasks?

Shane: There is a risk in putting too much trust in AI and not examining its decisions. Another issue is that it can solve the wrong problems, without anyone realizing it. There have been a couple of cases in medicine. For example, there was an algorithm that was trained to recognize things like skin cancer. But instead of recognizing the actual skin condition, it latched onto signals like the markings a surgeon makes on the skin, or a ruler placed there for scale. It was treating those things as a sign of skin cancer. It’s another indication that these algorithms don’t understand what they’re looking at and what the goal really is.

Giraffing!

Spectrum: In your blog, you often have neural nets generate names for things—such as ice cream flavors, paint colors, cats, mushrooms, and types of apples. How do you decide on topics?

Shane: Quite often it’s because someone has written in with an idea or a data set. They’ll say something like, “I’m the MIT librarian and I have a whole list of MIT thesis titles.” That one was delightful. Or they’ll say, “We are a high school robotics team, and we know where there’s a list of robotics team names.” It’s fun to peek into a different world. I have to be careful that I’m not making fun of the naming conventions in the field. But there’s a lot of humor simply in the neural net’s complete failure to understand. Puns in particular—it really struggles with puns.

Spectrum: Your blog is quite absurd, but it strikes me that machine learning is often absurd in itself. Can you explain the concept of giraffing?

Shane: This concept was originally introduced by [internet security expert] Melissa Elliott. She proposed this phrase as a way to describe the algorithms’ tendency to see giraffes way more often than would be likely in the real world. She posted a whole bunch of examples, like a photo of an empty field in which an image-recognition algorithm has confidently reported that there are giraffes. Why does it think giraffes are present so often when they’re actually really rare? Because the algorithms are trained on data sets collected online. People tend to say, “Hey look, a giraffe!” And then take a photo and share it. They don’t do that so often when they see an empty field with rocks. There’s also a chatbot that has a delightful quirk. If you show it some photo and ask it how many giraffes are in the picture, it will always answer with some nonzero number. This quirk comes from the way the training data was generated: These were questions asked and answered by humans online. People tended not to ask the question “How many giraffes are there?” when the answer was zero. So you can show it a picture of someone holding a Wii remote. If you ask it how many giraffes are in the picture, it will say two.
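The giraffe-counting quirk can be sketched in a few lines. This is a deliberately crude illustration, not how the chatbot Shane describes actually works: the `training_pairs` list is made up, and the "model" simply memorizes the most common answer to the question and ignores the photo entirely. The one property it shares with the real case is the one that matters here: zero never appears as an answer in the training data, so zero can never come out.

```python
from collections import Counter

QUESTION = "How many giraffes are there?"

# Hypothetical crowd-sourced pairs: (photo description, question, answer).
# People only asked the question when giraffes were present,
# so the answer 0 never occurs.
training_pairs = [
    ("giraffes at a zoo", QUESTION, 2),
    ("giraffe on a savanna", QUESTION, 1),
    ("two giraffes drinking", QUESTION, 2),
    ("giraffe herd", QUESTION, 4),
    ("two giraffes by a tree", QUESTION, 2),
]

def train(pairs):
    """Memorize the most frequent answer seen in training."""
    answers = Counter(answer for _, _, answer in pairs)
    return answers.most_common(1)[0][0]

def answer_question(learned_answer, photo_description):
    """Toy model: returns the memorized answer regardless of the photo."""
    return learned_answer

learned_answer = train(training_pairs)

# A photo with no giraffes still gets a confident nonzero count.
print(answer_question(learned_answer, "person holding a Wii remote"))
```

A real system does look at the image, but if the training answers never include zero, the same gap in the data pushes it toward the same mistake.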

Machine and human creativity

Spectrum: AI can be absurd, and maybe also creative. But you make the point that AI art projects are really human-AI collaborations: Collecting the data set, training the algorithm, and curating the output are all artistic acts on the part of the human. Do you see your work as a human-AI art project?

Shane: Yes, I think there is artistic intent in my work; you could call it literary or visual. It’s not so interesting to just take a pre-trained algorithm that’s been trained on utilitarian data, and tell it to generate a bunch of stuff. Even if the algorithm isn’t one that I’ve trained myself, I think about, what is it doing that’s interesting, what kind of story can I tell around it, and what do I want to show people.

Spectrum: For the past three years you’ve been getting neural nets to generate ideas for Halloween costumes. As language models have gotten dramatically better over that time, are the costume suggestions getting less absurd?

Shane: Yes. Before I would get a lot more nonsense words. This time I got phrases that were related to real things in the data set. I don’t believe the training data had the words Flying Dutchman or barnacle. But it was able to draw on its knowledge of which words are related to suggest things like sexy barnacle and sexy Flying Dutchman.

Spectrum: This year, I saw on Twitter that someone made the gothy giraffe costume happen. Would you ever dress up for Halloween in a costume that the neural net suggested?

Shane: I think that would be fun. But there would be some challenges. I would love to go as the sexy Flying Dutchman. But my ambition may constrict me to do something more like a list of leg parts.

Eliza Strickland is a senior editor at IEEE Spectrum, where she covers AI, biomedical engineering, and other topics. She holds a master’s degree in journalism from Columbia University.