Old-fashioned telecommunication carriers are falling behind in the global bandwidth race as giants of content and cloud computing build their own worldwide networks. Facebook has commissioned electronics and IT giant NEC Corporation to build the world's highest-capacity submarine cable. When finished, it will carry a staggering 500 terabits per second (some 4000 Blu-Ray discs of data every second) between North America and Europe on the world's busiest data highway.

For decades, transoceanic cables were laid by consortia of telecommunication carriers like AT&T and British Telecom. As cloud computing and data centers spread around the world, Google, Amazon, Facebook and Microsoft started joining cable consortia, and in the past few years Google began building its own cables. The new cable will give Facebook sole ownership of the world's biggest data pipeline.

Transoceanic fiber-optic cables are the backbones of the global telecommunications network, and their change in ownership reflects the rapid growth of data centers for cloud computing and content distribution. Google has 23 giant data centers around the globe, each one constantly updated to mirror the Google cloud for users in their region. Three years ago, flows between data centers accounted for 77 percent of transatlantic traffic and 60 percent of transpacific traffic, Alan Mauldin, research director at TeleGeography, a market-research unit of California-based PriMetrica, said at the time. Traffic between data centers is thought to be growing faster than the per-person data consumption, which Facebook says increases 20 to 30 percent a year.

Vying for maximum bandwidth at the intersection of Moore's Law and Shannon's limit

Fiber-optic developers have worked relentlessly to keep up with the demand for bandwidth. For decades, the data capacity of a single fiber increased faster than the number of transistors squeezed onto a chip, the growth described by Moore's Law. But in recent years that growth has slowed as data rates approached Shannon's limit, the maximum rate at which a channel can carry data reliably in the presence of noise. In 2016 the maximum data rate per fiber pair (each fiber carrying a signal in one direction) was around 10 terabits per second, achieved by sending signals at 100 gigabits per second on 100 separate wavelengths through the same fiber.
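The 2016 figure is the per-wavelength rate multiplied by the number of wavelengths. A minimal Python sketch of that arithmetic follows; the function and variable names are hypothetical, chosen only for illustration.

```python
def fiber_capacity_tbps(rate_per_wavelength_gbps: float, wavelengths: int) -> float:
    """Aggregate one-direction capacity of a fiber, in terabits per second.

    Assumes every wavelength carries the same data rate, which is a
    simplification of how real systems are engineered.
    """
    return rate_per_wavelength_gbps * wavelengths / 1000.0  # Gb/s -> Tb/s


# Figures quoted in the article for 2016: 100 Gb/s on each of 100 wavelengths.
capacity_2016 = fiber_capacity_tbps(100, 100)
print(f"{capacity_2016} Tb/s per fiber")
```

With the article's figures this gives 10 terabits per second in each direction.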

Developing more sophisticated signal formats offered some improvement, but not enough to keep pace with the demand for bandwidth. The only way around Shannon's limit has been to open new paths for data delivery.
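Why better signal formats help only a little, while new paths help a lot, can be seen from the textbook Shannon capacity formula for an idealized linear channel, C = B log2(1 + SNR). The sketch below is illustrative only: the bandwidth and signal-to-noise values are assumptions picked for the example, not figures from any real cable, and real fibers add nonlinear effects that this formula ignores.

```python
import math


def shannon_capacity_bps(bandwidth_hz: float, snr_linear: float) -> float:
    """Shannon capacity of an idealized noisy channel, in bits per second."""
    return bandwidth_hz * math.log2(1.0 + snr_linear)


# Hypothetical values, assumed for illustration only.
bandwidth_hz = 4e12  # 4 THz of usable optical bandwidth
snr = 100.0          # linear signal-to-noise ratio (20 dB)

base = shannon_capacity_bps(bandwidth_hz, snr)
better_snr = shannon_capacity_bps(bandwidth_hz, 2 * snr)  # cleaner signal
wider_band = shannon_capacity_bps(2 * bandwidth_hz, snr)  # more wavelengths
two_paths = 2 * base                                      # a second fiber or core

print(f"doubling SNR:       x{better_snr / base:.2f}")
print(f"doubling bandwidth: x{wider_band / base:.2f}")
print(f"doubling paths:     x{two_paths / base:.2f}")
```

Under these assumptions, doubling the signal-to-noise ratio raises capacity by well under 20 percent, while doubling the bandwidth or the number of parallel paths doubles it.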

In 2018, Facebook and Google placed bets on broadening the transmission band of optical fibers by adding signals at a hundred new wavelengths to squeeze 24 terabits through a single fiber. Each bought one pair of fibers on the Pacific Light Cable stretching from Hong Kong to Los Angeles. The leader of the consortium, Pacific Light Data Communications, of Hong Kong, retained four other pairs in the six-pair cable. Although the cable was soon laid, the U.S. Federal Communications Commission has refused to license its connection to the U.S. network because of security concerns arising from its Chinese connections.

Meanwhile, Facebook and Google also placed bets on another approach: increasing the fiber count in cables. That had been limited to eight pairs by the need for the cable to supply electrical power to amplify optical signals repeatedly on their trip across the ocean. Developers believed improving power transmission and optical amplifiers would let a new generation of cables carry 16 to 25 fiber pairs. Mauldin tells Spectrum that Google's Dunant cable, which entered service in January, has 12 fiber pairs with a total capacity of 250 terabits per second. The Amitié consortium cable and Google's Grace Hopper cable, both now under construction in the Atlantic, each have 16 pairs with expected full capacities of 350 to 370 terabits per second.

Facebook's new transatlantic cable will have 24 fiber pairs, each carrying more than 20 terabits per second for an overall capacity of a record 500 terabits (0.5 petabits) per second. Exact capacities of the fibers in the new system differ because of engineering tradeoffs to maximize total capacity, says Mauldin.
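Dividing each cable's quoted total by its pair count shows how closely the per-pair figures cluster. The sketch below uses only the totals and pair counts quoted in this article; the names are hypothetical, and the result is an average, since the capacities of individual pairs differ.

```python
# (fiber pairs, total capacity in Tb/s), as quoted in the article.
cables = {
    "Dunant": (12, 250),
    "16-pair cables, low estimate": (16, 350),
    "16-pair cables, high estimate": (16, 370),
    "Facebook transatlantic": (24, 500),
}


def per_pair_tbps(pairs: int, total_tbps: float) -> float:
    """Average capacity per fiber pair, in terabits per second."""
    return total_tbps / pairs


for name, (pairs, total) in cables.items():
    print(f"{name}: {per_pair_tbps(pairs, total):.1f} Tb/s per pair on average")
```

Every cable lands between roughly 20 and 23 terabits per second per pair, so the record total comes mainly from the higher fiber count rather than from faster individual fibers.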

It's "a great project indeed," says Peter Winzer, a Bell Labs veteran and founder of Nubis Communications. The overall data rate "jibes with where cable capacities should be in two years" when the cable will be turned on, he adds.

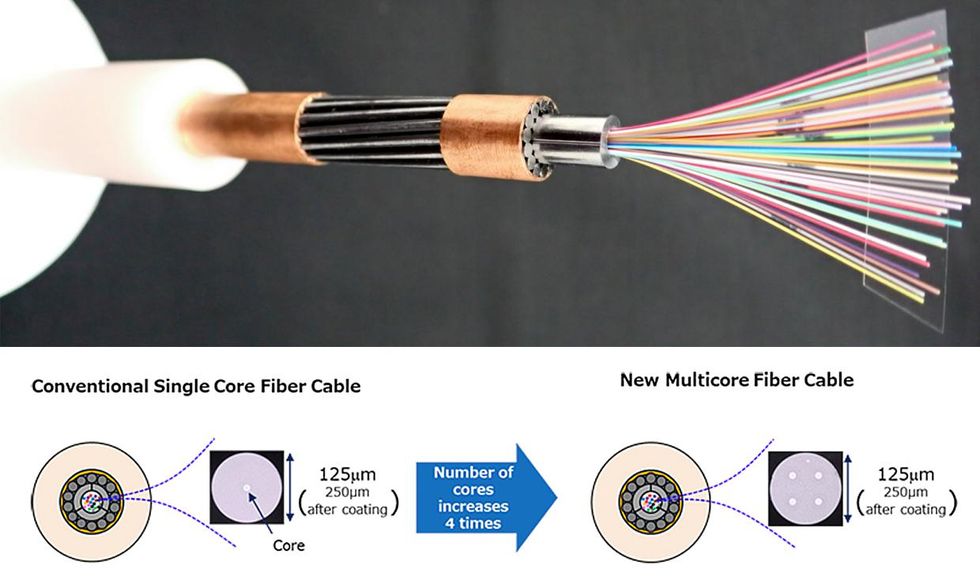

The next step is well along the development pipeline: fibers that can guide different light signals at the same wavelength along separate paths through the fiber. One approach is fabricating fibers with multiple parallel light-guiding cores running along their lengths. Another is fabricating cores larger than the usual nine micrometers that are designed to guide several separate light signals through their length without scrambling them together. The two techniques have been combined successfully in the lab, which could multiply the data rate.

Just a few days before announcing its cable deal with Facebook, NEC reported a successful field trial of a fiber containing four internal light-guiding cores in a submarine cable, meeting "the exacting demands of global telecommunications networks." NEC expects multicore fiber to multiply the number of light-guiding paths available through fibers without requiring major changes in cable size and structure. Those are important considerations because the cables must withstand the tremendous pressures at the bottom of the ocean. With luck, that might buy enough bandwidth to keep up with data center growth for several more years.

Jeff Hecht writes about lasers, optics, fiber optics, electronics, and communications. Trained in engineering and a life senior member of IEEE, he enjoys figuring out how laser, optical, and electronic systems work and explaining their applications and challenges. At the moment, he’s exploring the challenges of integrating lidars, cameras, and other sensing systems with artificial intelligence in self-driving cars. He has chronicled the histories of laser weapons and fiber-optic communications and written tutorial books on lasers and fiber optics.