Is Keck’s Law Coming to an End?

After decades of exponential growth, fiber-optic capacity may be facing a plateau

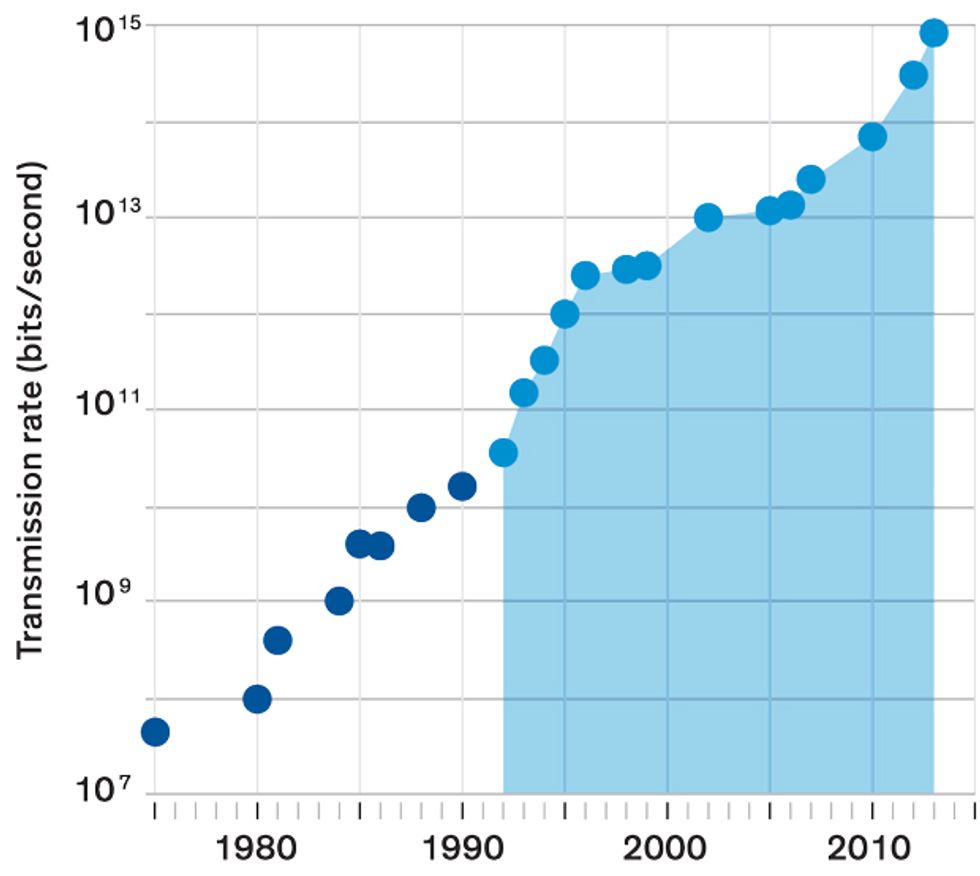

Since 1980, the number of bits per second that can be sent down an optical fiber has increased some 10 millionfold. That’s remarkable even by the standards of late-20th-century electronics. It’s more than the jump in the number of transistors on chips during that same period, as described by Moore’s Law. There ought to be a law here, too. Call it Keck’s Law, in honor of Donald Keck. He’s the coinventor of low-loss optical fiber and has tracked the impressive growth in its capacity. Maybe giving the trend a name of its own will focus attention on one of the world’s most unsung industrial achievements.

Moore’s Law may get all the attention. But it’s the combination of fast electronics and fiber-optic communications that has created “the magic of the network we have today,” according to Pradeep Sindhu, chief technical officer at Juniper Networks. The strongly interacting electron is ideal for speedy switches that can be used in logic and memory. The weakly interacting photon is perfect for carrying signals over long distances. Together they have fomented the technological revolution that continues to shape and define our times.

Now, as electronics faces enormous challenges to keep Moore’s Law alive, fiber optics is also struggling to sustain the momentum. For the past few decades, a series of new developments has allowed communications engineers to keep pushing more and more bits down fiber-optic networks. But the easy gains are behind them. To keep moving forward, they’ll need to conjure up some fairly spectacular innovations.

The Light Exponential

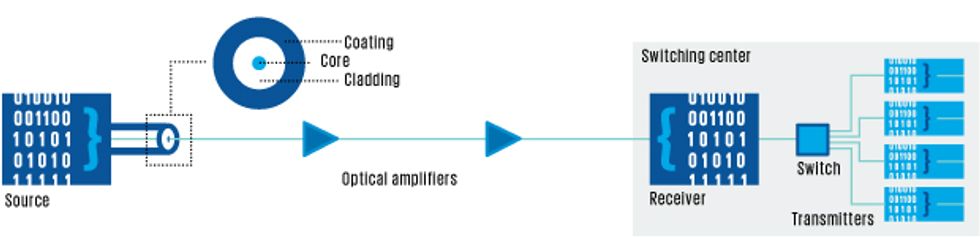

The heart of today’s fiber-optic connections is the core: a 9-micrometer-wide strand of glass that’s almost perfectly transparent to 1.55-µm infrared light. This core is surrounded by more than 50 µm of cladding made of glass with a lower refractive index. Laser signals sent through the core are trapped inside by the cladding and guided along by total internal reflection.

Those light pulses zip down the fiber at a rate of about 200,000 kilometers per second, two-thirds the speed of light in vacuum. The fiber is almost perfectly clear, but every now and then a photon will bounce off an atom inside the core. The longer the light travels, the more photons will scatter off atoms and leak into the surrounding layers of cladding and protective coating. After 50 km, about 90 percent of the light will be lost, mostly due to this scattering.
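The 90 percent figure can be turned into a per-kilometer loss. The following is a minimal sketch, assuming the loss is uniform along the fiber and expressed in the usual decibel convention. The function names are illustrative, and the value of about 0.2 dB/km is derived here from the paragraph's numbers rather than quoted from the article.

```python
import math

def loss_db_per_km(fraction_lost, distance_km):
    """Uniform attenuation (dB/km) implied by losing `fraction_lost` over `distance_km`."""
    remaining = 1.0 - fraction_lost
    return -10.0 * math.log10(remaining) / distance_km

def fraction_remaining(distance_km, db_per_km):
    """Fraction of the launched optical power left after `distance_km` of fiber."""
    return 10.0 ** (-db_per_km * distance_km / 10.0)

# Figures from the text: about 90 percent of the light is lost after 50 km.
ALPHA = loss_db_per_km(0.90, 50.0)       # about 0.2 dB/km
print(round(ALPHA, 3))
print(fraction_remaining(100.0, ALPHA))  # about 1 percent survives 100 km
```

Because the loss compounds, doubling the distance squares the surviving fraction, which is why signals need a boost at regular intervals.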

Communications engineers therefore need to boost the intensity of the light at regular intervals, but this approach has limitations of its own. The interaction between a powerful, freshly boosted signal and the glass in a fiber can cause distortions in the signal that build up with distance, a bit like a haze in the air that obscures distant objects more than nearby ones. These distortions are called nonlinear because they don’t double if the intensity of the light is doubled. Instead they increase at a faster rate. When the light is intense enough the distortions will drown the signal in noise. The story of fiber is a saga of finding ways to boost the data rate and the length of transmission despite the scattering and distortion problems.

The very first fiber-optic messages were encoded by simply switching the laser source on and off. Engineers made steady improvements in how quickly that switching could be done. By the mid-1980s, a few years into the dawn of commercial fiber networks, the strategy could be used to send several hundred megabits per second through a few tens of kilometers of glass.

To keep the signal going after the first 50 km, a repeater was then used to convert light pulses into electronic signals, clean them up, amplify them, and then retransmit them with another laser down the next length of fiber.

This electro-optical regeneration process was cumbersome and costly. Fortunately, a better approach soon emerged. In 1986, David Payne of the University of Southampton, in England, showed that it is possible to amplify light directly inside an optical fiber instead of using external electronics.

Payne added a dash of a rare-earth element called erbium to a fiber core. He found that by exciting erbium atoms with a laser, he could prime them to amplify incoming light with a wavelength of 1.55 µm—just the point where optical fibers are most transparent. By the mid-1990s, amplifiers containing erbium-doped fibers were already being installed to stretch fiber transmission distances. Depending on their spacing, a series of amplifiers could relay signals over a distance of 500 to several thousand kilometers before the signals had to be converted to electronic signals for cleaning up and regeneration with more expensive gear. Today, chains of erbium-fiber amplifiers can extend fiber connections across continents or oceans.

The emergence of the erbium-fiber amplifier opened the door to another way to boost data rates: multiple-wavelength communication. Erbium atoms actually amplify light across a range of wavelengths, and can be made to do so quite uniformly from 1.53 to 1.57 µm. That’s a wide enough band to accommodate multiple signals in the same fiber, each with its own much narrower band of wavelengths.
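To get a feel for how wide that band is, the wavelength range can be converted to frequency. This is a rough sketch: the 50-GHz channel spacing is an assumption (a commonly used grid spacing, not a figure from the article), and the function names are illustrative.

```python
C_KM_PER_S = 299_792.458  # speed of light in vacuum, km/s

def wavelength_um_to_thz(wavelength_um):
    """Optical frequency in THz for a vacuum wavelength in micrometers."""
    # (km/s) / um = GHz, so divide by 1000 to get THz
    return C_KM_PER_S / wavelength_um / 1000.0

def band_width_thz(short_um, long_um):
    """Frequency width of the band between two wavelengths."""
    return wavelength_um_to_thz(short_um) - wavelength_um_to_thz(long_um)

def channel_count(short_um, long_um, spacing_ghz):
    """Whole number of channels that fit at a given spacing (assumed grid)."""
    return int(band_width_thz(short_um, long_um) * 1000.0 // spacing_ghz)

# Erbium band from the text: 1.53 to 1.57 um, roughly 5 THz wide.
print(round(band_width_thz(1.53, 1.57), 2))
# Assumed 50-GHz spacing gives on the order of 100 channels.
print(channel_count(1.53, 1.57, 50.0))
```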

Modern Fiber Optics: The Basics

This multiwavelength approach, dubbed wavelength-division multiplexing, along with further improvements in how quickly laser signals could be turned on and off, led to an explosion in capacity in the mid- and late-1990s. By 2000, fiber-optic transmission systems were commercially available that could amplify as many as 80 separate signals, each carrying 10 gigabits per second. In reality, nobody needed all that transmission capacity at the time, so transmission systems were installed with only a few wavelengths and the option to add more channels later.

Network operators added more wavelengths to existing fibers as the Internet took off in the early 2000s. But about a decade ago, it became clear that the traditional way of encoding signals was reaching its limit, and that some routes would soon run out of capacity without new technology or more fibers. An on-off signal carries only one bit at a time (if light in a given interval exceeds a threshold power, it generally represents 1; light below the threshold represents 0). The only way to pack more bits per second with this approach is to do what engineers had traditionally done to such signals: Shorten how long each pulse—or lack of pulse—lasts.
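The threshold rule described above can be sketched in a few lines. This is only an illustration: the power levels, the threshold of 0.5, and the function names are all hypothetical, and a real receiver must also recover timing and cope with noise.

```python
def ook_encode(bits, high_power=1.0, low_power=0.0):
    """On-off keying: light on for a 1, light off for a 0 (one bit per interval)."""
    return [high_power if b else low_power for b in bits]

def ook_decode(powers, threshold=0.5):
    """Compare the power received in each interval against a threshold."""
    return [1 if p > threshold else 0 for p in powers]

def bit_period_ps(bit_rate_gbps):
    """Length of one signaling interval in picoseconds."""
    return 1000.0 / bit_rate_gbps

sent = [1, 0, 1, 1, 0]
received = [0.9, 0.1, 0.8, 0.7, 0.2]   # hypothetical attenuated, noisy powers
print(ook_decode(received) == sent)
print(bit_period_ps(10.0), bit_period_ps(100.0))  # intervals shrink as rates rise
```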

Unfortunately, the shorter the pulse, the more vulnerable it becomes to an optical effect called dispersion. This is the same phenomenon that causes prisms to spread light into a rainbow of colors. It arises because the speed of light in glass varies with wavelength. Even a pulse of laser light, whose spectrum is as close to a single wavelength as you can get, will stretch out as it travels through a fiber. And as pulses stretch out, they interfere with one another. The problem gets worse as the data rate increases and the interval between successive pulses gets shorter. The upshot is that a fiber that could carry 10 Gb/s for 1,000 km would carry 100 Gb/s for only 10 km before the signal would need to be cleaned up and regenerated.
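The two figures in that last sentence are consistent with reach falling as the inverse square of the bit rate. That scaling is an assumption here (a common rule of thumb for on-off signals without dispersion compensation), not a formula given in the article, and the function name is illustrative.

```python
def dispersion_limited_reach_km(bit_rate_gbps, ref_rate_gbps=10.0, ref_reach_km=1000.0):
    """Reach before regeneration, assuming it scales as 1 / (bit rate squared).

    The reference point (10 Gb/s over 1,000 km) is taken from the text.
    """
    return ref_reach_km * (ref_rate_gbps / bit_rate_gbps) ** 2

for rate in (10.0, 40.0, 100.0):
    print(rate, "Gb/s ->", round(dispersion_limited_reach_km(rate), 1), "km")
```

The square arises because a shorter pulse both spreads faster, since it spans a wider range of wavelengths, and has less room to spread before it overlaps its neighbor.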

Fibers with improved designs were devised to cut down on pulse dispersion, but replacing the existing fiber network would have been prohibitively expensive. And by 2001, overbuilding during the Internet bubble had left behind vast amounts of unused, “dark” fiber. Fortunately, engineers had other tricks, including two techniques that were previously used to squeeze more wireless and radio signals into narrow slices of the radio spectrum.

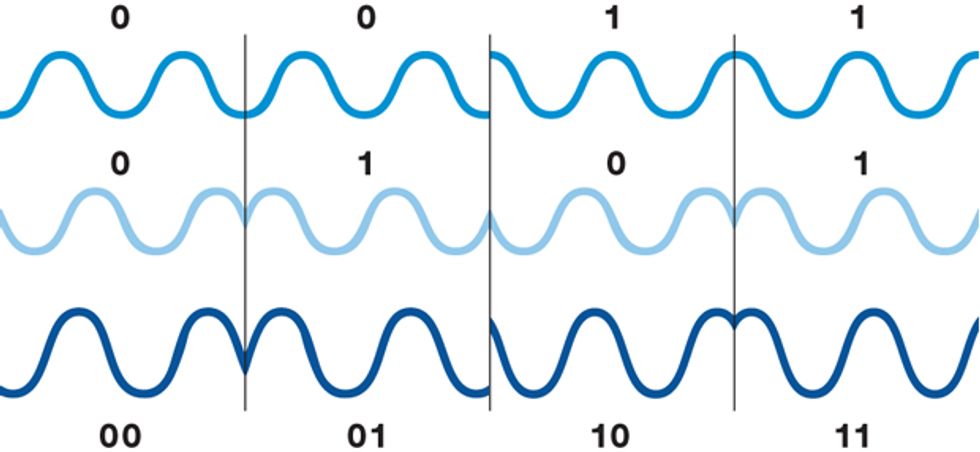

One was a change to the way signals are encoded. Instead of turning the laser on and off, leave it on all the time and modulate its phase, meaning the timing of the arrival of its peaks and troughs. The simplest such digital phase modulation shifts the peak of a wave by a quarter wavelength, or 90 degrees, ahead or behind the wave’s natural arrival time. The wave representing a 1 will then be at its peak when the wave representing a 0 is at its trough. This approach still yields only two states, or one bit per signal interval, but the capacity of the signal can be doubled by combining two waves. Together, they shift the phase in smaller increments, by +135, +45, -45, or -135 degrees. The four resulting states are used to represent the four possible two-bit combinations: 00, 01, 10, and 11.
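The four-phase scheme can be sketched with complex numbers, where each symbol is a point on the unit circle. The article does not say which bit pair goes with which phase, so the mapping below is a hypothetical Gray-coded choice, and the function names are illustrative.

```python
import cmath
import math

# Hypothetical Gray-coded mapping: neighboring phases differ in only one bit.
PHASE_DEG = {(0, 0): 45.0, (0, 1): 135.0, (1, 1): -135.0, (1, 0): -45.0}

def qpsk_modulate(bits):
    """Turn a flat list of bits into unit-amplitude symbols, two bits per symbol."""
    if len(bits) % 2:
        raise ValueError("QPSK needs an even number of bits")
    pairs = zip(bits[0::2], bits[1::2])
    return [cmath.rect(1.0, math.radians(PHASE_DEG[pair])) for pair in pairs]

def qpsk_demodulate(symbols):
    """Pick the nearest of the four ideal phase states for each received symbol."""
    bits = []
    for s in symbols:
        nearest = min(
            PHASE_DEG,
            key=lambda pair: abs(s - cmath.rect(1.0, math.radians(PHASE_DEG[pair]))),
        )
        bits.extend(nearest)
    return bits

data = [0, 0, 0, 1, 1, 1, 1, 0]
symbols = qpsk_modulate(data)             # 8 bits travel as 4 symbols
print(len(symbols), qpsk_demodulate(symbols) == data)
```

With this layout a phase error of less than 45 degrees still decodes correctly, and a larger error that lands on a neighboring state corrupts only one of the two bits.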

In 2007, Bell Labs and Verizon used a variant of this approach, called differential quadrature phase-shift keying, to send 100 Gb/s through some 500 km of fiber in Verizon’s Florida network. This was a big deal but still not quite good enough for Verizon, which, like other long-haul carriers, wants signals to be able to travel 1,000 to 1,500 km along the cables in its workhorse backbone system before requiring an expensive regenerator.

Fortunately, a second technique was able to bridge that distance. This one exploits coherence, an intrinsic property of laser light. Coherence means that if you cut across the beam at any point, you’ll find that all its waves will have the same phase. The peaks and troughs all move in concert, like soldiers marching on parade.

Coherence can be used to drastically improve a receiver’s ability to extract information. The scheme works by combining an incoming fiber signal with light of the same frequency generated inside a receiver. With its clean phase, the locally generated light can be used to help determine the phase of the noisier incoming signal. The carrier wave can then be filtered out, leaving the signal that was added to it. The receiver converts that remaining signal into an electronic form carrying the 1s and 0s of the information that was sent.
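A toy numerical model shows why mixing with a clean local wave reveals the phase. It assumes the local light matches the incoming carrier frequency exactly and that the waves can be sampled directly, neither of which holds in a real optical receiver. The function name and sample counts are illustrative.

```python
import math

def recover_phase_deg(signal_phase_deg, samples_per_cycle=64, cycles=8):
    """Estimate a carrier's phase by mixing it with a local reference wave.

    Multiplying by the local cosine and (negative) sine and averaging over
    whole cycles removes the carrier and leaves terms proportional to
    cos(phase) and sin(phase).
    """
    phi = math.radians(signal_phase_deg)
    in_phase = 0.0
    quadrature = 0.0
    for k in range(samples_per_cycle * cycles):
        theta = 2.0 * math.pi * k / samples_per_cycle
        incoming = math.cos(theta + phi)          # phase-modulated carrier
        in_phase += incoming * math.cos(theta)    # mix with local cosine
        quadrature += incoming * -math.sin(theta) # mix with local sine
    return math.degrees(math.atan2(quadrature, in_phase))

for sent in (135.0, 45.0, -45.0, -135.0):
    print(sent, round(recover_phase_deg(sent), 6))
```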

Achieving such coherent reception with infrared light was trickier than with radio waves; it was difficult for optical receivers to match the frequency of the input light signal. That changed with the development of advanced digital signal processors in the early 2000s. They allowed the receiver to deal with the mismatch between the local light and the incoming signal, reconstruct the signals’ phase and timing, and correct for pulse spreading that occurred en route.

Together, quadrature coding and coherent detection—along with the ability to transmit using two different polarizations of light—have carried optical fibers to their present limit. Today, new transmitter and receiver systems allow a single optical channel—a single wavelength—to carry 100 Gb/s over long distances, in fibers that were designed to carry only 10 Gb/s. And because a typical fiber can accommodate roughly 100 channels, the total capacity of the fiber can approach 10 terabits per second.

Quadrature Phase-Shift Keying

Most of the optical fibers installed since 1990 in regional, national, and international cable systems are compatible with this technology, and in the past six years, many backbone networks have been upgraded to carry signals at that rate. “It’s out there in pretty robust quantities in long-haul terrestrial transport, and most if not all transoceanic submarine cable upgrades are being done at 100 gig,” says Erik Kreifeldt, a senior analyst at the research firm TeleGeography.

For a sense of the numbers, consider a recent fiber system by Ciena Corp., a Hanover, Md.–based company. The system can transmit 96 channels, each carrying 100 Gb/s, across hundreds to thousands of kilometers. All together that amounts to 9.6 Tb/s—enough for 384,000 people to stream Ultra HD from Netflix. And that’s just one fiber; today’s fiber-optic cables can carry anywhere from about a dozen to several hundred fibers.
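The arithmetic behind those figures is easy to check. The per-stream rate of 25 Mb/s is an assumption: it is the value implied by the article's numbers, but the article does not state it. The function names are illustrative.

```python
GBPS = 10**9
MBPS = 10**6

def fiber_capacity_bps(channels, per_channel_bps):
    """Total capacity of one fiber carrying `channels` wavelengths."""
    return channels * per_channel_bps

def concurrent_streams(capacity_bps, stream_bps):
    """Whole number of video streams that fit in the capacity."""
    return capacity_bps // stream_bps

ULTRA_HD_BPS = 25 * MBPS                          # assumed rate per Ultra HD stream

capacity = fiber_capacity_bps(96, 100 * GBPS)     # 96 channels at 100 Gb/s each
print(capacity / 10**12, "Tb/s")                  # 9.6 Tb/s
print(concurrent_streams(capacity, ULTRA_HD_BPS)) # 384000
```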

But, other than a brief period after the tech bubble collapsed in the early 2000s, the world has never had enough bandwidth. Global Internet traffic increased fivefold from 2010 to 2015, according to a recent report from Cisco. The trend is likely to continue with the growth of streaming video and the Internet of Things.

So developers are considering their options.

One idea is to adopt even more advanced signal-coding techniques. The quadrature phase shifting used today encodes two bits per signal interval, but Wi-Fi and other wireless systems use even more complex coding. The widely used 16-QAM code, for example, can carry all 16 possible combinations of four bits, from 0000 to 1111. Some cable television equipment uses 256-QAM.

Such advanced coding schemes do work in fiber, but as you might expect, there’s a trade-off. The more complex the encoding, the closer the information is packed together. The signal can tolerate fewer perturbations before parts of it wind up in the wrong place. Turning up the power can help, but it also creates nonlinear distortion, which worsens with distance. As a result, system makers are generally considering 16-QAM only for relatively short links—of up to a few hundred kilometers.
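The trade-off can be made concrete by comparing how far apart the signal states sit when the average power is held fixed. This sketch assumes ideal square constellations (treating quadrature phase shifting as the 4-state case), which is a textbook simplification rather than a description of any vendor's equipment. The function names are illustrative.

```python
import math

def bits_per_symbol(m):
    """Bits carried per signal interval by a code with m states (m a power of 2)."""
    if m < 2 or m & (m - 1):
        raise ValueError("m must be a power of two")
    return m.bit_length() - 1

def square_qam_points(m):
    """Ideal square constellation, e.g. levels -3, -1, 1, 3 on each axis for m=16."""
    side = math.isqrt(m)
    if side * side != m:
        raise ValueError("m must be a perfect square")
    levels = [2 * i - (side - 1) for i in range(side)]
    return [complex(i, q) for i in levels for q in levels]

def min_distance_at_unit_power(m):
    """Spacing between neighboring states after scaling to average power 1."""
    points = square_qam_points(m)
    avg_power = sum(abs(p) ** 2 for p in points) / len(points)
    return 2.0 / math.sqrt(avg_power)  # neighbors are 2 apart before scaling

for m in (4, 16, 256):
    print(m, bits_per_symbol(m), round(min_distance_at_unit_power(m), 3))
```

Going from 4 to 16 states doubles the bits per interval but cuts the spacing between neighboring states by more than half, so a smaller disturbance is enough to cause an error.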

For longer-haul fibers, engineers have instead devised a way to squeeze channels closer together. There’s room to work with: Today’s advanced long-haul fibers may contain dozens of channels, but they leave chunks of wavelength unused between adjacent channels to prevent cross talk. If those buffer zones are removed, more channels can be packed into each fiber, creating what system engineers call a superchannel, which transmits at every wavelength inside a fiber-optic band. The change can increase transmission efficiency by up to 30 percent, says Helen Xenos, director of product and technology marketing for Ciena.

The trick is to find a way to encode signals so they don’t interfere with one another, and at least a few companies have found ways to make it work. In 2013, Ciena and British telecommunications group BT packed multiple channels together without buffers to create an 800-Gb/s superchannel along a 410-km stretch running between London and Ipswich. At least one Ciena customer, the company says, is in the process of deploying a superchannel system on fibers in a transoceanic cable.

Ciena says it uses individual chips to generate each laser signal. But they can also be combined onto one chip, a more compact and potentially cheaper approach. “Our secret sauce is our photonic integrated circuit technology,” says Geoff Bennett, director of solutions and technology at Infinera. In 2014, Bennett says, the company demonstrated a short, 1-Tb/s superchannel made using 10 laser transmitters incorporated into a single photonic integrated circuit. He says future systems should be capable of taking fiber capacity to 12 Tb/s along long-haul networks, and twice that rate for shorter systems used in metropolitan areas.

À La Mode

Such 12-Tb/s superchannels are still a few years away. But when they arrive, they’ll likely be the last capacity boost that we can give to the current generation of installed fibers. That’s because those fibers will be approaching a fundamental barrier called the nonlinear Shannon limit. It’s an extension of a limit, described in 1948 by information theorist Claude Shannon, that says a transmission channel can carry only so much information without error given its bandwidth and signal-to-noise ratio. The nonlinear version includes an additional factor: a limit on how much the power of a signal can be turned up before nonlinear effects that can arise in glass—but not in air—generate enough noise to drown out the signal.
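The shape of that limit can be sketched numerically. Shannon's formula gives capacity as bandwidth times log2(1 + signal-to-noise ratio). The nonlinear term below, extra noise growing as the cube of the signal power, is an assumed simplification often used for fiber and is not given in the article. Every numeric value and function name here is hypothetical.

```python
import math

def shannon_capacity_bps(bandwidth_hz, snr):
    """Shannon's 1948 limit for a channel with the given bandwidth and SNR."""
    return bandwidth_hz * math.log2(1.0 + snr)

def nonlinear_snr(power_w, noise_w, eta):
    """SNR when distortion adds noise proportional to power cubed (assumed model)."""
    return power_w / (noise_w + eta * power_w ** 3)

def best_launch_power_w(noise_w, eta):
    """Power that maximizes nonlinear_snr; found by setting its derivative to zero."""
    return (noise_w / (2.0 * eta)) ** (1.0 / 3.0)

BANDWIDTH_HZ = 50e9   # hypothetical channel bandwidth
NOISE_W = 1e-6        # hypothetical amplifier noise power
ETA = 500.0           # hypothetical nonlinear coefficient, 1/W^2

p_best = best_launch_power_w(NOISE_W, ETA)
for p in (p_best / 4, p_best / 2, p_best, 2 * p_best, 4 * p_best):
    cap = shannon_capacity_bps(BANDWIDTH_HZ, nonlinear_snr(p, NOISE_W, ETA))
    print(f"{p * 1e3:.2f} mW -> {cap / 1e9:.0f} Gb/s")
```

In a purely linear channel, more power always buys more capacity. In this model capacity peaks at a finite power and then falls, which is the behavior the nonlinear limit describes.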

There is no getting around the nonlinear Shannon limit. But when the time comes to install more fibers, carriers will have other options. “The change that is best established and best understood,” says Infinera’s Bennett, is to simply introduce fiber with a larger core. Early fibers were designed with small cores, which strongly limited the number of paths light could take. Using a smaller core helped prevent photons in the signal from bouncing off the core-cladding interface at different angles. If the photons in a pulse did that, they’d take different paths—some longer, some shorter—spreading out the pulse so that it would interfere with the next one.

New fiber designs use novel core microstructures, such as photonic crystals, to constrain the light to follow the same path through cores with up to about twice the cross-sectional area of the standard 9-µm fiber. Because the signal has more space, cross-section-wise, to pass through, its energy density is lower. This decrease in energy density cuts down on those same nonlinear distortions that limit transmission distances and speeds. The end result is an increase in data rates; future versions might boost capacity by as much as a factor of 10, says Bennett.

These larger-core fibers are already being deployed, mostly in submarine cables where transmission capacity is most valuable. And they’re generally a good option for a new connection, says Bennett: “If somebody is planning to deploy new terrestrial fiber, they might as well deploy large-area fiber.” But as attractive as they may be, large-core fibers don’t completely eliminate the nonlinear distortion problem.

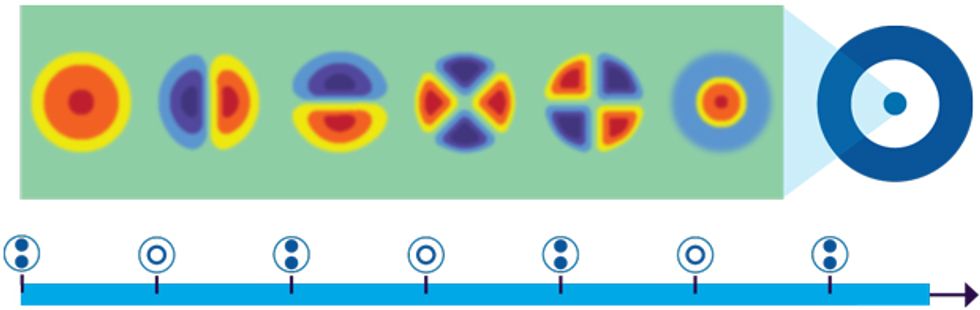

A potentially more promising approach is to create multiple parallel paths along which separate light signals can travel. Developers call it spatial-division multiplexing because the strategy splits the transmitted data into different physical paths.

The term actually refers to three very different kinds of parallel transmission. The simplest and most obvious approach is to add more physical paths by adding more fibers to a cable. Multifiber cables are already in wide use, but boosting capacity can be costly and complex because each fiber in a cable needs its own transmitters, receivers, and amplifiers.

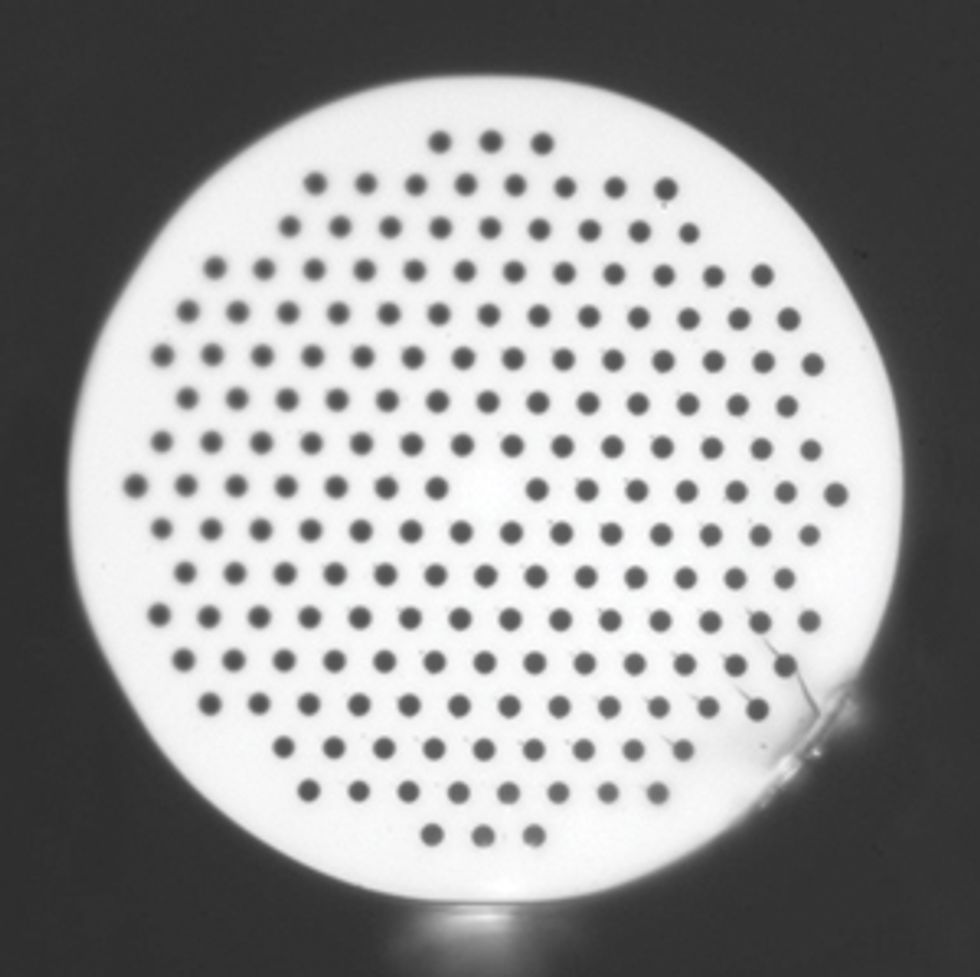

Much bigger rewards may be possible if engineers can find a way to integrate separate light paths within the same fiber in a compact fashion. One way to do that is to construct fibers that contain several light-guiding cores running along their length. Like an ordinary fiber, a multicore fiber is made by first assembling the required materials in a cylindrical “preform,” which is then heated so the glass can be pulled into a long thin fiber.

Unlike multifiber cables, which need a separate fiber amplifier for each fiber, a multicore fiber could be paired with a multicore amplifier. An eight-core amplifier could potentially cost much less than eight single-fiber amplifiers.

An alternative is to make a core that can guide light in a few distinct ways, called modes. Light signals in two different modes pass through each other along the fiber, but they can be isolated from each other when they emerge at the end of the fiber.

To create multiple modes in a fiber, the mode for each signal must be shaped to have the right cross section as it goes into the fiber. Each mode would need to be generated by its own laser, and optics and electronics at the receiving end must be able to separate out the modes. This separation is already done in radio systems using multiple-input/multiple-output antennas.
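The separation step can be illustrated for two modes. This sketch assumes the fiber mixes the modes linearly and that the receiver already knows the mixing matrix, both simplifications: a real system has to estimate the mixing continuously. The matrix values and function names are hypothetical.

```python
def mix(h, sent):
    """Apply a 2x2 mixing matrix h to a pair of signals."""
    return [
        h[0][0] * sent[0] + h[0][1] * sent[1],
        h[1][0] * sent[0] + h[1][1] * sent[1],
    ]

def unmix(h, received):
    """Recover the original pair by applying the inverse of h."""
    det = h[0][0] * h[1][1] - h[0][1] * h[1][0]
    if abs(det) < 1e-12:
        raise ValueError("mixing matrix is not invertible; modes cannot be separated")
    inverse = [
        [h[1][1] / det, -h[0][1] / det],
        [-h[1][0] / det, h[0][0] / det],
    ]
    return mix(inverse, received)

# Hypothetical coupling between two modes along the fiber.
H = [[0.9, 0.3j], [0.2, 0.8]]
sent = [1 + 1j, -1 + 1j]          # one symbol launched in each mode
received = mix(H, sent)           # each detector sees a blend of both modes
recovered = unmix(H, received)
print([complex(round(x.real, 6), round(x.imag, 6)) for x in recovered])
```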

So far, both multimode and multicore fiber transmission are in the early stages of development. There have been multiple laboratory tests, dubbed “hero experiments” because they’re out to set records that impress reporters or supervisors. Such demonstrations suggest that each approach has the potential to multiply fiber capacity significantly. Together they might push capacity up by a factor of perhaps a few hundred.

But the systems needed to exploit these approaches aren’t practical yet, and a host of questions remain. “Basically all spatial-division multiplexing techniques have their own showstopping problems today,” says Bennett. For example, for multicore and multimode fibers, simply connecting the ends of the fibers to transmitters and receivers is far more complex than for standard fibers. In both cases, much more mechanical precision is needed. Great care has to be taken to make sure light goes in exactly as intended. And for multicore fibers with multicore amplifiers, the cores in each system have to match up with extreme precision.

Barring an engineering breakthrough, “it’s almost always easier to just light up another fiber,” Bennett says. “This is what service providers are telling us.”

Peter Winzer, a distinguished member of technical staff at Bell Labs and a leader in high-speed fiber systems, agrees that installing new cables with even more fibers is the simplest approach. But in a recent article, he warned that this approach, which will add to the cost of a cable, might not be popular among telecommunications companies. It wouldn’t reduce the cost per transmitted bit as much as they had come to expect from earlier technological improvements.

New ideas continue to emerge. In June 2015, Nikola Alic of the University of California, San Diego, and colleagues reported a way of increasing fiber transmission distance by using optical frequency combs, which naturally lock laser wavelengths relative to one another, eliminating jitter and improving signal quality. “We can at least double the data rate of any system” by using a frequency comb, says Alic. “It is very nice and solid work,” says Winzer, but he doubts it would have much practical impact, because developers want a bigger increase.

What will come next? Today telecommunications carriers have their hands full installing 100-Gb/s coherent systems. Superchannels will boost maximum capacity by 30 percent or so, and spatial-division multiplexing looks like the best candidate for the next big jump in capacity. But beyond that, who knows?

Perhaps some new twist on an old idea might come along. Coherent transmission, which was finally adopted around 2010, was actually a hot topic in the 1980s, but it lost out then to other technologies that were ready to deploy. Something totally new might emerge from the fertile ground of photonics research. And we could always lay more fibers. In any case, the global thirst for data will keep engineers working very hard to keep pumping up the bandwidth.

This article originally appeared in print as “Great Leaps of Light.”

On 27 January 2016 this text was updated to clarify quadrature coding terminology.

About the Author

Freelance writer Jeff Hecht has covered fiber for roughly 40 years—“an embarrassingly long time,” he says—and written several books on the topic along the way. In retrospect, he says, “It was sort of like covering a winning sports team on a roll.” Now the question is what comes next.