At a human-computer interaction conference this week in Glasgow, U.K., Carnegie Mellon University researcher Michal Luria is presenting a paper on “Challenges of Designing HCI for Negative Emotions.” The discussion includes a case study involving what Luria calls “cathartic objects”: robotic contraptions that you can beat, stab, smash, and swear at to help yourself feel better.

In the paper, presented at the ACM CHI Conference on Human Factors in Computing Systems, Luria and co-authors Amit Zoran and Jodi Forlizzi point out that technology tends to handle negative emotions by trying to “fix” them immediately:

Technology is often designed to support positive emotions, yet it is not very common to encounter technology that helps people engage with emotions of sadness, anger or loneliness (as opposed to resolving them)... As technology gains a central role in shaping everyday life and is becoming increasingly social, perhaps there is a design space for interaction with social and personal negative emotions.

The researchers acknowledge that it’s going to be challenging to find “cathartic” ways of engaging with negative emotions using technology that can demonstrably improve well-being, and that studying the topic is going to be tricky as well. But it certainly seems like an important design space to investigate, especially as we look to social robots of all kinds to play a more prominent role in our lives. Luria built her “cathartic objects” to explore a range of possible physical interactions:

Object 1 senses when it is poked with a sharp object. It responds in side-to-side gestures that signal it has absorbed the pain. When too many objects are inserted, it continues shaking until everything is removed, to encourage completion of a catharsis cycle.

Object 2 allows the user to verbally express frustration through cursing. The object recognizes cursing words, “absorbs” them, and “re-purposes” them as light energy.

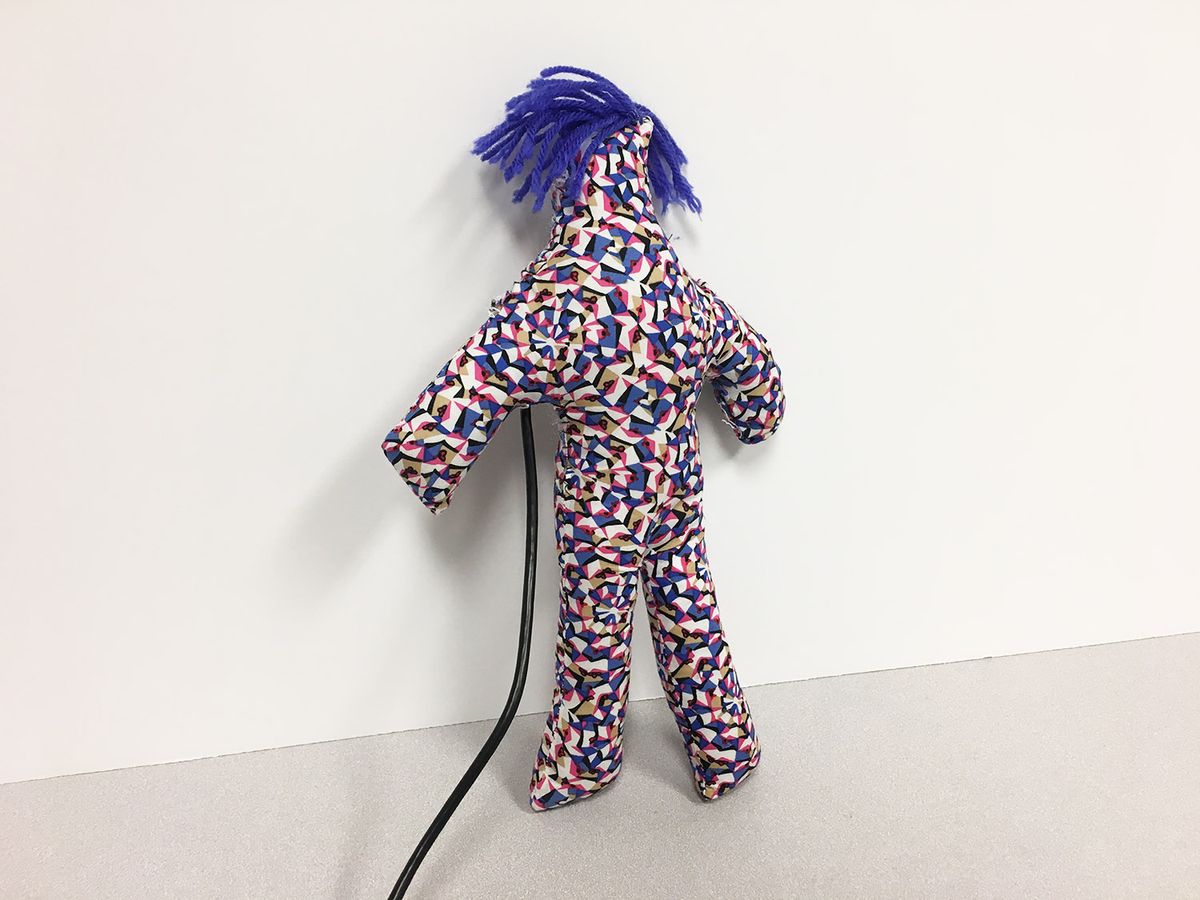

Object 3 is a doll-like prototype that laughs in an irritating way when it senses the user is angry. Its goal is to encourage the user to physically express their emotional state using the doll. As the user hits the soft prototype against something, it stops laughing and re-evaluates the user’s need for additional catharsis.

Object 4 allows the user to create a personalized message, and then to destroy it. The user inscribes a ceramic tile and inserts it into the object to destroy with a hammer. As a result, the tile breaks and triggers a sequence of expressive light and sound, but is kept inside the object. The object allows the user to address a specific source of frustration without doing harm, and to use the artifact to document and reflect on their cathartic action.

It’s worth pointing out that these robotic objects are not being improperly used or “mistreated.” Here, the robots are being used in exactly the way they were designed to be. If it helps, think of these robots as being akin to a punching bag, although you could say there’s a big difference between a silent punching bag and a robotic object that appears to have agency and reacts to your, er, inputs. So, clearly, encouraging aggression towards such objects brings up some deep ethical questions.

We asked Luria, who is currently a Ph.D. candidate at CMU’s Human-Computer Interaction Institute, about this and other issues via email.

IEEE Spectrum: Why did you decide on these four objects with these specific interaction modes? Were there other kinds of objects that you considered?

Michal Luria: The chosen prototypes were designed to probe a range of physical interactions, as physical interaction is a critical part of cathartic expression. The prototypes varied in multiple physical aspects: the interaction input (verbal or physical), the output (movement, light, or sound), the quality of interaction (instantaneous, continuous, forceful, or gentle), and the material the robotic objects are made of (fabric, ceramics, and plastic). I also tested the idea of cathartic expression that is documented versus one that leaves no trace.

The goal was to create a broad range of nuanced interactions to see how they would influence the cathartic experience. Some ideas that I did not build but might include in future iterations are an Alexa-type device made of concrete that would break apart when things are thrown at it, and a robotic prototype made of wax that would melt through extended interaction.

How will you be able to figure out the effectiveness of objects like these without user studies? In your own personal experience, how effective do you feel these objects are?

It has been extremely challenging to get approval for formal human subject studies that center on negative emotions and destructive behaviors. We also know from research in psychology that people tend to feel aversion towards the idea of any negative emotions, which does not help the case. My first step towards figuring out the effectiveness is through autoethnographic or autobiographical methods, which have recently been adopted in HCI design research. These methods have some drawbacks, but they might be suitable for understanding long-term and intimate interaction, especially on a topic that is somewhat controversial.

From my anecdotal experiences with the prototypes, I especially enjoyed the verbal one. It felt very cathartic. I think it might have to do with personality and personal preference. When I get very angry, my tendency is to swear, but only when a very close person is nearby. I realized that, surprisingly, I don’t swear when I’m alone, and of course I don’t do it around peers or acquaintances. Even with some closer friends it seems inappropriate. This is why I think it might be an interesting space for robotic objects: We don’t want to take our aggression out on other people, but we also frequently don’t let that energy out when we are alone. Maybe there is a safe space to express negative emotions with technology. Similarly, I enjoyed the prototype that begins laughing when you get too angry; that small encouragement really worked for me.

I hope that at some point I will be able to test these interactions with more people. This might be through formal user studies, or in an art exhibition.

Are you concerned that using robots like these to assist in the expression of negative emotions might reinforce negative behaviors towards other robots, especially in children?

Yes, 100 percent. I think this is an extremely important question, and is one of the concerns in approving human subject research for this work. That said, this is a question that we are going to have to deal with eventually, not only regarding negative emotions, but also around sex robots, or even a simple interaction like kids saying ‘please’ to Alexa—this has an effect on how we understand human interactions and how we interact with each other.

So I think we need to find a way to safely conduct this kind of research so we can better understand the potential consequences or benefits. Catharsis has been controversial since its early days, but recently researchers have been finding that physical expression of anger in particular contexts, or combined with reflection, can be beneficial.

This concern is also the reason why I designed the prototypes to be somewhat expressive, but overall very non-anthropomorphic. I hope that if the creature you interact with seems nothing like a human but can still give a sense that it absorbs your pain, it might work. I did have a response from a friend who said she felt extremely sorry for the object that is poked with needles, as it reminded her of the ottoman in “Beauty and the Beast.”

How can robotics as a field make better use of negative as well as positive emotions in human-robot interactions?

Through this project, I try to push back on the tendency to build technology that sets out to make people “happier” or more efficient. We can’t treat every negative emotion as a problem that should be solved by technology. After reading a wonderful book of essays that highlights the importance of every single emotion that is perceived as “negative,” I came to realize that negative emotions are positive.

I have been able to feel much more comfortable being sad or angry or bored, and I hope that as a field we can support these kinds of emotions too (in humans! not robots!) and consider them regardless of their complexity. This is especially true for social robots. We are designing social robots to learn, understand, and respond to emotional cues, and at the moment, as designers, we have no idea how a robot should deal with negative emotions of any kind. They probably shouldn’t just ignore them.

How would you like to continue this research?

I am currently working on integrating the objects in long-term autobiographical research to see whether they are effective for catharsis over time. I am also interested in researching how robots should respond to natural negative expressions among users (rather than encourage them)—social robots are going to be exposed to negative emotions if they are going to be in intimate spaces, so we should probably begin to think about that.

[ Michal Luria ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.