This article is part of our exclusive IEEE Journal Watch series in partnership with IEEE Xplore.

When it’s suspected that a person may have a certain neurological disorder, such as multiple sclerosis or Parkinson’s disease, doctors will often assess the person’s ability to walk. Simply by looking at someone’s gait, clues may emerge about an underlying neurological disorder.

In a recent study, a team of researchers at the University of Illinois explored a technique using standard video cameras combined with AI that can assess a person’s gait and identify those who may have Parkinson’s disease or MS. The results, which show the approach can reach accuracies as high as 79 percent, were published on 20 September in the IEEE Journal of Biomedical and Health Informatics.

Neurological disorders can often cause subtle changes in a person’s gait, even during the early-to-mid stages of disease. Health care professionals often assess gait for neurological abnormalities using specialized equipment such as lab-based motion-capture systems, force plates, or electromyography sensors. This equipment can be expensive, and skilled personnel are needed to analyze the results.

“The integration of video of people walking and AI may allow for a wider range of health care providers in rural or underserved communities to identify early gait changes from neurological conditions and more efficiently provide a potential diagnosis,” explains Manuel Enrique Hernandez, an assistant professor in the Department of Kinesiology and Community Health at the University of Illinois at Urbana-Champaign.

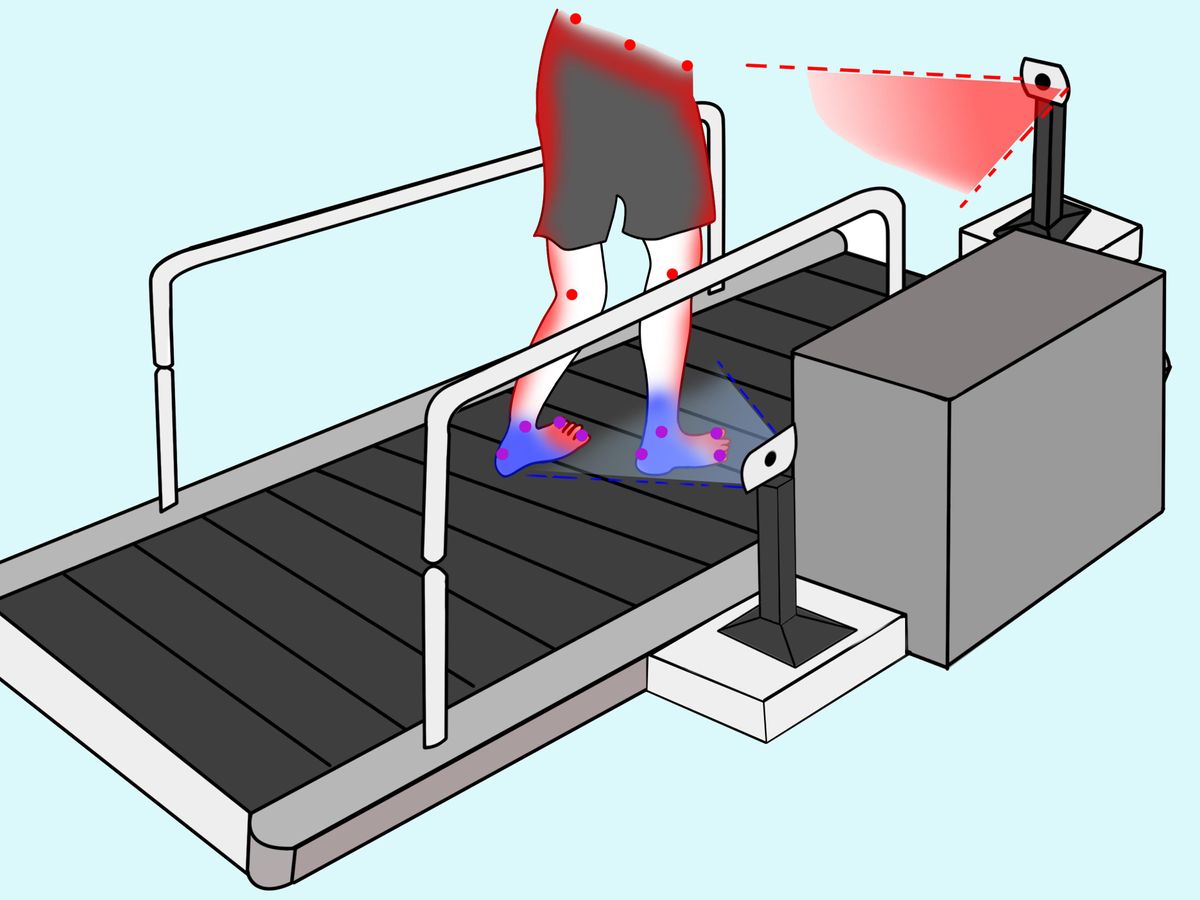

In their study, Hernandez and his colleagues recruited a total of 33 volunteers—10 with MS, 9 with Parkinson’s disease, and 14 who did not have any neurological disease. All of the volunteers were asked to walk on a treadmill while two standard RGB cameras recorded their movements from side and front angles.

“We looked at the body coordinates for hips, knees, ankles, the big and small toes and the heels,” explains Rachneet Kaur, a Ph.D. student at the University of Illinois who was involved in the research. “We analyzed how these coordinates moved over time to look for differences between adults with and without MS or Parkinson’s disease.”
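To give a sense of what tracking body coordinates over time can look like in practice, here is a minimal sketch in Python. It is not the researchers' code. It assumes each video frame has already been reduced to 2D pixel coordinates for the hip, knee, and ankle, and it computes the knee angle in every frame. The function names, the frame layout, and the sample coordinates are all hypothetical.

```python
import math


def joint_angle(a, b, c):
    """Return the angle in degrees at point b, between segments b->a and b->c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0:
        raise ValueError("two keypoints coincide, so the angle is undefined")
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos_angle = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_angle))


def knee_angle_series(frames):
    """Compute the knee angle for each frame of hypothetical keypoint data."""
    return [joint_angle(f["hip"], f["knee"], f["ankle"]) for f in frames]


# Hypothetical keypoints (x, y) for two frames; real values would come
# from a pose-estimation tool run on the recorded video.
frames = [
    {"hip": (0.0, 0.0), "knee": (0.0, 1.0), "ankle": (0.0, 2.0)},  # straight leg
    {"hip": (0.0, 0.0), "knee": (0.0, 1.0), "ankle": (1.0, 1.0)},  # knee bent at a right angle
]
angles = knee_angle_series(frames)
```

A series like this, collected across a full walking bout, is one kind of signal a classifier could be trained on. The study itself may have used the raw coordinates or different derived features.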

In total, the researchers developed and validated 16 different AI algorithms to assess these gait movements. Several of the algorithms were more than 75 percent accurate in predicting a person’s neurological status, and the top-performing algorithm, a convolutional deep-learning model, achieved 79 percent accuracy.

“We were pleasantly surprised with the validation results of using somewhat inexpensive video equipment and open-source image processing software to get the performance we saw,” says Richard Sowers, a professor in the departments of Mathematics and of Industrial and Enterprise Systems Engineering at Urbana-Champaign who was also involved in the study. “Properly developed, this could be a game changer.”

Although commercialization of such an approach is still a few years away, the team says they have made their work available for free online for other researchers to use. In future work, they hope to explore how the inclusion of people with other neurological disorders could improve the accuracy of this approach. They also hope to experiment with the number and positioning of the cameras.

Michelle Hampson is a freelance writer based in Halifax. She frequently contributes to Spectrum's Journal Watch coverage, which highlights newsworthy studies published in IEEE journals.