A Half Century Ago, Better Transistors and Switching Regulators Revolutionized the Design of Computer Power Supplies

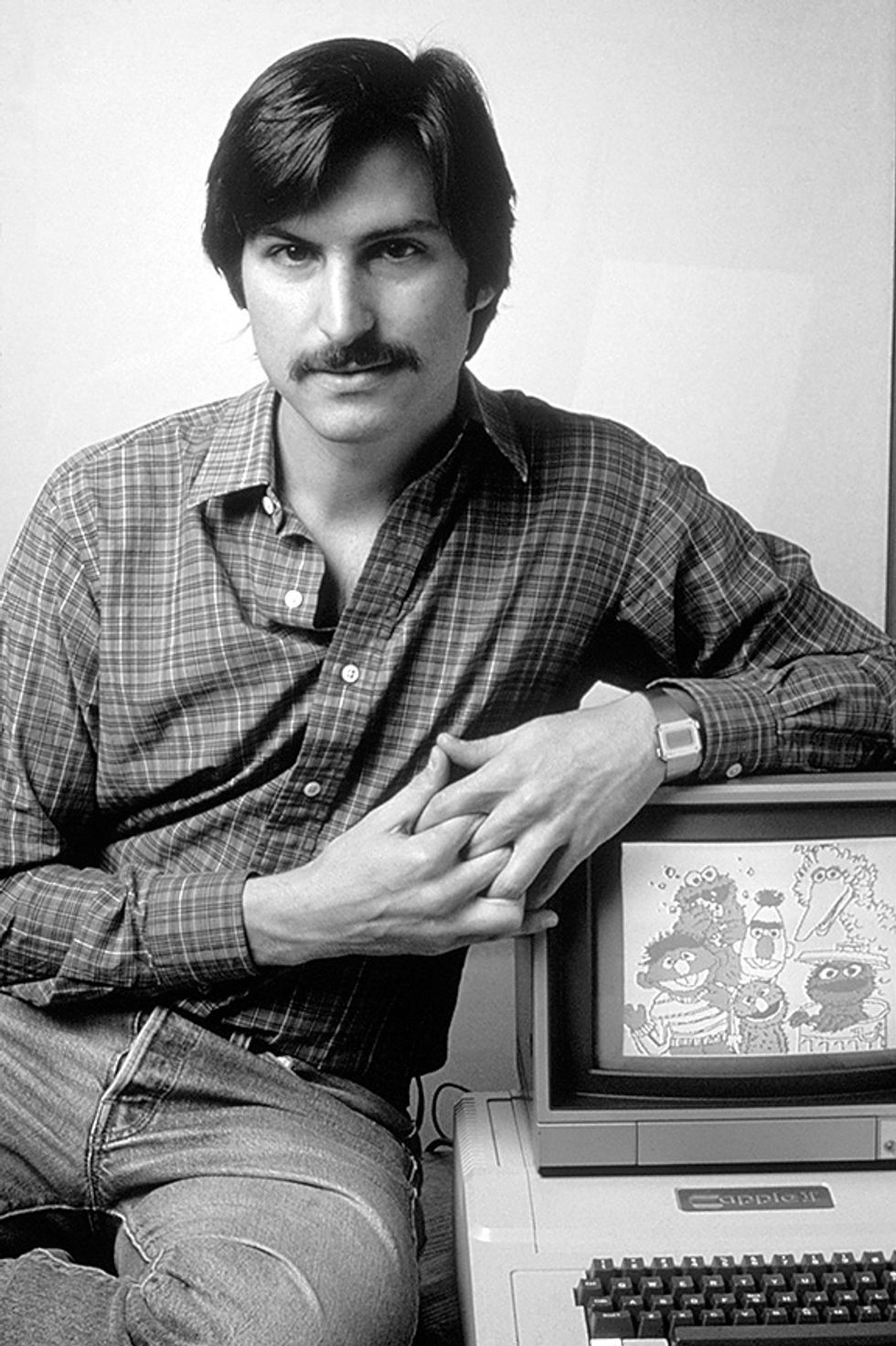

Apple, for one, benefited, though it didn’t spark this revolution, as Steve Jobs claimed

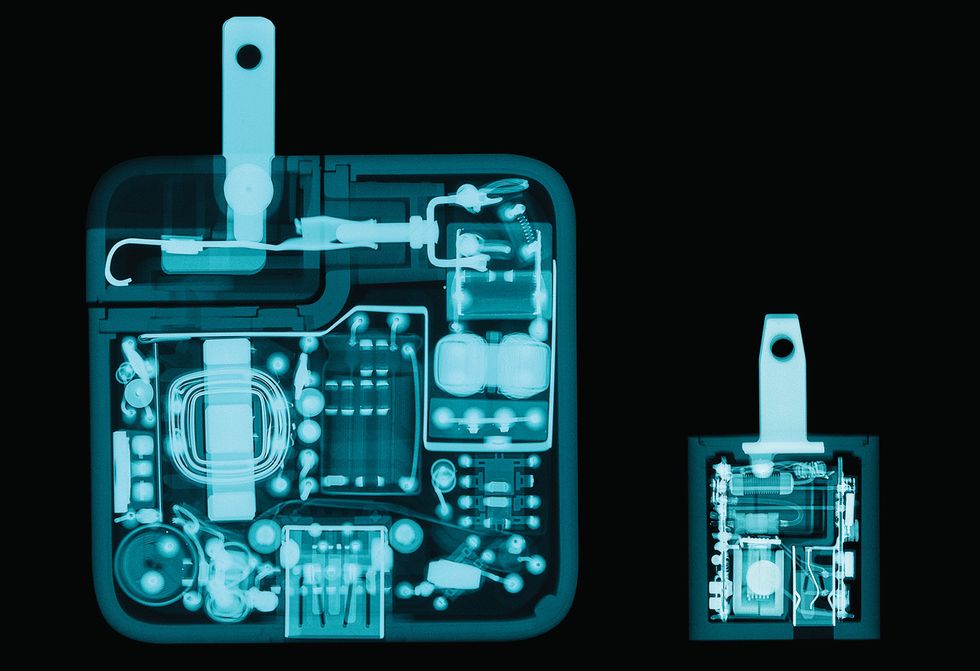

Intel Not Inside: X-rays reveal the component parts of a switching power supply used in the original Apple II microcomputer, released in 1977.

Computer power supplies don't get much respect.

As a tech enthusiast, you probably know what microprocessor is in your computer and how much physical memory it has, but odds are you know nothing about the power supply. Don't feel bad—even for manufacturers, designing the power supply is an afterthought.

That's a shame, because it took considerable effort to create the power supplies found in personal computers, which represent a huge improvement from the circuits that powered other kinds of consumer electronics up until about the late 1970s. This breakthrough resulted from huge strides made in semiconductor technology a half century ago, specifically improvements in switching transistors and innovations in ICs. And yet, it's a revolution that goes completely unrecognized by the general public and even by many people familiar with the history of microcomputers.

Power supplies are not without ardent champions, however, including one that might surprise you: Steve Jobs. According to his authorized biographer, Walter Isaacson, Jobs had strong feelings about the power supply of the pioneering Apple II personal computer and its designer, Rod Holt. Jobs's claim, as reported by Isaacson, goes like this:

Instead of a conventional linear power supply, Holt built one like those used in oscilloscopes. It switched the power on and off not sixty times per second, but thousands of times; this allowed it to store the power for far less time, and thus throw off less heat. “That switching power supply was as revolutionary as the Apple II logic board was," Jobs later said. “Rod doesn't get a lot of credit for this in the history books, but he should. Every computer now uses switching power supplies, and they all rip off Rod Holt's design."

Jobs's claim is a big one, and it didn't sit right with me, so I did some investigating. I discovered that, although switching power supplies were revolutionary, the revolution took place between the late 1960s and the mid-1970s as switching power supplies took over from simple but inefficient linear power supplies. The Apple II, introduced in 1977, benefitted from this revolution but didn't instigate it.

This correction to Jobs's version of events is much more than a bit of engineering trivia. Today, switching power supplies are a ubiquitous mainstay, which we use daily to charge our smartphones, tablets, laptops, cameras, and even some of our cars. They power clocks, radios, home audio amplifiers, and other small appliances. The engineers who actually did foment this revolution deserve to be recognized. And it's a pretty good story, too.

The power supply in a desktop computer like the Apple II converts alternating-current line voltage into direct current, providing highly stable voltages to power the system. Power supplies can be built in a variety of ways, but linear and switching designs are the two most common.

A typical linear power supply uses a bulky transformer to convert the relatively high-voltage AC from the power lines into low-voltage AC, which is then converted to low-voltage DC using diodes, usually four of them wired in the classic bridge configuration. Large electrolytic capacitors are used to smooth the output of the diode bridge. Computer power supplies use a circuit called a linear regulator, which reduces DC voltage to the desired level and keeps it fixed there even as the load varies.

Linear power supplies are almost trivial to design and build. And they use inexpensive low-voltage semiconductors. But they have two major drawbacks. One is the large capacitors and the hefty transformer needed, which could never be packaged into anything as small, light, and convenient as the chargers we all now use with our smartphones and tablets. The other is the linear regulator, a transistor-based circuit, which turns the excess DC voltage—anything above the designated output voltage—into waste heat. So such power supplies typically squander more than half of the power they consume. And they often require large metal heat sinks or fans to get rid of all that heat.
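To put rough numbers on that waste heat, consider an idealized linear regulator, in which the same current flows into and out of the pass transistor, so the efficiency is simply the ratio of output voltage to input voltage. The sketch below is only illustrative: the 12-V unregulated input and the 2-ampere load are assumed values, not figures from any particular supply, and losses in the transformer and diodes are ignored.

```python
def linear_regulator(v_in, v_out, i_load):
    """Idealized series linear regulator.

    The pass transistor drops the excess voltage (v_in - v_out) while
    carrying the full load current, and that product is lost as heat.
    """
    p_out = v_out * i_load                  # power delivered to the load
    p_heat = (v_in - v_out) * i_load        # power burned in the regulator
    efficiency = p_out / (p_out + p_heat)   # algebraically equal to v_out / v_in
    return p_out, p_heat, efficiency


# Assumed example values: 12 V unregulated DC, 5 V output, 2 A load.
p_out, p_heat, eff = linear_regulator(v_in=12.0, v_out=5.0, i_load=2.0)
print(f"delivered {p_out:.1f} W, wasted {p_heat:.1f} W, efficiency {eff:.0%}")
```

With these assumed values, more power goes into the heat sink than into the load, which is the situation described above.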

Warts and All


In the past, small electronic devices typically used bulky wall transformers, disparagingly called “wall warts." Around the turn of the 21st century, technology improvements made compact, low-power switching supplies practical for small devices. As the price of switching AC/DC adapters dropped, they rapidly replaced bulky wall transformers for most household devices.

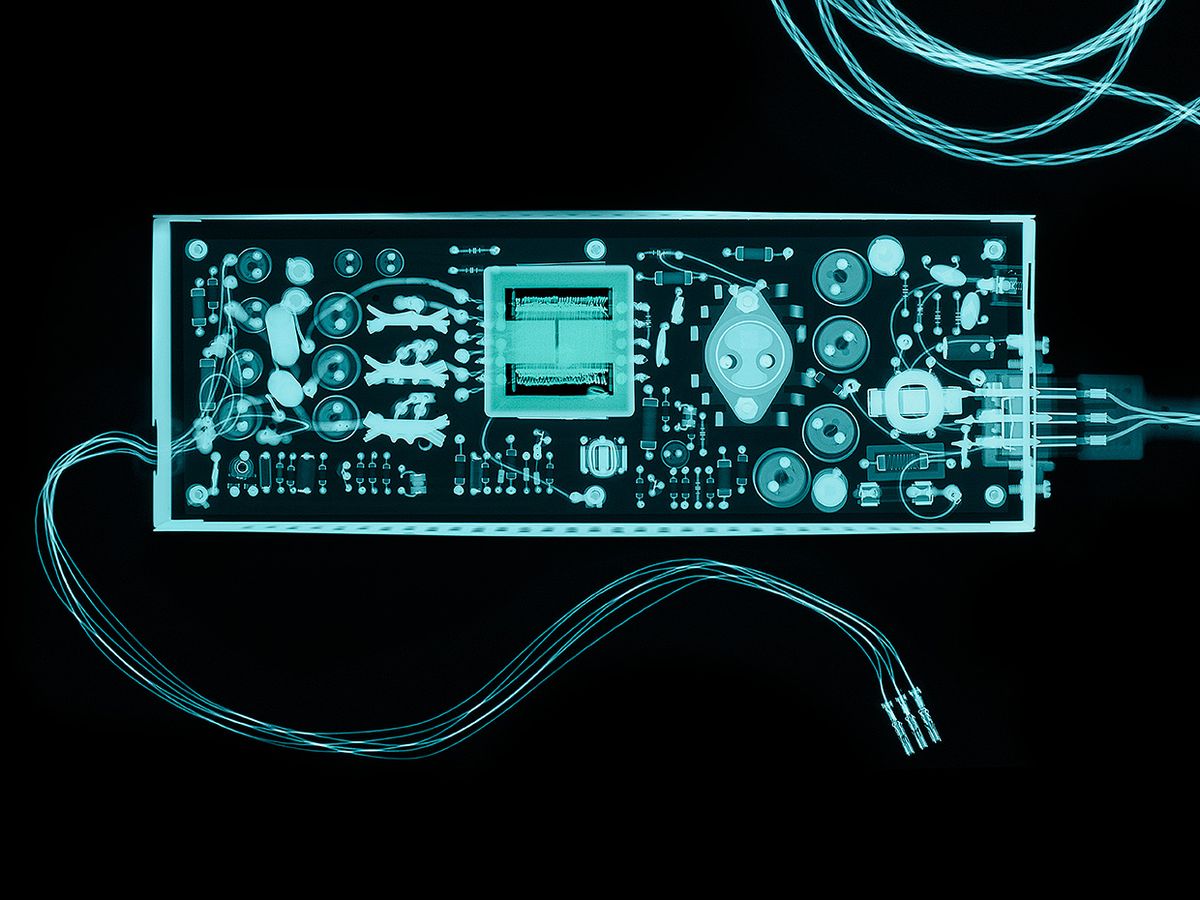

Apple made the charger into a highly designed object, introducing a sleek iPod charger in 2001 with a compact IC-controlled flyback power supply inside [left]. USB chargers soon became ubiquitous, with Apple's ultracompact inch-cube charger (introduced in 2008) becoming iconic [right].

The latest trend in high-end chargers of this type is to use gallium-nitride (GaN) semiconductors, which are able to switch faster than silicon transistors and thus be more efficient. Pushing technology in the other direction, the cheapest USB chargers are now sold for under a dollar, although at the cost of bad power quality and missing safety features. —K.S.

A switching power supply works on a different principle: In a typical switching power supply, the AC line input is converted to high-voltage DC, which is switched on and off tens of thousands of times a second. The high frequencies employed allow the use of much smaller and lighter-weight transformers and smaller capacitors. A special circuit precisely times the switching to control the output voltage. Because they don't need linear regulators, such supplies waste little energy: They're typically 80 to 90 percent efficient and therefore give off much less heat.
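One rough way to see why high frequencies shrink the transformer and capacitors is to ask how much energy has to be handled in each cycle. The sketch below is a first-order estimate only. It assumes a 38-W load (the Apple II figure cited later in this article), a 120-hertz ripple frequency for full-wave-rectified 60-Hz mains, and a 20-kilohertz switching frequency. Real component sizing also depends on ripple limits, core materials, and other factors it leaves out.

```python
def energy_per_cycle(power_w, frequency_hz):
    """Energy in joules delivered to the load during one cycle."""
    return power_w / frequency_hz


load_w = 38.0  # assumed load, matching the Apple II supply rating

# Full-wave rectification of 60 Hz mains refills the capacitors 120 times a second.
linear_j = energy_per_cycle(load_w, 120.0)

# An assumed 20 kHz switching supply moves the same power in far smaller packets.
switching_j = energy_per_cycle(load_w, 20_000.0)

ratio = linear_j / switching_j
print(f"linear: {linear_j * 1000:.0f} mJ per cycle")
print(f"switching: {switching_j * 1000:.2f} mJ per cycle")
print(f"ratio: about {ratio:.0f} to 1")
```

Under these assumptions, each cycle of the switching supply handles well under a hundredth of the energy, so the parts that store it can be correspondingly smaller.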

A switching power supply is, however, considerably more complex than a linear power supply, and thus it is harder to design. In addition, it is much more demanding on the components, requiring high-voltage power transistors that can efficiently switch on and off at high speed.

As a side note, I should mention that some computers have used power supplies that are neither linear nor switching. One crude but effective technique was to run a motor off of line power and use that motor to drive a generator that creates the desired output voltage. Motor-generator units were used for decades, at least as far back as the IBM punch-card machines of the 1930s and continuing through the 1970s for such things as Cray supercomputers.

Another option, popular from the 1950s through the 1980s, was to use ferroresonant transformers, a special type of transformer that provides a constant voltage output. Also, the saturable reactor, a controllable inductor, was used for power-supply regulation for vacuum-tube computers in the 1950s. It reappeared as the “mag amp" in some modern PC power supplies, providing additional regulation. But in the end, these odd approaches largely gave way to switching power supplies.

The principles behind the switching power supply have been known to electrical engineers since the 1930s, but this technique found limited use in the vacuum-tube era. Special mercury-containing tubes called thyratrons were used in some power supplies of the time that could be considered primitive, low-frequency switching regulators. Examples include the REC-30 Teletype power supply from the 1940s and the supply used in the IBM 704 computer from 1954. With the introduction of power transistors in the 1950s, though, switching power supplies rapidly improved. Pioneer Magnetics started building switching power supplies in 1958. And General Electric published an early design for a transistorized switching power supply in 1959.

Through the 1960s, NASA and the aerospace industry provided the main driving force behind the development of switching power supplies, because for aerospace applications, the advantages of small size and high efficiency trumped the high cost. For example, in 1962 the Telstar satellite (the first satellite to transmit television pictures) and the Minuteman missile both used switching power supplies. As the decade wore on, costs came down, and switching supplies were designed into things sold to the public. In 1966, for example, Tektronix used a switching power supply in a portable oscilloscope, allowing it to run off mains current or batteries.

That trend accelerated as power-supply manufacturers started selling switching units to other companies. In 1967, RO Associates introduced the first 20-kilohertz switching power supply product, which it claimed was the first commercially successful example of a switching power supply. Nippon Electronic Memory Industry Co. started developing standardized switching power supplies in Japan in 1970. By 1972, most power-supply manufacturers were selling switching supplies or were about to offer them.

It was about this time that the computer industry started using switching power supplies. Early examples include Digital Equipment's PDP-11/20 minicomputer in 1969 and Hewlett-Packard's 2100A minicomputer in 1971. A 1971 industry publication stated that companies using switching regulators “read like a 'Who's Who' of the computer industry: IBM, Honeywell, Univac, DEC, Burroughs, and RCA, to name a few." In 1974, minicomputers using switching power supplies included Data General's Nova 2/4, Texas Instruments' 960B, and systems from Interdata. In 1975, switching power supplies were used in the HP2640A display terminal, IBM's typewriter-like Selectric Composer, and the IBM 5100 portable computer. By 1976, Data General was using switching supplies in half of its systems, and HP was using them for smaller systems such as the 9825A Desktop Computer and the 9815A Calculator. Switching power supplies were also showing up in the home, powering some color television sets by 1973.

Switching power supplies were widely featured in electronics magazines of this era, both in advertisements and articles. As far back as 1964, Electronic Design recommended switching power supplies for better efficiency. The October 1971 cover of Electronics World featured a 500-watt switching power supply and an article titled “The Switching Regulator Power Supply." Computer Design in 1972 discussed switching power supplies in detail and the increasing prevalence of such supplies in computers, although it mentioned that some companies were still skeptical. In 1976, a cover of Electronic Design announced, “Suddenly it's easier to switch," describing the new switching power-supply controller ICs. Electronics ran a long article on the subject; Powertec ran two-page ads on the advantages of its switching power supplies with the catchphrase, “The big switch is to switchers"; and Byte announced switching power supplies for microcomputers from a company called Boschert.

Robert Boschert, who quit his job and started building power supplies on his kitchen table in 1970, was a key developer of this technology. He focused on simplifying these designs to make them cost competitive with linear power supplies, and by 1974 he was producing in volume a low-cost power supply for printers, which was followed by a low-cost 80-W switching power supply in 1976. By 1977, Boschert Inc. had grown to a 650-person company. It made power supplies for satellites and the Grumman F-14 fighter aircraft, later producing computer power supplies for companies such as HP and Sun.

The introduction of high-voltage, high-speed transistors at low cost in the late 1960s and early 1970s, by companies such as Solid State Products Inc. (SSPI), Siemens Edison Swan (SES), and Motorola, among others, helped push switching power supplies into the mainstream. Faster transistor switching speeds boost efficiency because a transistor dissipates heat mostly while it is switching between its on and off states, so the faster the device makes that transition, the less energy it wastes.
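The link between transition time and wasted power can be estimated with a common textbook approximation for hard switching: during each transition the transistor briefly carries both voltage and current, dissipating roughly half their product for the duration of the transition. The sketch below applies that approximation. All of the values in it (a 170-V bus, 1 A of current, 20-kHz switching, and the two transition times) are assumptions chosen for illustration, not measurements of any period device.

```python
def switching_loss_w(v_bus, i_load, t_transition_s, f_switch_hz):
    """Approximate hard-switching loss in watts.

    Each transition dissipates roughly 0.5 * V * I * t, and there are two
    transitions (turn-on and turn-off) in every switching cycle.
    """
    energy_per_transition = 0.5 * v_bus * i_load * t_transition_s
    return 2 * energy_per_transition * f_switch_hz


# Assumed operating point: 170 V bus, 1 A, 20 kHz switching.
slow = switching_loss_w(v_bus=170.0, i_load=1.0, t_transition_s=1e-6, f_switch_hz=20_000)
fast = switching_loss_w(v_bus=170.0, i_load=1.0, t_transition_s=0.1e-6, f_switch_hz=20_000)
print(f"slow transistor: {slow:.2f} W, fast transistor: {fast:.2f} W")
```

In this simplified model the loss scales directly with transition time, so a transistor that switches ten times as fast wastes a tenth as much power in switching.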

Transistor speeds were increasing by leaps and bounds at the time. Indeed, transistor technology was moving so fast that the editors of Electronics World claimed in 1971 that the 500-W power supply featured on its cover couldn't have been built with the transistors available just 18 months earlier.

Another notable advance came in 1976, when Robert Mammano, a cofounder of Silicon General Semiconductors, introduced the first IC to control a switching power supply, designed for an electronic Teletype machine. His SG1524 controller IC drastically simplified the design of these supplies and lowered costs, triggering a surge in sales.

By 1974, give or take a year or two, it was clear to anyone with even a smattering of knowledge of the electronics industry that a real revolution in power-supply design was taking place.

The Apple II personal computer was introduced in 1977. One of its features was a compact, fanless switching power supply, which provided 38 W of power at 5, 12, –5, and –12 volts. It used Holt's simple design, a kind of switching power supply known as an off-line flyback converter. Jobs claimed that every computer now rips off Holt's revolutionary design. But was this design truly revolutionary in 1977? And was it copied by every other computer maker?

No, and no. Similar off-line flyback converters were being sold at the time by Boschert and other companies. Holt obtained a patent on a couple of specific features of his supply, but those features never became widely used. And building the control circuitry out of discrete components, as was done for the Apple II, proved a technological dead end. The future of switching power supplies belonged to special-purpose controller ICs.

If there's one microcomputer that did have a lasting impact on power-supply designs, it was the IBM Personal Computer, launched in 1981. By then, just four years after the Apple II, power-supply technology had greatly changed. While both of these early personal computers used off-line flyback power supplies with multiple outputs, that's about all they had in common. Their drive, control, feedback, and regulation circuits were all different. Even though the IBM PC power supply used an IC controller, it contained approximately twice as many components as the Apple II power supply. These extra components provided additional regulation on the outputs and a “power good" signal when all four voltages were correct.

In 1984, IBM released a significantly upgraded version of its personal computer, called the IBM Personal Computer AT. Its power supply used a variety of new circuit designs, entirely abandoning the earlier flyback topology. It swiftly became the de facto standard and remained so until 1995, when Intel introduced the ATX form-factor specification, which among other things defined the ATX power supply, still standard today.

Despite the advent of the ATX standard, computer power systems became more complicated in 1995 with the introduction of the Pentium Pro, a microprocessor that required lower voltage at higher current than an ATX power supply could provide directly. To supply this power, Intel introduced the voltage regulator module (VRM)—a DC-to-DC switching regulator installed next to the processor. It reduced the 5 V from the power supply to the 3 V used by the processor. The graphics cards found in many computers also contain VRMs to power the high-performance graphics chips they contain.
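For a sense of what such a regulator does, the sketch below treats the VRM as an ideal buck (step-down) converter, a common topology for this job, although the text above says only that it is a DC-to-DC switching regulator. In an ideal buck converter the output voltage equals the input voltage multiplied by the duty cycle, the fraction of each cycle that the switch is on. The 10-A load is an assumed figure for illustration, and all losses are ignored.

```python
def ideal_buck(v_in, v_out, i_out):
    """Duty cycle and average input current of a lossless buck converter."""
    if not 0 < v_out <= v_in:
        raise ValueError("a buck converter can only step the voltage down")
    duty = v_out / v_in    # fraction of each cycle the switch conducts
    i_in = i_out * duty    # power in equals power out when nothing is lost
    return duty, i_in


# 5 V in and 3 V out as described above; the 10 A load is an assumption.
duty, i_in = ideal_buck(v_in=5.0, v_out=3.0, i_out=10.0)
print(f"duty cycle {duty:.0%}, input current {i_in:.1f} A")
```

The converter trades voltage for current: under these assumptions it draws less current from the 5-V rail than it delivers to the processor, which is how the VRM provides lower voltage at higher current.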

A fast processor these days might require as much as 130 W from a VRM—vastly more than the mere half a watt of power used by the Apple II's 6502 processor. Indeed, a modern processor chip alone can use more than three times the power consumed by the entire Apple II computer.

The growing power consumption of computers has become a cause for environmental concern, resulting in initiatives and regulations to make power supplies more efficient. In the United States, the government's Energy Star and the industry-led 80 Plus certifications pushed manufacturers to produce more “green" power supplies. They've been able to do so using a variety of techniques: more efficient standby power, more efficient startup circuits, resonant circuits that reduce power losses in the switching transistors, and “active clamp" circuits that replace switching diodes with more efficient transistorized circuits. Improvements in power MOSFET transistor and high-voltage silicon rectifier technology in the past decade have also led to efficiency improvements.

The technology of switching power supplies continues to advance in other ways, too. Today, instead of using analog circuits, many power supplies use digital chips and software algorithms to control their outputs. Designing a power-supply controller has become as much a matter of programming as of hardware design. Digital power management lets power supplies communicate with the rest of the system for higher efficiency and logging. While these digital technologies are largely reserved for servers now, they are starting to influence the design of desktop computers.
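As a toy illustration of regulation done in software, the sketch below runs an integral-only control loop: on each step it measures the output, compares it with the target, and nudges the duty cycle in proportion to the error. Everything here is an assumption made for illustration, including the function name, the gain, and the converter model, which is an idealized buck stage with no dynamics. Production digital controllers use far more careful models and compensators.

```python
def regulate(v_in_samples, v_target, gain=0.02, duty=0.0):
    """Adjust the duty cycle once per input sample to hold v_target.

    Hypothetical converter model: v_out = duty * v_in, with no filter
    dynamics and no losses.
    """
    v_out = 0.0
    for v_in in v_in_samples:
        v_out = duty * v_in                               # "measure" the output
        error = v_target - v_out
        duty = min(1.0, max(0.0, duty + gain * error))    # integrate and clamp
    return duty, v_out


# Assumed scenario: the input sags from 12 V to 10 V partway through.
samples = [12.0] * 100 + [10.0] * 100
final_duty, final_v_out = regulate(samples, v_target=5.0)
print(f"final duty cycle {final_duty:.3f}, output {final_v_out:.3f} V")
```

When the assumed input sags, the loop raises the duty cycle until the output returns to the target, the same job an analog feedback circuit performs, but expressed as a few lines of code.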

It's hard to square this history with Jobs's assertion that Holt should be better known or that “Rod doesn't get a lot of credit for this in the history books, but he should." Even the very best power-supply designers don't become known outside of a tiny community. In 2009, the editors of Electronic Design welcomed Boschert into their Engineering Hall of Fame. Robert Mammano received a lifetime achievement award in 2005 from the editors of Power Electronics Technology. Rudy Severns received another such lifetime achievement award in 2008 for his innovations in switching power supplies. But none of these luminaries in power-supply design are even Wikipedia-famous.

Jobs's much-repeated assertion that Holt has been overlooked led to Holt's work being described in dozens of popular articles and books about Apple, from Paul Ciotti's “Revenge of the Nerds," which appeared in California magazine in 1982 to Isaacson's best-selling biography of Jobs in 2011. So ironically enough, even though his work on the Apple II was by no means revolutionary, Rod Holt has probably become the most famous power-supply designer ever.

This article appears in the August 2019 print issue as “The Quiet Remaking of Computer Power Supplies."