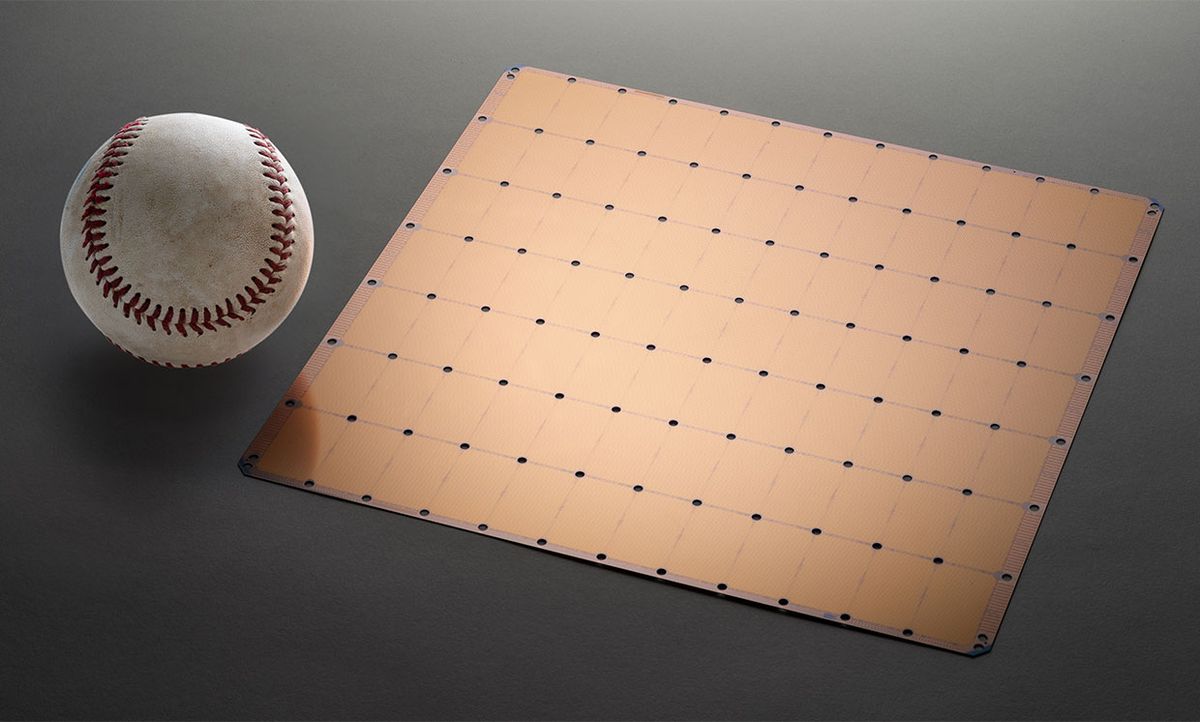

On Monday at the IEEE Hot Chips symposium at Stanford University, startup Cerebras unveiled the largest chip ever built. It is a system roughly the size of a silicon wafer, meant to reduce AI training time from months to minutes. It is the first commercial attempt at a wafer-scale processor since Trilogy Systems failed at the task in the 1980s.

1 | The stats

As the largest chip ever built, Cerebras’s Wafer Scale Engine (WSE) naturally comes with a bunch of superlatives. Here they are with a bit of context where possible:

Size: 46,225 square millimeters. That’s about 75 percent of a sheet of letter-size paper, but 56 times as large as the biggest GPU.

Transistors: 1.2 trillion. Nvidia’s GV100 Volta packs in 21 billion.

Processor cores: 400,000. Not to pick on the GV100 too much, but it has over 5,000 CUDA cores and more than 600 tensor cores, both of which are used in AI workloads. (Fifty-six GV100s would then have more than 300,000 cores.)

Memory: 18 gigabytes of on-chip SRAM. Cerebras says this is 3,000 times as much as the GPU. The Volta has 6 MB of SRAM in its L2 cache according to this whitepaper [page 10]. But SRAM may not be a fair comparison as each GV100 works with 32 GB of high-bandwidth DRAM.

Memory bandwidth: 9 petabytes per second. According to Cerebras, that’s 10,000 times our favorite GPU. Cerebras is here comparing against the 900 GB/s bandwidth between the Volta and its high-bandwidth DRAM rather than the bandwidth of its on-chip SRAM [page 21].
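For readers who want to check the arithmetic, here is a quick sketch in Python. It uses only the figures quoted above, taking the lower bounds for the GV100’s core counts (“over 5,000” and “more than 600”). The variable names and the letter-paper dimensions (8.5 by 11 inches) are our additions. The GPU’s die area is not given here, so the script backs it out from the 56-times claim rather than assuming a value.

```python
# Figures quoted in the article; variable names are illustrative.
WSE_AREA_MM2 = 46_225
WSE_SRAM_BYTES = 18e9            # 18 gigabytes of on-chip SRAM
WSE_BANDWIDTH = 9e15             # 9 petabytes per second
GV100_L2_BYTES = 6e6             # 6 MB of SRAM in the L2 cache
GV100_DRAM_BANDWIDTH = 900e9     # 900 GB/s to high-bandwidth DRAM
GV100_MIN_CORES = 5_000 + 600    # lower bounds: CUDA cores + tensor cores

# Assumption (not from the article): US letter paper is 8.5 in x 11 in.
LETTER_AREA_MM2 = (8.5 * 25.4) * (11 * 25.4)

paper_fraction = WSE_AREA_MM2 / LETTER_AREA_MM2
implied_gpu_area_mm2 = WSE_AREA_MM2 / 56   # GPU die area implied by "56 times"
sram_ratio = WSE_SRAM_BYTES / GV100_L2_BYTES
bandwidth_ratio = WSE_BANDWIDTH / GV100_DRAM_BANDWIDTH
cores_in_56_gpus = 56 * GV100_MIN_CORES

print(f"Share of a letter-size sheet: {paper_fraction:.0%}")
print(f"Implied GPU die area: {implied_gpu_area_mm2:.0f} mm^2")
print(f"SRAM ratio: {sram_ratio:,.0f}x")
print(f"Bandwidth ratio: {bandwidth_ratio:,.0f}x")
print(f"Cores in 56 GPUs (lower bound): {cores_in_56_gpus:,}")
```

The ratios come out to 3,000 and 10,000, matching Cerebras’s claims, and 56 GPUs clear 300,000 cores even at the lower bounds.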

2 | Why do you need this monster?

Cerebras makes a pretty good case in its white paper [PDF] for why such a ridiculously large chip makes sense. Basically, the company argues that the demand for training deep learning systems and other AI systems is getting out of hand. The company says that training a new model—creating a system that, once trained, can recognize people or win a game of Go—is taking weeks or months and costing hundreds of thousands of dollars of compute time. That cost means there’s little room for experimentation, and that’s stifling new ideas and innovation.

The startup’s answer is that the world needs more, and cheaper, training compute resources. Training needs to take minutes not months, and to do that you need more cores, more memory close to those cores, and a low-latency, high-bandwidth connection between the cores.

Those are goals that clearly apply to everyone in the AI space. But, by its own admission, Cerebras took the idea to its logical extreme. A big chip offers more silicon area for processor cores and the memory that needs to snuggle up next to them. And a high-bandwidth, low-latency connection is only achievable if data never has to leave the short, dense interconnects on a chip. Thus one big chip.

3 | What’s in those 400,000 cores?

According to the company, the WSE’s cores are specialized to do AI, but still programmable enough that they’re not locked into only one flavor of it. They call them Sparse Linear Algebra (SLA) cores. These processing units are specialized for the “tensor” operations key to AI work, but they also include a feature that reduces the work, particularly for deep-learning networks. According to the company, 50 to 98 percent of all the data in a deep learning training set are zeros. The nonzero data are therefore “sparse.”

The SLA cores cut down on the work by simply not multiplying anything by zero. The cores have built-in data-flow elements that trigger computing actions based on the data, so when a core encounters a zero in the data, it doesn’t waste its time.
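The payoff of skipping zeros is easy to see in software. The sketch below is only an illustration of the idea in Python, not Cerebras’s hardware design or programming interface; the function name `sparse_dot` and the sample values are hypothetical. It computes a dot product, the basic step in tensor operations, and counts how many multiplications actually happen.

```python
def sparse_dot(weights, activations):
    """Dot product that skips any term containing a zero.

    Returns the result and the number of multiplications performed.
    Illustrative only: real dataflow hardware makes this decision per
    data element in silicon rather than in a software loop.
    """
    total = 0.0
    multiplies = 0
    for w, a in zip(weights, activations):
        if w == 0 or a == 0:
            continue  # the product would be zero, so do no work
        total += w * a
        multiplies += 1
    return total, multiplies


# Hypothetical data in which half of the terms contain a zero.
weights = [0.5, 0.0, -1.0, 2.0]
activations = [4.0, 3.0, 0.0, 0.25]

result, work = sparse_dot(weights, activations)
print(f"result = {result}, multiplications = {work} of {len(weights)}")
```

With this sample data, only two of the four possible multiplications are carried out, and the answer is the same as a dense dot product would give.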

4 | How did they do this?

The fundamental idea behind Cerebras’s massive single chip has been obvious for decades, but it has also been impractical. To quote myself:

Back in the 1980s, parallel computing pioneer Gene Amdahl hatched a plan to speed mainframe computing: a silicon-wafer-sized processor. By keeping most of the data on the processor itself instead of pushing it through a circuit board to memory and other chips, computing would be faster and more energy efficient.

With US $230 million from venture capitalists, the most ever at the time, Amdahl founded Trilogy Systems to make his vision a reality. This first commercial attempt at “wafer-scale integration” was such a disaster that it reportedly introduced the verb “to crater” into the financial press lexicon.

The most basic problem is that the bigger the chip, the worse the yield; that’s the fraction of working chips you get from each wafer. Logically, this should mean a wafer-scale chip would be unprofitable, because there would always be flaws in your product. Cerebras’s solution is to add a certain amount of redundancy. According to EE Times, the Swarm communications networks have redundant links to route around damaged cores, and about 1 percent of the cores are spares.
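To see why yield is such a problem, and why a modest pool of spares can help, consider a rough sketch using the textbook Poisson yield model, in which the chance of a defect-free die falls off exponentially with its area. Everything beyond the chip’s area, its core count, the 56-times size ratio, and the 1 percent spares figure is an assumption: the defect density is a made-up illustrative value, not a foundry number, and treating each defect as disabling exactly one core is a simplification.

```python
import math


def poisson_yield(area_cm2, defects_per_cm2):
    """Probability that a die of the given area has zero defects (Poisson model)."""
    return math.exp(-area_cm2 * defects_per_cm2)


# Hypothetical defect density, chosen only for illustration.
DEFECT_DENSITY_PER_CM2 = 0.1

WSE_AREA_CM2 = 462.25              # 46,225 square millimeters
GPU_AREA_CM2 = WSE_AREA_CM2 / 56   # GPU-size die, from the "56 times" figure
TOTAL_CORES = 400_000
SPARE_CORES = TOTAL_CORES // 100   # about 1 percent of cores are spares

gpu_yield = poisson_yield(GPU_AREA_CM2, DEFECT_DENSITY_PER_CM2)
wse_yield = poisson_yield(WSE_AREA_CM2, DEFECT_DENSITY_PER_CM2)

# Simplifying assumption: each defect knocks out exactly one core.
expected_bad_cores = WSE_AREA_CM2 * DEFECT_DENSITY_PER_CM2

print(f"Defect-free GPU-size die:   {gpu_yield:.1%}")
print(f"Defect-free wafer-size die: {wse_yield:.1e}")
print(f"Expected bad cores: {expected_bad_cores:.0f}, spares: {SPARE_CORES:,}")
```

Under these assumptions, a flawless wafer-size die is essentially impossible, but the expected number of damaged cores is tiny compared with the number of spares. That is the logic behind designing for defects instead of hoping to avoid them.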

Cerebras also had to work around some key manufacturing limits. For one, chip tools are designed to cast their feature-defining patterns onto relatively small rectangles and do that over and over, perfectly across the wafer. That alone would keep a lot of systems from being built on a single wafer, because of the cost and difficulty of casting different patterns in different places on the wafer.

But the WSE resembles a typical wafer full of the exact same chips, just as you’d ordinarily manufacture. The big difference was a method they worked out with TSMC to make connections across the space between the chips, an area called the scribe lines. This space is typically left blank because the chips are diced up along those lines.

According to TechCrunch, Cerebras also had to invent a way to supply the chip with 15 kilowatts of power and cool the system, as well as create new kinds of connectors that could deal with the way the chip expands when it heats up.

5 | Is this the only way to make a wafer-scale computer?

Of course not. For example, a team at University of California, Los Angeles, and University of Illinois Urbana-Champaign is working on a similar system that would eventually take bare processor dies that have already been built and tested and mount them on a silicon wafer that’s already patterned with the needed dense network of interconnects. This concept, called a silicon interconnect fabric, allows these dielets to sit as close as 100 micrometers from each other, allowing for interchip communication that nears the characteristics of a single chip.

“This is a huge validation of the research we’ve been doing,” says the University of Illinois’s Rakesh Kumar. “We like the fact that there is commercial interest in something like this.”

Kumar believes that the silicon interconnect fabric approach has some advantages over Cerebras’s monolithic wafer-scale scheme. For one, it allows a designer to mix and match technologies, and use the best manufacturing process for each. A monolithic approach means picking the best process for the most crucial subsystem—logic, for example—and using it for memory and other components even if it’s not ideal for them.

In that approach, Cerebras could be limited in the amount of memory it can put on the processor, Kumar suggests. “They have 18 gigabytes of SRAM on the wafer. Maybe that’s enough for some models today, but what about models tomorrow and the day after?”

6 | When does it come out?

According to Fortune, the first systems ship to customers in September, and some have already received prototypes. According to EE Times, the company plans to reveal results from complete systems at the Supercomputing Conference in November.

This post was corrected on 22 August 2019 and again on 2 September to give the correct number of transistors and cores in the Nvidia chip, as well as to add better context to these and other numbers. IEEE Spectrum thanks Peter Glaskowsky for keeping us honest.

Samuel K. Moore is the senior editor at IEEE Spectrum in charge of semiconductors coverage. An IEEE member, he has a bachelor's degree in biomedical engineering from Brown University and a master's degree in journalism from New York University.