Inexpensive, Durable Plastic Hands Let Robots Get a Grip

This rubber-jointed hand can pick up a telephone and use a drill

Video: Ian Chant and Celia Gorman; Footage: iRobot

The human hand is one of nature’s marvels—and a stupendous challenge to engineers who would replicate it. It’s an intricate assemblage with 29 flexible joints and thousands of specialized nerve endings, overseen by a control system so sensitive that it can instantly indicate how hot an object is, how smooth its surface is, and even how firmly it should be grasped.

No wonder, then, that creating robot hands with even a fraction of human capabilities has proved an elusive goal. But increasingly, researchers are concluding that copying nature is not the right approach in this case. The better idea is to decide which of the hand’s critical functions are to be emulated and how this can best be accomplished with the technologies now available.

Industrial robots have, of course, been manipulating objects for decades. But these generally employ simple parallel-jaw grippers that open and close on command to grasp, hold, or move a single type of object that they’ve been specifically programmed to handle. That inflexibility isn’t a problem on the assembly line, but it won’t suffice for future robots designed to interact with people in a much less structured environment.

Like many robotics researchers, we envision a new generation of robots roaming around residences, nursing homes, factories, and the like. These machines will be called on to brew coffee, deliver medications, and shuttle components around a shop floor. These functions will in turn demand many smaller capabilities. For example, opening a jar will require a robot to identify the size and shape of the object, grasp it effectively on the first try, and then apply enough pressure and torque to open it, but not enough pressure to break it. To meet those needs, robot hands will need the flexibility to adapt to a huge variety of situations on the fly, as well as a gentler touch.

The quest for a versatile robot hand has produced designs that precisely mimic the human hand and others that look more like metal clamps. Two of us—Dollar at Yale and Howe at Harvard—have been working for almost a decade on a compromise between these two methods: hands that have some of the dexterity of human appendages but without their great complexity. The hands we’ve developed don’t look human, but they have proved adept at gripping and manipulating a wide variety of objects in many different settings and tasks.

We got a chance to find out just how adept not long ago, in a competition sponsored by the Defense Advanced Research Projects Agency (DARPA): the Autonomous Robotic Manipulation program. Inspired by the success of the agency’s Grand Challenge, which helped to spur innovation in the field of self-driving cars, DARPA asked teams to develop multifingered robotic hands that could complete a variety of tasks, like picking up a telephone handset or operating a power drill. After years of work, it was a chance for us, along with our colleagues at iRobot, to see how our design approach stacked up against those of other researchers.

Since the 1980s, researchers have been able to produce robotic hands with three or four fingers and an opposable thumb, replicating the structure of the human hand. These hands had a futuristic, sci-fi look, and they attracted lots of attention, but most of them weren’t very effective. Re-creating the many joints of the human hand increased the complexity and cost of anthropomorphic hands. It also introduced more chances for something to go wrong. Some examples had more than 30 motors, each of which powered a single joint and each of which could potentially fail. And having tactile sensors with limited sensitivity on every finger made it harder to coordinate a response between all the points of contact.

Underactuated hands are an alternative approach to robotic manipulation. They’re called underactuated because they have fewer motors than joints. They use springs or mechanical linkages to connect rigid parts—such as the sections of a finger—and couple their motions. Careful design of these connections can allow the hand to automatically adapt to object shapes. This means the fingers can, for example, wrap themselves around an object without the need for active sensing and control.

We were pleased with our previous underactuated hand designs, but we knew we had plenty of work to do before our design could meet DARPA’s specifications. And we had just 18 months to do it. The newly formed team took a fresh look at underactuated hand design with the specific challenges posed by DARPA in mind. How would we lift a thin object like a key off a tabletop? What would we need to do to turn on a flashlight? We reconsidered such fundamental aspects as the number of fingers, their placement around the base of the hand, and the grip the fingertips could provide.

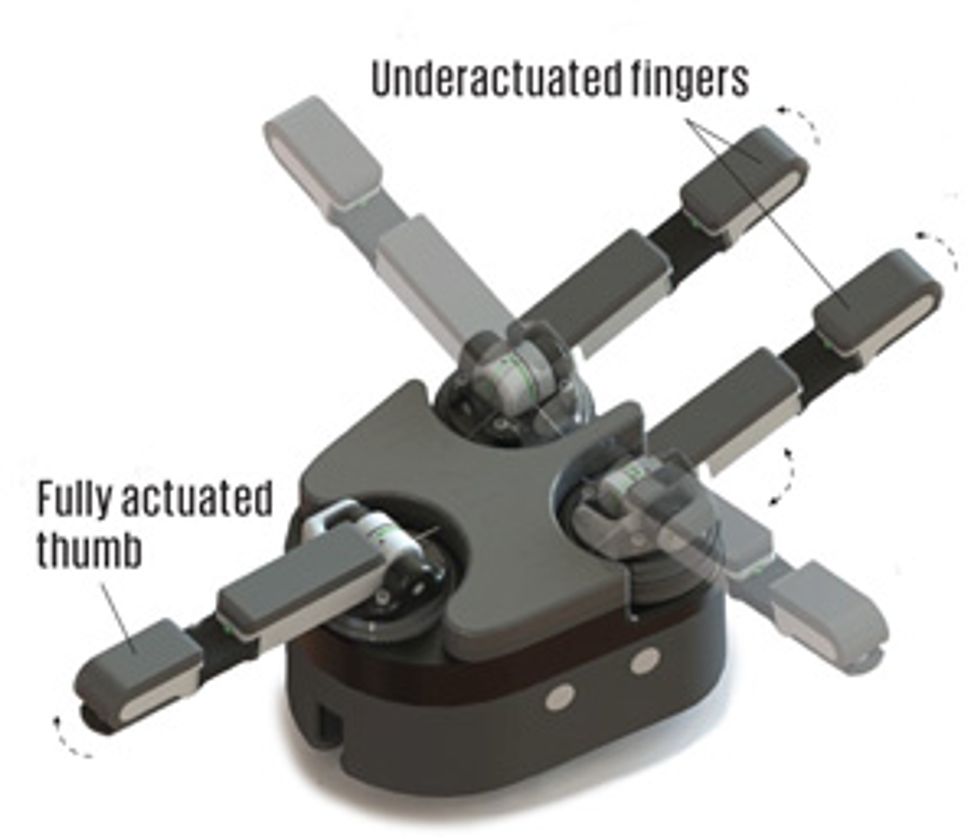

We settled on a design that used two fingers and an opposable thumb. Those three digits were driven by a set of five motors, so the overall number of moving parts in the hand was relatively low. We dubbed our entry the iHY (pronounced “eye-high”) hand, representing the three organizations involved in developing it: iRobot, based in Bedford, Mass., which oversaw the project as a whole, and Harvard and Yale universities, whose students and professors brought additional years of expertise in underactuated hand design.

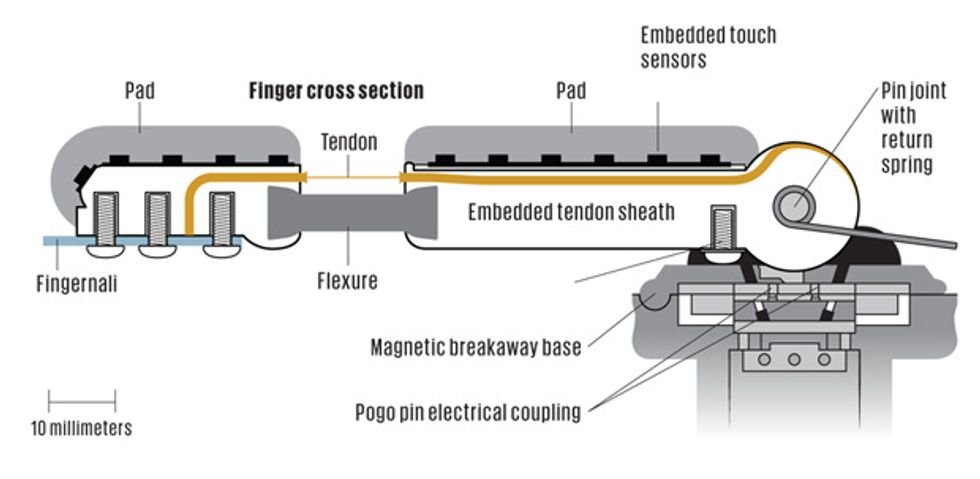

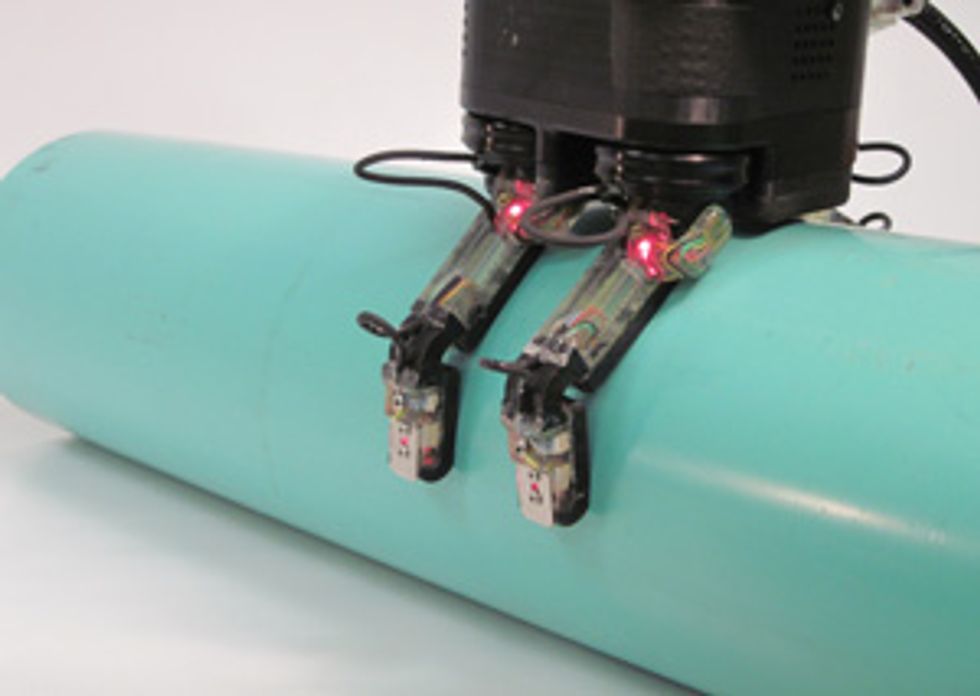

Each digit of the iHY hand consisted of two links—a proximal link that connected the finger to the base of the hand and a distal link that extended to the fingertip. Those links were connected by a heavy-duty elastic joint that made the finger unit flexible, letting it bend on contact to match the shape of an object and form a grip around it, a technique known as passive adaptation.

To give that grip power, we used cable “tendons” that ran from the tip of each finger to a motor in the base of the hand. When that motor pulled the tendons tight, the fingers went from being wrapped around an object to clutching it firmly. And because the initial grip was passive, nothing had to run in reverse to loosen it—letting the tendons go slack released our hand’s grasp as its rubbery joints moved back into place on their own.
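The interplay of one tendon, two elastic joints, and an object in the way can be illustrated with a toy quasi-static model. This is only a sketch under stated assumptions, not the iHY hand's actual mechanics: it assumes a frictionless tendon that applies the same tension at both joints, linear joint springs, and made-up stiffness and pulley values. The names `Joint` and `joint_angles` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Joint:
    stiffness: float      # N*m/rad, spring torque per radian (assumed value)
    pulley_radius: float  # m, tendon moment arm at this joint (assumed value)
    limit: float          # rad, mechanical end stop (assumed value)


def joint_angles(tension, joints, contact_angles=None):
    """Return the flexion angle of each joint for a given tendon tension.

    contact_angles maps a joint index to the angle at which its link
    touches the object and can bend no further.
    """
    contact_angles = contact_angles or {}
    angles = []
    for index, joint in enumerate(joints):
        # Free motion: tendon torque (radius * tension) balances the spring.
        free_angle = joint.pulley_radius * tension / joint.stiffness
        # A link blocked by the object stops; the others keep closing.
        stop = min(joint.limit, contact_angles.get(index, joint.limit))
        angles.append(min(free_angle, stop))
    return angles


# Hypothetical finger: stiffer proximal joint, softer distal joint.
finger = [Joint(0.5, 0.01, 1.5), Joint(0.2, 0.01, 1.5)]

free = joint_angles(20.0, finger)                # nothing in the way
wrapped = joint_angles(20.0, finger, {0: 0.25})  # proximal link hits object
released = joint_angles(0.0, finger)             # slack tendon
```

In the `wrapped` case the proximal joint stops where it meets the object while the distal joint keeps curling, which is the adaptive behavior described above. With zero tension every angle returns to zero, mirroring how the springy joints open the hand without a motor running in reverse.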

Because passive adaptation let the fingers of the iHY hand conform to the shape of the object it was grasping, we didn’t need to control how they bent in the middle. One of the hand’s three digits, though, not only had to grip objects but also manipulate them—to push the button on a flashlight, for example. To accomplish that, we needed to be able to control that digit at both joints.

We decided to give the thumb two independently controllable joints. We did that by connecting both the upper and lower parts of the thumb to individual tendons, each driven by a separate motor. That way, we could manipulate the bottom part of the finger to place it above a button and then control the fingertip to make it push the button. That was a level of control not available in the two other fingers, where a single tendon was connected to a single motor, allowing the digit to apply pressure and form a tight grip around objects.

While four motors drove the three digits, a final motor allowed the fingers to move quickly between two configurations for different kinds of grasping motions. For a powerful “wrap grasp,” the two fingers were set in parallel on one side of the hand with the thumb opposite them, interlacing to close the grip. This grasp, in effect, arranged all three digits into a cage around target objects before pulling tight around them. We could also perform “power grasps” by using all three fingers in a triangular formation to grip an object. This configuration enabled the iHY hand to grip large objects, like a basketball, firmly in its palm. Facing one another on either side of the hand, the two fingers closed in a “pinch grasp,” which could pick up small items.
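The motor budget and the grasp arrangements named above can be written down as a small data structure. This is a bookkeeping sketch, not the hand's control software: the identifiers are hypothetical, and the count of two flexion joints per digit is an assumption inferred from the two-link description rather than a figure given in the text.

```python
from enum import Enum


class Grasp(Enum):
    WRAP = "wrap"
    POWER = "power"
    PINCH = "pinch"


# Tendon motors per digit as described: one per finger, two for the thumb.
TENDON_MOTORS = {"finger_1": 1, "finger_2": 1, "thumb": 2}
# One extra motor repositions the fingers between grasp arrangements.
CONFIG_MOTORS = 1
# Assumption: each two-link digit has two flexion joints.
ASSUMED_JOINTS_PER_DIGIT = 2

# Digits that close on the object in each grasp, per the description.
DIGITS_USED = {
    Grasp.WRAP: ("finger_1", "finger_2", "thumb"),
    Grasp.POWER: ("finger_1", "finger_2", "thumb"),
    Grasp.PINCH: ("finger_1", "finger_2"),
}


def total_motors():
    """All motors in the hand: tendon drives plus the configuration motor."""
    return sum(TENDON_MOTORS.values()) + CONFIG_MOTORS


def is_underactuated():
    """True if tendon motors are fewer than the assumed flexion joints."""
    flexion_joints = ASSUMED_JOINTS_PER_DIGIT * len(TENDON_MOTORS)
    return sum(TENDON_MOTORS.values()) < flexion_joints
```

Under these assumptions, four tendon motors drive six flexion joints, and the total comes to the five motors mentioned earlier.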

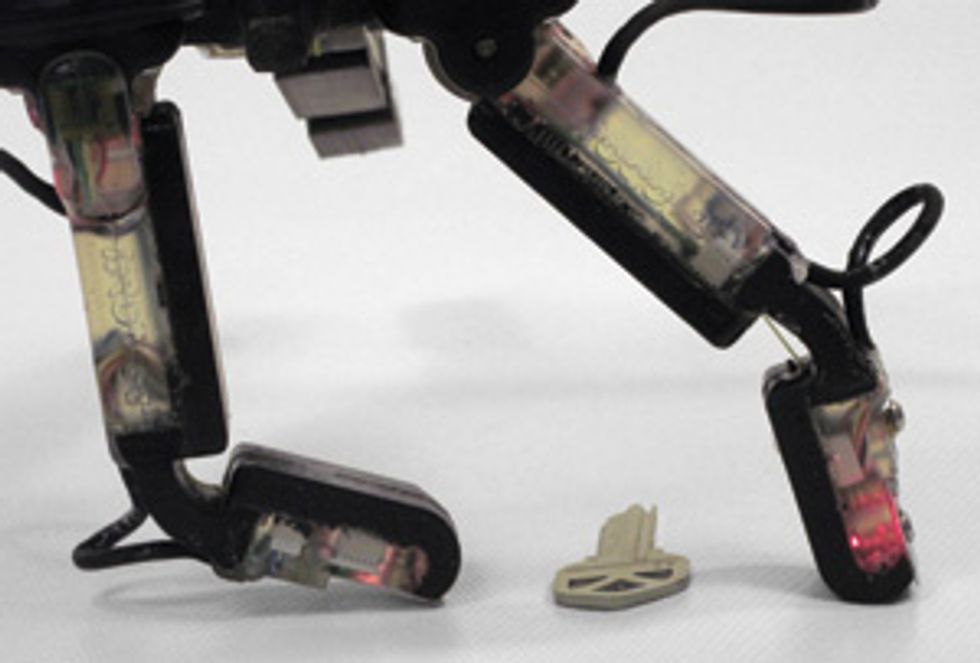

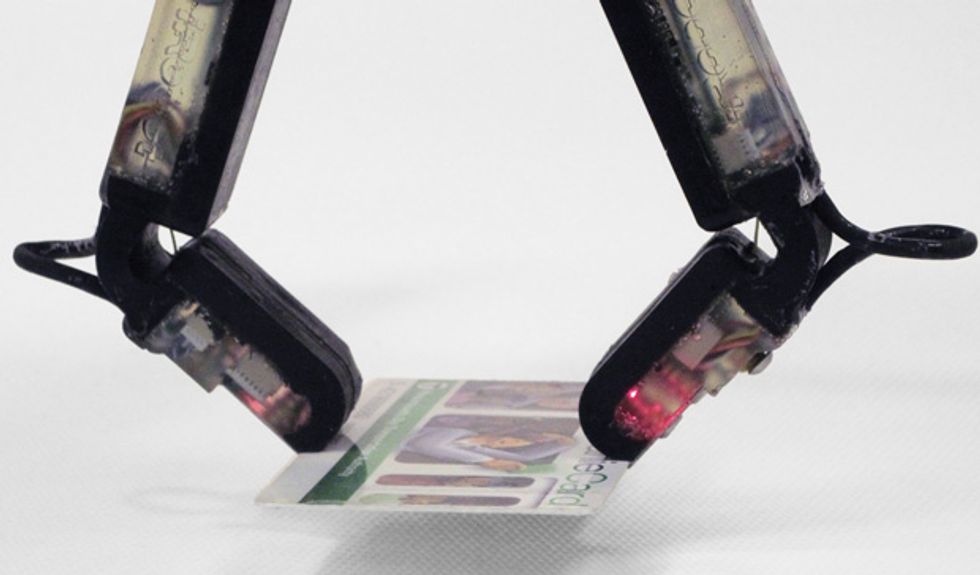

These different grasping motions helped us meet all the requirements of the DARPA competition. In its pinching configuration, the iHY hand could lift a key off a tabletop during the DARPA challenge. One of our Yale team members, Lael Odhner, developed a technique that let the hand squeeze objects into its grasp. For example, the hand put one finger behind the key, while the opposite finger moved toward it, flipping the object into its grip. To facilitate this pinch grip, we added thin metal “fingernails” to each finger, which helped the hand keep its hold on small items.

The pinch grasp would have been useless for some of the challenges posed by DARPA, like using a hammer. To complete tasks like that, we used the wrap grasp, which let us lift and swing a hammer five times during the competition, taking an average of less than 15 seconds per swing.

Although team members Nicholas Corson and Mark Claffee operated the hand during the DARPA challenges, it would eventually need to be controlled by an autonomous robot. That meant developing sensors that could give the robot a sense of the shape, weight, and pliability of the object it was handling. These sensors give software designers the information they need to program robots that will one day control the hand independently. We used two kinds of sensors on the iHY hand—one that detected where an object made contact with the hand and another that monitored how the fingers moved around that object.

To track the motion of the fingers around their target, our Harvard colleague Leif Jentoft developed a set of fiber-optic sensors. These consisted of a loop of fiber-optic cable embedded in the rubber middle joint and a pair of photodiode receptors housed in each of the finger links. The fiber-optic cables emitted light that hit the receptors differently depending on how the joint was bent. For instance, when the joint was bent at a 60-degree angle, the light hit the receptors in a different place and with a different intensity than at a 75-degree angle. That data could eventually be used to map where each finger rested during a passive grasp.
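One plausible way to turn such a light reading into a joint angle is a calibration table with linear interpolation between measured points. The sketch below assumes a single normalized photodiode signal that falls steadily as the joint bends; the calibration values and the function name `angle_from_signal` are invented for illustration and are not measurements from the iHY hand.

```python
from bisect import bisect_left

# Hypothetical calibration: (normalized photodiode signal, joint angle in
# degrees), sorted by signal. Real values would come from bench measurements.
CALIBRATION = [
    (0.10, 90.0),
    (0.25, 75.0),
    (0.40, 60.0),
    (0.70, 30.0),
    (1.00, 0.0),
]


def angle_from_signal(signal, table=CALIBRATION):
    """Estimate the joint angle by interpolating between calibration points."""
    signals = [s for s, _ in table]
    # Clamp readings that fall outside the calibrated range.
    if signal <= signals[0]:
        return table[0][1]
    if signal >= signals[-1]:
        return table[-1][1]
    # Find the two calibration points that bracket the reading.
    upper = bisect_left(signals, signal)
    (s0, a0), (s1, a1) = table[upper - 1], table[upper]
    return a0 + (a1 - a0) * (signal - s0) / (s1 - s0)
```

A reading halfway between the 75-degree and 60-degree calibration points would be reported as roughly 67.5 degrees. A real sensor with two photodiodes per link would likely need a richer model than this one-dimensional lookup.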

To complement that information, we also installed arrays of pressure detectors. Harvard’s Yaroslav Tenzer adapted these off-the-shelf sensors, originally designed for weather and GPS applications in smartphones, to act like the nerves in human skin. The sensors could tell if the hand was touching an object at the fingertip, the palm, or somewhere in between. Patterns in that information provided data about the shape of the object being grasped to the computer controlling the hand. For instance, the handle of a screwdriver would make contact with different sensors than a telephone handset would.

The pressure sensors also supplied data on an object’s weight and the pliability of its surface, telling robots how tightly to grip an object. Heavy objects typically exert a lot of pressure and need a firm grasp, while those that offer less resistance require a lighter touch. Each of the fingers on the iHY hand housed 22 of these sensors, connected to printed-circuit boards and embedded in the rubber finger pads of the hand. Another 48 lined the palm of the hand.
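A simple way to summarize readings from such an array is to ignore sensors below a noise threshold and compute a pressure-weighted centroid of the rest. The sketch below assumes a one-dimensional strip of sensors with known positions along the finger; the threshold, units, and the function name `contact_summary` are hypothetical.

```python
def contact_summary(readings, positions, threshold=0.05):
    """Summarize where and how hard an object presses on a sensor strip.

    readings:  pressure value from each sensor (arbitrary units)
    positions: location of each sensor along the finger (arbitrary units)
    Returns None when no sensor exceeds the threshold.
    """
    # Keep only sensors that register more than background noise.
    active = [(p, r) for p, r in zip(positions, readings) if r > threshold]
    if not active:
        return None
    total = sum(r for _, r in active)
    # The pressure-weighted mean position approximates the contact location.
    centroid = sum(p * r for p, r in active) / total
    return {"centroid": centroid, "total": total, "count": len(active)}


# Hypothetical four-sensor strip with contact near the third sensor.
summary = contact_summary([0.0, 0.2, 0.6, 0.0], [0, 1, 2, 3])
```

The centroid indicates where along the finger the object sits, and the summed pressure gives a crude proxy for how hard it presses, the kind of information a controller could use to decide how firmly to grip.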

Our iRobot colleagues developed the hardware and software that let us control the hand and relay information from it to a connected computer. Microcontrollers embedded in each digit collected data from the joint and touch sensors in the fingers and thumb and sent it to a controller in the palm. This controller acted as a sort of traffic cop for the whole hand, sending readings from the hand to the control computer via Ethernet and relaying commands from that computer to individual fingers. While this information wasn’t used to control the hand during the competition, we provided visualizations of the information to demonstrate its sensing capabilities.
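The traffic-cop role can be pictured as a small aggregator that keeps the latest report from each digit, bundles the reports into one frame for the host computer, and queues incoming commands per digit. The sketch below is purely illustrative: the class `PalmController`, its methods, and the JSON frame format are assumptions, not a description of iRobot's firmware or its Ethernet protocol.

```python
import json


class PalmController:
    """Toy stand-in for the palm controller's routing role."""

    def __init__(self, digit_names):
        # Latest sensor report per digit; None until a report arrives.
        self.latest = {name: None for name in digit_names}
        # Commands waiting to be delivered to each digit.
        self.outbox = {name: [] for name in digit_names}

    def on_digit_report(self, name, joint_angle, pressures):
        """Store a digit microcontroller's newest joint and touch data."""
        self.latest[name] = {
            "joint_angle": joint_angle,
            "pressures": list(pressures),
        }

    def build_frame(self):
        """Bundle all digit reports into one payload for the host."""
        return json.dumps(self.latest, sort_keys=True).encode("utf-8")

    def route_command(self, name, command):
        """Queue a host command for one digit."""
        if name not in self.outbox:
            raise KeyError(f"unknown digit: {name}")
        self.outbox[name].append(command)


palm = PalmController(["finger_1", "finger_2", "thumb"])
palm.on_digit_report("thumb", 30.0, [0.1, 0.4])
palm.route_command("thumb", "close")
frame = json.loads(palm.build_frame())
```

Funneling everything through one node means the host needs only a single connection to the hand, at the cost of making the palm controller a single point of failure.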

A hand is nothing without its fingers, and the iHY hand is no exception. To build the digits, we took inspiration from our Yale and Harvard colleagues, who had built robotic parts with electronic components already embedded in them for previous robotic hands. We created the individual parts using several different molds, first crafting the rubber finger joint with its embedded fiber-optic sensors. Then we placed the printed-circuit boards and pressure sensors of the fingers in a pair of molds and poured rubber over these components, creating soft pads for the fingers that housed the more fragile electronics. To strengthen the finger design, we molded rigid backing pieces that would act as the bones of the iHY fingers. We affixed these pieces to the rubber finger pads and placed them in a final mold, where the upper and lower pieces that would make up the finger were chemically bonded to the rubber joint. The result was a single, unified finger unit that housed all the electronics it needed to function.

The manufacturing method let us simplify our design. From an initial finger prototype composed of 60 different parts, we ended up with one made from just 12 parts. And by connecting the parts in a mold, we eliminated the need for small screws and other fasteners that can be points of failure in a robotic hand. Crafting the fingers from rubber and polyurethane also made for fingers that were sensitive to touch but could survive severe impacts, bending on impact rather than breaking.

We also used magnets to connect the finger units to the hand. This caused the fingers to separate entirely from the hand if they were in danger of becoming overloaded, instead of breaking in the middle. That way we could simply reattach the finger rather than having to replace a part. To test its durability, we brought the iHY prototype to a park and knocked a baseball out of its grip with a bat. The hand continued working after multiple strikes, demonstrating its durability and confirming its standing as the world’s most advanced baseball tee.

Using common plastics and rubber to make our fingers and the molds to build them not only made them durable, it also kept costs down. That helped us stay close to DARPA’s expectation that competitors produce a versatile robotic hand for around US $5,000—a fraction of the cost of models with comparable capabilities currently on the market.

On the day of the competition, in June 2012, the iHY hand outperformed all our expectations. The challenge, which took place in Arlington, Va., consisted of 19 tests—nine different objects the hand would have to grasp, nine it would have to grasp and then manipulate, and one test of the hand’s pure strength—each performed five times to demonstrate that no performance was a fluke. Grasping such items as a ball, a canteen, and a telephone handset took us just seconds. Even manipulation tasks like drilling a hole in a wood block and activating a handheld radio were accomplished with ease. Perhaps most surprising was the strength test, where the iHY hand lifted and held a 22-kilogram weight—6 kg more than it had held in previous lab tests.

Though the challenges were scheduled to take all day, we finished with a couple of hours to spare. That let us show off some of the other capabilities of the hand, including ones we didn’t know it had. In one impromptu test, an iRobot staffer placed a pair of tweezers and a thin straw on the test table, challenging us to pick up the tweezers with the iHY hand—and then pick up the straw using the tweezers. This was uncharted territory for the hand and its operators, but we did it in just one try.

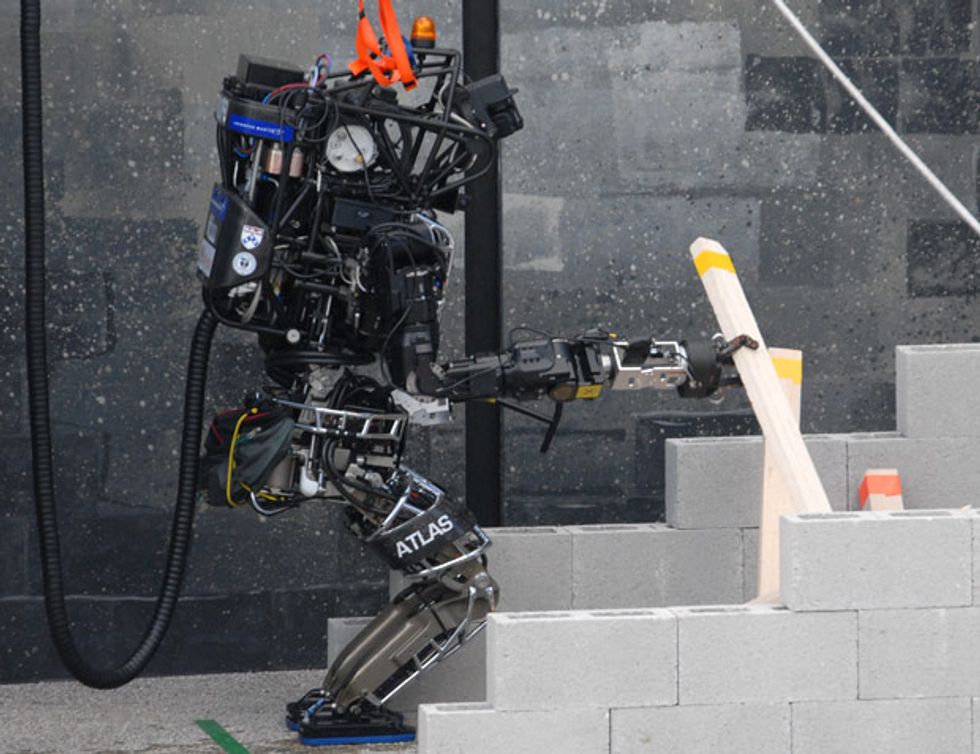

While we were happy with our performance, DARPA had invited only one team at a time to compete, so we didn’t know how we measured up against teams from SRI International (formerly known as the Stanford Research Institute) and Sandia National Laboratories. But a few weeks later, DARPA contacted us to let us know we had won the competition. Our victory meant that DARPA would continue using the iHY hand in future robotics competitions. Several teams of competitors in the DARPA Robotics Challenge, in which entrants design humanoid robots to respond to emergency situations, have used a version of the iHY hand. Attached to humanoid robot bodies like that of Boston Dynamics’ Atlas, the iHY hand has been used in that competition to open doors and handle fire hoses, suggesting crisis response as one possible application for the iHY hand and its descendants.

Eventually, we hope to develop versions of the iHY hand for a variety of commercial purposes. But first, we hope that the low cost and high durability of the iHY hand will help to make hands like it a fixture in robotics research labs around the world. While there are many fine robot hands available, the expense of procuring one—and of repairing one if it is damaged during an experiment—can make researchers timid about how they use it, slowing the pace of research.

The iHY hand, however, is hard to break and inexpensive to replace if you do. That should make it less frightening for researchers to push its limits in the lab. And with a strong, capable hand easily accessible, other teams can concentrate their efforts on writing new control software or making iterative improvements to the hardware, rather than building new hands from scratch. Our research has already spun off into a company, RightHand Robotics, based in Cambridge, Mass. The company has just begun to ship the beta version of the ReFlex Hand, a direct descendant of the iHY hand designed for lab research.

As we develop the technology further, it’s likely that the company will offer several different models of the hand to research teams, from basic, stripped-down versions to more complex models with full sensor suites. While we learned a lot about underactuated design during the competition, the design has plenty of room for improvement. By making the technology easily accessible to other teams of researchers and engineers, we think those improvements will come more quickly, not just to the hands we’ve worked on but to the field of robotic manipulation as a whole.

Considering the complexity of grasping, it’s likely this design won’t be the final word on robot hands. Just as the DARPA Robotics Challenge competitors have used hands we designed for some tasks as well as other hands—including those developed by Sandia—there is likely space for a number of hand designs, each with different capabilities and specifications. But as the field moves forward, we feel that offering our fellow researchers a simple, durable, and effective hand and inviting them to improve on it is a good place to start.

This article originally appeared in print as “Robots Get a Grip.”

About the Authors

The head of the Harvard Biorobotics Laboratory, Robert Howe investigates how engineers can take cues from nature to build more effective robots. In general, he says, inspiration is preferable to mere imitation. “For generic manipulation, the human hand is not a great model,” Howe says. “It brings along a lot of biological baggage.” That kind of thinking led to the three-fingered iHY robotic hand, which he designed with coauthors Aaron Dollar of Yale and Mark Claffee of iRobot.