Taming Wind Power With Better Forecasts

Sophisticated weather simulations are making wind power more grid friendly

Wind energy. It’s clean. It’s renewable. Its potential is enormous. But to draw energy from the wind and send it to people’s homes reliably and efficiently, you have to know when the wind will blow and when it won’t. When it stops or changes rapidly, you have to be ready to substitute power from another source. And because such sources aren’t always available at a moment’s notice, you need this information many hours and even several days ahead.

None of this matters much if the wind supplies only a small percentage of the electricity going into the power grid. But several countries have already gone far beyond that, and more will soon, as wind is now the fastest-growing source of energy in the world. Denmark already gets 28 percent of its electrical power from the wind and has at times drawn all of its electrical energy from wind turbines. The wind is already providing 20 percent or more of the electricity for several U.S. states, including Iowa, Kansas, and South Dakota. Colorado has at times obtained 60 percent of its electric power from the wind. And the United States as a whole will likely produce 20 percent of its electricity from wind power within 15 years.

Even though they can’t control the wind, utilities in these regions are able to reliably manage wind power today and will be able to do so even more efficiently in the future. That’s not because they have installed massive warehouses of batteries or have a large number of natural-gas generators ready to power up instantly. It’s because since the 2000s, scientists have been dramatically improving their ability to forecast the amount of power that utilities will be able to draw from the wind. And these forecasts are getting better all the time, allowing utilities to depend more and more on their wind resources and less on fossil fuels or nuclear power.

Managing the supply of power to the electrical grid is tricky. Utilities must gauge power demand and constantly adjust generation to meet it. If you underestimate the need and produce too little, you get a brownout. Sudden changes in wind production may cause a deviation from the normal frequency and voltage of the grid, causing local problems like flickering lights and possibly damaging unprotected electronics, or at higher levels tripping circuit breakers in the grid and causing blackouts.

Grid operators are pretty good at formulating such estimates and can usually make adjustments quickly if the situation changes—at least when they have direct control of the generation systems, as is the case with coal, natural gas, nuclear energy, and much of hydropower. They have some ability to dial these sources up or down in a couple of hours—or minutes for many natural-gas plants—as long as the generators are already in operation.

Wind is different. Maintaining a steady flow of wind energy into the power grid is not so much a matter of controlling wind energy as it is of compensating for its fluctuations. That means bringing other generators on line when the wind drops, but it takes 12 hours or more to fully start up a nuclear reactor and 6 hours for a coal plant. So the only quick fix is to use an expensive “spinning” reserve, typically a power plant running well below its capacity, like an idling car. Unless they want to keep a large number of such spinning reserves up and running, which is a wasteful proposition, utilities must be able to forecast the wind accurately.

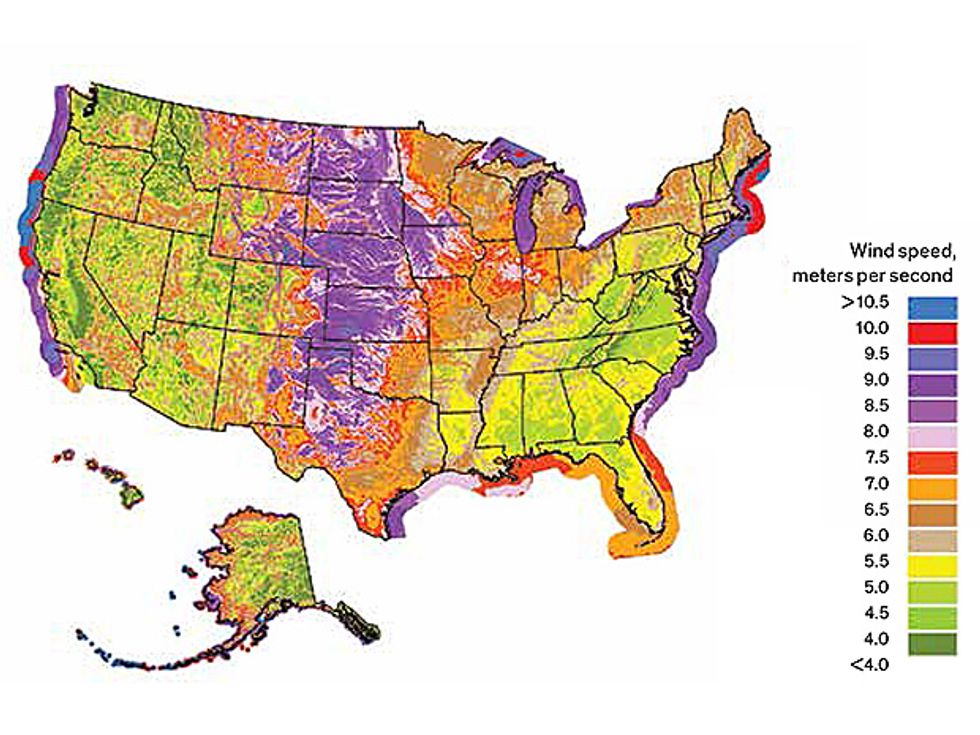

Predicting winds at a specific site isn’t easy. Terrain, nearby bodies of water, buildings, and vegetation dramatically influence local wind speeds. Traditional weather-prediction models are typically too coarse to pick up these details. It takes a specialized computer model that considers these local influences along with the effects of larger-scale weather systems.

Utilities need accurate wind forecasts for two distinct time frames: one to five days ahead and 15 minutes to 6 hours ahead. They use their longer-range forecasts to plan the energy mix—both what the utility will produce from its different generation facilities and what it will buy if needed from other producers. Utility operators generally need this information a day ahead, say, by 6 a.m. for how much wind will be blowing from midnight that night through the following midnight. That gives them time to get coal, nuclear, or gas plants up and running and to place orders to buy electricity from other producers at an economical rate—purchases made any later would command premium prices. But one day isn’t always enough lead time: Markets shut down on weekends, so utility planners need to have Monday’s wind forecast on Friday morning. And over holidays they will need to plan their purchases even sooner.

Knowing how the wind will change just minutes to 6 hours ahead is important, too, because the weather can shift dramatically in that time, and with even a little warning of a big drop or increase in available wind energy, operators can adjust spinning reserves.

No single forecasting system performs optimally across both of these time scales. So we and our colleagues at the National Center for Atmospheric Research (NCAR) have been working to develop tools for both types of forecasts. We’re doing this in partnership with Xcel Energy—a utility supplying energy to residents and businesses in Colorado, Michigan, Minnesota, New Mexico, North Dakota, South Dakota, Texas, and Wisconsin. Xcel has the largest wind-energy capacity of any utility in the United States, some 5,700 megawatts.

We call what we’ve built the NCAR Wind Power Forecasting System. Its development started in 2008. Xcel Energy began using an early version operationally in 2009, and NCAR has been improving it ever since. Denmark, Germany, Australia, China, and numerous private companies are developing similar prediction systems to enable them to reliably increase their use of wind power.

At the core of our forecasts—as with all weather forecasts today—are numerical models. Such systems start with measurements of temperature, barometric pressure, wind speed and direction, moisture, solar radiation, cloud cover, and more made by weather stations, weather balloons, satellites, and ground-based radars. These models assimilate the data into a grid of points covering the region of interest. They then compute changes in those measurements at each point using equations that govern the evolution of the atmosphere, taking many factors into account, such as changes in solar radiation, the effects of atmospheric turbulence, the physics of cloud formation, and land-surface usage. These numerical-prediction schemes are able to model everything from the large-scale weather systems circling the globe to the turbulent eddies that form when the wind flows over bumps in the local terrain. To do so, today’s models typically include tens of millions of grid cells and require thousands of computer processors running in parallel to produce multiday forecasts as often as hourly.

Wind forecasts are publicly available from the U.S. National Weather Service (NWS), which runs its own numerical weather model. Ten years ago, when this was the only tool for wind forecasting, it provided weather forecasts at a resolution of 36 kilometers. At this level, smaller features of the local terrain are ignored. And although the model could generally indicate that a weather system would move through a region in the morning or the afternoon, it couldn’t tell exactly when it would arrive at a particular spot.

Today, some NWS models generate predictions at a 3-km resolution over the United States—a huge improvement—and they can time the movement of weather fronts to within just a few tens of minutes.

But that’s not quite good enough to tell us exactly what the winds will be doing at utility wind farms. So we’re also running a specialized version of the Weather Research and Forecasting (WRF) model. We’ve specially configured it to provide information specific to Xcel Energy’s needs. We call this the Real Time Four Dimensional Data Assimilation version of WRF. Like the new, high-resolution NWS model, it runs at a 3-km resolution. But for the vast majority of Xcel’s 3,000 wind turbines, we’ve added a key piece of data—the wind speeds measured by the sensors sited on top of the turbines. We started using these additional data in 2008, and they have made a dramatic improvement in the accuracy of our wind forecasts.

A few wind farms have on-site weather stations mounted on special meteorological towers continuously measuring wind and temperature at different levels. This helps determine the stability of the atmosphere and can improve wind predictions, especially at nighttime, when the wind at the ground often weakens faster than the wind at turbine height, causing wind shear and making the turbines less efficient. We’re using these data as well in the model we’ve adapted for Xcel.

To optimize our predictions, we combine results from this model with those from other forecasting models, including the NWS models, blending them all together with something called the Dynamic Integrated foreCast (DICast) system. NCAR started developing DICast almost 20 years ago for the Weather Channel. Since then NCAR and some commercial weather companies have refined it and implemented it in various operational forecast systems.

We started using DICast for wind prediction in 2009. We train it by giving it information on how well each of the models we’re blending performs under different weather conditions. We also give it past observations that allow it to determine the different biases and errors in certain models. One model, say, may always deviate from the correct forecast at a certain elevation by a certain amount; another may include too many clouds in a certain region, preventing adequate surface heating that may drive winds.

So now we run DICast in two stages. The first corrects the models for biases and errors; the second blends the weather models, giving them different weights depending on which models perform best under conditions that resemble the current weather for the given location and lead time. Although DICast works well enough with just 30 days of training data, it improves with time because it constantly checks its predictions against what actually happened and adjusts its bias corrections and weightings.
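The two stages can be illustrated with a minimal Python sketch. This is not the DICast code: the function name `two_stage_blend`, the mean-error bias correction, the inverse-error weighting, and the sample numbers are all assumptions chosen for illustration. A real system would also condition the weights on weather regime, location, and lead time, which this sketch ignores.

```python
from statistics import mean

def two_stage_blend(today, history):
    """Bias-correct each model, then blend with inverse-error weights.

    today:   {model_name: current forecast wind speed}
    history: {model_name: (past_forecasts, past_observations)}
    """
    corrected, weights = {}, {}
    for model, (past_fc, past_obs) in history.items():
        errors = [f - o for f, o in zip(past_fc, past_obs)]
        bias = mean(errors)  # stage 1: the model's systematic error
        corrected[model] = today[model] - bias
        # Stage 2 weight: models with smaller leftover error count for more.
        residual_mae = mean(abs(e - bias) for e in errors)
        weights[model] = 1.0 / max(residual_mae, 1e-6)
    total = sum(weights.values())
    return sum(corrected[m] * weights[m] / total for m in corrected)

# Hypothetical wind speeds in meters per second.
history = {
    "model_a": ([9.0, 11.5, 9.5], [8.0, 10.0, 9.0]),  # runs about 1 m/s high
    "model_b": ([7.0, 11.0, 9.0], [8.0, 10.0, 9.0]),  # unbiased but noisier
}
today = {"model_a": 12.0, "model_b": 10.0}
blended = two_stage_blend(today, history)
print(round(blended, 2))  # about 10.67
```

In this toy case the first model is pulled down by its 1 m/s bias and then receives twice the weight of the second, because its remaining errors are half as large.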

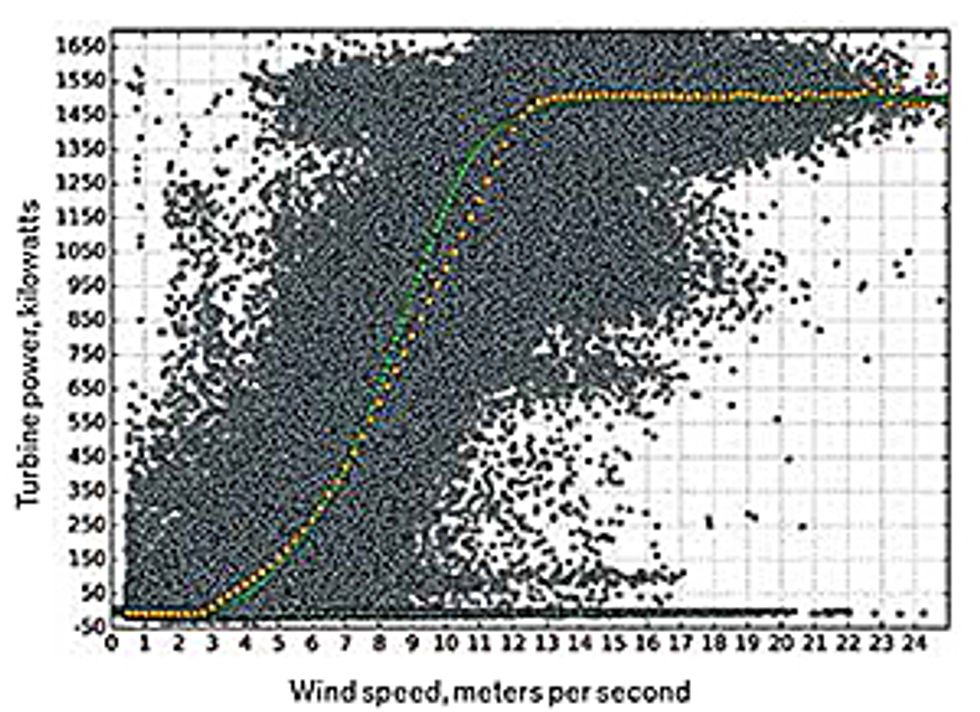

After our systems generate a wind forecast using these various components, we need to translate the predicted wind speeds into information that utilities can use, which is the amount of power the winds will generate at the local wind farm.

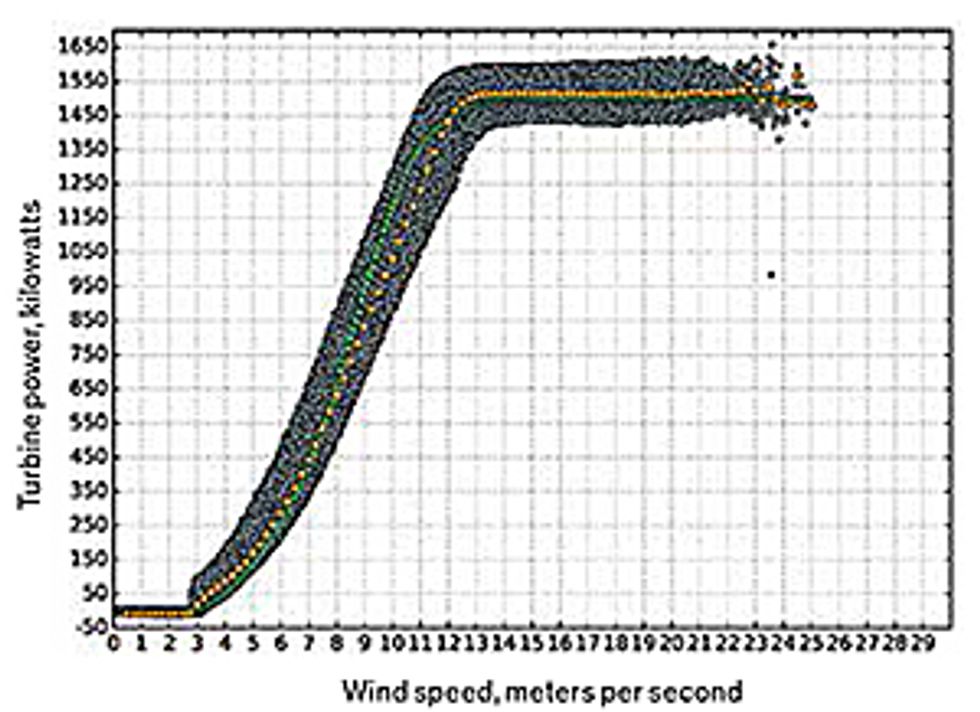

To do this, we first use the DICast system to predict the wind speed at the height of the hubs of the turbines, about 80 to 100 meters above the ground. Then our system translates those predicted wind speeds into power forecasts. Each type of wind turbine has a particular relationship between the wind speed and the power output, known as its power curve. But power production often deviates substantially from the turbine manufacturer’s power curve, and a single wind speed can correspond to a variety of power outputs. Poor correlations between wind and power output can be caused by a variety of factors, such as times when the turbine is down for maintenance, snow or ice is on the blades, the utility is intentionally curtailing power, or the wind speed exceeds the design limits for safe operation and operators shut down the turbine. To avoid the worst anomalies in our wind-to-power conversions, we typically consider only the middle 50 percent of possible power values for each wind speed at each turbine.
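One way to picture the "middle 50 percent" idea is an empirical power curve built from past observations, as in the Python sketch below. The 1 m/s bin width, the use of the mean of the retained values, the function names, and the sample data are assumptions for illustration, not details of the NCAR system.

```python
from collections import defaultdict
from statistics import mean, quantiles

def fit_power_curve(samples, bin_width=1.0):
    """Build {wind-speed bin: typical power} from (speed_m_s, power_kw) pairs."""
    bins = defaultdict(list)
    for speed, power in samples:
        bins[int(speed // bin_width)].append(power)
    curve = {}
    for b, powers in bins.items():
        if len(powers) >= 4:
            # Keep only values between the 25th and 75th percentiles.
            q1, _, q3 = quantiles(powers, n=4)
            powers = [p for p in powers if q1 <= p <= q3]
        curve[b] = mean(powers)
    return curve

def predict_power(curve, speed, bin_width=1.0):
    """Look up the typical power for a forecast wind speed (None if unseen)."""
    return curve.get(int(speed // bin_width))

# Hypothetical history for one turbine near 8 m/s. The 0 kW reading could be
# a maintenance shutdown and the 1500 kW reading a sensor glitch.
powers = [0, 900, 950, 1000, 1050, 1100, 1500]
samples = [(8.0 + 0.1 * i, p) for i, p in enumerate(powers)]
curve = fit_power_curve(samples)
print(predict_power(curve, 8.4))  # 1000
```

Discarding the tails of each bin keeps shutdowns, curtailment, and icing from dragging down the typical output assigned to that wind speed.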

That’s how we get from a basic weather forecast to detailed wind-power predictions at specific sites. There’s one more thing we’re doing to make those predictions even more accurate. It involves a unique approach to using ensembles of forecasts to predict weather.

In ensemble forecasting, about 10 to 30 different weather models are run concurrently depending on the available computing resources and the current weather situation. These differ in such factors as initial conditions, boundary conditions, and the use of physics packages—formulas that represent processes like land-surface usage or changes in solar radiation. From that set, the system generates an ensemble of possible outcomes. Sometimes the differences are slight, with all the models predicting, say, that a hurricane will follow a particular path. Other times the results vary widely, and it may be hard to predict with any confidence whether that hurricane is going to turn toward land or head out to sea.

At NCAR, we are refining the use of ensemble forecasting by identifying analogous forecasts—previous forecasts that predicted conditions similar to those of the current forecast. We then determine the errors in those analogous predictions by comparing them with how the weather actually evolved. If previous predictions tended to be off in some consistent way, we can correct the current forecast by compensating for the past errors.

We typically identify hundreds of analogous forecasts, then use the 10 to 20 that are most similar to the current forecast to generate an ensemble. We then look carefully at how well those past predictions played out so that we can adjust the current forecast to compensate for any consistent bias. And we use the range of errors to help estimate the uncertainty in today’s forecast.
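The analog idea can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: similarity is measured on forecast wind speed alone (an operational system would compare several predicted variables), and the function name `analog_members`, the 9 m/s threshold, and the data are hypothetical.

```python
from statistics import mean

def analog_members(current, history, k=10):
    """Build an ensemble for `current` from the k most similar past forecasts.

    history: list of (past_forecast, observed) wind-speed pairs.
    Each member is the current forecast shifted by one analog's past error.
    """
    analogs = sorted(history, key=lambda pair: abs(pair[0] - current))[:k]
    errors = [fc - obs for fc, obs in analogs]
    return [current - e for e in errors]

# Hypothetical past forecasts and what actually happened, in m/s.
history = [(10.0, 9.0), (10.5, 9.0), (9.5, 9.0), (4.0, 5.0), (15.0, 15.5)]
members = analog_members(10.0, history, k=3)

corrected = mean(members)              # bias-corrected forecast
spread = (min(members), max(members))  # rough uncertainty range
prob_strong = sum(m >= 9.0 for m in members) / len(members)
print(corrected, spread, prob_strong)
```

Here the three closest past forecasts all ran high, so the corrected forecast drops from 10.0 to 9.0 m/s, and the fraction of members at or above the threshold gives a simple probability.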

Over the past year, this approach has improved the accuracy of our forecasts and allowed us to give the utilities probabilities to help them with their planning. For example, a utility would be more likely to keep additional spinning reserves on line when the probability of strong winds is 20 percent rather than 80 percent.

All of these efforts are aimed at getting one- to five-day forecasts. For our very-short-range forecasts, we need a different approach, for two reasons. Most important, these computationally intensive weather models take too long to run to be useful at such short lead times. Also, we want to take advantage of local information, which the larger-scale models miss.

At the shortest time scales, it is currently difficult to beat what is called a persistence forecast. For example, 5 minutes from now, the wind speed is likely to be much as it is at the moment. So it’s appropriate to predict no change at these very short time scales. But in 30 minutes to several hours, wind speeds can shoot up and down dramatically.
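A persistence forecast is simple enough to write down directly. The Python sketch below, using a hypothetical series of 5-minute wind-speed readings, shows why it works at one step ahead and degrades at longer lead times; the function names and data are assumptions for illustration.

```python
def persistence_forecast(observations, steps_ahead):
    """Predict that the latest observed value holds for every future step."""
    return [observations[-1]] * steps_ahead

def persistence_mae(series, lead):
    """Mean absolute error of persistence at a given lead (in time steps)."""
    errors = [abs(series[i + lead] - series[i]) for i in range(len(series) - lead)]
    return sum(errors) / len(errors)

# Hypothetical wind speeds (m/s) sampled every 5 minutes, with a ramp-up.
series = [8.0, 8.1, 8.0, 8.2, 9.0, 10.5, 12.0, 11.0]

print(persistence_forecast(series, 3))
print(persistence_mae(series, 1))  # error 5 minutes ahead
print(persistence_mae(series, 6))  # error 30 minutes ahead
```

On this series the 30-minute error is several times the 5-minute error, which is the gap the short-range methods described here aim to close.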

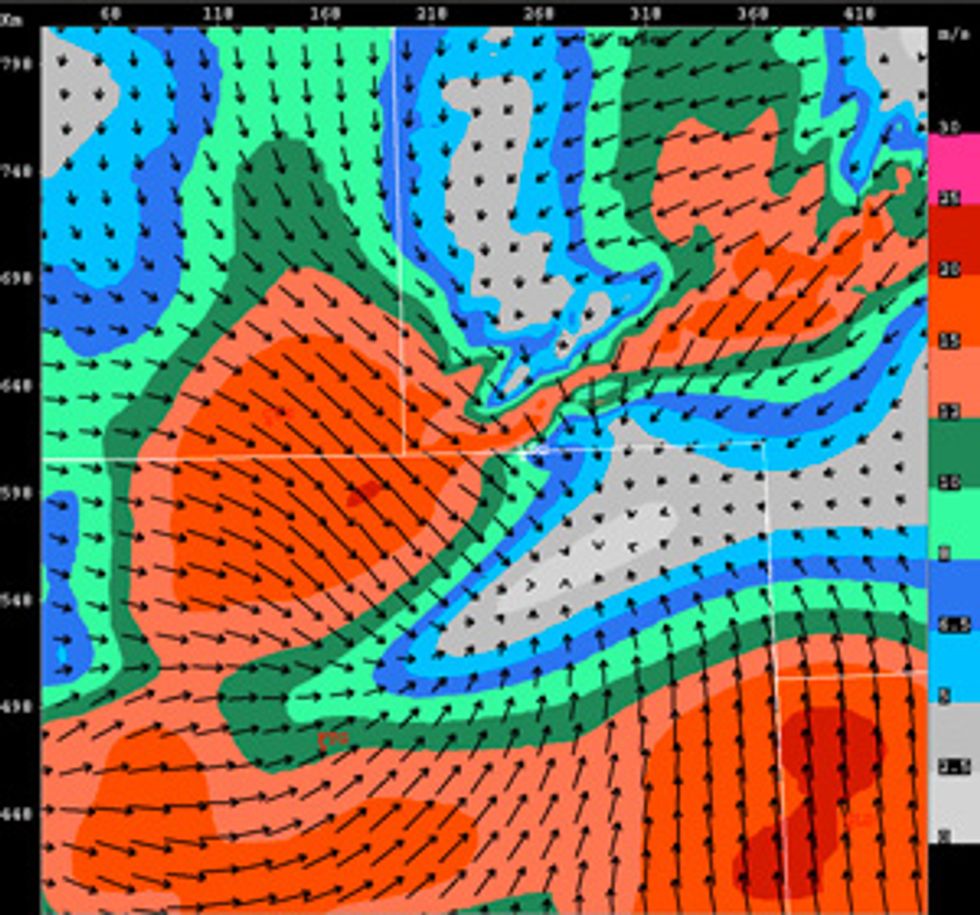

To forecast these rapid changes, we need to include observations near the wind farms. One approach that we’ve been evaluating blends radar observations into a rapidly updating weather model. It uses data from Doppler weather radars called Next Generation Weather Radar, which the NWS has deployed around the United States. (If you live in that country, you likely see images from these radars when you tune in to a local TV weather forecast.) The radars send out pulses of microwave energy and measure the amount that is reflected off various sorts of particles entrained in the air, including raindrops and hailstones. Changes in the frequency of the signal—the Doppler shift—indicate the speed of the particles’ horizontal movement toward or away from the radar antenna, and thus the speed of the wind. Combining results from multiple radars allows you to ascertain the direction of the wind.
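The radar geometry can be made concrete with a short Python sketch. It rests on several assumptions: a radar wavelength of roughly 10 centimeters, radial velocities counted as positive when moving away from the radar, both radars viewing the same point at low elevation, and hypothetical function names. It shows only the textbook relationships, not how an operational system processes radar data.

```python
import math

def radial_speed(doppler_shift_hz, wavelength_m=0.10):
    """Speed along the radar beam from the Doppler shift (two-way path)."""
    return abs(doppler_shift_hz) * wavelength_m / 2.0

def wind_from_two_radars(vr1, az1_deg, vr2, az2_deg):
    """Solve for the horizontal wind (u toward east, v toward north).

    vr1, vr2: radial velocities, positive when moving away from each radar.
    az1_deg, az2_deg: compass bearing from each radar to the shared point.
    """
    a1, a2 = math.radians(az1_deg), math.radians(az2_deg)
    det = math.sin(a1 - a2)
    if abs(det) < 1e-6:
        raise ValueError("beams are nearly parallel; direction is undetermined")
    u = (vr1 * math.cos(a2) - vr2 * math.cos(a1)) / det
    v = (vr2 * math.sin(a1) - vr1 * math.sin(a2)) / det
    return u, v

# One radar looks due east at the point and sees 10 m/s moving away;
# a second looks due north at the same point and sees no radial motion.
u, v = wind_from_two_radars(10.0, 90.0, 0.0, 0.0)
print(radial_speed(200.0))      # about 10 m/s for a 200 Hz shift
print(u, v, math.hypot(u, v))   # air moving toward the east at about 10 m/s
```

A single radar sees only the component of motion along its beam, which is why the second viewing angle is needed to pin down direction.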

Mapping radar echoes lets you identify general weather patterns—patterns such as fronts that tend to persist even as the overall weather system moves along horizontally. So we can use our knowledge of the overall pattern and how it is moving to predict how the wind will soon change in one spot. This allows us to produce much better short-term forecasts.

How are we doing so far? Each of Xcel Energy’s service regions has different challenges, and as a consequence the reliability of our forecasts varies. But even in the most difficult areas, we’re doing well. For example, Xcel’s Public Service Company of Colorado subsidiary operates in a region with very complex terrain, where it is notoriously difficult to provide good local wind forecasts. In 2008, the year we started working on this problem, the forecast error at a typical wind farm in this region was about 18 percent for 18 to 42 hours ahead, calculated as a percentage of the capacity of each farm. But by 2014, the mean forecast error had fallen to 10.8 percent. Xcel estimates that improvements in forecasting have saved customers US $49 million so far.
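For readers who want to see how an error "as a percentage of capacity" might be computed, here is a minimal Python sketch. It assumes the metric is a mean absolute error divided by the farm's rated capacity; the function name and the numbers are hypothetical and are not Xcel data.

```python
def capacity_normalized_mae(forecast_mw, actual_mw, capacity_mw):
    """Mean absolute forecast error as a percentage of rated capacity."""
    errors = [abs(f - a) for f, a in zip(forecast_mw, actual_mw)]
    return 100.0 * (sum(errors) / len(errors)) / capacity_mw

# Hypothetical hourly values for a 200 MW wind farm.
forecast = [120.0, 80.0, 150.0, 40.0]
actual = [100.0, 90.0, 170.0, 60.0]
print(capacity_normalized_mae(forecast, actual, 200.0))  # 8.75
```

Normalizing by capacity rather than by actual output keeps the metric from blowing up during calm hours, when output is near zero.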

That’s pretty good, but we think we can do better. This past winter, we added the ability to forecast turbine icing. Sometimes icing happens gradually, and the turbines slow before stopping. In such situations, we can sometimes use the drop in power to diagnose the icing. Other times, such as with freezing rain, icing can happen quickly, and the turbines need to be shut down immediately to avoid damage from the weight of the ice. Some of the most advanced work in icing prediction is in Scandinavia, where this phenomenon is a constant threat, and some wind turbines there and in other similarly cold, wet regions are even beginning to include mechanisms to remove ice from the spinning blades.

While we are excited to incorporate methods to predict icing in our model, and while we’ve had a few successes with them, we still have a long way to go. The United States didn’t see many ice-producing storms this past winter, so we have less data than we expected to test our modeling.

Having even more accurate wind forecasts—ones that give day-ahead wind-speed projections with the accuracy of today’s 6-hour forecasts—is essential to moving the world toward a largely renewable-energy future.

About the Authors

Sue Ellen Haupt leads the Weather Systems and Assessment Program at the National Center for Atmospheric Research (NCAR), in Boulder, Colo. Early in her career, she did day-ahead energy trading for an electric utility, when wind power was a novelty. “We had one turbine in the parking lot,” Haupt recalls, “but it produced very little power.”

William P. Mahoney is deputy director of NCAR’s Research Applications Laboratory. He has flown (on purpose) into microburst wind shears to study their characteristics and their effects on aircraft performance, giving him a real appreciation for the power of wind.