In-hand manipulation is one of the things near the top of a very, very, very long list of things that humans do without thinking that are extraordinarily difficult for robots. It’s the act of repositioning an object with one hand, usually with your fingers—you do it whenever you pick up a pen, for example, to switch from a “picking up” grasp to a “writing something” grasp. Next time you do this, pay attention to the intricate, coordinated motion that happens, and ask yourself just how in the world you could honestly expect a robot to do something similar.

And yet, robots are learning to do such things. For example, OpenAI recently taught a five-fingered hand to manipulate a cube, which is great, if you have a lot of patience and/or computing resources, and the budget for a fancy hand and stuff. For those of us without wealthy (and occasionally eccentric) patrons, a conventional gripper is a more realistic option, and researchers from Yale University’s GRAB Lab have developed a two-finger design with a clever variable-friction system that can do in-hand manipulation at a fraction of the cost.

“Our approach is quite different and uses a very simple hand setup and a very simple controller, but with finger surfaces inspired by the unique biomechanical properties of the human finger pads, which change their effective friction as normal force is increased,” says Ad Spiers, a former GRAB Lab researcher and now a research scientist at the Max Planck Institute for Intelligent Systems in Stuttgart, Germany. “This enables people to choose whether their fingers grip or slide over objects.”

The nice thing about your fingers is that you (probably) have bones in them, and they are (probably) surrounded by squishy tissue bits, and (hopefully) covered in textured skin. This is a phenomenal combination, since the bone lets you exert gripping force when you want to, while the skin and fat can maintain softer contact to let objects slide around. By using hard, friction-y contact in concert with softer sliding-y contact, you’re able to manipulate objects in all sorts of ways. Yale’s variable-friction fingers are an attempt to replicate this sort of functionality, through hardware that can turn friction on and off to alternate between gripping and sliding.

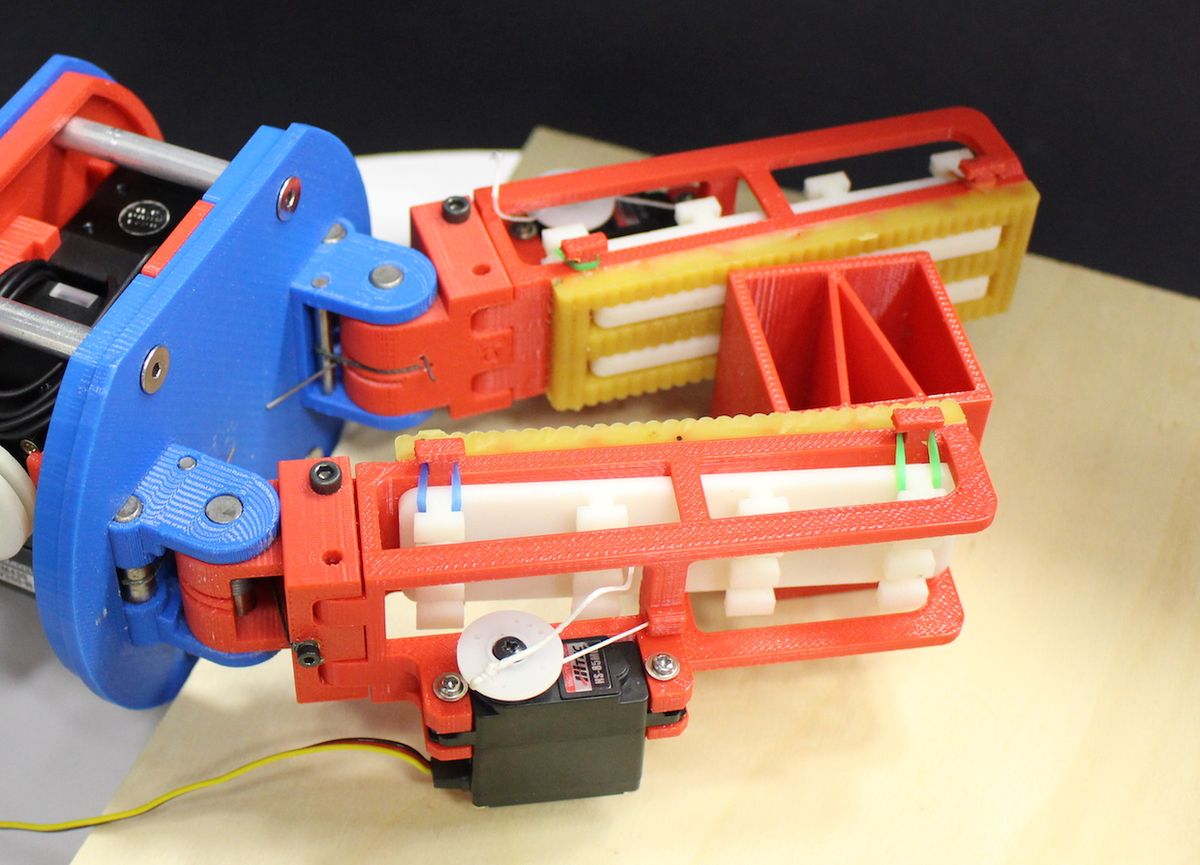

It’s a fairly simple system, compensating for lack of complexity with clever design. The fingers are mostly 3D printed, with a molded elastomer friction-enhanced insert. The low friction bits are just ABS. The fingers can be either friction-y, slide-y, passively switchable by using downward force on an elastically suspended element, or actively switchable with the help of a servo. The cheapest and simplest way is to go with the passively switchable version, which still allows the gripper to adopt different manipulation modes, as the GRAB Lab group, led by Professor Aaron Dollar, describes in a recent paper: “Manipulating an object between the fingers with a low-torque reference enables object sliding [translation], while manipulating with a high-torque reference enables pivoting.”
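To make the passive switching idea concrete, here is a toy model in Python. It is a sketch under loudly stated assumptions, not the GRAB Lab design: it assumes the low-friction ABS element is spring-suspended so that only it touches the object until the normal force passes a threshold, after which the elastomer insert makes contact and sets the friction. The class name, the friction coefficients, and the switching force are all hypothetical values chosen for illustration, and the Coulomb limit (tangential force up to mu times normal force) is a standard simplification.

```python
from dataclasses import dataclass


@dataclass
class PassiveVariableFrictionFinger:
    """Toy model of a passively switchable finger surface.

    All parameters are hypothetical illustration values, not measured
    properties of the Yale GRAB Lab fingers.
    """
    mu_low: float = 0.2        # assumed friction coefficient of the ABS surface
    mu_high: float = 1.0       # assumed friction coefficient of the elastomer insert
    switch_force: float = 3.0  # assumed normal force (N) that pushes the suspended element in

    def friction_coefficient(self, normal_force: float) -> float:
        # Below the threshold, only the spring-suspended low-friction element touches
        # the object; at or above it, the elastomer insert contacts and dominates.
        return self.mu_high if normal_force >= self.switch_force else self.mu_low

    def max_tangential_force(self, normal_force: float) -> float:
        # Coulomb friction limit: the largest tangential load the contact can resist.
        return self.friction_coefficient(normal_force) * normal_force

    def slides(self, normal_force: float, tangential_load: float) -> bool:
        # The object slips once the tangential load exceeds the friction limit.
        return tangential_load > self.max_tangential_force(normal_force)


finger = PassiveVariableFrictionFinger()
print(finger.slides(normal_force=1.0, tangential_load=0.5))  # light squeeze: object slides
print(finger.slides(normal_force=4.0, tangential_load=0.5))  # firm squeeze: object is gripped
```

The point of the model is that the same tangential load either slides or sticks depending only on how hard the gripper squeezes, which is what lets a simple controller pick between sliding and gripping just by choosing a grip force.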

And if you have the budget for it, adding in those servos lets you do even fancier things, like rotating an object without translating it. In general, the gripper is able to achieve arbitrary object poses through in-hand manipulation, resulting in “a within-hand manipulation workspace beyond the majority of the gripper designs in literature.” It seems like in order for this to be true you’d need a highly complex software controller or something, but it’s really not all that fancy, the researchers say:

An unsophisticated controller alternates between torque and position control for each finger, in order to maintain object grasp while also modulating grip force. This in turn varies finger surface friction to allow sliding, gripping and rolling of objects. The later addition of active fingers permits controlled sliding of [an] object on both fingers. This extends the capability of the hand and enables in-place rotation or proximal/distal transfer, for the fine positioning of objects within the gripper workspace.
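For a feel of how little logic such a controller needs, here is a minimal Python sketch of a single control step. It assumes one reading of “alternates between torque and position control for each finger”: at any moment one finger (the hypothetical “leading” finger) moves under position control while the other holds the grasp under torque control, and the torque reference picks the mode, low for sliding and high for pivoting, as in the paper quote above. The function, the command type, and the torque values are invented for illustration and are not the researchers’ code.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    SLIDE = "slide"  # low-torque reference: object translates between the fingers
    PIVOT = "pivot"  # high-torque reference: object is gripped and pivots


@dataclass
class FingerCommand:
    control: str   # "position" or "torque"
    target: float  # joint angle (rad) for position control, torque (N*m) for torque control


# Hypothetical torque references; real values would depend on the hardware.
LOW_TORQUE = 0.05
HIGH_TORQUE = 0.30


def control_step(mode: Mode, leading_finger: int, joint_step: float,
                 current_angles: tuple[float, float]) -> tuple[FingerCommand, FingerCommand]:
    """Return (finger 0, finger 1) commands for one open-loop step.

    The leading finger advances its joint angle under position control; the
    other finger squeezes with a torque reference that selects slide or pivot.
    """
    torque_ref = LOW_TORQUE if mode is Mode.SLIDE else HIGH_TORQUE
    lead_cmd = FingerCommand("position", current_angles[leading_finger] + joint_step)
    hold_cmd = FingerCommand("torque", torque_ref)
    return (lead_cmd, hold_cmd) if leading_finger == 0 else (hold_cmd, lead_cmd)


# Slide the object by advancing finger 0 while finger 1 squeezes lightly.
print(control_step(Mode.SLIDE, leading_finger=0, joint_step=0.1, current_angles=(0.5, 0.4)))
```

Swapping `leading_finger` between steps gives the alternation between the two control modes on each finger, and because nothing here reads back the object’s pose, the loop is open, matching the current state of the gripper described below.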

The controller is open loop right now, but they’re working on a closed loop version that will allow the fingers to move objects into target poses, which will make the gripper much more useful. The researchers also suggest that the system they’ve come up with could relatively easily be integrated into other grippers to extend their capabilities.

Hopefully, this hardware will be miniaturized and combined with a variety of manipulation controllers to take advantage of other grasping techniques, like using a nearby surface for help (as shown in the video). Pure in-hand manipulation is cool, of course, but in a very practical sense, what’s important here is the objective: making it possible for robots to affordably and reliably do useful things in the real world, especially when it comes to things like prosthetics. And with that in mind, the researchers are in the process of open sourcing the design files to allow other folks to experiment with the hardware or develop more advanced controllers.

“Variable-Friction Finger Surfaces to Enable Within-Hand Manipulation via Gripping and Sliding,” by Adam J. Spiers, Berk Calli, and Aaron M. Dollar from Yale University, appears in IEEE Robotics and Automation Letters.


Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.