Video Friday is your weekly selection of awesome robotics videos, collected by your Automaton bloggers. We’ll also be posting a weekly calendar of upcoming robotics events for the next few months; here’s what we have so far (send us your events!):

ICRES 2019 – July 29-30, 2019 – London, U.K.

DARPA SubT Tunnel Circuit – August 15-22, 2019 – Pittsburgh, Pa., USA

IEEE Africon 2019 – September 25-27, 2019 – Accra, Ghana

ISRR 2019 – October 6-10, 2019 – Hanoi, Vietnam

Ro-Man 2019 – October 14-18, 2019 – New Delhi, India

Humanoids 2019 – October 15-17, 2019 – Toronto, Canada

Let us know if you have suggestions for next week, and enjoy today’s videos.

I’m sure you’ve seen this video already because you read this blog every day, but if you somehow missed it because you were skiing across Antarctica (the only valid excuse we’re accepting today), here’s our video introducing HMI’s Aquanaut transforming robot submarine.

And after you recover from all that frostbite, make sure and read our in-depth feature article here.

[ Aquanaut ]

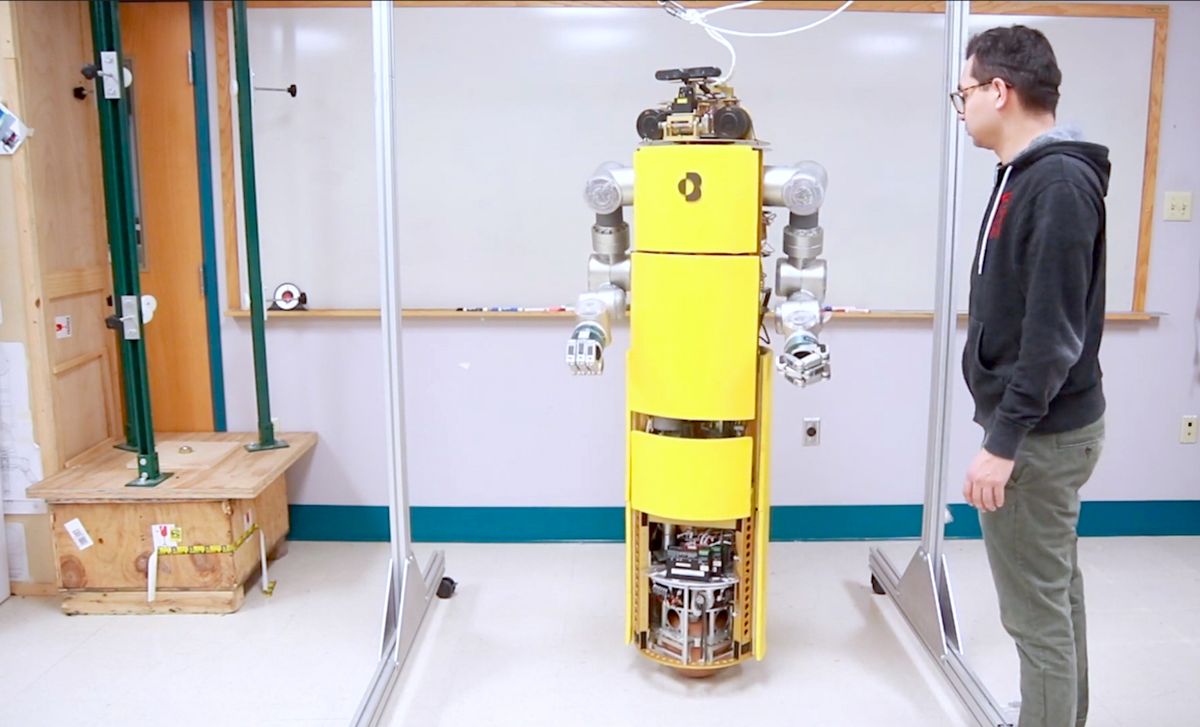

Last week we complained about not having seen a ballbot with a manipulator, so Roberto from CMU shared a new video of their ballbot, featuring a pair of 7-DoF arms.

We should learn more at Humanoids 2019.

[ CMU ]

Thanks Roberto!

The FAA is making it easier for recreational drone pilots to get near-realtime approval to fly in lightly controlled airspace.

[ LAANC ]

Self-reconfigurable modular robots are usually composed of multiple modules with uniform docking interfaces that can be transformed into different configurations by themselves. The reconfiguration planning problem is finding what sequence of reconfiguration actions is required for one arrangement of modules to transform into another. We present a novel reconfiguration planning algorithm for modular robots. The algorithm compares the initial configuration with the goal configuration efficiently. The reconfiguration actions can be executed in a distributed manner so that each module can efficiently finish its reconfiguration task, which results in a global reconfiguration for the system. In the end, the algorithm is demonstrated on real modular robots and some example reconfiguration tasks are provided.

[ CKbot ]

A nice design of a gripper that uses a passive thumb of sorts to pick up flat objects from flat surfaces.

[ Paper ] via [ Laval University ]

I like this video of a palletizing robot from Kawasaki because in the background you can see a human doing the exact same job and obviously not enjoying it.

[ Kawasaki ]

This robot cleans and “brings joy and laughter.” What else do we need?

I do appreciate that all the robots are named Leo, and that they’re also all female.

[ LionsBot ]

This is less of a dishwashing robot and more of a dishsorting robot, but we’ll forgive it because it doesn’t drop a single dish.

[ TechMagic ]

Thanks Ryosuke!

A slight warning here that the robot in the following video (which costs something like $180,000) appears “naked” in some scenes, none of which are strictly objectionable, we hope.

Beautifully slim and delicate life-size motion figures are ideal avatars for expressing emotions to customers in various arts, content and businesses. We can provide a system that integrates not only motion figures but all moving devices.

[ Speecys ]

The best way to operate a Husky with a pair of manipulators on it is to become the robot.

[ UT Austin ]

The FlyJacket drone control system from EPFL has been upgraded so that it can yank you around a little bit.

In several fields of human-machine interaction, haptic guidance has proven to be an effective training tool for enhancing user performance. This work presents the results of psychophysical and motor learning studies that were carried out with human participants to assess the effect of cable-driven haptic guidance for a task involving aerial robotic teleoperation. The guidance system was integrated into an exosuit, called the FlyJacket, that was developed to control drones with torso movements. Results for the Just Noticeable Difference (JND) and from the Stevens Power Law suggest that the perception of force on the users’ torso scales linearly with the amplitude of the force exerted through the cables and the perceived force is close to the magnitude of the stimulus. Motor learning studies reveal that this form of haptic guidance improves user performance in training, but this improvement is not retained when participants are evaluated without guidance.
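If the Stevens Power Law reference went by a little fast: it models perceived intensity as `k * stimulus ** a`, so "scales linearly" means the fitted exponent `a` comes out near 1, and "close to the magnitude of the stimulus" means `k` is near 1 too. Here is a minimal sketch of how such an exponent can be estimated with a log-log least-squares fit. The function name and the numbers are made up for illustration; they are not the EPFL data or the authors' analysis code.

```python
import math

def fit_power_law(stimuli, perceived):
    """Fit perceived = k * stimulus**a by linear regression in log-log space.

    Hypothetical helper for illustration only. Returns (k, a).
    """
    xs = [math.log(s) for s in stimuli]
    ys = [math.log(p) for p in perceived]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope of the log-log line is the power-law exponent a.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    a = sxy / sxx
    # Intercept of the log-log line is log(k).
    k = math.exp(mean_y - a * mean_x)
    return k, a

# Made-up example: applied cable forces and the magnitudes users reported.
forces = [1.0, 2.0, 4.0, 8.0]
reported = [1.0, 2.0, 4.0, 8.0]  # perfectly linear perception, by construction
k, a = fit_power_law(forces, reported)
print(f"k = {k:.2f}, a = {a:.2f}")
```

An exponent well below 1 would mean strong forces feel compressed, and one well above 1 would mean they feel exaggerated.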

[ EPFL ]

The SAND Challenge is an opportunity for small businesses to compete in an autonomous unmanned aerial vehicle (UAV) competition to help NASA address safety-critical risks associated with flying UAVs in the national airspace. Set in a post-natural disaster scenario, SAND will push the envelope of aviation.

[ NASA ]

Legged robots have the potential to traverse diverse and rugged terrain. To find a safe and efficient navigation path and to carefully select individual footholds, it is useful to predict properties of the terrain ahead of the robot. In this work, we propose a method to collect data from robot-terrain interaction and associate it to images, to then train a neural network to predict terrain properties from images.

[ RSL ]

Misty wants to be your new receptionist.

[ Misty Robotics ]

For years, we’ve been pointing out that while new Roombas have lots of great features, older Roombas still do a totally decent job of cleaning your floors. This video is a performance comparison between the newest Roomba (the S9+) and the original 2002 Roomba (!), and the results will surprise you. Or maybe they won’t.

[ Vacuum Wars ]

Lex Fridman from MIT interviews Chris Urmson, who was involved in some of the earliest autonomous vehicle projects, Google’s original self-driving car among them, and is currently CEO of Aurora Innovation.

Chris Urmson was the CTO of the Google Self-Driving Car team, a key engineer and leader behind the Carnegie Mellon autonomous vehicle entries in the DARPA Grand Challenges and the winner of the DARPA Urban Challenge. Today he is the CEO of Aurora Innovation, an autonomous vehicle software company he started with Sterling Anderson, the former director of Tesla Autopilot, and Drew Bagnell, Uber’s former autonomy and perception lead.

[ AI Podcast ]

In this week’s episode of Robots in Depth, Per speaks with Lael Odhner from RightHand Robotics.

Lael Odhner is a co-founder of RightHand Robotics, which is developing a gripper that combines control with soft, compliant parts to grasp objects better. Their work focuses on grasping and manipulating everyday human objects in everyday environments. This mimics how human hands combine control and flexibility to grasp objects with great dexterity.

The combination of control and compliance makes the RightHand Robotics gripper very lightweight and affordable. The compliance makes it easier to grasp objects of unknown shape and differs from the way industrial robots usually grip. The compliance also helps in a more unstructured environment where contact with the object and its surroundings cannot be exactly predicted.

[ RightHand Robotics ] via [ Robots in Depth ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.

Erico Guizzo is the director of digital innovation at IEEE Spectrum, and cofounder of the IEEE Robots Guide, an award-winning interactive site about robotics. He oversees the operation, integration, and new feature development for all digital properties and platforms, including the Spectrum website, newsletters, CMS, editorial workflow systems, and analytics and AI tools. An IEEE Member, he is an electrical engineer by training and has a master’s degree in science writing from MIT.