A 2D cousin of flash memory is not only roughly 5,000 times faster, but can also store multiple bits of data in a single device rather than just a zero or a one, a new study finds.

Flash drives, hard disks, magnetic tape and other forms of non-volatile memory help store data even after the power is removed. One key weakness of these devices is that they are often slow, typically requiring at least hundreds of microseconds to write data, a few orders of magnitude longer than their volatile counterparts.

Now researchers have developed non-volatile memory that takes only nanoseconds to write data. This makes it thousands of times faster than commercial flash memory and roughly as speedy as the dynamic RAM found in most computers. They detailed their findings online this month in the journal Nature Nanotechnology.

The new device is made of layers of atomically thin 2D materials. Previous research found that when two or more atomically thin layers of different materials are placed on top of each other to form so-called heterostructures, novel hybrid properties can emerge. These layers are typically held together by weak electric forces known as van der Waals interactions, the same forces that often make adhesive tapes sticky.

Scientists at the Chinese Academy of Sciences’ Institute of Physics in Beijing and their colleagues noted that silicon-based memory is ultimately limited in speed because of unavoidable defects on ultra-thin silicon films that degrade performance. They reasoned that atomically flat van der Waals heterostructures could avoid such problems.

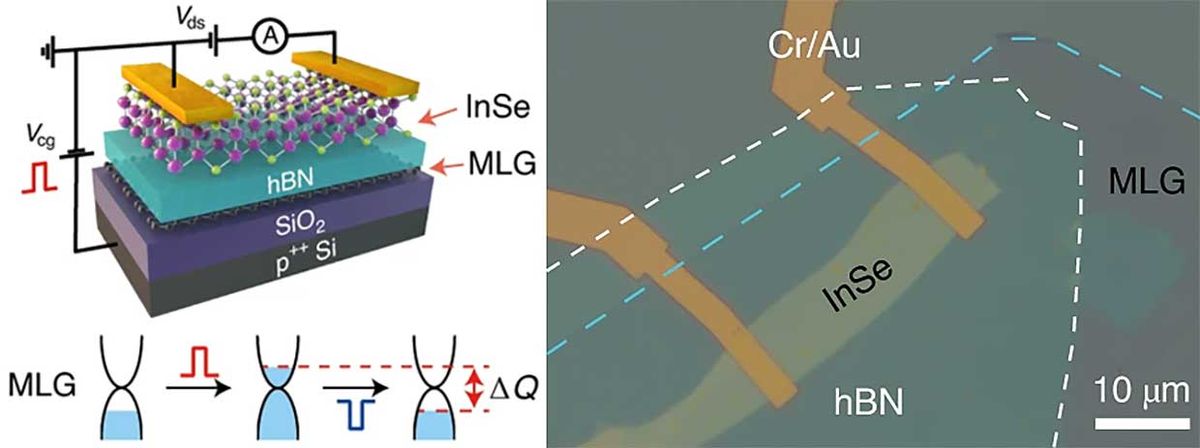

The researchers fabricated a van der Waals heterostructure consisting of an indium selenide semiconducting layer, a hexagonal boron nitride insulating layer, and multiple electrically conductive graphene layers sitting on top of a wafer of silicon dioxide and silicon. A voltage pulse lasting only 21 nanoseconds can inject electric charge into graphene to write or erase data. These pulses are roughly as strong as those used to write and erase in commercial flash memory.
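The headline speedup can be checked with back-of-the-envelope arithmetic. The sketch below is only an illustration: the 21-nanosecond pulse comes from the study as reported here, while the 100-microsecond flash write time is an assumed round figure standing in for "hundreds of microseconds," and the names are hypothetical.

```python
# Rough speedup estimate; a sketch, not a benchmark.
FLASH_WRITE_S = 100e-6  # ASSUMPTION: representative flash write time (100 microseconds)
PULSE_S = 21e-9         # voltage pulse length reported for the new device (21 nanoseconds)


def speedup(slow_s: float, fast_s: float) -> float:
    """Return how many times shorter fast_s is than slow_s."""
    if fast_s <= 0:
        raise ValueError("fast_s must be positive")
    return slow_s / fast_s


ratio = speedup(FLASH_WRITE_S, PULSE_S)
print(f"Estimated speedup: about {ratio:,.0f}x")
```

With these inputs the ratio lands in the few-thousand range, consistent with the "roughly 5,000 times" figure; a slower assumed flash write time would push it higher.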

Besides speed, a key feature of this new memory is the possibility of multi-bit storage. A conventional memory device stores a single bit of data, either a zero or a one, by switching between, say, a highly electrically conductive state and a less electrically conductive state. The researchers note that their new device could theoretically store multiple bits of data using multiple electric states, each written and erased using a different sequence of voltage pulses.
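The link between distinguishable states and stored bits is simple counting: a device with 2^n reliably distinguishable states can hold n bits. The sketch below illustrates that relationship only. The function names are hypothetical, and the state counts are examples, not figures from the study.

```python
# Counting bits per device from the number of distinguishable states; an illustration only.


def bits_per_device(states: int) -> int:
    """Whole bits a device can hold given `states` distinguishable electric states."""
    if states < 2:
        raise ValueError("need at least two states to store any data")
    # floor(log2(states)) computed with integers to avoid floating-point rounding
    return states.bit_length() - 1


def states_needed(bits: int) -> int:
    """Distinguishable states required to store `bits` bits in one device."""
    if bits < 1:
        raise ValueError("bits must be at least 1")
    return 2 ** bits


# Example state counts (hypothetical, not measured values)
for n in (2, 4, 8, 16):
    print(f"{n} states -> {bits_per_device(n)} bit(s)")
```

Each extra bit doubles the number of states the device has to keep apart, which is why multi-bit storage depends on how cleanly those states can be written and read.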

“Memory can become much more powerful when a single device can store more bits of information—it helps build denser and denser memory architectures,” says electrical engineer Deep Jariwala at the University of Pennsylvania, who did not take part in this research.

The scientists projected that their devices could store data for 10 years. They noted that another Chinese group recently achieved similar results with a van der Waals heterostructure made of molybdenum disulfide, hexagonal boron nitride and multi-layer graphene.

A major question now is whether researchers can make such devices on commercial scales. “This is the Achilles heel of most of these devices,” Jariwala says. “When it comes to real applications, scalability and the ability to integrate these devices on top of silicon processors are really challenging issues.”

Charles Q. Choi is a science reporter who contributes regularly to IEEE Spectrum. He has written for Scientific American, The New York Times, Wired, and Science, among others.