The U.S. electrical grid has been plagued by ever more and ever worse blackouts over the past 15 years. In an average year, outages total 92 minutes in the Midwest and 214 minutes in the Northeast. Japan, by contrast, averages only 4 minutes of interrupted service each year. (The outage data exclude interruptions caused by extraordinary events such as fires or extreme weather.)

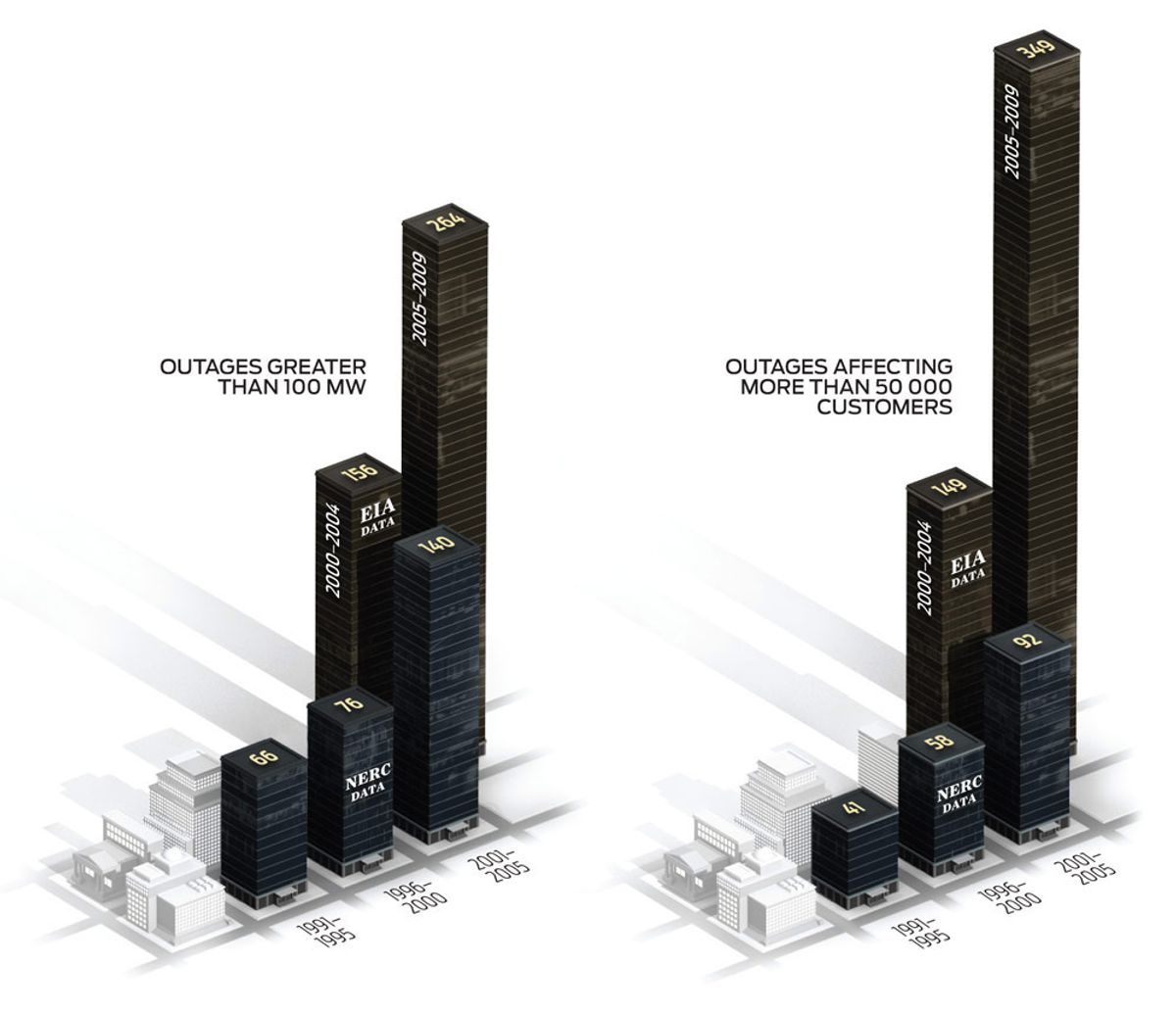

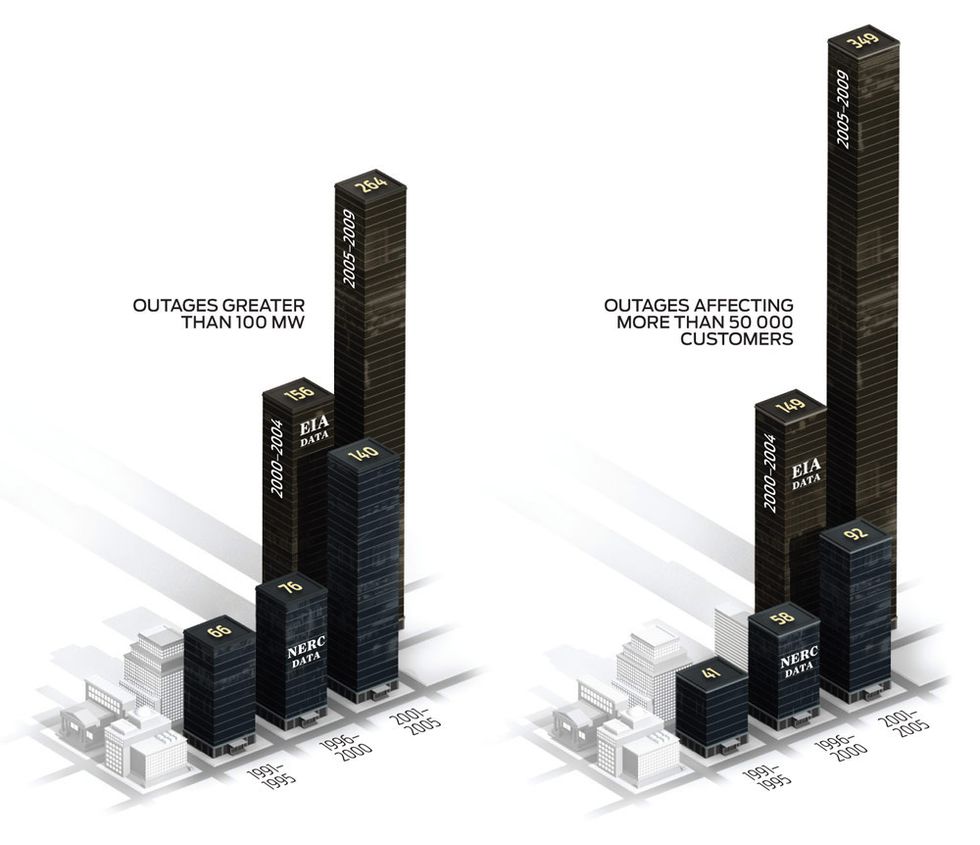

I analyzed two sets of data, one from the U.S. Department of Energy's Energy Information Administration (EIA) and the other from the North American Electric Reliability Corp. (NERC). Generally, the EIA database contains more events, and the NERC database gives more information about the events. In both sets, each five-year period was worse than the preceding one.

What happened? Since 1995, the amortization and depreciation rate has exceeded utility construction expenditures. In other words, for the past 15 years, utilities have harvested more than they have planted. The result is an increasingly stressed grid. Indeed, grid operators should be praised for keeping the lights on while managing a system with diminished shock absorbers.

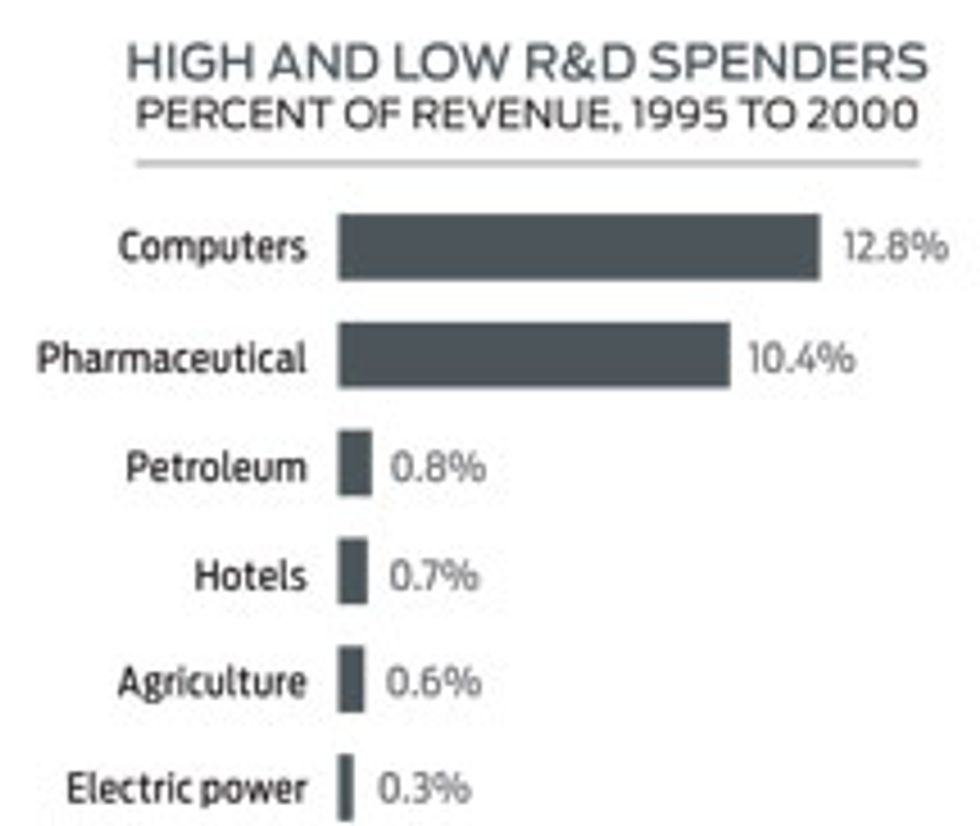

R&D spending for the electric power sector dropped 74 percent, from a high in 1993 of US $741 million to $193 million in 2000. R&D represented a meager 0.3 percent of revenue in the six-year period from 1995 to 2000, before declining even further to 0.17 percent from 2001 to 2006. Even the hotel industry put more into R&D.
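The size of that decline is easy to check from the figures quoted above. The following is a minimal sketch, not part of the original analysis; the function name `percent_drop` is purely illustrative.

```python
def percent_drop(start: float, end: float) -> float:
    """Return the percentage decline from start to end."""
    return (start - end) / start * 100


# R&D spending figures quoted in the text, in millions of US dollars.
rd_1993 = 741.0
rd_2000 = 193.0

# R&D as a share of revenue, in percent, for the two periods quoted in the text.
share_1995_2000 = 0.30
share_2001_2006 = 0.17

spending_drop = percent_drop(rd_1993, rd_2000)
share_drop = percent_drop(share_1995_2000, share_2001_2006)

print(f"R&D spending fell {spending_drop:.0f} percent from 1993 to 2000")
print(f"R&D share of revenue fell {share_drop:.0f} percent between the two periods")
```

The first figure reproduces the 74 percent drop cited above. The second is a derived number that the article does not state directly, so treat it as a rough illustration only.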

Our first strategy for greater reliability should be to expand and strengthen the transmission backbone (at a total cost of about $82 billion), augmented with highly efficient local microgrids that combine heat, power, and storage systems. In the long run, we need a smart grid with self-healing capabilities (total cost, $165 to $170 billion).

Investing in the grid would, to a great extent, pay for itself. You'd avoid stupendous outage costs, about $49 billion per year, and get 12 to 18 percent annual reductions in emissions. Improved efficiency would cut energy usage, saving an additional $20.4 billion annually.
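To get a feel for how quickly the investment could pay for itself, here is a back-of-the-envelope sketch using only the figures quoted above. It rests on several assumptions that are mine, not the article's: that the full $49 billion and $20.4 billion in savings arrive every year once the smart grid is built, that the $165 to $170 billion smart-grid figure is the relevant cost, and that the time value of money can be ignored. The function name `simple_payback_years` is illustrative.

```python
def simple_payback_years(cost: float, annual_savings: float) -> float:
    """Years needed for undiscounted annual savings to equal the up-front cost."""
    if annual_savings <= 0:
        raise ValueError("annual_savings must be positive")
    return cost / annual_savings


# Figures quoted in the text, in billions of US dollars.
outage_savings = 49.0       # avoided outage costs per year
efficiency_savings = 20.4   # savings from reduced energy usage per year
smart_grid_cost_low = 165.0
smart_grid_cost_high = 170.0

# Assumption: both savings streams are realized in full each year.
annual_savings = outage_savings + efficiency_savings

payback_low = simple_payback_years(smart_grid_cost_low, annual_savings)
payback_high = simple_payback_years(smart_grid_cost_high, annual_savings)

print(f"Combined annual savings: ${annual_savings:.1f} billion")
print(f"Simple payback: {payback_low:.2f} to {payback_high:.2f} years")
```

Under those assumptions the payback period comes out to between two and three years. A real estimate would be longer, since savings would ramp up gradually as the grid is built out and future savings would need to be discounted.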

This article was updated on 6 January 2011.

This article was modified on 24 January 2011.