A new way of building optical circuits on ordinary computer chips could speed up communications between microprocessors by orders of magnitude while reducing waste heat, increasing the processing power of laptops and smartphones.

“What we’re talking about is integrating optics with electronics on the same chip,” says Milos Popovic, a professor of electrical and computer engineering at Boston University. The method entails adding “a handful” of processing steps to the standard way of making microprocessors in bulk silicon and should not add much time or cost to the manufacturing process, Popovic says.

He, along with colleagues from the Massachusetts Institute of Technology; University of California, Berkeley; University of Colorado, Boulder; and SUNY Polytechnic Institute, Albany, NY, described the method in a recent paper in Nature.

Their approach adds a thin layer of polycrystalline silicon on top of features already on the chip. Chips already use this material to form transistor gates, but in a form that absorbs too much light to be useful as a waveguide.

To make the material more suitable for photonics, the researchers tweaked the deposition process, adjusting factors such as temperature, to obtain a different crystalline structure. They also deepened the silicon dioxide trenches that already electrically isolate transistors from one another, which keeps light from leaking out of the polycrystalline silicon into the silicon substrate.

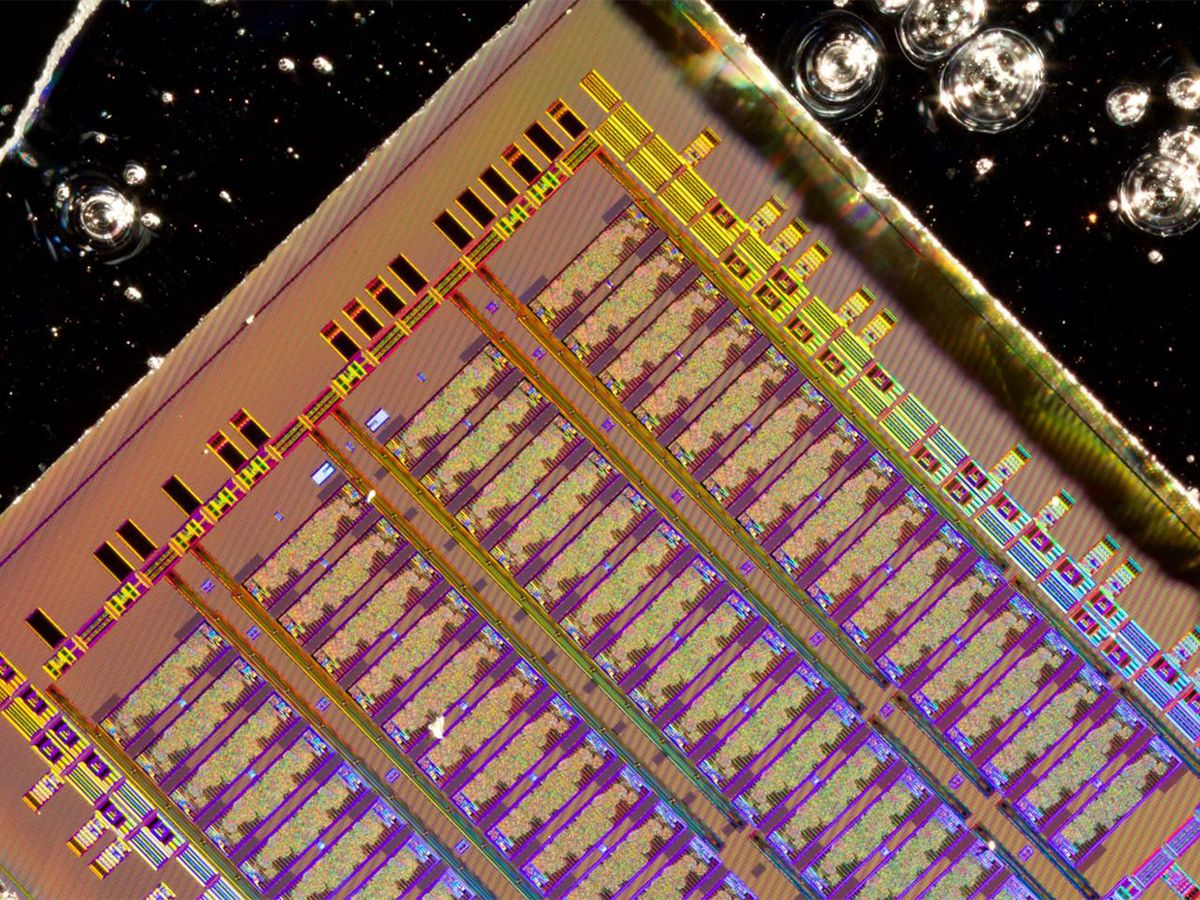

Using the approach, the researchers built chips with all the necessary photonic components (waveguides, microring resonators, vertical grating couplers, high-speed modulators, and avalanche photodetectors) alongside transistors with 65-nm feature sizes. The laser light source would sit off the chip. The photodetectors rely on defects in the material to absorb photons. The chips were built at the 65-nm node because that is what the semiconductor manufacturing research fab at SUNY Albany can produce, but Popovic says it should be easy to apply the same processes to transistors with much smaller features.

Many of the same researchers had come up with a process for integrating photonics on chips in 2015, but it worked only with more expensive silicon-on-insulator chips. The vast majority of chips are made with bulk complementary metal-oxide-semiconductor (CMOS) technology, which the new technique addresses.

All this matters because computer makers increasingly rely on multicore chips; graphics processing units used for gaming and artificial intelligence can contain hundreds of cores. The copper wires that carry data between cores are the major bottleneck for speed, and they also produce a lot of waste heat.

“A single electrical wire can only carry 10 to 100 gigabits per second, and there’s only so many you can put in,” Popovic says. By contrast, splitting the signal across many wavelengths could let a single optical fiber carry 10 to 20 terabits per second. And over the tiny distances between microprocessors, optical losses are essentially zero, so the system needs less power than copper wiring.
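To put Popovic's figures side by side, this short Python sketch divides the quoted optical capacity by the quoted per-wire rate to estimate how many electrical wires one optical link could stand in for. It uses only the numbers quoted in this article and deliberately ignores encoding overhead, wire pitch, and power, so treat it as a back-of-the-envelope comparison rather than a design calculation.

```python
import math

# Ranges quoted by Popovic in the article.
WIRE_GBPS = (10, 100)     # one electrical wire: 10 to 100 Gb/s
OPTICAL_TBPS = (10, 20)   # one wavelength-multiplexed optical link: 10 to 20 Tb/s


def wires_needed(optical_tbps: float, wire_gbps: float) -> int:
    """Number of electrical wires needed to match one optical link's aggregate rate."""
    # Convert Tb/s to Gb/s, then round up to whole wires.
    return math.ceil(optical_tbps * 1000 / wire_gbps)


if __name__ == "__main__":
    for optical in OPTICAL_TBPS:
        for wire in WIRE_GBPS:
            print(f"{optical} Tb/s optical link ~ {wires_needed(optical, wire)} wires at {wire} Gb/s each")
```

At the quoted figures, one optical link matches roughly 100 to 2,000 copper wires, depending on which ends of the two ranges are compared.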

The new method could lead to chips with more processing power, allowing wider use of artificial intelligence techniques for pattern recognition. That could bring the kind of facial recognition used in iPhones to less expensive smartphones, Popovic says, and could also enable low-cost LIDAR sensors for self-driving cars.

Neil Savage is a freelance science and technology writer based in Lowell, Mass., and a frequent contributor to IEEE Spectrum. His topics of interest include photonics, physics, computing, materials science, and semiconductors. His most recent article, “Tiny Satellites Could Distribute Quantum Keys,” describes an experiment in which cryptographic keys were distributed from satellites released from the International Space Station. He serves on the steering committee of New England Science Writers.