Replicating the human sense of touch is complicated: electronic skins need to be flexible, stretchable, and sensitive to temperature, pressure, and texture, and they need to read biological data and provide electronic readouts. Powering such a skin for continuous, real-time use is therefore a big challenge.

To address this, researchers from the University of Glasgow have developed an energy-generating e-skin made out of miniaturized solar cells, without dedicated touch sensors. The solar cells not only generate their own power, with some surplus, but also provide tactile capabilities for touch and proximity sensing. An early-view paper of their findings was published in IEEE Transactions on Robotics.

When exposed to a light source, the solar cells on the e-skin generate energy. If a cell is shadowed by an approaching object, the intensity of the light, and therefore the energy generated, falls, dropping to zero when the cell makes contact with the object, which confirms touch. In proximity mode, the light intensity indicates how far the object is from the cell. “In real time, you can then compare the light intensity…and after calibration find out the distances,” says Ravinder Dahiya of the Bendable Electronics and Sensing Technologies (BEST) Group, James Watt School of Engineering, University of Glasgow, where the study was carried out. For better results in proximity sensing, the team paired the solar cells with infrared LEDs.
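The sensing principle described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the team's implementation: the calibration table, the touch threshold, and the function names `estimate_distance` and `classify` are all hypothetical, and the numbers are made up for the example rather than taken from the paper. A cell's output is normalized to its unshadowed value, a near-zero reading is treated as contact, and anything else is mapped to a distance by linear interpolation between calibration points.

```python
from bisect import bisect_left

# Hypothetical calibration table: (normalized cell output, distance in cm).
# These values are illustrative only, not measurements from the paper.
CALIBRATION = [
    (0.00, 0.0),
    (0.25, 1.0),
    (0.50, 2.5),
    (0.75, 5.0),
    (1.00, 10.0),
]

# Assumed threshold: an output this close to zero counts as contact.
TOUCH_THRESHOLD = 0.02


def estimate_distance(output, table=CALIBRATION):
    """Linearly interpolate a distance from a normalized cell output in [0, 1]."""
    outputs = [o for o, _ in table]
    # Clamp readings that fall outside the calibrated range.
    if output <= outputs[0]:
        return table[0][1]
    if output >= outputs[-1]:
        return table[-1][1]
    # Find the two calibration points that bracket the reading.
    i = bisect_left(outputs, output)
    (o0, d0), (o1, d1) = table[i - 1], table[i]
    return d0 + (d1 - d0) * (output - o0) / (o1 - o0)


def classify(output):
    """Return ("touch", 0.0) for a fully shadowed cell, else ("proximity", distance)."""
    if output <= TOUCH_THRESHOLD:
        return ("touch", 0.0)
    return ("proximity", estimate_distance(output))


if __name__ == "__main__":
    for reading in (0.0, 0.375, 0.9):
        print(reading, classify(reading))
```

A real calibration curve would have to be measured for a given light source and object, and it need not be linear between points; the interpolation here is just the simplest choice for a sketch.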

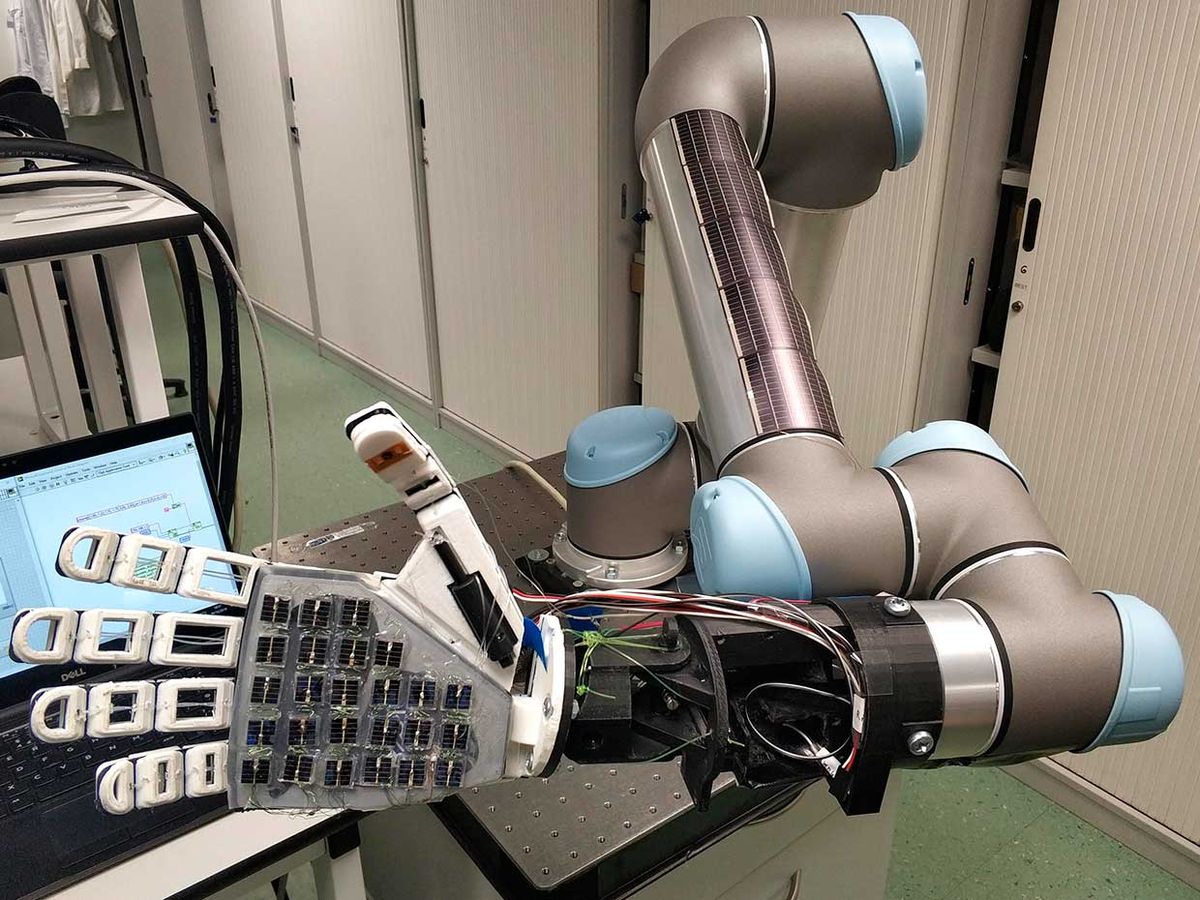

To demonstrate their concept, the researchers wrapped a generic 3D-printed robotic hand in their solar skin and recorded it interacting with its environment. The proof-of-concept tests showed an energy surplus of 383.3 mW from the palm of the robotic hand. “The eSkin could generate more than 100 W if present over the whole body area,” they reported in their paper.

“If you look at autonomous, battery-powered robots, putting an electronic skin [that] is consuming energy is a big problem because then it leads to reduced operational time,” says Dahiya. “On the other hand, if you have a skin which generates energy, then…it improves the operational time because you can continue to charge [during operation].” In essence, he says, they turned a challenge (how to power the large surface area of the skin) into an opportunity by making that surface an energy-generating resource.

Dahiya envisages numerous applications for BEST’s innovative e-skin beyond the obvious use in robotics, given its material-integrated sensing capabilities. For instance, in prosthetics: “[As] we are using [a] solar cell as a touch sensor itself…we are also [making it] less bulkier than other electronic skins.” This, he adds, will help create prosthetics of optimal weight and size, making them easier for users. “If you look at electronic skin research, the real action starts after it makes contact… Solar skin is a step ahead, because it will start to work when the object is approaching…[and] have more time to prepare for action.” This could effectively reduce the time lag that is often seen in brain–computer interfaces.

There are also possibilities in the automation sector, particularly in electric and interactive vehicles. A car covered with solar e-skin, because of its proximity-sensing capabilities, would be able to “see” an approaching obstacle or a person. It isn’t “seeing” in the biological sense, Dahiya clarifies, but from the point of view of a machine. The skin can be integrated with other objects, not just cars, for a variety of uses. “Gestures can be recognized as well…[which] could be used for gesture-based control…in gaming or in other sectors.”

In the lab, tests were conducted with a single source of white light at 650 lux, but Dahiya feels there are interesting possibilities if they could work with multiple light sources that the e-skin could differentiate between. “We are exploring different AI techniques [for that],” he says, “processing the data in an innovative way [so] that we can identify the directions of the light sources as well as the object.”

The BEST team’s achievement brings us closer to a flexible, self-powered, cost-effective electronic skin that can touch as well as “see.” At the moment, however, there are still some challenges. One of them is flexibility. In their prototype, they used commercial solar cells made of amorphous silicon, each 1 cm × 1 cm. “They are not flexible, but they are integrated on a flexible substrate,” Dahiya says. “We are currently exploring nanowire-based solar cells…[with which] we hope to achieve good performance in terms of energy as well as sensing functionality.” Another shortcoming is what Dahiya calls “the integration challenge”: how to make the solar skin work with different materials.

Payal Dhar (she/they) is a freelance journalist on science, technology, and society. They write about AI, cybersecurity, surveillance, space, online communities, games, and any shiny new technology that catches their eye. You can find and DM Payal on Twitter (@payaldhar).