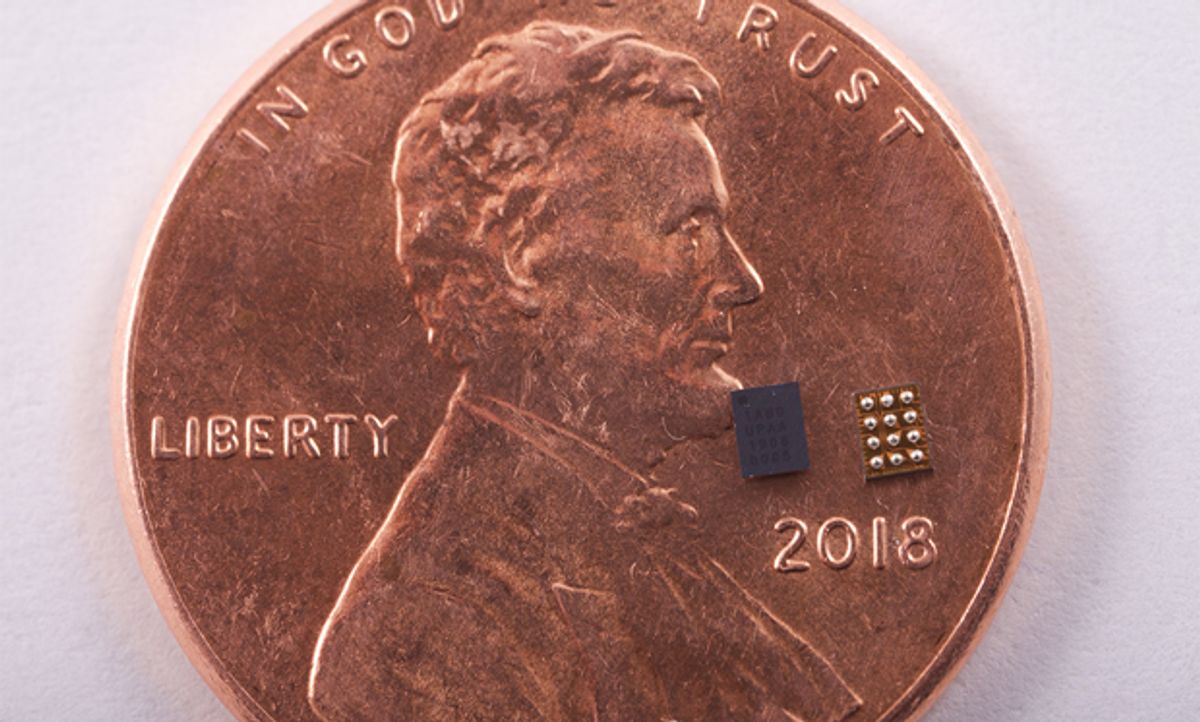

Syntiant’s custom AI chip has passed Amazon’s Alexa qualification, the Irvine, Calif., startup announced on Monday. The NDP100 series of chips can recognize up to 63 words or other sensor patterns while consuming just 150 microwatts, a 200-fold improvement over what a typical microcontroller could offer, the company says. Its passing Amazon’s stringent signal-to-noise tests means that Bluetooth earphones and other devices running on small batteries will be able to listen for wake words and other commands that bring them to full power.
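
Taken at face value, the company's two figures imply a comparison point of roughly 30 milliwatts for the microcontroller. The short Python sketch below works through that arithmetic and what it could mean for listening time. The battery capacity is a hypothetical value chosen only for illustration, and the calculation ignores every other load on the battery, so it is a back-of-the-envelope estimate rather than a product specification.

```python
# Figures quoted by Syntiant in the article.
CHIP_POWER_W = 150e-6        # 150 microwatts
IMPROVEMENT_FACTOR = 200     # claimed advantage over a typical microcontroller

# Assumption (not from the article): a small earbud-class cell,
# about 50 mAh at 3.7 V, or roughly 0.185 watt-hours.
ASSUMED_BATTERY_WH = 0.185


def implied_mcu_power_w():
    """Power a microcontroller would draw if the 200-fold claim holds."""
    return CHIP_POWER_W * IMPROVEMENT_FACTOR


def listening_hours(power_w, battery_wh=ASSUMED_BATTERY_WH):
    """Hours of always-on listening if listening were the only load."""
    return battery_wh / power_w


if __name__ == "__main__":
    mcu_w = implied_mcu_power_w()
    print(f"Implied microcontroller power: {mcu_w * 1000:.0f} mW")
    print(f"Listening time at chip power:  {listening_hours(CHIP_POWER_W):.0f} h")
    print(f"Listening time at MCU power:   {listening_hours(mcu_w):.1f} h")
```

Under these assumptions, the gap is the difference between hours and weeks of standby listening, which is the point Busch makes below.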

“This allows the Amazon Echo ecosystem to expand into most battery-powered devices, which it couldn’t previously do,” says Syntiant CEO Kurt Busch. “You really couldn’t do always-on listening with small battery-powered devices, and now you can.”

Sanjay Voleti, senior manager of device enablement with Alexa Voice Service, was impressed with Syntiant’s solution. “We’re excited to see developers begin using this technology in their devices and deliver new Alexa experiences for customers,” he said in a press release.

The chip connects directly to a digital microphone or other sensor and triggers an “interrupt” line connecting to the larger—usually sleeping—system. Once that system’s yawned and stretched, it can then interrogate the NDP100 to determine what wake word or command it heard. The chip also keeps a three-second audio buffer in case the system needs to catch up on what was said during its wakeup routine.
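
The wake-up sequence described above can be sketched as a small Python simulation. This is a toy model under stated assumptions, not Syntiant's interface: the class and method names are hypothetical, the 16-kilohertz sample rate is assumed, and the `heard` argument stands in for the result the chip's neural network would produce. Only the three-second buffer and the interrupt-then-interrogate order come from the description.

```python
from collections import deque

SAMPLE_RATE_HZ = 16_000   # assumption: a common rate for speech audio
BUFFER_SECONDS = 3        # from the article: a three-second audio buffer


class AlwaysOnDetector:
    """Hypothetical stand-in for the always-listening chip."""

    def __init__(self, wake_words, interrupt_line):
        self.wake_words = set(wake_words)
        self.interrupt_line = interrupt_line  # callable that wakes the host
        # A bounded deque acts as a ring buffer: old samples fall off the front.
        self.audio = deque(maxlen=SAMPLE_RATE_HZ * BUFFER_SECONDS)
        self.last_match = None

    def feed(self, samples, heard=None):
        """Store microphone samples; raise the interrupt on a recognized word."""
        self.audio.extend(samples)
        if heard in self.wake_words:
            self.last_match = heard
            self.interrupt_line()

    def read_match(self):
        return self.last_match

    def read_buffer(self):
        return list(self.audio)


class HostSystem:
    """Hypothetical larger system that sleeps until interrupted."""

    def __init__(self):
        self.awake = False
        self.chip = None
        self.log = []

    def handle_interrupt(self):
        self.awake = True
        word = self.chip.read_match()       # ask the chip what it heard
        backlog = self.chip.read_buffer()   # catch up on recent audio
        self.log.append((word, len(backlog)))


def run_demo():
    host = HostSystem()
    chip = AlwaysOnDetector(["alexa"], host.handle_interrupt)
    host.chip = chip
    chip.feed([0] * (SAMPLE_RATE_HZ * 4))   # four seconds of background noise
    assert not host.awake                   # nothing recognized, host still asleep
    chip.feed([1] * 160, heard="alexa")     # wake word arrives
    return host.log
```

Calling `run_demo()` returns a single log entry naming the wake word and the number of buffered samples, which never exceeds three seconds' worth even though four seconds of audio were fed in first.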

Like most AI ASICs, the chip only performs the inferencing step of deep learning. The training happens in the cloud using the common TensorFlow software library. One of the chip’s biggest advantages for developers, according to Busch, is that it doesn’t require any compiling or optimization step between the trained network and what goes on the chip. “It’s fair to say that the guts of our chip look like the TensorFlow graph,” says Busch.

Busch says Syntiant is now working on a larger, more capable version of the chip to expand into more markets. It is also developing a version that uses analog computation and embedded flash memory to do AI computations with less power.

Samuel K. Moore is the senior editor at IEEE Spectrum in charge of semiconductors coverage. An IEEE member, he has a bachelor's degree in biomedical engineering from Brown University and a master's degree in journalism from New York University.