SnotBot Drone Swoops Over Blowholes to Track Whale Health

The SnotBot project uses drones, data, and deep learning to tell us about the health of whales and the oceans

The SnotBot drone passes over a blue whale at the moment of exhalation.

It’s a beautiful morning on the waters of Alaska’s Peril Strait—clear, calm, silent, and just a little cool. A small but seaworthy research vessel glides through gentle swells. Suddenly, in the distance, a humpback whale the size of a school bus explodes out of the water. Enormous bursts of air and water jet out of its blowholes like a fire hose, the noise echoing between the banks.

“Blow at eleven o’clock!” cries the lookout, and the small boat swarms with activity. A crew member wearing a helmet and cut-proof gloves raises a large quadcopter drone over his head, as if offering it to the sun, which glints off the half dozen plastic petri dishes velcroed to the drone.

Further back in the boat, the drone pilot calls, “Starting engines in 3, 2, 1! Takeoff in 3, 2, 1!” The drone’s engines buzz as it zooms 20 meters into the air and then darts off toward where the whale just dipped below the water’s surface. With luck, the whale will spout again nearby, and the drone will be there when it does.

The drone is a modified DJI Inspire 2. About the size of a toaster oven, it’s generally sold to photographers, cinematographers, and well-heeled hobbyists, but this particular drone is on a serious mission: to monitor the health of whales, the ocean, and by extension, the planet. The petri dishes it carries collect the exhaled breath condensate of a whale—a.k.a. snot—which holds valuable information about the creature’s health, diet, and other qualities. Hence the drone’s name: the Parley SnotBot.

The flyer comes standard with a forward-facing camera for navigation, collision-avoidance detectors, ultrasonic and barometric sensors to track altitude, and a GPS locator. With the addition of a high-definition video camera on a stabilized gimbal that can be directed independently, it can stream 1080p video live while simultaneously storing the video on a microSD card as well as high-resolution images on a 1-terabyte solid-state drive. Given that both cameras run during the entire 26 minutes of a typical flight, that’s a lot of data. More on what we are doing with that data later, but first, a bit of SnotBot history.

Petri dishes aboard SnotBot collect whale exhalate for later analysis.

Iain Kerr was one of the early pioneers in using drones as a platform to collect and analyze whale exhalation. He’s the CEO of Ocean Alliance, in Gloucester, Mass., a group dedicated to protecting whales and the world’s oceans. Whale biologists know that whale snot contains an enormous amount of biological information, including DNA, hormones, and microorganisms. Scientists can use that information to determine a whale’s health, sex, and pregnancy status, and details about its genetics and microbiome. The traditional and most often used technique for collecting that kind of information is to zoom past a surfacing whale in a boat and shoot it with a specially designed crossbow to capture a small core sample of skin and blubber. The process is stressful for both researchers and whales.

Researchers had demonstrated that whale snot can be a viable replacement for blubber samples, but collection involved reaching out over whales using long, awkward poles—difficult, to say the least. The development of small but powerful commercial drones inspired Kerr to launch an exploratory research project in 2015 to go after whale snot with drones. He received the first U.S. National Oceanic and Atmospheric Administration (NOAA) research permit for collecting whale snot in U.S. waters. Since then, there have been dozens of SnotBot missions around the world, in the waters off Alaska, Gabon, Mexico, and other places where whales like to congregate, and the idea has spread to other teams around the globe.

The SnotBot design continues to evolve. The earliest versions tried to capture snot by trailing gauzy cloth below the drone. The hanging cloth turned out to be difficult to work with, however, and the material itself interfered with some of the lab tests, so the researchers scrapped that method. The developers didn’t consider using petri dishes at first, because they assumed that if the drone flew directly into a whale’s spout, the rotor wash would interfere with collection. Eventually, though, they tried the petri dishes and were happy to discover that the rotors’ downdraft improved rather than hindered collection.

For each mission, the collection goals have been slightly different, and the team tweaks the design of the craft accordingly. On one mission, the focus might be to survey an area, getting samples from as many whales as possible. The next mission might be a “focal follow,” in which the team tracks one whale over a period of hours or days, taking multiple samples so that they can understand things like how a whale’s hormone levels change throughout the day, either from natural processes or as a response to environmental factors.

Collecting and analyzing snot is certainly an important way to assess whale health, but the SnotBot team suspected that the drone could do more. In early 2017, staffers from Parley for the Oceans, a nonprofit environmental group that was working with Ocean Alliance on the SnotBot project, contacted one of us (Willke) to find out just how much more.

Willke is a machine-learning and artificial-intelligence researcher who leads Intel’s Brain-Inspired Computing Lab, in Hillsboro, Ore. He immediately saw ways of expanding the information gathered by SnotBot. Willke enlisted two researchers in his lab—coauthor Keller and Javier Turek—and the three of us got to work on enhancing SnotBot’s mission.

The quadcopters used in the SnotBot project carry high-quality cameras with advanced auto-stabilization features. The drone pilot relies on the high-definition video being streamed back to the boat to fly the aircraft and collect the snot. We knew that these same video streams could simultaneously feed into a computer on the boat and be processed in real time. Could that information help assess whale health?

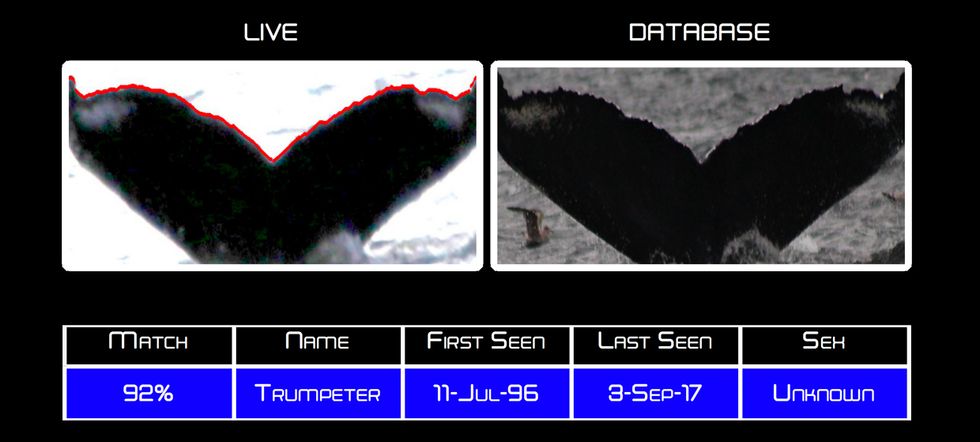

Working with Ocean Alliance scientists, we first came up with a tool that analyzes a photo of a whale’s tail flukes and, using a database of whale photographs collected by the Alaska Whale Foundation, identifies individual whales by the shape of the fluke and its black and white patterns. Identifying each whale allows researchers to correlate snot samples over time.

Such identification can also help whale biologists cope with tricky regulatory issues. For example, there are at least two breeding populations of humpback whales that migrate to Alaska. Most come from Hawaii, but a smaller group comes from Mexico. The Mexican population is under greater stress at the moment, and so NOAA requests that researchers focus on the healthier, Hawaiian whales and leave the Mexican whales alone as much as possible. However, both populations are exactly the same species and thus indistinguishable from each other as a group. The ability to recognize individual whales allows researchers to determine whether a whale has previously been spotted in Mexico or Hawaii, so that they can act appropriately to comply with the regulation.

We also developed software that analyzes the shape of a whale from an overhead shot, taken about 25 meters directly above the whale. Since a skinny whale is often a sick one or one that hasn’t been getting enough to eat, even that simple metric can be a powerful indicator of well-being.

The biggest challenge in developing these tools was what’s called data starvation—there just wasn’t enough data. A standard deep-learning algorithm would look at a huge set of images and then figure out and extract the key distinguishing features of a whale. In the case of the fluke-ID tool, there were only a few pictures of each whale in the catalog, and these were often too low quality to be useful. For overhead health monitoring, there were likewise too few photos or videos of whales shot with the right camera, from the right angle, under the right conditions.

To address these problems, our team turned to classic computer-vision techniques to extract what we considered the most useful data. For example, we used edge-detection algorithms to find and measure the trailing edge of a fluke, then obtained the grayscale values of all the pixels in a line extending from the center notch of the fluke to the outer tips. We trained a small but effective neural network on this data alone. If more data had been available, a deep-learning approach would have worked better than our approach did, but we had to work with the limited data we had.
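The grayscale-sampling step can be sketched in a few lines of Python. This is a minimal illustration, not the project's actual code: it assumes the notch and tip pixel coordinates have already been located (for example, by an edge detector), and the function names `sample_line_profile` and `normalize` are hypothetical.

```python
def sample_line_profile(image, start, end, n_samples=32):
    """Sample grayscale values along the straight segment from start to end.

    image: 2-D list of rows of grayscale values, indexed image[row][col].
    start, end: (row, col) pixel coordinates, e.g. fluke notch and fluke tip
                (assumed to be known already; finding them is not shown here).
    """
    if n_samples < 2:
        raise ValueError("n_samples must be at least 2")
    (r0, c0), (r1, c1) = start, end
    profile = []
    for i in range(n_samples):
        t = i / (n_samples - 1)          # 0.0 at the notch, 1.0 at the tip
        r = round(r0 + t * (r1 - r0))    # nearest-pixel sampling
        c = round(c0 + t * (c1 - c0))
        profile.append(image[r][c])
    return profile


def normalize(profile):
    """Rescale a profile to the range 0..1 so lighting differences matter less."""
    lo, hi = min(profile), max(profile)
    if hi == lo:
        return [0.0 for _ in profile]
    return [(v - lo) / (hi - lo) for v in profile]


# Toy 5x5 "image" whose pixel value encodes its position (row * 10 + col).
toy_image = [[r * 10 + c for c in range(5)] for r in range(5)]
toy_profile = sample_line_profile(toy_image, (0, 0), (4, 4), n_samples=5)
toy_features = normalize(toy_profile)
```

A fixed-length vector like `toy_features` is the kind of compact input a small neural network can be trained on when only a handful of photos exist for each animal.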

The latest model of SnotBot flies into action, with custom mounting points for petri dishes and its new paint scheme, designed to camouflage it against a cloud-studded sky.

New discoveries in whale biology have already come from our tools. Besides the ability to distinguish between the Mexican and Hawaiian whale populations, researchers have discovered they can identify whales from their calls, even when the calls were recorded many years previously.

That latter discovery came during the summer of 2017, when we joined Fred Sharpe, an Alaska Whale Foundation researcher and founding board member, to study teams of whales that worked together to feed. While observing a small group of humpback whales, the boat’s underwater microphone picked up a whale feeding call. Sharpe thought it sounded familiar, and so he consulted his database of whale vocalizations. He found a similar call from a whale called Trumpeter that he had recorded some 20 years ago. But was it really the same whale? There was no way to know for sure from the whale call.

Then a whale surfaced briefly and dove again, letting us capture an image of its flukes. Our software found a match: The flukes indeed belonged to Trumpeter. That told the researchers that adult whale feeding calls likely remain stable for decades, maybe even for life. This insight gave researchers another tool for identifying whales in the wild and improving our understanding of vocal signatures in humpback whales.

Meanwhile, whale-ID tools are getting better all the time. The original SnotBot algorithm that we developed for whale identification has been essentially supplanted by more capable services. One new algorithm relies on the curvature of the trailing edge of the fluke for identification.

SnotBot’s real contribution, it turns out, is in health monitoring. Our shape-analysis tool has been evolving and, in combination with the spray samples, is giving researchers a comprehensive picture of an individual whale’s health. We call this tool Morphometer. We recently teamed up with Kelly Cates, a Ph.D. candidate in marine biology at the University of Alaska Fairbanks, and Fredrik Christiansen, an assistant professor and whale expert at the Aarhus Institute of Advanced Studies, in Denmark, to make the technology more powerful and also easier to use.

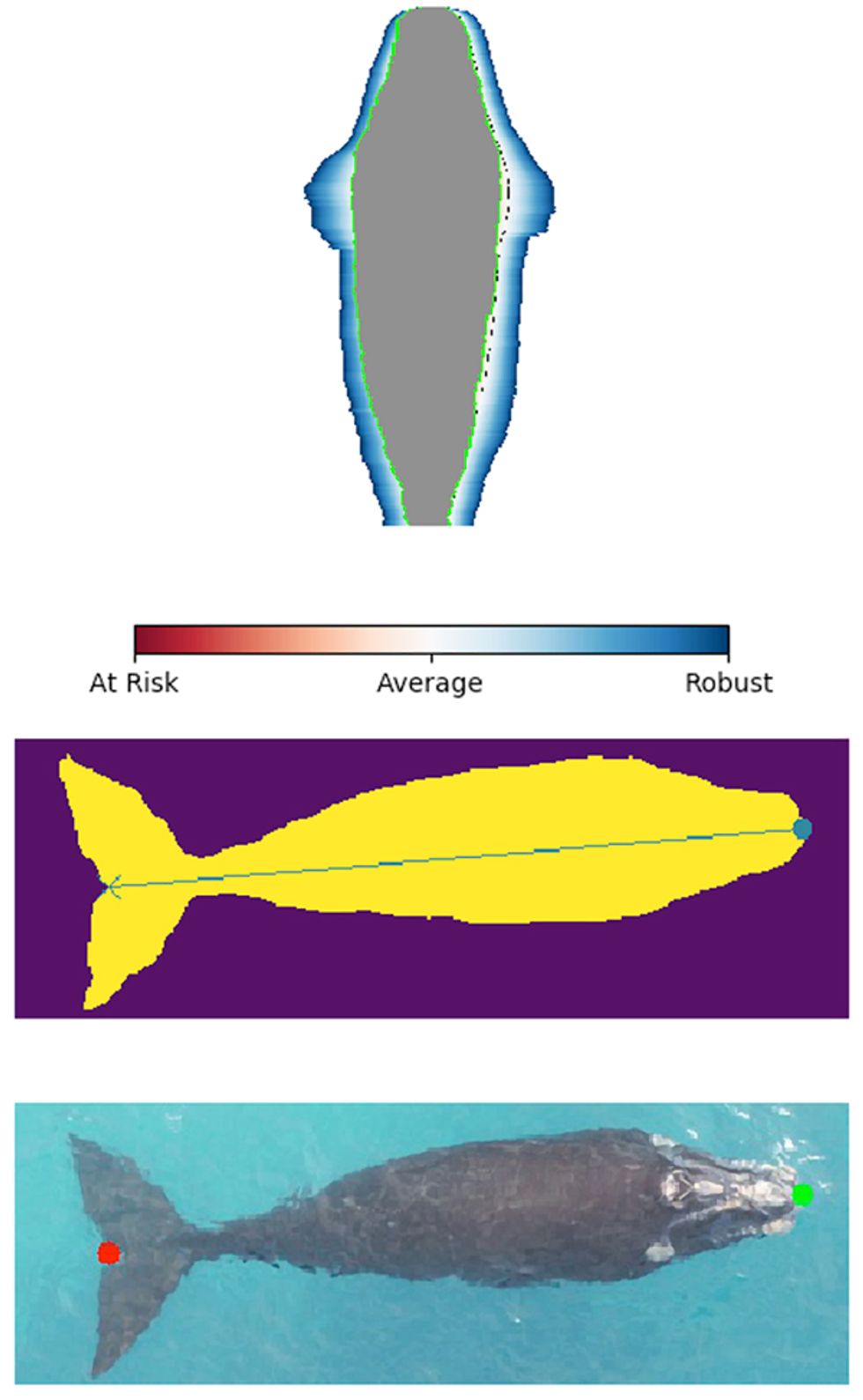

Here’s how it works. Researchers who make measurements and assessments of baleen whales—the type of whales that filter-feed—have typically used a technique developed by Christiansen in 2016. (So far the effort has involved humpback and southern right whales, but the process could work for any kind of baleen whale.) The researchers start with photographic prints or images on a computer and hand-measure the body widths of whales in the images at intervals of 5 percent of the overall length from the snout to the notch of the tail flukes. They then feed this set of measurements to software that calculates an estimate of the whale’s volume. From the relationship between the body length and volume, they can determine if an individual whale is relatively fatter or thinner compared with population norms, taking into account the significant but normal changes in girth that occur as whales accumulate energy reserves during the feeding season and then use those energy stores for migration and during the breeding season.
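To make the width-to-volume step concrete, here is a simplified sketch. It assumes circular cross sections and treats the body between two neighboring measurements as a truncated cone; the software the researchers use may model the body differently, and the function name `estimate_volume` is hypothetical.

```python
import math


def estimate_volume(length_m, widths_m):
    """Estimate body volume from widths measured at equal intervals.

    length_m: body length from snout to fluke notch, in meters.
    widths_m: widths at evenly spaced stations along the body, including both
              ends (21 values for 5 percent intervals).
    Assumption: each cross section is a circle whose diameter is the measured
    width, and each segment between stations is a truncated cone.
    """
    if len(widths_m) < 2:
        raise ValueError("need at least two width measurements")
    segment = length_m / (len(widths_m) - 1)
    total = 0.0
    for w1, w2 in zip(widths_m, widths_m[1:]):
        r1, r2 = w1 / 2.0, w2 / 2.0
        # Volume of a truncated cone with end radii r1 and r2.
        total += math.pi * segment / 3.0 * (r1 * r1 + r1 * r2 + r2 * r2)
    return total


# Sanity checks on shapes with known volumes: a cylinder and a cone,
# each 10 m long with a maximum width of 2 m.
cylinder_volume = estimate_volume(10.0, [2.0] * 21)
cone_volume = estimate_volume(10.0, [2.0 * i / 20 for i in range(21)])
```

With more stations the same sum follows the true outline more closely, which is why a tool that measures hundreds of widths can describe body shape in finer detail than a 5 percent grid.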

Morphometer also uses photos, but it measures the whale’s width continuously at the highest resolution possible given the quality of the photo, yielding hundreds of width measurements for each animal, instead of only the small number of measurements that are feasible for human researchers. The result is thus much more accurate. It also processes the data much faster than a human could, allowing biologists to focus on biology rather than doing tedious measurements by hand.

To improve Morphometer, we trained a deep-learning system on images of humpback and southern right whales in all sorts of different weather, water, and lighting conditions to allow it to understand exactly which pixels in an image belong to a whale. Once a whale has been singled out, the system identifies the head and tail and then measures the whale’s length and width at each pixel point along the outline of its body. Our software tracks the altitude from which the drone photographed the whale and combines that data with camera specifications entered by the drone operator, allowing the system to automatically convert the measurements from pixels to meters.
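The pixel-to-meter conversion follows from basic camera geometry. The sketch below uses the standard pinhole-camera relationship and assumes the camera points straight down, the whale lies at the water's surface, and lens distortion is negligible. The function names and all numbers are placeholders, not the specifications of the SnotBot camera.

```python
def ground_sample_distance(altitude_m, sensor_width_mm, focal_length_mm, image_width_px):
    """Return the size of one pixel at the water's surface, in meters per pixel.

    Pinhole-camera approximation for a camera looking straight down:
    footprint width = altitude * sensor width / focal length,
    divided by the number of pixels across the image.
    """
    if focal_length_mm <= 0 or image_width_px <= 0:
        raise ValueError("focal length and image width must be positive")
    return (altitude_m * sensor_width_mm) / (focal_length_mm * image_width_px)


def pixels_to_meters(length_px, gsd_m_per_px):
    """Convert a measurement in pixels to meters."""
    return length_px * gsd_m_per_px


# Placeholder values chosen for easy arithmetic, not real camera specifications.
gsd = ground_sample_distance(altitude_m=20.0, sensor_width_mm=10.0,
                             focal_length_mm=10.0, image_width_px=2000)
whale_length_m = pixels_to_meters(1300, gsd)
```

Because the result scales directly with altitude, any error in the drone's altitude reading carries through proportionally to every length and width measurement.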

Morphometer compares this whale with others of its body type, displaying the result as an image of the subject whale superimposed on a whale-shape color-coded diagram with zones indicating the average measurements of similar whales. It’s immediately obvious if the whale is normal size, underweight, or larger than average, as would be the case with pregnant females [see illustration, “Measuring Up”].
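One simple way to express such a comparison numerically is a per-station z-score: how many standard deviations each of the subject's widths sits above or below the average of a reference group. This is an illustrative assumption, not a description of how Morphometer computes its zones; the function name `width_z_scores` and the data are made up.

```python
from statistics import mean, stdev


def width_z_scores(subject, peers):
    """Compare one whale's widths with a reference group, station by station.

    subject: list of width-to-length ratios for the whale being assessed.
    peers: list of such lists for comparable whales, measured at the same
           stations along the body.
    Returns one z-score per station; negative means thinner than average.
    """
    if len(peers) < 2:
        raise ValueError("need at least two peer whales to estimate spread")
    scores = []
    for i, width in enumerate(subject):
        reference = [p[i] for p in peers]
        mu, sigma = mean(reference), stdev(reference)
        scores.append((width - mu) / sigma if sigma > 0 else 0.0)
    return scores


# Made-up ratios at two stations for three peer whales and one subject.
peer_ratios = [[0.10, 0.20], [0.12, 0.22], [0.14, 0.24]]
subject_scores = width_z_scores([0.16, 0.22], peer_ratios)
```

Scores near zero would fall in the "average" zone of a diagram like the one described above, while large negative or positive scores would flag an unusually thin or wide animal.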

For our early prototype, we input parameters for a “normal” body shape based on age, sex, and other factors. But now Morphometer is in the process of figuring out “normal” for itself by processing large numbers of whale images. Whale researchers who use their own drones to collect whale photos have been sending us their images. Eventually, we envision setting up a collaborative website that would allow images and morphometry models to be shared among researchers. We also plan to adapt Morphometer to analyze videos of whales, automatically extracting the frames or clips in which the whale’s position and visibility are the best.

To help researchers gain a more complete picture, we’re building statistical models of various whale populations, which we will compare to models derived from human-estimated measurements. Then we’ll take new photos of whales whose age and gender are known, and see whether the software correctly classifies them and gives appropriate indications of health; we’ll have whale biologists verify the results.

Once this model is working reliably, we expect to be able to say how a given whale’s size compares with those of its peers of the same gender, in the same region, at the same time of year. We’ll also be able to identify historical trends—for example, this whale is not skinnier than average compared with last year, but it is much skinnier than whales in its class a few decades ago, assuming comparison data exists. If, in addition, we have snot from the same whale, we can create a more complete profile of the whale, in the same way your credit card company can tell a lot about you by integrating your personal data with the averages and variances in the general population.

So far, SnotBot has told us a lot about the health of individual whales. Soon, researchers will start using this data to monitor the health of oceans. Whales are known as “apex predators,” meaning they are at the top of the food chain. Humpback whales in particular are generalist foragers and have wide-ranging migration patterns, which make them an excellent early-warning system for environmental threats to the ocean as a whole.

This is where SnotBot can really make a difference. We all depend on the oceans for our survival. Besides the vast amount of food they produce, we depend on them for the air we breathe: Most of the oxygen in the atmosphere comes from marine organisms such as phytoplankton and algae.

Lately, reduced ocean productivity associated with a North Pacific warm-water anomaly, or “blob,” has resulted in a reduction of births and more reports of skinny whales, and that should worry us. If conditions are bad for whales, they’re also bad for humans. Thanks to Project SnotBot, we’ll be able to find out—accurately, efficiently, and at a reasonable cost—just how the health and numbers of whales in our oceans are trending. With that information, we hope, we will be able to spur society to take steps to protect the oceans before it’s too late.

The whale images in this article were obtained under National Marine Fisheries Service permits 18636-01 and 19703.

This article appears in the December 2019 print issue as “SnotBot: A Whale of a Deep-Learning Project.”

About the Authors

Bryn Keller is a deep-learning research scientist in Intel’s Brain-Inspired Computing Lab. Senior principal engineer Ted Willke is the lab’s director.